On this page

TLDR

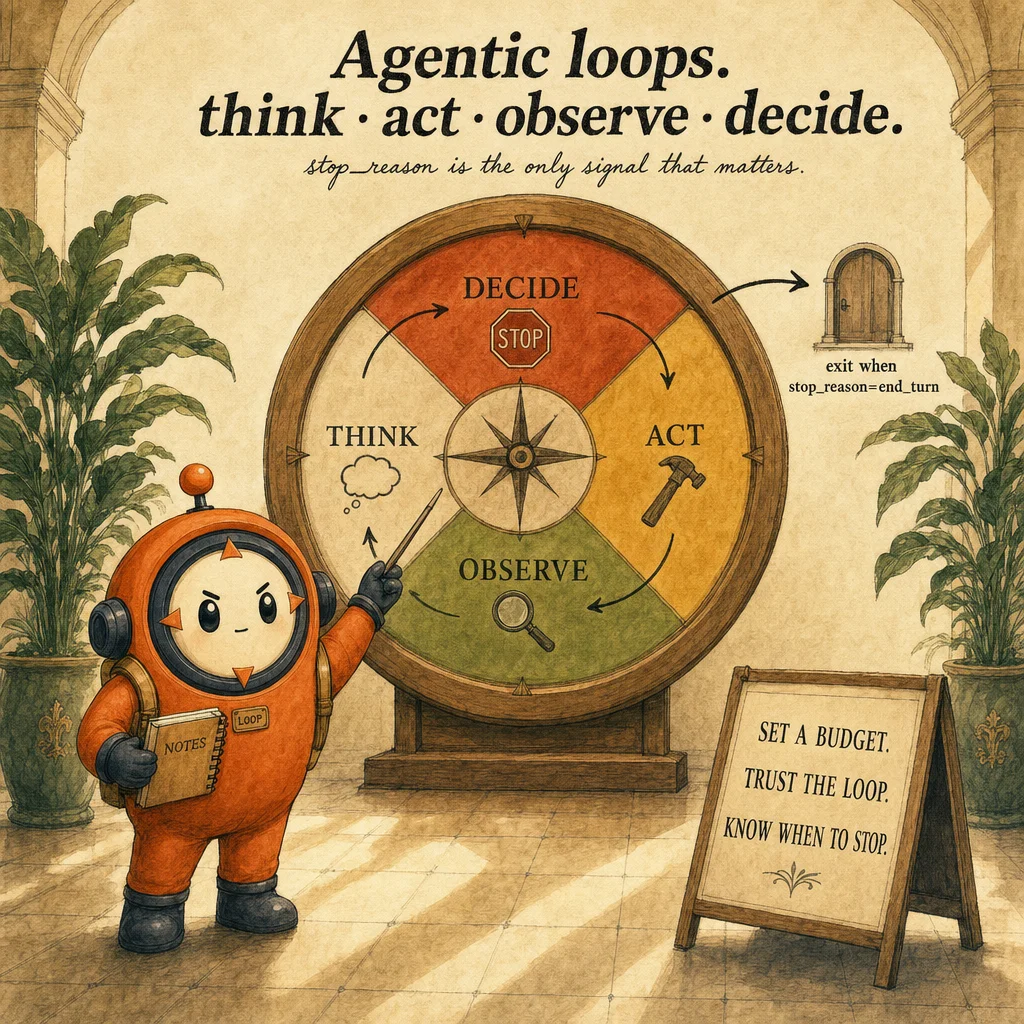

An agentic loop turns one API response into a controlled, multi-turn system. The harness reads stop_reason, dispatches tools, appends observations, and decides whether to continue. The common failure is treating natural-language text as the termination signal.

What it is

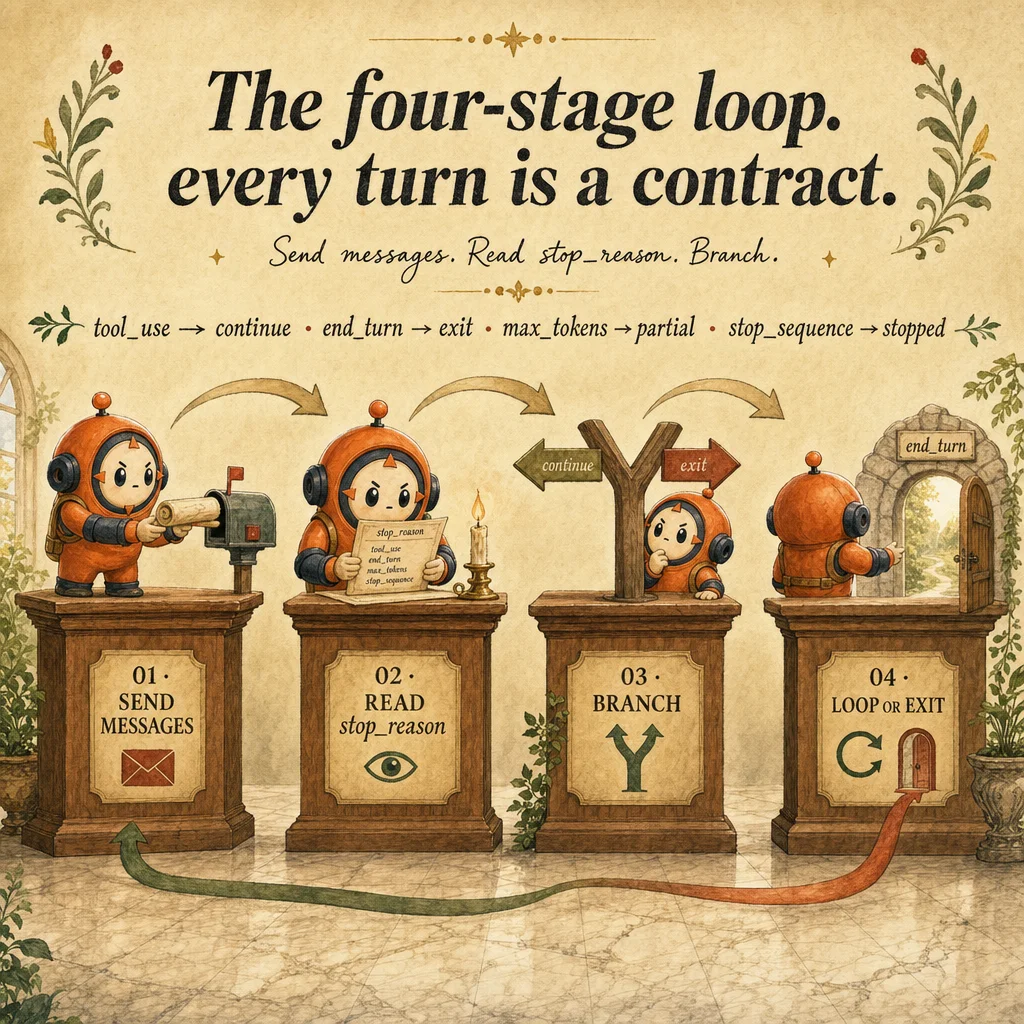

An agentic loop is a harness pattern that turns a single API call into a controlled, multi-turn system. The mental model: send a messages.create() request, read the response, branch on stop_reason, and either exit or append the tool result and call again. The user perceives one fluid agent conversation. The engineering surface is a plain while-loop, a growing message list, and a single termination check, nothing more, nothing less.

What makes the loop agentic rather than ordinary is that the model decides the next step, not the developer. Each turn, Claude inspects the message history and the available tools, then either returns a final response (stop_reason: "end_turn") or requests one or more tool executions (stop_reason: "tool_use"). Your harness executes the tools, appends tool_result blocks, and loops. The model's autonomy is bounded by the tools you provided, the budget you allotted (max_tokens, max_iterations), and the stop conditions you respect.

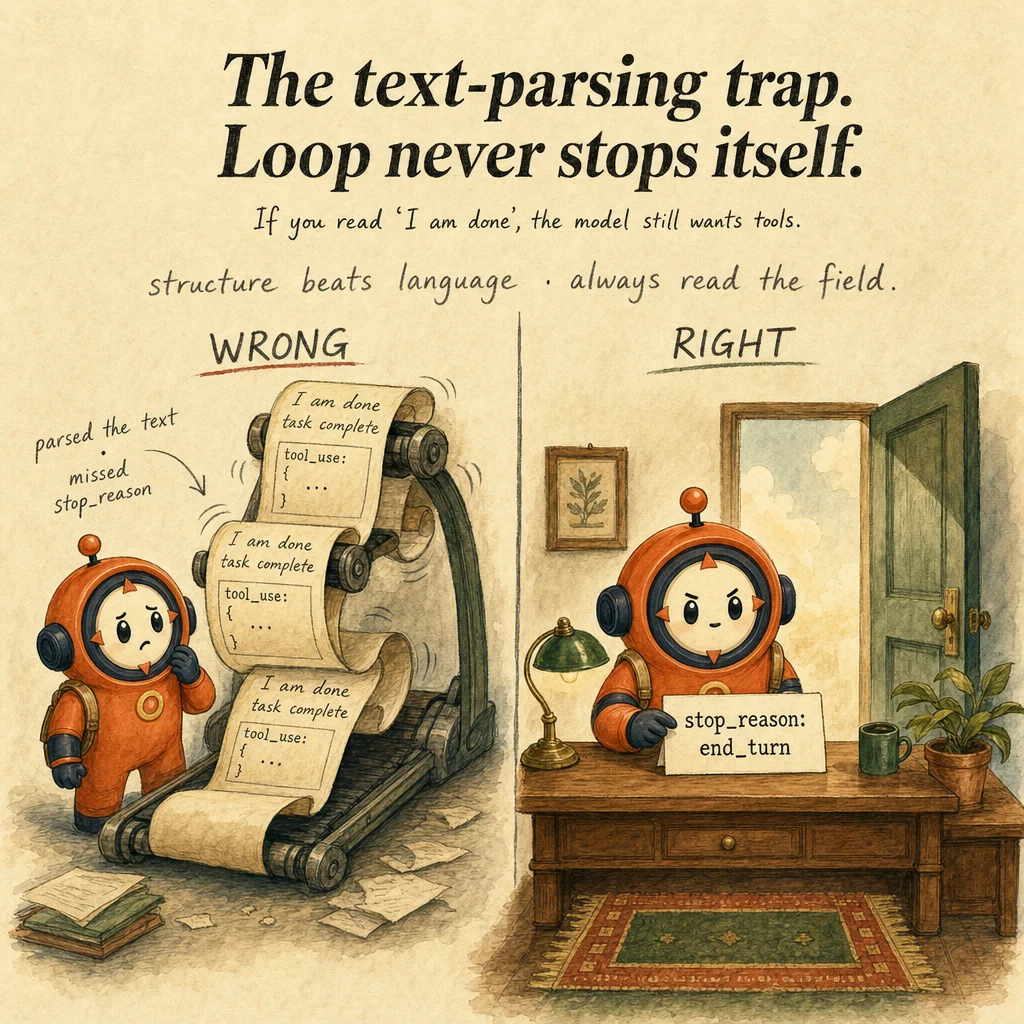

The contract is structural, not linguistic. Claude communicates termination through the stop_reason field, a small enum (end_turn, tool_use, max_tokens, stop_sequence). It does not communicate termination through the natural language of the response. A response can contain the words "I'm done" while stop_reason is still tool_use. A response can be silent on completion while stop_reason is already end_turn. Always read the field, never the text.

Production agentic loops fail in three predictable ways: text-shape parsing (treating the presence or absence of text as a completion signal), iteration caps as primary control (using max_iterations = 10 instead of fixing the root cause of a runaway loop), and missing tool_result appends (forgetting to push the tool execution result back into the message list, which makes Claude re-request the same tool indefinitely). Each is a one-line bug, and each is the most-tested distractor pattern on Domain 1 of the exam.

How it works

The loop runs in four conceptual stages per iteration. First, Think: Claude reads the cumulative message list, evaluates the request against available tools, and forms a plan. Second, Act: if a tool call is needed, Claude emits a tool_use block with a name and structured input. Third, Observe: your harness executes the tool, captures the result (or error), and appends a tool_result block to the message list. Fourth, Decide: Claude inspects the new history and either continues calling tools or returns a final answer. The stop_reason field surfaces the decision.

The message list grows monotonically. Every assistant response is appended; every tool execution result is appended. By turn 8, the list contains the full conversation: original user message, 7 assistant responses, 7 batches of tool results. This is what makes loops context-bound, you can't run forever because each turn enlarges the prompt, and eventually you hit max_tokens or context limits. Plan the budget before you start the loop, not when it's already three turns in.

Tool execution is your responsibility, not the model's. The tool_use block tells you what Claude wants to call (name, input JSON); your harness wraps the actual function call in try/except, validates inputs, returns either the result or a structured error message. Claude does not see exceptions, it sees whatever string you place in tool_result.content. If you swallow errors silently, Claude will retry the same broken tool call. Always return structured error messages so Claude can recover.

Termination is a single field check, repeated per iteration. end_turn means the model is done; you exit the loop and present the final text. tool_use means the model wants more tools; you execute, append, continue. max_tokens means the model exhausted its output budget mid-task; you save the partial result and decide whether to chunk or raise the limit. stop_sequence means a custom stop string matched (only relevant if you set one). Anything else is an error worth logging, but in practice, you'll see only these four values.

Where you'll see it

Customer support refund flow

A support agent receives a refund request. Turn 1 calls verify_customer. Turn 2 calls lookup_order. Turn 3 calls process_refund with policy hooks attached. Each turn the harness inspects stop_reason. The most-frequent production bug is checking the response text for the word "done" instead, Claude often says "I'm processing your refund" (text) while still emitting a tool_use block (the real next step), so the loop exits early and the refund never executes. The fix is one line: branch on stop_reason, not on content[0].type.

Multi-agent research orchestration

A coordinator delegates papers, regulations, and competitor analysis to three research subagents in parallel. The coordinator's loop polls each subagent's stop_reason. When a subagent returns end_turn, the coordinator collects the structured result; when tool_use, it forwards the call to the right tool registry. The trap here is missing tool_result appends between subagent turns, the coordinator forgets to push the result back into the subagent's message list, the subagent re-requests the same tool, and the loop runs until max_iterations saves it. The cap masks the real bug.

Headless CI code-review bot

GitHub Actions invokes Claude with claude -p on a PR diff. The agent calls Read, Grep, and Bash repeatedly to inspect changed files, run tests, and produce a structured review JSON. On large PRs, stop_reason: "max_tokens" arrives mid-review, the bot must emit a partial JSON summary with verdict: "hold" and a truncated: true flag, then exit cleanly. Production fails when the bot treats text-presence as success: it emits a JSON with empty findings: [] and a stale verdict: "approved" field, silently green-lighting a PR it never finished reading.

Long-document entity extraction

A 200-page contract gets entity-extracted by a single agent loop. Each turn calls mark_entity on a document chunk; the harness accumulates marked spans. Around chunk 18, stop_reason: "max_tokens" arrives because the assistant message list now contains 17 turns of tool_use + tool_result and there's no room for a 19th. The fix is context windowing: at every checkpoint, summarize earlier turns into a single facts block, drop the verbose history, and continue. Without windowing the loop dies at the same point in every document, regardless of model size.

Operations runbook executor

An on-call assistant runs a deployment runbook against a Kubernetes cluster. Each turn calls one of kubectl_describe, read_logs, restart_pod, or verify_health. The loop halts on stop_reason: "end_turn" after the assistant confirms the service is healthy. The common production bug is a stale tool_result append: the runbook calls restart_pod, the harness restarts, but the harness forgets to push the result back. The model then re-issues the same restart on the next turn and bounces the same pod three times before a human intervenes.

Conversational data analyst

A finance team asks an analytics assistant questions in natural language. The loop branches: simple aggregates resolve in two turns (one query_warehouse call plus a summary); cohort and funnel analyses span eight to twelve turns with intermediate build_view and validate_metric calls. The assistant terminates only when stop_reason: "end_turn" arrives with a chart spec attached. Iteration caps would silently truncate the cohort analyses; using stop_reason lets simple and complex questions share the same loop.

Code examples

from anthropic import Anthropic

client = Anthropic()

def run_agent(user_msg: str, tools: list, system: str, max_iter: int = 15):

messages = [{"role": "user", "content": user_msg}]

for i in range(max_iter):

resp = client.messages.create(

model="claude-opus-4-5",

max_tokens=2048,

system=system,

tools=tools,

messages=messages,

)

# CRITICAL: branch on stop_reason. Never on text content.

if resp.stop_reason == "end_turn":

text = "".join(b.text for b in resp.content if b.type == "text")

return {"status": "ok", "text": text, "iterations": i + 1}

if resp.stop_reason == "tool_use":

messages.append({"role": "assistant", "content": resp.content})

tool_results = []

for block in resp.content:

if block.type == "tool_use":

try:

result = execute_tool(block.name, block.input)

except Exception as e:

result = {"error": str(e)}

tool_results.append({

"type": "tool_result",

"tool_use_id": block.id,

"content": json.dumps(result),

})

messages.append({"role": "user", "content": tool_results})

continue

if resp.stop_reason == "max_tokens":

return {"status": "partial", "iterations": i + 1,

"reason": "token_limit"}

return {"status": "error", "stop_reason": resp.stop_reason}

return {"status": "error", "reason": "iteration_cap_exceeded"}Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

Check if the response text contains "done" or "complete" to know when to stop.

Natural-language phrases vary across generations and across models. Claude may say "I'm done now" while still emitting a tool_use block in the same response. Only stop_reason is authoritative, the text is the user-facing rendering, not the control signal.

Set max_iterations = 10 to prevent runaway loops.

Caps mask the real failure (missing tool_result append, ambiguous tool description, two tools that look interchangeable). A legitimate refund flow may need 12 iterations on a complex case. The cap converts a bug into a silent partial success. Fix the cause; don't bound the symptom.

If response.content[0].type === "text", the agent is finished.

Claude returns text and tool_use blocks in the same response. A typical assistant turn looks like [text, tool_use, tool_use], the leading text is preamble ("I'll start by checking the order"), not termination. Only stop_reason === "end_turn" is the exit signal.

When stop_reason: "max_tokens" arrives, the loop crashed and we should retry from scratch.

max_tokens is a normal partial-result signal, the model hit the output budget mid-task. Save the partial work, raise max_tokens for the retry, or chunk the input. Restarting from scratch loses real progress and burns budget twice.

Wrap the entire tool-execution block in try/except and return a generic "tool failed" string to Claude.

Generic errors strip the information Claude needs to recover. Return structured errors like {"error": "customer_id not found", "hint": "verify format starts with cus_"}. Claude reads them, adjusts arguments, and retries successfully, that's the whole point of the loop.

Side-by-side

| Aspect | Agentic Loop | Single-Turn Call | Subagent-Delegated | Workflow Orchestrator |

|---|---|---|---|---|

| Termination signal | stop_reason='end_turn' | Single response, exit | Subagent loop; coordinator polls | Step-graph completion |

| Tool execution | Multi-turn, results fed back | No tools | Subagent owns tools; returns summary | Coordinator routes per-step |

| Context fill | Grows monotonically per turn | None | Main stays clean; subagent context discarded | Per-step contexts; reset between |

| Failure mode | Text-shape parsing, missing tool_result | n/a | Coordinator assumes inheritance | Step config drift over time |

| Best for | Multi-step tasks (3–15 turns) | Simple Q&A, classification | Verbose work, parallel research | Fixed multi-step pipelines |

| Cost shape | Linear in turns × tokens | Constant per call | Coordinator + N subagent budgets | Per-step + orchestration overhead |

Decision tree

Does the task need multiple tool calls to reach a result?

Is the work verbose or independent (e.g., reading 50 files)?

Are you hitting stop_reason: "max_tokens" mid-loop?

Are tools throwing exceptions inside the harness?

Is the same tool being called repeatedly with no progress?

tool_result block. Without it, Claude re-requests indefinitely.Question patterns

Your agentic loop keeps running after Claude has clearly finished its task. Which control was likely missed?

stop_reason. The most-tested distractor is "add a max_iterations cap". The right answer is "branch the loop on stop_reason == 'end_turn'", structural field, never natural language.A subagent returns wrong facts about a customer the coordinator clearly mentioned earlier. Why?

"the subagent's max_tokens was too low". The actual fix is to pass every needed fact in the subagent's task string explicitly.An agent loop hits a budget ceiling and you keep raising `max_iterations` to make it pass. What is the real problem?

max_iterations is a safety cap, not the primary exit condition. Repeatedly raising it masks the underlying bug, usually a missing tool_result append, ambiguous tool descriptions, or two tools the model alternates between.Your refund agent emits the words "I'm processing your refund now" but then makes another tool call. The harness exits early. Why?

tool_use block in the same response; only stop_reason is authoritative. The fix is to ignore the text and read stop_reason: "tool_use" to continue the loop.Long-document extraction stalls at chunk 18 every single time, regardless of model. What is happening?

tool_use plus tool_result blocks; turn 18 hits stop_reason: "max_tokens". The fix is context windowing: summarize old turns into a facts block, drop the verbose history, continue. Raising max_tokens does not fix it past a certain point.Your code-review CI bot returns valid JSON with empty `findings: []` even on PRs that clearly have issues. Why?

stop_reason: "max_tokens" mid-review, but the code did not branch on that signal, so it serialized whatever was in the buffer, which happened to be empty. The fix is to handle max_tokens as a partial-result signal and emit truncated: true.A tool throws an exception inside your harness; on the next iteration Claude requests the same tool again with the same arguments. Why?

"tool failed" to tool_result. Claude saw no actionable error and re-requested. The fix is to catch the exception and return a structured error with a hint, e.g. {"error": "customer_not_found", "hint": "verify cus_ prefix"}.Two of your tools have similar names (`fetch_data` and `get_data`). The model picks the wrong one 30% of the time. Best first fix?

Frequently asked

How is an agentic loop different from a normal API call?

while block that re-sends the cumulative message list after every tool result. State accumulates across turns; the model decides each next step until stop_reason: "end_turn".Where do hooks (PreToolUse, PostToolUse) fit relative to the loop?

tool_use branch, before or after your harness executes the tool. They validate arguments, enforce policy, or log audit data. They do not replace stop_reason-based termination; that's the loop's responsibility.What's the difference between `stop_reason` and `stop_sequence`?

stop_reason is the field that says *why* the model stopped (end_turn, tool_use, max_tokens, stop_sequence). stop_sequence is the matched string when stop_reason === "stop_sequence". You only see this if you passed a stop_sequences parameter in the request.Should I always call `tool_use` and never use `tool_choice: "any"` or forced?

tool_choice: "auto" (the default) for open-ended loops. Use forced ({type: "tool", name: X}) only for mandatory first steps like identity verification. "any" and forced are incompatible with extended_thinking, see /concepts/tool-choice.Can a single response have both text and tool_use blocks?

[text, tool_use] or [text, tool_use, tool_use]. The text is preamble ("Let me check that for you"); the tool_use is the action. Always check `stop_reason`, not block presence.What's the right value for max_iterations?

stop_reason for that.How do I debug a loop that runs forever?

stop_reason and the names of any tool_use blocks. The two most common causes are: (1) you're not appending tool_result to the message list, so the model keeps requesting the same tool; (2) two tools have overlapping descriptions and the model alternates between them.Should I retry on `stop_reason: "max_tokens"`?

max_tokens if the input was just slightly over, or chunk the input if it's structurally too large. Treat it as normal partial completion, not failure.How do agentic loops interact with prompt caching?

cache_control: {type: "ephemeral"} and pay roughly 90% less for that section on every turn. The growing message list cannot be cached because each turn changes it.What if my agent needs to keep state across user sessions?

messages at session start. The loop doesn't manage persistence, that's your application's job.