
TLDR

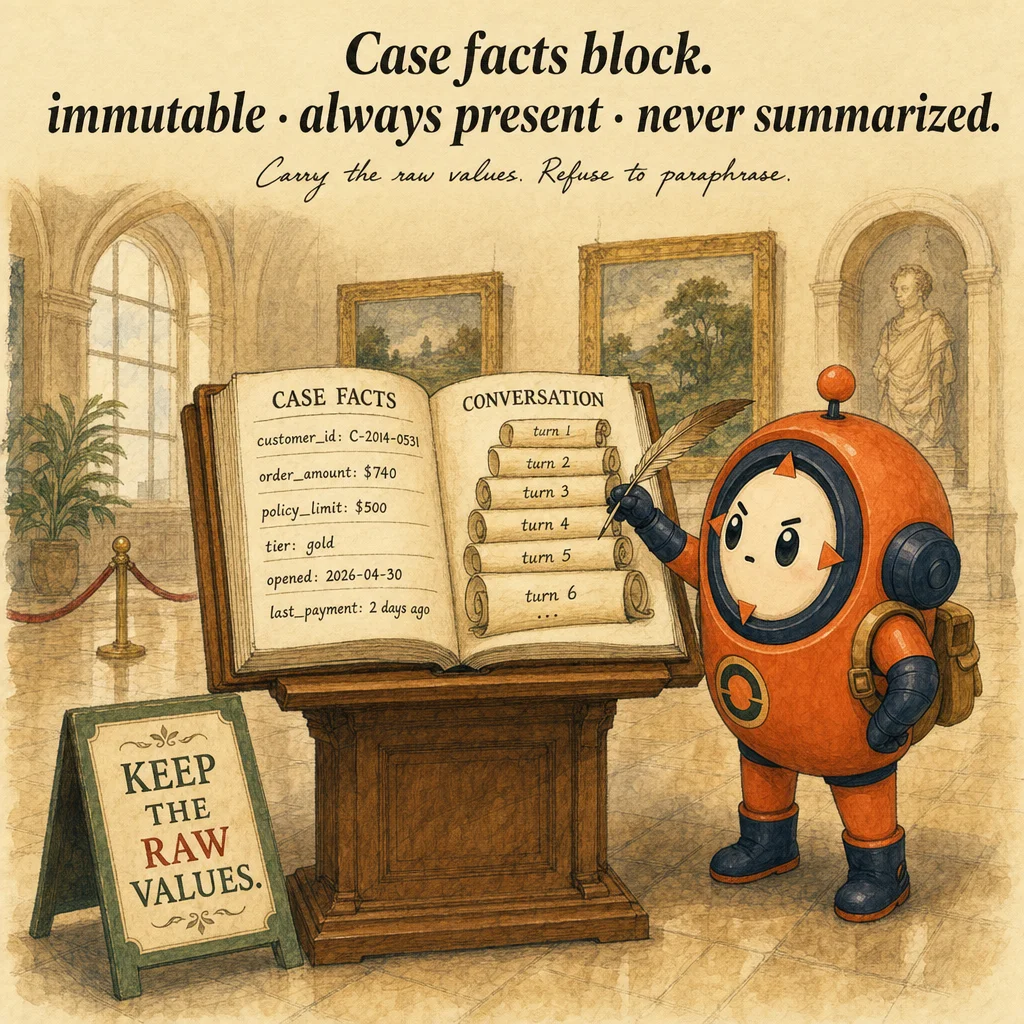

A case-facts block is an immutable set of transactional data (customer ID, order details, refund amount, policy limits) included at the top of every prompt during a multi-turn conversation. Unlike summaries (which lose precision), case facts are complete and never paraphrased.

What it is

A case-facts block is an immutable, high-visibility section at the start of every prompt that contains transactional facts the model must never lose or paraphrase. Customer ID cus_abc123. Order ID ord_xyz789. Refund amount $247.83. Refund deadline 2026-04-30. Policy tier gold. These are the values that survive until the case closes. Without the block, progressive summarization compresses them away: by turn 20 the model refers to "the customer" with no ID, and the next tool call fails as verify_customer(None).

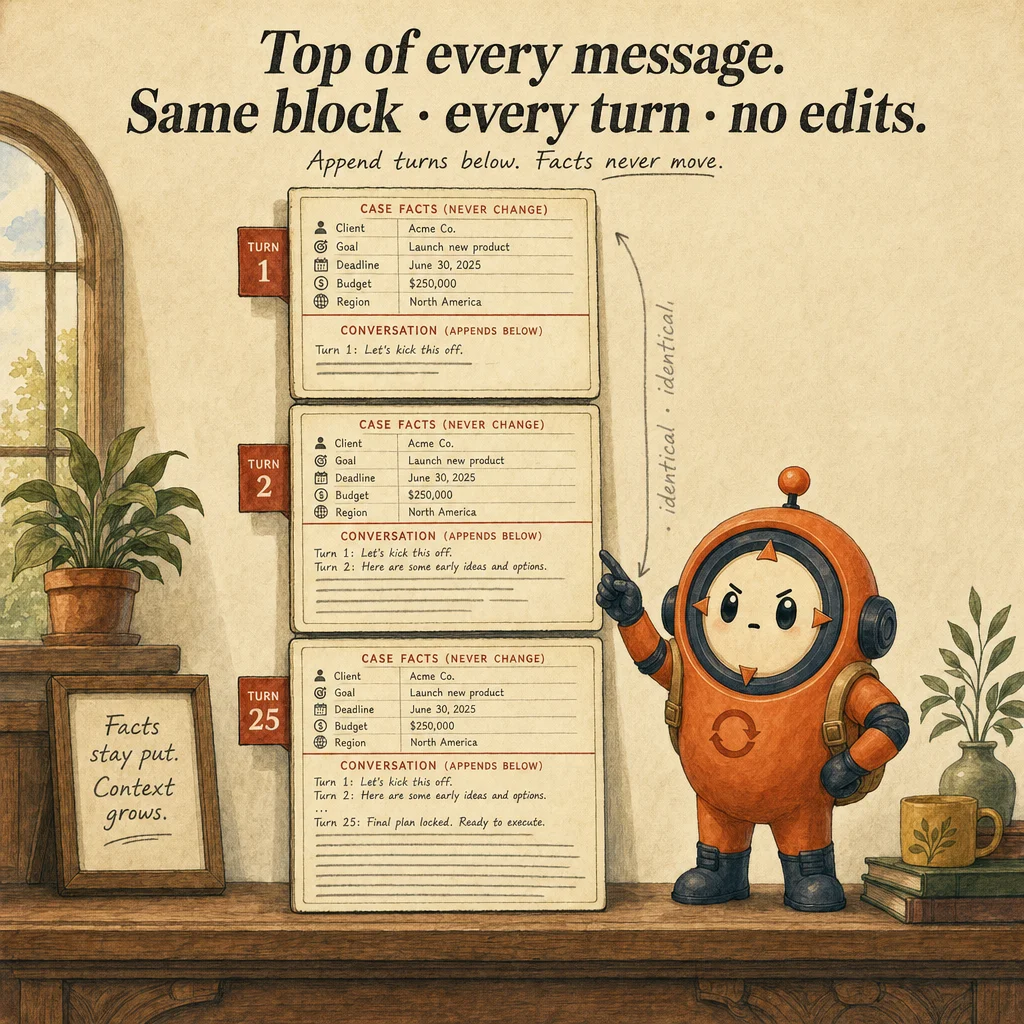

The structural answer is to anchor the facts in a dedicated block that lives outside the summarizable history. Format is a YAML or JSON dictionary inside delimiters like <CASE_FACTS>...</CASE_FACTS>, placed at the very top of the system prompt or the first user message. Fields are exact values from the source, never paraphrased. The block is written once at case start and never rewritten. Every subsequent message includes it unchanged. Modification is permitted only when the user explicitly corrects a fact.
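As a rough illustration of the format described above, the sketch below renders a dictionary into a delimited block and parses it back. The helper names `render_block` and `parse_block` are hypothetical, and values are kept as strings on the assumption that this is the simplest way to stop exact source values from being reformatted.

```python
import re

# Matches the delimited block wherever it sits inside a larger prompt string.
CASE_FACTS_PATTERN = re.compile(r"<CASE_FACTS>\n(.*?)\n</CASE_FACTS>", re.DOTALL)


def render_block(facts: dict[str, str]) -> str:
    """Render facts as 'key: value' lines inside <CASE_FACTS> delimiters."""
    body = "\n".join(f"{key}: {value}" for key, value in facts.items())
    return f"<CASE_FACTS>\n{body}\n</CASE_FACTS>"


def parse_block(text: str) -> dict[str, str]:
    """Recover the exact key/value pairs from a prompt containing the block."""
    match = CASE_FACTS_PATTERN.search(text)
    if match is None:
        raise ValueError("no <CASE_FACTS> block found")
    facts: dict[str, str] = {}
    for line in match.group(1).splitlines():
        key, _, value = line.partition(": ")
        facts[key.strip()] = value.strip()
    return facts


facts = {
    "customer_id": "cus_abc123",
    "order_id": "ord_xyz789",
    "refund_amount": "247.83",
    "refund_deadline": "2026-04-30",
    "policy_tier": "gold",
}
block = render_block(facts)
prompt = block + "\n\nWhere is my refund?"
recovered = parse_block(prompt)
```

Because the values round-trip unchanged, a tool call can read `recovered["customer_id"]` instead of relying on whatever the conversation history still contains.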

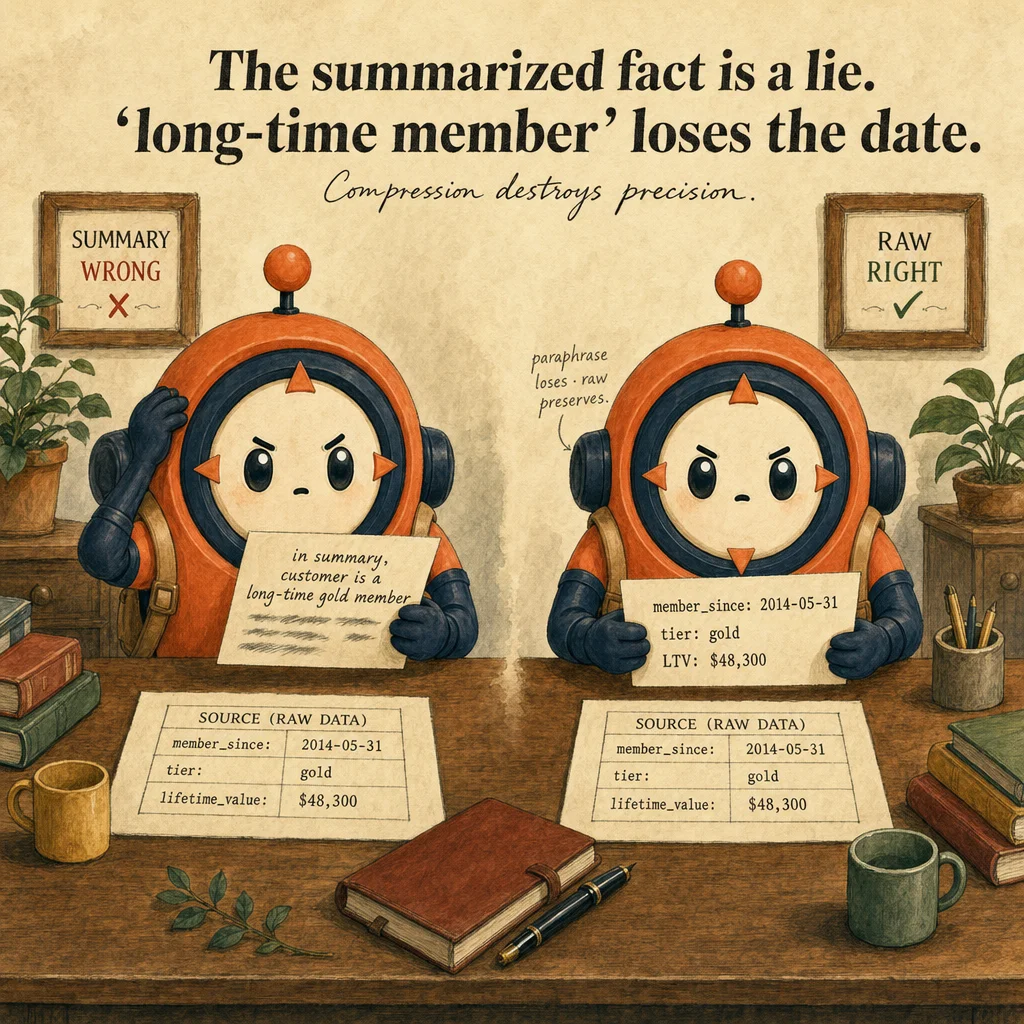

The block solves the progressive summarization trap. In a long conversation (30+ turns), context management compresses old messages to free space. Summaries are lossy by design: "the customer asked for a refund, the agent checked the order, they discussed eligibility." The exact ID, the exact amount, and the exact deadline dissolve into vague references. By turn 40 the model says "let me verify the customer" without an ID to pass to the tool. The case-facts block escapes this trap because it lives at the top, not in the history.

Implementation comes down to two architectural rules. Placement: put the block at the top of every request, either in the system prompt or in the first user turn (pick one and stay consistent). Persistence: include the block unchanged in the message list until the case closes. Use a helper such as preserve_facts_through_summary that extracts the block, summarizes the rest, then prepends the block back. The overhead is small (roughly 50-500 tokens). The reliability gain is large: tools always have the exact values, audit trails are clean, and human escalations get authoritative facts without re-reading the conversation.

How it works

The lifecycle has four stages. Initialization: the user provides transactional facts at case open ("Customer cus_abc123, order ord_xyz789, amount $247.83"). You extract these and format them as a structured block. Injection: include the block at the top of every subsequent request in the case (system prompt or first user turn, consistently). Preservation: when the message list grows past a safe context size, summarize the conversation but keep the block intact. Immutability: the block changes only on explicit user correction; conversation flow never edits it.
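The immutability stage can be sketched as a guard around the only permitted write path. This is one possible reading, not a prescribed implementation: the function name `apply_user_correction`, the `source` argument, and the choice to record a correction under a `<field>_revised` key (rather than overwriting the original value) are all assumptions made for illustration.

```python
def apply_user_correction(facts: dict, field: str, value: str, source: str) -> dict:
    """Return a new facts dict with a user correction recorded.

    The original value is kept; the correction is stored next to it under
    '<field>_revised'. Anything not explicitly from the user is rejected.
    """
    if source != "user":
        raise PermissionError("only an explicit user correction may change case facts")
    if field not in facts:
        raise KeyError(f"unknown case fact: {field}")
    updated = dict(facts)  # copy, so the caller's original dict is never mutated
    updated[f"{field}_revised"] = value
    return updated


original = {"customer_id": "cus_abc123", "refund_amount": "247.83"}
corrected = apply_user_correction(original, "customer_id", "cus_xyz", source="user")
```

Rejecting every non-user source is what keeps an agent's own inferences ("agent confirmed the amount") from ever reaching the block.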

Placement matters. System prompt placement keeps facts out of the user message stream and is always available, but counts against the system prompt budget. First-user-message placement is semantically cleaner (the user states the facts) but requires injecting the block per session. Both work. Consistency matters more than choice: switching strategies mid-case breaks tooling that expects facts at a known location. Pick one and stick with it.
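The two placements can be compared side by side with a small request builder. This is a sketch under assumptions: `build_request` and `base_system` are hypothetical names, message content is assumed to be a plain string, and the returned dictionary is meant to be unpacked into a `messages.create()` call alongside `model` and `max_tokens`.

```python
def build_request(
    block: str,
    history: list[dict],
    placement: str,
    base_system: str = "You are a support agent.",
) -> dict:
    """Build the 'system' and 'messages' arguments for one placement strategy."""
    if placement == "system":
        # Facts sit ahead of the universal rules in the system prompt.
        return {"system": f"{block}\n\n{base_system}", "messages": list(history)}
    if placement == "first_user":
        if not history or history[0]["role"] != "user":
            raise ValueError("history must start with a user message")
        # Copy the first message instead of mutating the caller's history.
        first = {**history[0], "content": f"{block}\n\n{history[0]['content']}"}
        return {"system": base_system, "messages": [first, *history[1:]]}
    raise ValueError(f"unknown placement: {placement}")


block = "<CASE_FACTS>\ncustomer_id: cus_abc123\n</CASE_FACTS>"
history = [{"role": "user", "content": "I would like a refund."}]

as_system = build_request(block, history, "system")
as_first_user = build_request(block, history, "first_user")
```

Routing every request through a single builder with a fixed `placement` value is one way to enforce the consistency described above: downstream tooling always finds the facts in the same location.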

Progressive summarization with facts preservation is the workhorse pattern. When the message list approaches the token budget, you (1) extract the case-facts block, (2) summarize the middle turns into 3-4 sentences, (3) rebuild the message list with the facts block at top, the summary as a synthetic user-role message, and the most recent 3-5 turns intact. The facts block is never included in what gets summarized; the helper deliberately omits it from the summarization input so the summary cannot accidentally paraphrase exact values like $247.83 into "about $250".

The block is a loop invariant inside agentic loops. At the start of each iteration, before calling messages.create(), verify the block is at the top of the message list. When you append tool_result blocks, they go below the block, never replacing it. When you summarize during long loops, use the preservation helper. The block is the anchor that keeps a 50-turn loop grounded without context drift, and it survives multi-session handoffs (store it in your database, retrieve at session start).
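The loop invariant can be made explicit with a check that runs before each model call. The sketch below assumes first-user-message placement and string content; the second `block` parameter and the `CaseFactsMissingError` class are additions for illustration, and the model call itself is left as a comment so the example runs offline.

```python
class CaseFactsMissingError(RuntimeError):
    """Raised when the case-facts block is not intact at the top of the list."""


def verify_case_facts_present(messages: list[dict], block: str) -> bool:
    """Return True if the first message is a user turn that starts with the block.

    Raises instead of returning False so a loop cannot continue silently.
    """
    if not messages:
        raise CaseFactsMissingError("message list is empty")
    first = messages[0]
    content = first.get("content")
    if (
        first.get("role") != "user"
        or not isinstance(content, str)
        or not content.startswith(block)
    ):
        raise CaseFactsMissingError("case-facts block missing or altered")
    return True


block = "<CASE_FACTS>\ncustomer_id: cus_abc123\n</CASE_FACTS>"
messages = [{"role": "user", "content": block + "\n\nPlease refund my order."}]

for step in range(3):
    verify_case_facts_present(messages, block)  # invariant check before each call
    # ... the messages.create() call would go here ...
    # New turns are appended below the block, never in place of it.
    messages.append({"role": "assistant", "content": f"(model turn {step})"})
    messages.append({"role": "user", "content": f"(tool result {step})"})
```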

Where you'll see it

Customer support refund case (30+ turns)

A customer initiates a refund. You extract customer_id, order_id, refund_amount, reason, ticket_id and format the case-facts block, then inject it at the top of every request in the loop. By turn 30 the conversation history is large but the block is immutable at the top. When the agent calls process_refund(customer_id, amount), it pulls values directly from the block, never "the customer" or "some amount." On turn 45 you summarize: extract the facts, summarize turns 5-40, reinject the facts. The block survives intact.

Long-document contract review (multi-session)

A contract review spans multiple sessions (the user returns the next day). Session 1 initializes facts: parties, execution_date, document_id. Store the block in your database. Session 2 retrieves the block and prepends it to the new conversation. The user asks new questions ("What's the termination clause?") and the model always has the original facts available. No re-entry friction, no need to restate context. The block is the persistent identity of the case.
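A minimal sketch of the store-and-retrieve step, using Python's built-in sqlite3 module as a stand-in for "your database". The table layout, the function names, and the example case ID and field values are all invented for illustration. The block is stored as the exact string that was sent to the model, so nothing is re-serialized between sessions.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a tiny store with one row per case."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS case_facts ("
        "case_id TEXT PRIMARY KEY, block TEXT NOT NULL)"
    )
    return conn


def save_block(conn: sqlite3.Connection, case_id: str, block: str) -> None:
    """Write the block once; a second write for the same case raises IntegrityError."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO case_facts (case_id, block) VALUES (?, ?)",
            (case_id, block),
        )


def load_block(conn: sqlite3.Connection, case_id: str) -> str:
    """Fetch the stored block verbatim at the start of a new session."""
    row = conn.execute(
        "SELECT block FROM case_facts WHERE case_id = ?", (case_id,)
    ).fetchone()
    if row is None:
        raise KeyError(case_id)
    return row[0]


# Session 1: initialize and store (example values are made up).
conn = open_store()
block = "<CASE_FACTS>\ndocument_id: doc_001\nexecution_date: 2026-01-15\n</CASE_FACTS>"
save_block(conn, "case_001", block)

# Session 2: retrieve and prepend to the new conversation.
first_message = load_block(conn, "case_001") + "\n\nWhat's the termination clause?"
```

Using a plain INSERT rather than an upsert mirrors the write-once rule: an accidental second write fails loudly instead of replacing the stored facts.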

Insurance claims processing with policy verification

A claims agent verifies eligibility against policy rules over 40+ turns. Case facts: claim_id, policy_id, claimant_name, incident_date, claim_amount. By turn 25, when checking against policy rules, the agent always has the exact claim ID and incident date (not vague references). When escalating to a human reviewer on turn 35, the block provides the human with authoritative facts without re-reading the conversation. The block doubles as the audit record.

Headless CI code review with tight context

A headless agent reviews a large PR. Context window is 100k tokens; the PR diff alone uses 60k. You can afford about 20 turns of loop before hitting limits. Inject a case-facts block: pr_number, branch, reviewer, coverage_floor. After turn 15 you summarize: extract facts (block stays intact), compress turns 1-12 into a 2-sentence summary, continue. Facts survive; verbose history is discarded. The bot completes the review under budget.
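The budget arithmetic in this scenario can be written out. The figure of 2,000 tokens per turn is an assumption inferred from the numbers above (40k of headroom spread over about 20 turns), and the 90% trigger threshold is likewise an illustrative choice rather than something the scenario specifies.

```python
def turns_affordable(window_tokens: int, fixed_tokens: int, tokens_per_turn: int) -> int:
    """How many loop turns fit in the context left after fixed content."""
    return (window_tokens - fixed_tokens) // tokens_per_turn


def should_summarize(used_tokens: int, window_tokens: int, threshold_pct: int = 90) -> bool:
    """True once usage reaches the given percentage of the window (integer math)."""
    return used_tokens * 100 >= threshold_pct * window_tokens


WINDOW = 100_000   # context window from the scenario
DIFF = 60_000      # the PR diff alone
PER_TURN = 2_000   # assumed average cost of one loop turn

max_turns = turns_affordable(WINDOW, DIFF, PER_TURN)

# Usage after 14 and after 15 turns under the same assumption.
used_after_14 = DIFF + 14 * PER_TURN
used_after_15 = DIFF + 15 * PER_TURN
summarize_at_14 = should_summarize(used_after_14, WINDOW)
summarize_at_15 = should_summarize(used_after_15, WINDOW)
```

Under these assumptions the window holds 20 turns and the 90% threshold is first crossed after turn 15, which lines up with the point at which the scenario summarizes.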

Code examples

```python
from typing import TypedDict

from anthropic import Anthropic

client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment


class CaseFacts(TypedDict, total=False):
    customer_id: str
    order_id: str
    # Kept as a string so the exact source value ("500.00") survives formatting.
    refund_amount: str
    reason: str
    ticket_id: str
    refund_deadline: str
    policy_tier: str


def format_case_facts_block(facts: CaseFacts) -> str:
    """Format facts as an immutable YAML-style block."""
    lines = ["<CASE_FACTS>"]
    for k, v in facts.items():
        if v is not None:
            lines.append(f"{k}: {v}")
    lines.append("</CASE_FACTS>")
    return "\n".join(lines)


def inject_facts(messages: list[dict], block: str) -> list[dict]:
    """Prepend the block to the first user message if not already present.

    Assumes message content is a plain string; mutates the list in place.
    """
    for msg in messages:
        if msg["role"] == "user":
            if block not in msg["content"]:
                msg["content"] = block + "\n\n" + msg["content"]
            break
    return messages


def preserve_facts_through_summary(
    messages: list[dict], block: str
) -> list[dict]:
    """Compress older turns; keep block at top; keep last 3 messages intact."""
    if len(messages) < 8:
        return messages
    # Summarize everything except the last 3 messages. The block is stripped
    # from the input so the summarizer never sees (or paraphrases) exact values.
    older = messages[:-3]
    summary_input = "\n".join(
        f"{m['role']}: {str(m['content']).replace(block, '')[:200]}" for m in older
    )
    summary_resp = client.messages.create(
        model="claude-opus-4-5",
        max_tokens=300,
        messages=[{
            "role": "user",
            "content": (
                "Summarize this conversation in 3 sentences. Do NOT include "
                "specific amounts, dates, or IDs (those live separately).\n\n"
                + summary_input
            ),
        }],
    )
    summary = summary_resp.content[0].text
    # Rebuild: block + summary as a synthetic user message, then the recent tail.
    return [
        {"role": "user", "content": block + "\n\nPRIOR CONTEXT: " + summary},
        *messages[-3:],
    ]
```

Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

Just remind Claude at the start of each message to remember the customer ID.

Reminders are probabilistic. By turn 40, the model can still drift. The case-facts block is structural: it's always at the top of the message list, never lost in summarization. A prompt reminder is guidance; a facts block is architecture.

Include the case facts in the system prompt once and they'll persist.

The system prompt persists across messages, but case-specific facts (customer ID, order ID) are best placed in the first user message or injected before every loop iteration. The system prompt is for universal rules; the user message is for case state.

When the conversation summarizes, update the case-facts block to reflect the latest state.

The block is immutable. Update only on explicit user correction ("the customer ID is actually..."). Conversation flow (e.g. "agent confirmed the amount") does not change the block. Immutability is the guarantee that prevents lossy edits.

Progressive summarization is unnecessary if the model has a 200k context window.

Even with 200k tokens, early-conversation facts can drift into the Lost-in-the-Middle zone after turn 50. The block keeps facts at the top, eliminating drift. Larger context windows do not eliminate the need for the pattern.

Format the case-facts block as a natural-language sentence so it reads cleanly.

Use a structured format (YAML, JSON, or a labeled key-value block). Natural language is lossy: compare "the customer mentioned it was around $500" with the precise refund_amount: 500.00. Tools rely on exact values; vague prose causes failures and policy violations.

Side-by-side

| Aspect | Case-Facts Block | Progressive Summarization | Sliding Window | Full History |
|---|---|---|---|---|
| Transactional fact preservation | Immutable, always at top | Lossy (facts drift into summary) | Facts can drift mid-window | 100% preserved |
| Implementation overhead | Minimal (prepend + preserve) | Moderate (extract, summarize, reinject) | Low (FIFO trim) | None |
| Scaling to 50+ turns | Yes, facts never lost | Partial, depends on summarization care | No, early facts lost first | No, hits token limit |
| Tool integration | Tools read exact values from block | Tools read vague pronouns from summary | Tools read recent context only | Tools read full conversation |
| Audit trail | Clean, block doubles as record | Weak, summary-based | Weak, only recent visible | Excellent, raw history |
| Best for | Compliance, support, legal, handoff | Cost optimization, casual chat | Simple chat with no transactional state | Short conversations only |

Decision tree

Does the conversation contain exact transactional data (amounts, IDs, dates) that tools must consume?

Will this conversation cross 20+ turns or be handed off to another agent or human?

Are you coordinating multiple subagents on the same case?

Is this a compliance, healthcare, or legal context with audit requirements?

Are facts changing during the conversation (rare, but real)?

refund_amount_revised). Never overwrite the original.

Question patterns

A support conversation hits turn 30 and the model loses the customer ID. You're using progressive summarization. What's missing?

Your case-facts block has `customer_id`, `order_id`, `amount`. By turn 20 the agent calls `process_refund(amount="about $250")`. Why?

messages.create() call.

The user provides a corrected customer ID mid-conversation. How do you update the case-facts block?

customer_id_revised). Never let the agent self-modify the block.

You pass the case-facts block to a subagent in the task string. The subagent ignores it and asks for the customer ID. Why?

Your case-facts block grows to 50 fields. Tokens add up. How do you trim?

After multi-session storage and retrieval, the case-facts block sometimes loses fields. What's the architecture fix?

You're using Anthropic's prompt caching. Should the case-facts block be cached?

cache_control: {type: "ephemeral"}. Saves ~90% on input cost for that section per turn.

The conversation is 60 turns and you're hitting context limits. What's the right preservation strategy?

Frequently asked

Where exactly should the case-facts block go in the prompt?

How big should the case-facts block be?

What happens if the model tries to modify a fact mid-conversation?

cus_xyz," update the block. Conversation flow never modifies it. This is the immutability guarantee that prevents agentic drift.

Does the case-facts block interact with prompt caching?

cache_control: {type: "ephemeral"} to save roughly 90% on the input cost of that section. The growing message list changes every turn and is rewritten whenever it is summarized, so it caches far less reliably than the stable block.

How do I know if progressive summarization preserved the facts?

verify_case_facts_present(messages) returns true only if the block is intact at the top. Failing this check should raise an exception, not silently continue with corrupted state.

What if a fact is unknown at case start?

null or omit the field. Example: customer_id: cus_abc123, refund_amount: null. Tell the model: "if the amount is unknown, leave it null. Do not fabricate it." Honest absence beats invented values.

Can a case-facts block be updated mid-loop?

How does the block integrate with multi-agent systems?

task = facts_block + "\n\n" + user_request.
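The concatenation above can be wrapped in a small helper. This sketch assumes the subagent sees only the task string it is handed; `build_subagent_task` is a hypothetical name, and the delimiter check is an added safeguard, not something the source prescribes.

```python
def build_subagent_task(facts_block: str, user_request: str) -> str:
    """Prefix a subagent's task with the case-facts block, unchanged."""
    # Refuse to hand off a task if the block has lost its delimiters.
    if not (
        facts_block.startswith("<CASE_FACTS>")
        and facts_block.endswith("</CASE_FACTS>")
    ):
        raise ValueError("facts_block must be a complete <CASE_FACTS> block")
    return facts_block + "\n\n" + user_request


facts_block = "<CASE_FACTS>\ncustomer_id: cus_abc123\norder_id: ord_xyz789\n</CASE_FACTS>"
task = build_subagent_task(facts_block, "Check refund eligibility for this order.")
```

Building every subagent task through the same helper means each one starts from the identical block rather than from a paraphrase written by the coordinating agent.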