TLDR

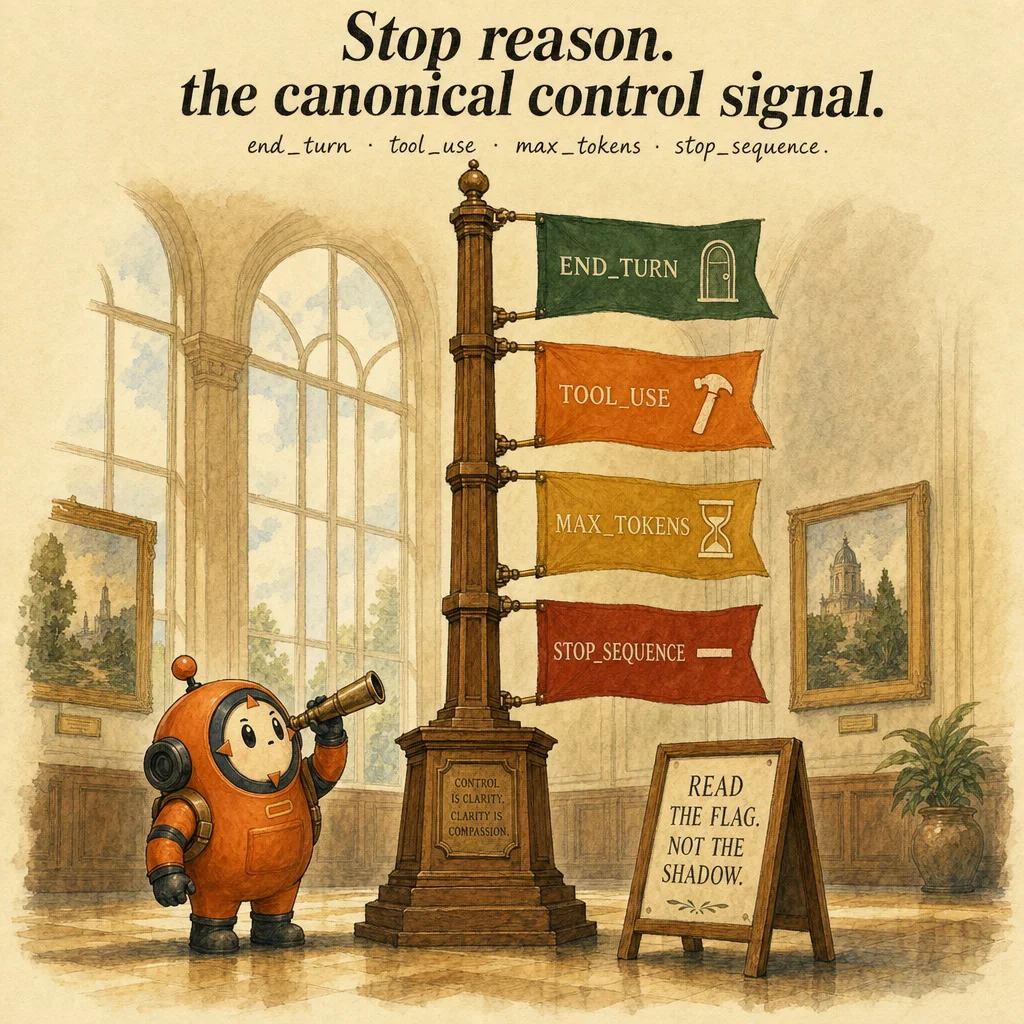

stop_reason is the authoritative response field that says why Claude stopped, most often end_turn (finished) or tool_use (wants to call a tool). Parsing natural-language phrases to detect termination is the most-tested distractor: it is unreliable and fails in production. Branching on stop_reason is the only deterministic loop control.

What it is

stop_reason is an enum field on every messages.create() response that signals why Claude stopped generating. The four values this page covers: end_turn (model is done), tool_use (model wants tools executed), max_tokens (output budget exhausted), and stop_sequence (custom stop string matched). The API defines a few further values, such as pause_turn and refusal, which are out of scope here. stop_reason is the authoritative termination signal in any agentic loop.

The contract is structural, not linguistic. A response can contain the words "I'm done" while stop_reason is still tool_use: the text is preamble ("let me verify the customer first"), and the tool_use block is the real action. Conversely, a response can say nothing about completion while stop_reason: "end_turn" signals it. Always read the field; never read the text.

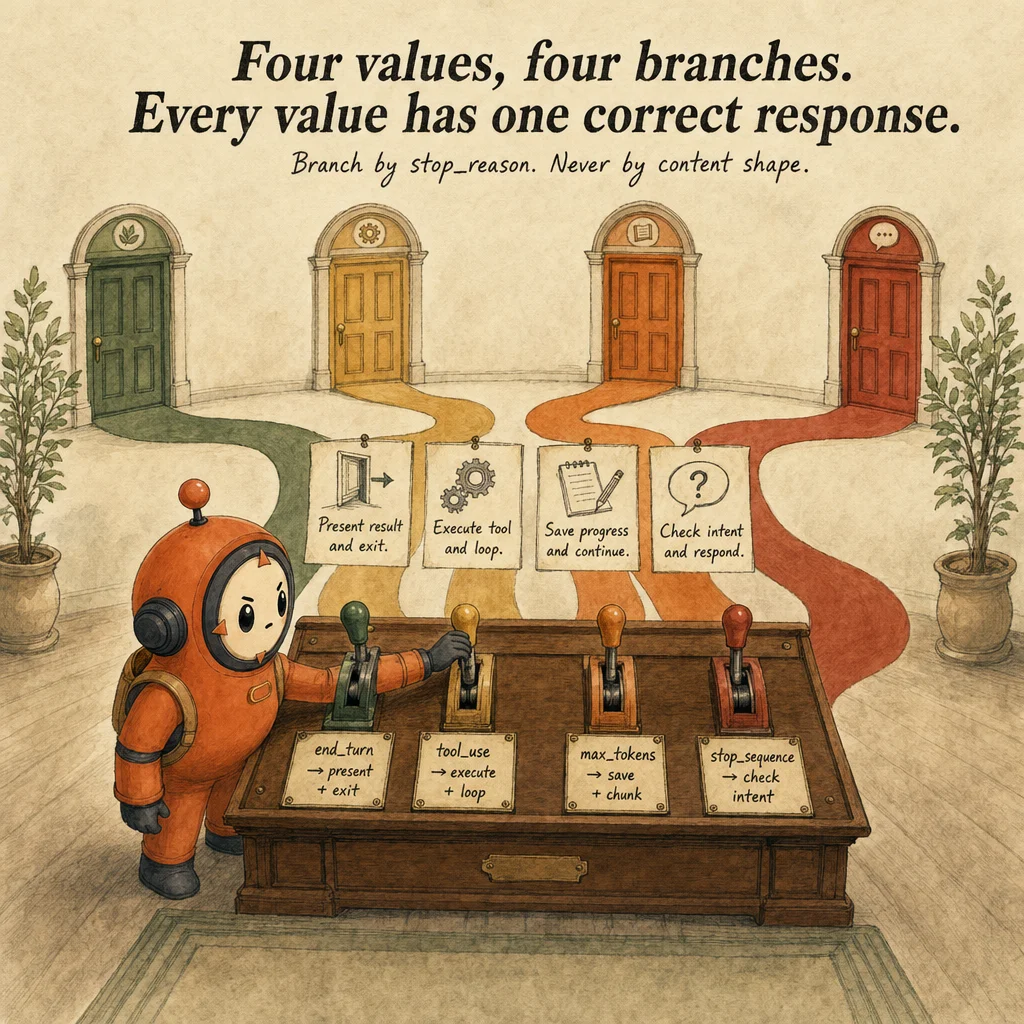

The two production branches you write code for every day are end_turn (exit the loop) and tool_use (execute tools, append results, continue). Everything else is either a graceful partial (max_tokens) or a rare edge case (stop_sequence). The exam drills these four branches relentlessly because text-shape parsing is the #1 bug: developers check content[0].type === "text" to decide "the agent is done," but a typical assistant message is [text, tool_use, tool_use].
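To make the text-shape bug concrete, the sketch below compares the two checks on a stubbed [text, tool_use, tool_use] message. The SimpleNamespace objects only stand in for SDK response objects, and the helper names and tool names are hypothetical, chosen for illustration.

```python
from types import SimpleNamespace

# Stub of an assistant message shaped like [text, tool_use, tool_use].
# These are stand-ins for SDK objects; only the attributes used here exist.
resp = SimpleNamespace(
    stop_reason="tool_use",
    content=[
        SimpleNamespace(type="text", text="Let me verify the customer first."),
        SimpleNamespace(type="tool_use", id="toolu_1", name="get_customer", input={"id": 42}),
        SimpleNamespace(type="tool_use", id="toolu_2", name="get_balance", input={"id": 42}),
    ],
)


def is_done_by_text_shape(message) -> bool:
    """Buggy check: treats a leading text block as completion."""
    return message.content[0].type == "text"


def is_done_by_stop_reason(message) -> bool:
    """Structural check: only end_turn means the turn is finished."""
    return message.stop_reason == "end_turn"


text_shape_verdict = is_done_by_text_shape(resp)    # True: would exit with two tool calls pending
stop_reason_verdict = is_done_by_stop_reason(resp)  # False: the loop keeps going
pending_calls = [b.name for b in resp.content if b.type == "tool_use"]
```

The text-shape check reports completion even though two tool calls are still waiting to be executed, which is exactly the early exit described above.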

`max_tokens` is not an error; it's a normal partial-result signal. When the output budget is exhausted mid-task, Claude emits stop_reason: "max_tokens" with whatever was generated so far. Save the partial work, then either raise max_tokens for a retry or chunk the input. Treating it as a crash loses real progress. The exam tests whether you recognize partial completion as a design decision, not a failure mode.

How it works

Every messages.create() call returns a Message object with a stop_reason field. The value reflects how generation ended: if the model finishes its response, stop_reason is end_turn; if it requests a tool, content includes one or more tool_use blocks and stop_reason is tool_use; if output reaches max_tokens, generation stops there and stop_reason is max_tokens; if a custom stop string is matched, stop_reason is stop_sequence. On a completed, non-streaming response the field is always set.

The canonical branching pattern: if stop_reason == "end_turn", exit; else if stop_reason == "tool_use", execute and continue; else if stop_reason == "max_tokens", save the partial result; else if stop_reason == "stop_sequence", inspect the match. Missing a branch is a logic bug: the loop either fails silently or retries indefinitely. TypeScript's union types make this safer: with an exhaustive switch, the compiler can catch missed cases.
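One way to keep a missing branch from failing silently is a lookup table that raises on anything it does not recognize. This is a minimal sketch rather than a prescribed pattern; the action names and the next_action helper are hypothetical.

```python
from types import SimpleNamespace

# Hypothetical action names; the mapping mirrors the branching described above.
ACTIONS = {
    "end_turn": "exit",
    "tool_use": "execute_and_continue",
    "max_tokens": "save_partial",
    "stop_sequence": "inspect_match",
}


def next_action(resp) -> str:
    """Return the loop action for a response, failing loudly on unknown values."""
    try:
        return ACTIONS[resp.stop_reason]
    except KeyError:
        # An unhandled value surfaces immediately instead of looping forever.
        raise ValueError(f"unhandled stop_reason: {resp.stop_reason!r}") from None


action = next_action(SimpleNamespace(stop_reason="tool_use"))
```

Raising on an unknown value trades a silent stall for an explicit error, which is usually easier to notice in logs.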

Tool execution happens only when stop_reason == "tool_use". In that case, content is an array containing one or more tool_use blocks (each with name, id, and input JSON). Your harness appends the assistant message to the message list, executes each requested tool, and appends a user message holding one tool_result block per call, each referencing the id of its tool_use block. The list grows: [user_msg, assistant_resp_1, tool_results_1, assistant_resp_2, tool_results_2, ...]. Growth is bounded by context size and by the stop_reason signal.
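The loop described above can be sketched as follows. This is an illustration under assumptions: `create` is a hypothetical callable wrapping client.messages.create, `tools` is a hypothetical dict of plain Python functions, and the scripted stub replaces real API calls so the flow can be followed offline.

```python
from types import SimpleNamespace


def run_agent_loop(create, tools, messages, max_turns=20):
    """Drive the loop on stop_reason; max_turns is a safety buffer only."""
    for _ in range(max_turns):
        resp = create(messages=messages)
        # Echo the assistant turn back into the history before anything else.
        messages.append({"role": "assistant", "content": resp.content})
        if resp.stop_reason != "tool_use":
            return resp  # end_turn, max_tokens, stop_sequence: caller decides
        results = []
        for block in resp.content:
            if block.type != "tool_use":
                continue  # skip preamble text blocks
            output = tools[block.name](**block.input)
            results.append({
                "type": "tool_result",
                "tool_use_id": block.id,
                "content": str(output),
            })
        # All results for this turn go back in a single user message.
        messages.append({"role": "user", "content": results})
    raise RuntimeError("max_turns reached without a terminal stop_reason")


# Scripted stand-in for the API: one tool_use turn, then end_turn.
scripted = iter([
    SimpleNamespace(stop_reason="tool_use", content=[
        SimpleNamespace(type="text", text="Checking the balance."),
        SimpleNamespace(type="tool_use", id="toolu_1", name="get_balance",
                        input={"account": "A1"}),
    ]),
    SimpleNamespace(stop_reason="end_turn", content=[
        SimpleNamespace(type="text", text="The balance is 120."),
    ]),
])

history = [{"role": "user", "content": "What is my balance?"}]
final = run_agent_loop(
    create=lambda messages: next(scripted),
    tools={"get_balance": lambda account: 120},
    messages=history,
)
```

After the run, history holds four entries in the order user, assistant, tool results, assistant, matching the growth pattern described above.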

The max_tokens parameter sets the output budget per turn, not globally. If you set max_tokens=2048 and the response needs more than that, generation stops at the limit and stop_reason: "max_tokens" arrives. The next call with max_tokens=4096 gets a fresh budget. Many developers assume a single max_tokens value caps the entire loop. It does not.
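A retry with a larger per-turn budget might look like the sketch below. The `create` wrapper, the doubling policy, and the `ceiling` value are assumptions made for illustration; the stub pretends a full answer needs 3000 output tokens.

```python
from types import SimpleNamespace


def create_with_budget_retry(create, messages, max_tokens=2048, ceiling=8192):
    """Retry a truncated turn with a doubled output budget.

    Returns (response, partials), where partials holds the truncated text
    of every attempt that hit the budget.
    """
    partials = []
    while True:
        resp = create(messages=messages, max_tokens=max_tokens)
        if resp.stop_reason != "max_tokens":
            return resp, partials
        # Keep the partial output before deciding what to do next.
        partials.append("".join(b.text for b in resp.content if b.type == "text"))
        if max_tokens >= ceiling:
            return resp, partials  # stop raising the budget; caller should chunk
        max_tokens = min(max_tokens * 2, ceiling)


def fake_create(messages, max_tokens):
    # Stub: the full answer is assumed to need 3000 output tokens.
    truncated = max_tokens < 3000
    return SimpleNamespace(
        stop_reason="max_tokens" if truncated else "end_turn",
        content=[SimpleNamespace(type="text", text="partial" if truncated else "complete")],
    )


resp, partials = create_with_budget_retry(fake_create, messages=[], max_tokens=2048)
```

Each call receives its own budget, so the second attempt at 4096 is not reduced by what the first attempt consumed.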

Where you'll see it

Chatbot turn termination

User asks for an account balance. Agent calls get_balance. Response: stop_reason='tool_use'. Agent executes, appends result. Next turn: stop_reason='end_turn' with the answer. If code instead checks content[0].type === 'text', it exits at any text presence, even mid-tool-use.

Long-document extraction with token limits

Extracting entities from a 200-page contract. Mid-extraction, stop_reason='max_tokens' arrives. Code must save state, return partial result, and either chunk or raise the limit. Treating max_tokens as success silently truncates output.

Headless CI run

Claude in -p mode emits a single response. The CI script reads stop_reason from the JSON output. end_turn → success path; max_tokens → truncated; stop_sequence → matched a custom guard. The script must branch on all four outcomes.

Code examples

```python
from anthropic import Anthropic

client = Anthropic()


def handle_response(resp) -> dict:
    """Branch on stop_reason. Never inspect content shape for termination."""
    if resp.stop_reason == "end_turn":
        # Normal completion. Extract text and exit loop.
        text = "".join(b.text for b in resp.content if b.type == "text")
        return {"status": "ok", "text": text}

    if resp.stop_reason == "tool_use":
        # Continue loop: execute tools, append results, resend.
        return {"status": "continue", "tool_calls": [
            {"id": b.id, "name": b.name, "input": b.input}
            for b in resp.content if b.type == "tool_use"
        ]}

    if resp.stop_reason == "max_tokens":
        # Token budget exhausted. Return partial; chunk on retry.
        partial = "".join(b.text for b in resp.content if b.type == "text")
        return {"status": "partial", "text": partial,
                "next_action": "chunk_input_or_raise_max_tokens"}

    if resp.stop_reason == "stop_sequence":
        # A custom stop_sequence matched. Treat as intentional termination.
        return {"status": "stopped", "matched_sequence": resp.stop_sequence}

    # Unknown stop_reason: log and fail safely.
    return {"status": "error", "stop_reason": resp.stop_reason}
```

```typescript
import Anthropic from "@anthropic-ai/sdk";

type LoopOutcome =
  | { status: "ok"; text: string }
  | { status: "continue"; toolCalls: Anthropic.ToolUseBlock[] }
  | { status: "partial"; text: string; nextAction: string }
  | { status: "stopped"; matched?: string }
  | { status: "error"; stopReason: string | null };

function handleResponse(resp: Anthropic.Message): LoopOutcome {
  switch (resp.stop_reason) {
    case "end_turn":
      return {
        status: "ok",
        text: resp.content
          .filter((b): b is Anthropic.TextBlock => b.type === "text")
          .map((b) => b.text)
          .join(""),
      };
    case "tool_use":
      return {
        status: "continue",
        toolCalls: resp.content.filter(
          (b): b is Anthropic.ToolUseBlock => b.type === "tool_use",
        ),
      };
    case "max_tokens":
      return {
        status: "partial",
        text: resp.content
          .filter((b): b is Anthropic.TextBlock => b.type === "text")
          .map((b) => b.text)
          .join(""),
        nextAction: "chunk_or_raise_max_tokens",
      };
    case "stop_sequence":
      return { status: "stopped", matched: resp.stop_sequence ?? undefined };
    default:
      return { status: "error", stopReason: resp.stop_reason };
  }
}
```

Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

| Looks right | Why it isn't |
|---|---|
| If the response has no tool_use blocks, the agent is done. | A response can have both text and tool_use blocks. stop_reason is the authoritative field. If stop_reason='tool_use', continue the loop even if text is present. |
| Treat max_tokens as a failure and abort. | max_tokens is a normal partial-result signal. Save what was returned, then either raise max_tokens or chunk the input. Aborting loses the partial work. |
| Stop the agent preemptively when token usage approaches the cap. | Preemptive stops lose real work and behave unpredictably. Let the agent finish its turn and read stop_reason; Claude already manages this gracefully. |

Side-by-side

| stop_reason | Meaning | Next action | Production risk |
|---|---|---|---|
| end_turn | Agent finished naturally | Exit loop, present text | Low, success path |
| tool_use | Agent wants to call a tool | Execute, append result, continue | High if loop checks text instead |
| max_tokens | Output token budget hit | Return partial, plan retry/chunk | Medium, surfaces undersized max_tokens |
| stop_sequence | Custom stop string matched | Exit, inspect for intent | Low, only if you set it intentionally |

Decision tree

Are you building an agentic loop with tools?

Could max_tokens fire on long inputs?

Are you setting a custom stop_sequence parameter?

Question patterns

Your loop checks `response.text.includes('done')` to decide termination. What can go wrong?

tool_use block in the same response. The text is preamble; the tool_use is the real next step. Branch on stop_reason, not text.A response has both a text block and a tool_use block. Which should you handle first?

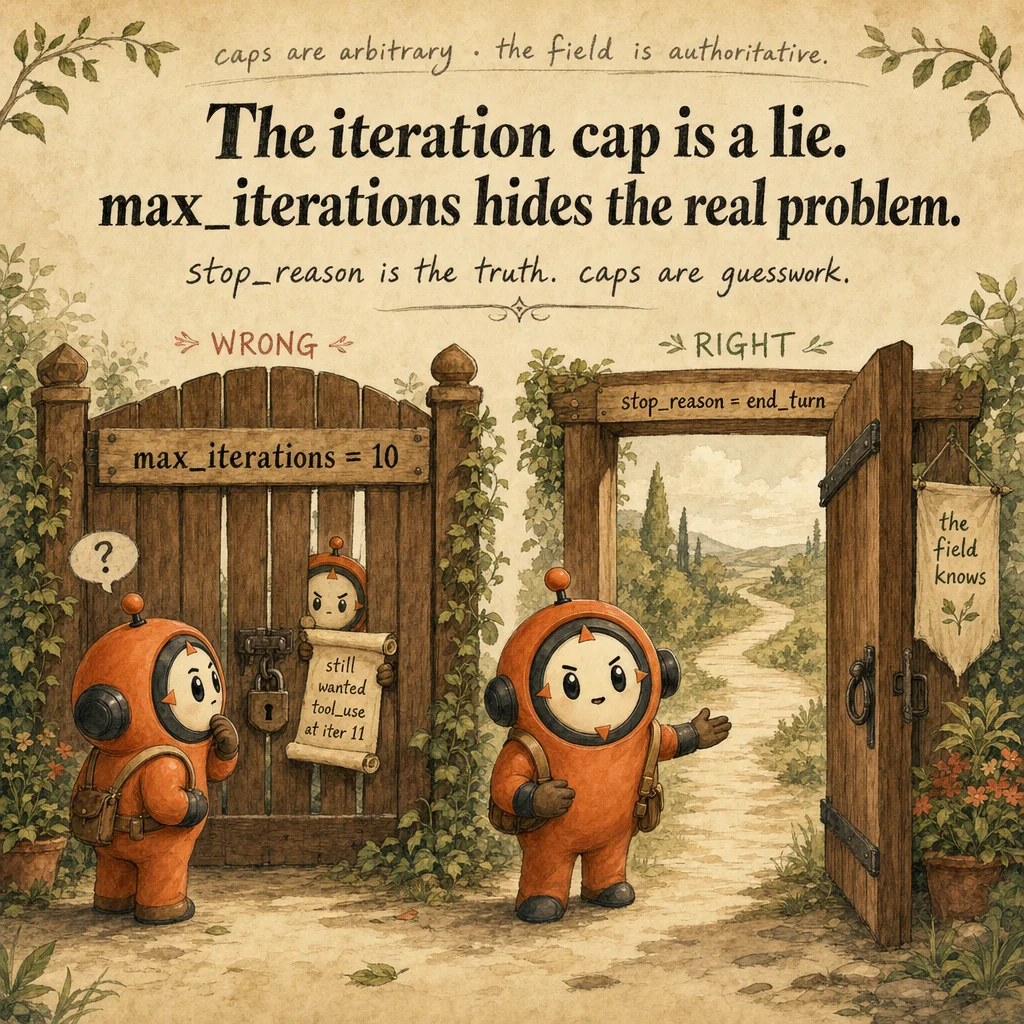

stop_reason. If it's tool_use, execute the tool call regardless of text presence. If it's end_turn, the text is the final response. Block-level inspection is unreliable; the field is authoritative.You set `max_iterations = 5` to prevent infinite loops. The agent fails on legitimate 7-iteration tasks. What's the real fix?

tool_result append, ambiguous tool descriptions, or two tools that look interchangeable. Caps mask bugs; stop_reason is the primary signal. Raise the cap to a safety buffer, not the primary control.An agent loop hits `stop_reason: "max_tokens"`. Your code throws an error and exits. What should production code do?

max_tokens as a partial-result signal, not a failure. Save what was generated, then either raise max_tokens for a retry or chunk the input. The agent did real work; don't discard it.You set a custom `stop_sequences` parameter. The agent stops mid-task. Why?

response.stop_sequence to see which one triggered. Either tighten the sequence (more specific) or remove it. Custom stop sequences are a sharp tool.Your TypeScript handler has if/else branches for `end_turn`, `tool_use`, and `max_tokens`. What's missing?

stop_sequence branch for completeness, and a default branch for unknown values (defensive). Use a discriminated union type so the compiler enforces exhaustive checks.An agent calls the same tool 5 times in a row with identical input. Why?

tool_result block to the message list, so Claude doesn't know the tool ran. Without the result, the model re-requests indefinitely until max_iterations saves you.Why is `stop_reason` better than counting tool_use blocks for loop control?

stop_reason is a single authoritative field set during generation, designed for control flow. It's faster, cleaner, and immune to multi-block responses.