TLDR

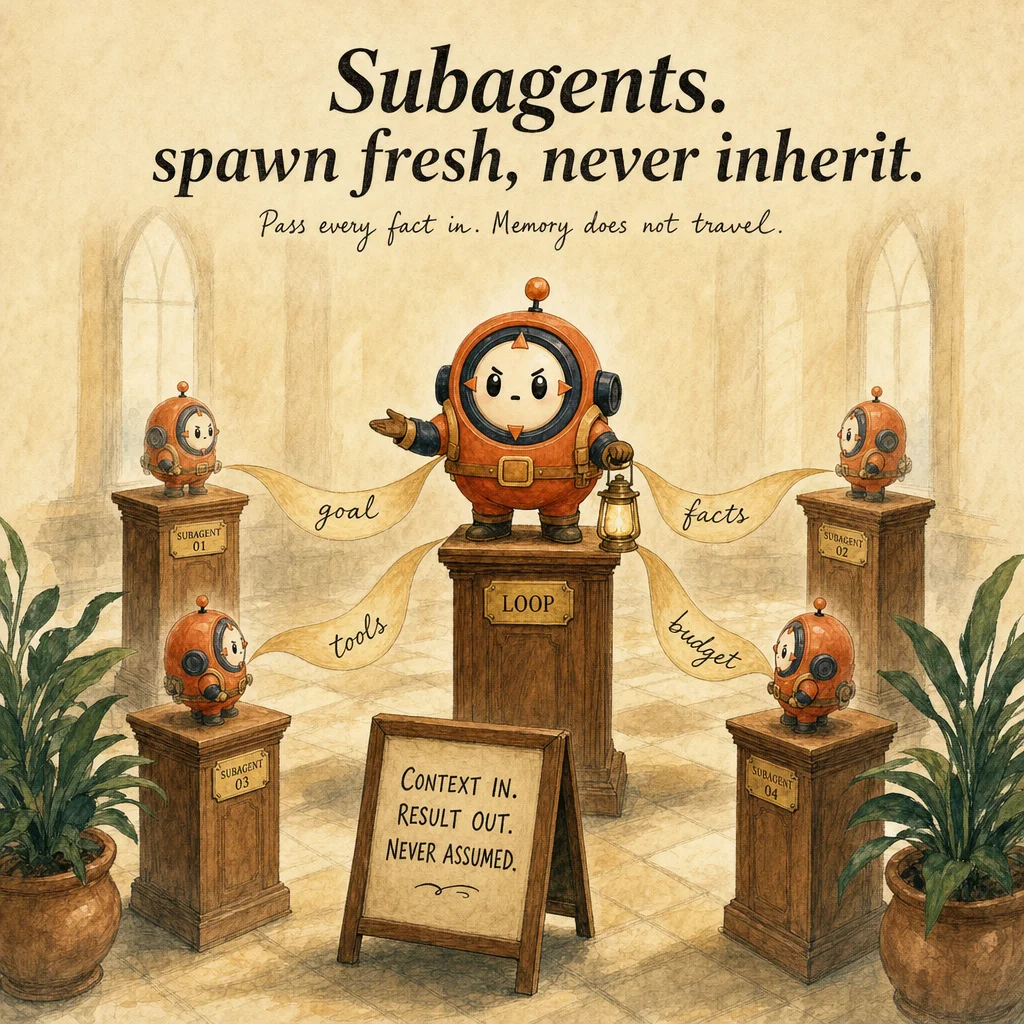

Subagents are specialized, isolated agents spawned by a coordinator to handle domain-specific tasks while preserving conversation isolation. They do NOT inherit memory; the coordinator passes context explicitly in the prompt. The hub-and-spoke layout prevents context creep from parallel work.

What it is

A subagent is a specialized assistant the coordinator spawns to handle a focused task in isolation. It receives an explicit task string, runs in its own fresh context window, and returns only a structured summary. The intermediate work (file reads, searches, tool calls) is discarded. From the coordinator's perspective the subagent is a black box: task in, summary out.

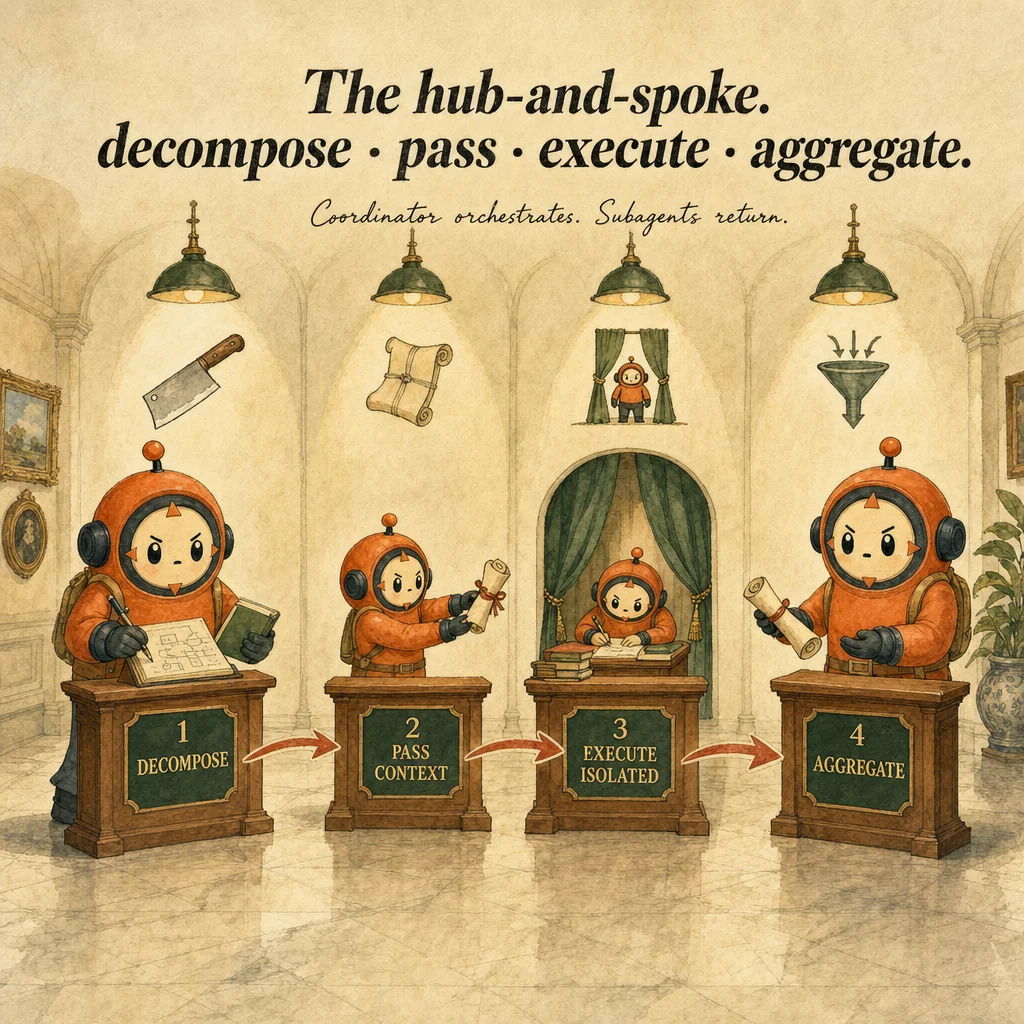

The mental model is hub-and-spoke. The coordinator (hub) owns task decomposition and result aggregation. Subagents (spokes) are stateless executors that never inherit the coordinator's history, never share memory between invocations, and never communicate directly with each other. Every fact a subagent needs must be embedded in its task string. This isolation is what lets subagents run in parallel without the context explosion that would kill a single mega-loop.
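Because every fact has to travel inside the task string, it can help to assemble that string from explicit parts. The following is a minimal sketch, not an SDK feature: `build_task`, its parameters, and the example facts are all hypothetical names chosen for illustration.

```python
def build_task(instruction: str, facts: dict[str, str], output_format: str) -> str:
    """Assemble a self-contained task string for a subagent (hypothetical helper)."""
    lines = [instruction, "", "Context you need (nothing else is shared with you):"]
    for key, value in facts.items():
        lines.append(f"- {key}: {value}")
    lines += ["", "Return exactly this format:", output_format]
    return "\n".join(lines)


# Example values are made up; the point is that the subagent sees only this text.
task = build_task(
    "Find every import of the deprecated client.",
    {"repo": "auth-service", "deprecated module": "legacy_client"},
    '{"references": [{"file": "...", "line": 0}]}',
)
print(task)
```

Anything the coordinator knows but leaves out of `facts` is simply unavailable to the subagent, which makes omissions easy to spot in review.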

Subagents are scoped by three mechanisms: system prompt (the role description), allowed-tools (the SDK-enforced tool list), and output format (the shape of the summary). A code-review subagent gets [Read, Grep, Bash] but not Edit. A retriever gets [Read, Glob, WebSearch] but no destructive capability. The contract is tool-based, not language-based: because the SDK enforces the allowed-tools list, you don't have to hope the subagent respects a prompt suggestion.
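The three scoping mechanisms can be pictured as one small record per subagent. This is an illustrative data structure only; `SubagentConfig` and its field names are assumptions and do not reflect the SDK's actual configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubagentConfig:
    """Hypothetical record of the three scoping mechanisms."""
    name: str
    system_prompt: str             # the role description
    allowed_tools: frozenset[str]  # the tool list the runtime would enforce
    output_format: str             # the shape of the returned summary

    def can_use(self, tool: str) -> bool:
        return tool in self.allowed_tools


REVIEWER = SubagentConfig(
    name="code-reviewer",
    system_prompt="You review code for security, performance, and maintainability.",
    allowed_tools=frozenset({"Read", "Grep", "Bash"}),
    output_format='{"verdict": "...", "critical_issues": [], "minor_issues": []}',
)

RETRIEVER = SubagentConfig(
    name="retriever",
    system_prompt="You answer questions from internal docs and the web.",
    allowed_tools=frozenset({"Read", "Glob", "WebSearch"}),
    output_format="A single answer with the sources you used.",
)

print(REVIEWER.can_use("Edit"))  # the reviewer has no Edit access
```

Checking `can_use` before dispatching a tool call is what makes the contract tool-based: the restriction holds even if the prompt is ignored.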

Production subagent failures cluster around three gaps: missing task context (the coordinator assumes the subagent knows facts it doesn't), vague output formats (the subagent doesn't know when to stop), and overscoped tools (Edit access on a reviewer that should only read). Each is a coordination bug, not a model limitation. The exam tests whether you recognize the right delegation signals: verbose work, parallel tasks, and read-only analysis are the canonical triggers for subagents over inline loops.

How it works

The coordinator invokes a subagent by passing three inputs: name (the subagent's identifier), task (a self-contained text description), and optional context metadata. The SDK spawns a fresh agent context, loads the subagent's system prompt and tool definitions from configuration, and starts an independent agentic loop inside the subagent. The subagent reads the task, plans, executes tools, and loops on stop_reason exactly as a standalone loop would.
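The loop inside a subagent can be sketched without any network calls. In the sketch below the model is a scripted stand-in, and `run_subagent`, `ModelFn`, the reply dictionary shape, and the `max_iterations` default are assumptions made for illustration, not the SDK's interface.

```python
from typing import Callable

# Hypothetical stand-in for a model call: takes the message list and returns
# a dict with "stop_reason" plus either "text" or a tool request.
ModelFn = Callable[[list[dict]], dict]


def run_subagent(task: str, model: ModelFn, tools: dict[str, Callable[[str], str]],
                 max_iterations: int = 10) -> str:
    """Run an isolated loop and return only the final text."""
    messages = [{"role": "user", "content": task}]  # fresh context: the task only
    for _ in range(max_iterations):
        reply = model(messages)
        if reply["stop_reason"] == "end_turn":
            return reply["text"]  # the message list is dropped on return
        if reply["stop_reason"] == "tool_use":
            name, arg = reply["tool"], reply["input"]
            if name in tools:
                result = tools[name](arg)
            else:
                result = f"error: tool {name!r} is not allowed"
            messages.append({"role": "assistant", "content": f"call {name}({arg})"})
            messages.append({"role": "user", "content": result})
            continue
        raise RuntimeError(f"unexpected stop_reason: {reply['stop_reason']}")
    raise RuntimeError("subagent exceeded max_iterations")


def scripted_model(messages: list[dict]) -> dict:
    """Fake model: request one file read, then finish."""
    if len(messages) == 1:
        return {"stop_reason": "tool_use", "tool": "Read", "input": "auth.ts"}
    return {"stop_reason": "end_turn", "text": "1 issue found in auth.ts"}


summary = run_subagent(
    "Review auth.ts", scripted_model, {"Read": lambda path: f"<contents of {path}>"}
)
print(summary)
```

The coordinator receives only `summary`; the intermediate `messages` list never leaves the function.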

The message list inside the subagent is isolated from the coordinator. Every file read, every tool call, every intermediate result stays in that nested context window. This is the efficiency win: if a code-review subagent reads 20 files to find one bug, the coordinator pays no token cost for those 20 reads. Only the final structured report ("vulnerability in auth.ts line 47, severity high") returns.

When the subagent reaches stop_reason: "end_turn", it emits a final message. The SDK extracts the text and returns it to the coordinator as a tool_result block. The subagent's entire history is then discarded; the agent is stateless by design. If the coordinator needs more work later, it spawns a new invocation with a fresh context. There is no resumption, no second turn, no continuation.
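On the coordinator side, a returned summary ends up as a `tool_result` content block in a user-role message. The sketch below builds that shape by hand, following the Messages API block layout (`type`, `tool_use_id`, `content`); the helper names and the `toolu_...` IDs are hypothetical.

```python
def to_tool_result(tool_use_id: str, summary: str) -> dict:
    """Wrap one subagent summary as a tool_result content block."""
    return {"type": "tool_result", "tool_use_id": tool_use_id, "content": summary}


def results_message(summaries: dict[str, str]) -> dict:
    """Bundle one or more summaries into a single user-role message."""
    blocks = [to_tool_result(tool_id, text) for tool_id, text in summaries.items()]
    return {"role": "user", "content": blocks}


# Placeholder IDs; real ones come from the tool_use blocks the coordinator emitted.
message = results_message({
    "toolu_example_1": "vulnerability in auth.ts line 47, severity high",
    "toolu_example_2": "no issues found",
})
print(message)
```

Nothing from the subagent's own history appears here: the coordinator's message list grows by one block per subagent, regardless of how many tool calls the subagent made.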

Parallel execution costs the coordinator no extra context. If a coordinator needs four subagents to analyze four repos, the SDK spawns all four simultaneously, awaits all four results, and aggregates them. Each subagent runs in its own context window with no contention; the cost is four separate completions, but the speedup and context cleanliness justify it for any decomposable task. The pattern collapses cleanly to one subagent when work isn't parallelizable.

Where you'll see it

Parallel multi-repo code analysis

The coordinator gets a task: "find all references to the deprecated client across our 12 repos." Instead of visiting each repo in a massive inline loop (token explosion), it spawns four subagents in parallel, each scoped to three repos. Each subagent reads files, greps imports, and returns a structured report. The coordinator merges the four reports and deduplicates. Run inline, the same task consumes roughly 5x more tokens because every read accumulates in the main context.
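The split-and-merge bookkeeping in this scenario is plain list handling. A minimal sketch follows, assuming each subagent report is a list of `{"repo", "file", "line"}` entries; that report shape, the helper names, and the `repo-N` names are illustrative assumptions.

```python
def partition(items: list[str], groups: int) -> list[list[str]]:
    """Split items into `groups` contiguous chunks of near-equal size."""
    size, extra = divmod(len(items), groups)
    chunks, start = [], 0
    for i in range(groups):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def merge_reports(reports: list[list[dict]]) -> list[dict]:
    """Flatten per-subagent reports and drop duplicate (repo, file, line) hits."""
    seen, merged = set(), []
    for report in reports:
        for ref in report:
            key = (ref["repo"], ref["file"], ref["line"])
            if key not in seen:
                seen.add(key)
                merged.append(ref)
    return merged


repos = [f"repo-{n}" for n in range(1, 13)]  # 12 placeholder repo names
batches = partition(repos, 4)                # one batch per subagent
print([len(batch) for batch in batches])
```

Each batch would become one subagent's task string, and `merge_reports` runs once all four summaries are back.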

Code-review subagent in CI/CD

On every PR, a CI pipeline invokes a code-review subagent with the PR diff. The subagent is restricted to [Read, Grep, Bash], no Edit. It examines security, performance, and maintainability, and returns a JSON report. The pipeline decides whether to approve, request changes, or escalate. Without isolation the 50+ file reads pollute the main context; with the subagent, the main thread sees only the structured verdict.
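The pipeline's decision step can be a small pure function over the JSON report. The mapping below (critical issues block the PR, an unparseable or unclear report escalates) is one assumed policy, and `decide` is a hypothetical name; the field names follow the report format used elsewhere on this page.

```python
import json


def decide(report_text: str) -> str:
    """Map a reviewer's JSON report to a pipeline action (assumed policy)."""
    try:
        report = json.loads(report_text)
    except json.JSONDecodeError:
        return "escalate"  # unparseable output goes to a human
    if not isinstance(report, dict):
        return "escalate"
    if report.get("critical_issues"):
        return "request_changes"
    if report.get("verdict") == "approved":
        return "approve"
    return "escalate"


print(decide('{"verdict": "approved", "critical_issues": []}'))
```

Keeping this logic outside the subagent means the model only produces the verdict; the pipeline owns what happens next.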

Knowledge-base retriever subagent

A user asks a general question: "What's our refund policy for SaaS subscriptions?" A retriever subagent is spawned with [Read, Glob, WebSearch]. It searches internal docs and the web, summarizes its findings, and returns a single answer. The coordinator never sees the eight intermediate searches or the three docs that were skipped. The output is clean, directly usable, and audit-ready.

Structured extraction with a dedicated extractor

An invoice-processing pipeline receives a scanned PDF. Rather than have the main agent loop through 40 pages of OCR, a dedicated extraction subagent is spawned with a precise output format {vendor, amount, date, items[]}. It runs, returns the structured data, and exits. The main pipeline continues without ever seeing the intermediate OCR noise or abandoned extraction attempts.
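A precise output format is only useful if the pipeline checks it. The sketch below validates a returned record against the {vendor, amount, date, items[]} shape; the Python types chosen for each field are assumptions, since the shape above names the fields but not their types.

```python
def validate_invoice(data: dict) -> list[str]:
    """Return a list of problems; an empty list means the record matches the shape."""
    # Field types are assumed for illustration.
    expected = {"vendor": str, "amount": (int, float), "date": str, "items": list}
    problems = []
    for field, kind in expected.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], kind):
            problems.append(f"wrong type for {field}")
    return problems


record = {"vendor": "Acme", "amount": 120.5, "date": "2024-01-31", "items": []}
print(validate_invoice(record))
```

A non-empty result is a signal to respawn the extractor with a sharper task rather than to patch the data in the main pipeline.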

Code examples

```python
# Coordinator delegates to subagents in parallel.
# Each subagent runs in isolation; only the summary returns.
import asyncio
import json

from anthropic import Anthropic

client = Anthropic()


async def review_repo(name: str, files: list[str]) -> dict:
    """Spawn a code-review subagent for one repo."""
    task = f"""Review the codebase in {name}.
Files to check: {", ".join(files)}
Return JSON:
{{
  "verdict": "approved" | "needs_rework" | "hold",
  "critical_issues": [{{"file": "...", "line": N, "issue": "..."}}],
  "minor_issues": [...]
}}"""
    # In Claude Code SDK this is invoke_subagent("code-reviewer", task).
    # Here a plain Messages API call with a fresh message list stands in for
    # it; the sync client runs in a worker thread so calls can overlap.
    resp = await asyncio.to_thread(
        client.messages.create,
        model="claude-opus-4-5",
        max_tokens=1024,
        messages=[{"role": "user", "content": task}],
    )
    text = resp.content[0].text
    try:
        # Parse the report so the coordinator can read fields from it.
        review = json.loads(text)
        if not isinstance(review, dict):
            raise ValueError("expected a JSON object")
    except ValueError:  # also covers json.JSONDecodeError
        # Treat unparseable output as a held review instead of crashing.
        review = {"verdict": "hold", "raw": text}
    return {"repo": name, "review": review}


async def coordinate(repos: dict[str, list[str]]) -> dict:
    """Spawn one subagent per repo in parallel and aggregate."""
    results = await asyncio.gather(*[
        review_repo(name, files) for name, files in repos.items()
    ])
    return {
        "total": len(results),
        "reviews": results,
        "verdict": "approved" if all(
            r["review"].get("verdict") == "approved" for r in results
        ) else "needs_review",
    }


if __name__ == "__main__":
    print(asyncio.run(coordinate({
        "auth-service": ["auth.py", "oauth.py"],
        "payment-service": ["payments.py", "webhooks.py"],
    })))
```

Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

| Plausible-looking pattern | Failure it actually creates |
|---|---|
| Pass the entire coordinator conversation history to the subagent so it has full context. | Subagents do not inherit history. Passing prior messages confuses the subagent (it wasn't part of that conversation). Every fact must be embedded in the task string; make it self-contained. |
| Two subagents can coordinate with each other to share results. | Subagents communicate only through the coordinator. Direct subagent-to-subagent channels do not exist. If A's output feeds B's input, the coordinator must orchestrate: run A, collect its summary, pass that into B's task string. |
| Give every subagent full tool access (Edit, Bash, all of them) so it can handle any task. | Tool scope must match the role. A reviewer should not have Edit. A retriever should not have Bash. Overscoping causes accidental side effects (file edits, deployments) and obscures intent. Restrict to the minimum needed. |
| A vague output format is fine; the subagent will figure out when it's done. | Without a defined output format, subagents wander and run too long. Structured formats (JSON, fixed sections) create natural stopping points. A good subagent config includes an explicit output shape precisely because it doubles as a termination cue. |
| Subagent failure should trigger a retry with the same task. | Retries succeed only if the failure was transient. If the task is ambiguous or the tool set is wrong, retrying burns tokens without fixing the root cause. Debug the task string, the tool restrictions, and the output format first; retry second. |
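The retry rule can be made concrete by retrying only failures explicitly marked as transient and letting everything else surface immediately. In this sketch `TransientError` and `run_with_retry` are hypothetical names, and how a real runtime signals timeouts or rate limits is left as an assumption.

```python
import time
from typing import Callable


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def run_with_retry(spawn: Callable[[], str], attempts: int = 3, delay: float = 0.0) -> str:
    """Call `spawn`, retrying only on TransientError; other errors propagate at once."""
    for attempt in range(1, attempts + 1):
        try:
            return spawn()
        except TransientError:
            if attempt == attempts:
                raise  # out of attempts: surface the failure
            time.sleep(delay * attempt)  # simple linear backoff
    raise ValueError("attempts must be at least 1")


calls = {"count": 0}


def flaky_spawn() -> str:
    """Fake subagent call that times out twice, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("timeout")
    return "summary"


print(run_with_retry(flaky_spawn))
```

A malformed task or a wrong tool set would raise some other exception, skip the retry path entirely, and point the developer at the configuration instead.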

Side-by-side

| Aspect | Subagent (isolated) | Inline agentic loop | Parallel subagents | Sequential coordinator |
|---|---|---|---|---|
| Context window | Isolated; discarded after | Grows monotonically | N fresh windows | Main stays clean |
| Parallel execution | Yes, N concurrent | Single-threaded | Natural; N tasks async | Never parallel |
| Tool access | Scoped per role | Full set | Scoped per subagent | Full set |
| Output shape | Structured summary only | Full message history | N summaries merged | Single result |
| Visibility | Black box, no intermediate work shown | Every tool call visible | Summary only per subagent | Every step logged |
| Best for | Verbose or read-only tasks | 3 to 15 turn loops | 4+ parallel tasks | Fixed sequential pipelines |

Decision tree

Will the work produce verbose intermediate output (file reads, searches, false starts)?

Are the tasks independent and parallelizable (4 repos, each analyzed separately)?

Should this agent be unable to modify files (read-only analysis)?

Restrict allowed-tools to [Read, Grep, Glob, WebSearch]. Omit Edit, Bash, Write.

Can the task be expressed as a self-contained string without prior context?

Does the output need a strict format (JSON, structured sections, checklist)?

Question patterns

Your code-review subagent missed three critical issues that you can clearly see in the file. Why?

Two subagents are returning conflicting reports about the same bug. How do you resolve it?

A research subagent ran for 40 turns and returned a perfect summary, but the bill is huge. What's the architectural fix?

[…] {findings: [], confidence: number}) doubles as a stopping cue and caps token cost.

Your retriever subagent has `[Read, Grep, Glob, WebSearch, Bash, Edit]` and accidentally modified a config file. What was the design error?

[…] Edit. Restrict allowed-tools to [Read, Grep, Glob, WebSearch], the minimum needed for the role.

You spawned 4 subagents in parallel; 3 finished, the 4th hangs forever. How do you debug?

[…] max_iterations and a deterministic output format to bound it. Don't retry blindly.

Your coordinator passes the entire chat history to each subagent for "context." Subagents respond with confused outputs. Why?

A subagent returns `stop_reason: "max_tokens"` with a partial summary. Production code aborts. What should it do instead?
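A coordinator can branch on the stop reason instead of aborting. The sketch below either accepts the partial summary or prepares a narrower task for a fresh subagent; the function name, the action labels, the wording of the refined task, and the caller-supplied `usable` check are all assumptions made for illustration.

```python
from typing import Callable


def handle_result(stop_reason: str, text: str, task: str,
                  usable: Callable[[str], bool]) -> dict:
    """Decide what the coordinator does with a subagent's final message."""
    if stop_reason == "end_turn":
        return {"action": "accept", "summary": text}
    if stop_reason == "max_tokens":
        if usable(text):
            # The partial summary is good enough to keep.
            return {"action": "accept_partial", "summary": text}
        refined = f"{task}\n\nThe previous attempt ran out of tokens. Cover a smaller scope."
        return {"action": "respawn", "task": refined}
    return {"action": "escalate", "stop_reason": stop_reason}


outcome = handle_result(
    "max_tokens", "", "Review all 40 files.", usable=lambda summary: bool(summary.strip())
)
print(outcome["action"])
```

What counts as usable is a product decision, which is why the check is passed in rather than hard-coded.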

Your coordinator spawns Tool A, then waits for results, then spawns Tool B based on A's output. Why is this slower than ideal?

[…] Promise.all. The coordinator merges both outputs.

Frequently asked

What's the difference between a subagent and an agentic loop?

An agentic loop is a while block in the coordinator that runs tool_use to tool_result cycles. A subagent is a separate execution: the coordinator spawns it, it loops internally, and returns a summary. Subagents are best for isolated tasks; loops are best for single threads with complex tool interactions.

Can a subagent call another subagent?

How do I know when to use a subagent vs. staying inline?

What happens if a subagent's task is ambiguous?

How do I aggregate results from N parallel subagents?

[…] tool_result blocks, then append them to the coordinator's message list in a single user-role block. The coordinator can now reason over all N results together.

Can a subagent's output feed into another subagent's task?

What if a subagent exceeds its token budget?

The subagent returns stop_reason: "max_tokens" with a partial summary. Handle it in the coordinator: either accept the partial result or spawn a new subagent with a refined task ("focus on files X to Y only").

Are subagents cheaper than inline loops?

How do I prevent a subagent from entering an infinite loop?

Set max_iterations, define a clear output format (so the subagent knows when it's done), and ensure the task is well-scoped. A wandering subagent is usually a sign of a vague task or a vague output format.

Can subagents use MCP servers or custom tools?

[…] allowed-tools list) or MCP definitions (via .mcp.json). Tool access is scoped per subagent; the SDK enforces the restrictions.