TLDR

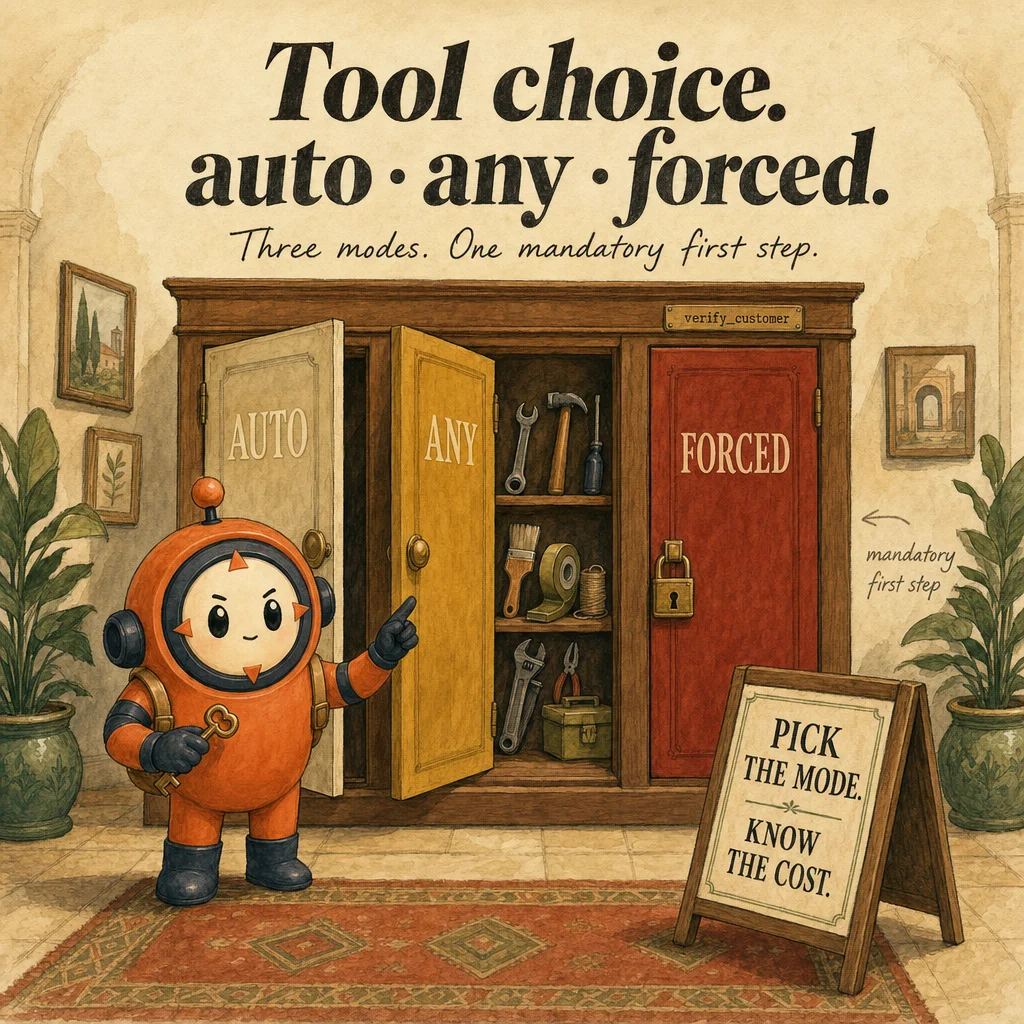

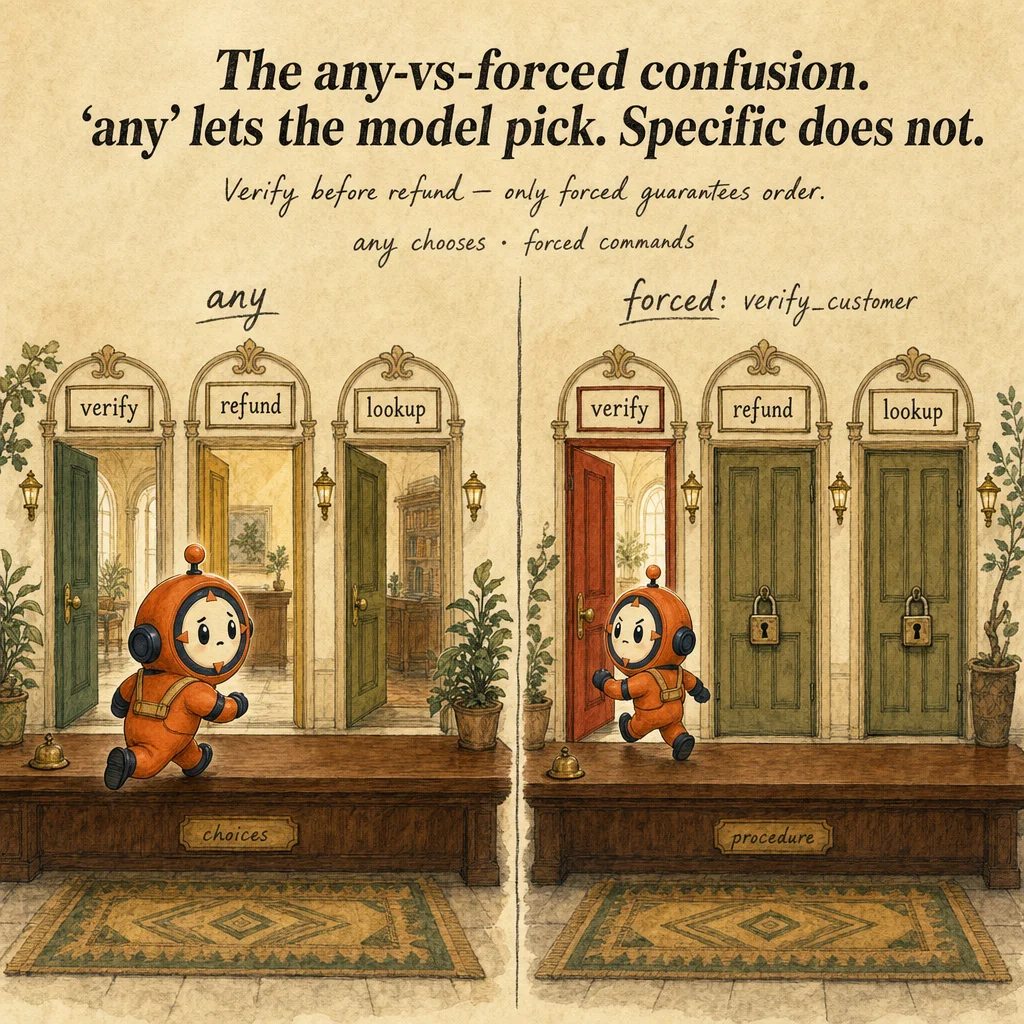

tool_choice is the parameter that controls whether Claude can decide to use a tool ("auto"), must call any tool ("any"), or must call a specific tool by name. Force a specific tool for mandatory first steps, such as identity verification before a refund.

What it is

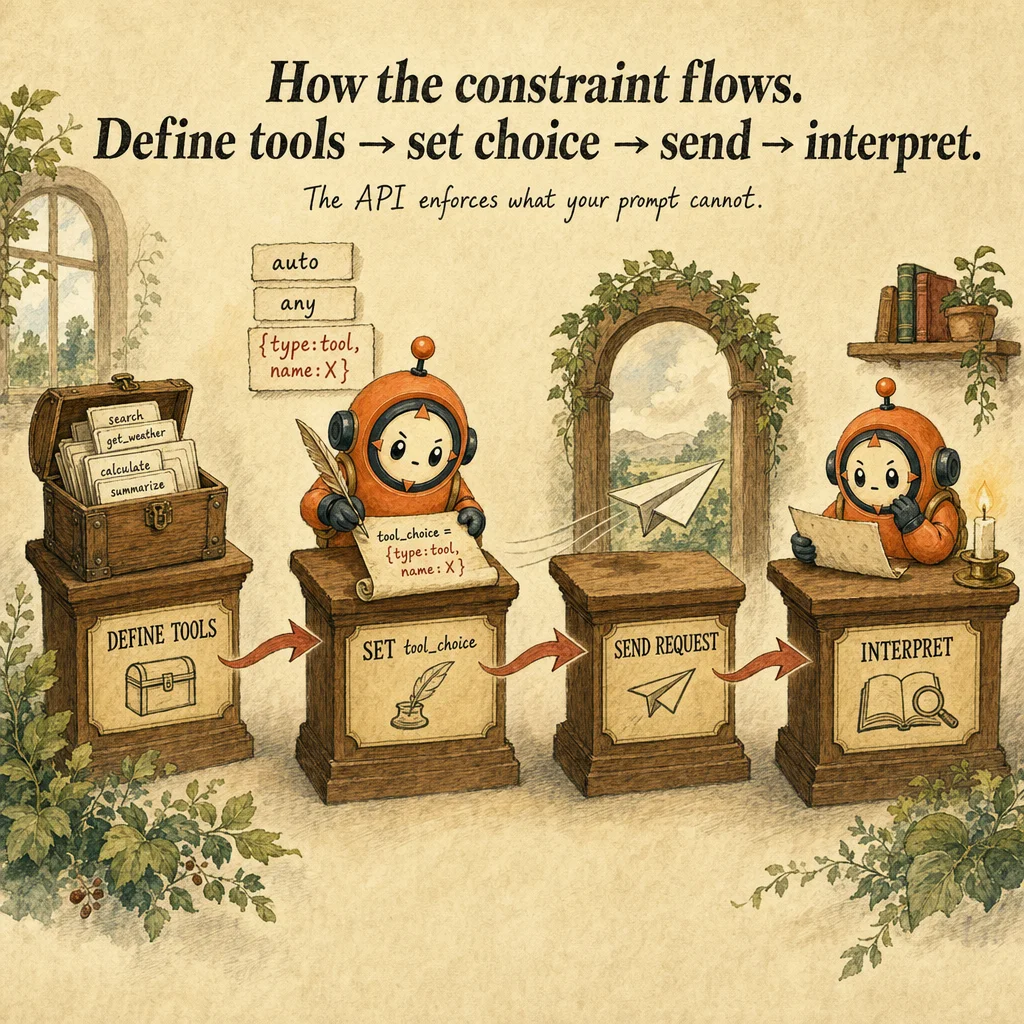

tool_choice is a request-level parameter that controls whether Claude must, may, or must not call a tool. The three main modes are {type: "auto"} (the default when tools are provided; the model decides), {type: "any"} (the model must call some tool), and {type: "tool", name: "X"} (forced to a specific tool). A fourth, {type: "none"}, keeps tools defined but prevents any call. Together they span the range from probabilistic (the model decides) to deterministic (you decide) tool execution. When determinism is required, the wrong choice leaves a share of your output as unparseable free text (about 30% in the refund scenario used on this page).
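The request shapes are plain dicts, so they are easy to get subtly wrong (for example, a forced mode with no name). A minimal sketch, assuming a hypothetical helper `make_tool_choice` that is not part of the Anthropic SDK, which builds each shape and rejects malformed combinations:

```python
from typing import Optional


def make_tool_choice(mode: str, name: Optional[str] = None) -> dict:
    """Build a tool_choice dict for the Messages API (hypothetical helper)."""
    if mode in ("auto", "any", "none"):
        if name is not None:
            raise ValueError(f"mode {mode!r} does not take a tool name")
        return {"type": mode}
    if mode == "tool":
        if not name:
            raise ValueError("forced mode needs the tool's name")
        return {"type": "tool", "name": name}
    raise ValueError(f"unknown tool_choice mode: {mode!r}")


print(make_tool_choice("auto"))                     # {'type': 'auto'}
print(make_tool_choice("any"))                      # {'type': 'any'}
print(make_tool_choice("tool", "verify_customer"))  # {'type': 'tool', 'name': 'verify_customer'}
```

Validating the shape in one place keeps a typo like `{"type": "tool"}` without a name from surfacing later as an API error.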

Without an override, Claude reads the message history, evaluates the available tools, and decides whether a tool is needed at all. In refund flows this is dangerous: in the scenario used throughout this page, Claude asks clarifying questions instead of calling verify_customer about 30% of the time. With {type: "tool", name: "verify_customer"} that 30% becomes 0%. The tradeoff is inflexibility: forced tool_choice removes legitimate text-only responses. Use forced sparingly, only for mandatory architectural checkpoints.

The incompatibility with extended thinking is architectural, not cosmetic. When thinking: {type: "enabled"} is active, combining it with tool_choice "any" or a forced tool returns a 400 validation error. You must fall back to tool_choice: "auto" (or "none", which disables tool calls entirely). A request with thinking enabled therefore cannot guarantee a tool call: the model reasons, then decides whether to act. The fix is reframing: use extended thinking for analysis, not for deterministic control of execution.
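One way to apply that reframing is to split reasoning and action across two requests: an analysis turn with thinking on and tool_choice "auto", then an action turn with thinking off and the tool forced. The sketch below only builds the request parameters and makes no API call. The helper names, token budgets, and model string are assumptions. How turn 1's analysis is carried into turn 2's `messages` (for example, as text plus a new user message) is left to your harness.

```python
MODEL = "claude-opus-4-5"  # assumed model alias, as used elsewhere on this page


def analysis_request(tools: list, messages: list) -> dict:
    """Turn 1: extended thinking on, so tool_choice must stay 'auto'."""
    return {
        "model": MODEL,
        "max_tokens": 16000,
        "thinking": {"type": "enabled", "budget_tokens": 10000},
        "tools": tools,
        "tool_choice": {"type": "auto"},
        "messages": messages,
    }


def action_request(tools: list, messages: list, tool_name: str) -> dict:
    """Turn 2: no 'thinking' key, so the tool can be forced."""
    return {
        "model": MODEL,
        "max_tokens": 1024,
        "tools": tools,
        "tool_choice": {"type": "tool", "name": tool_name},
        "messages": messages,
    }
```

Each dict would be sent as `client.messages.create(**params)`. Two round trips cost more latency, but each request stays within the combination the API accepts.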

Production fails when tool_choice is mistaken for a safety valve instead of a contract enforcer. tool_choice: "any" does not make your loop robust: if tool descriptions are vague, the model still misroutes. It ensures a tool is called, not that the right tool is called. Unclear descriptions combined with a forcing tool_choice turn a possibly wrong decision into a guaranteed action, which may well be the wrong one. Fix descriptions first, then use tool_choice to enforce when, not what.
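Because "any" guarantees a call but not the right one, a harness can check which tool actually fired before executing it. A minimal sketch over the response's content blocks, represented here as plain dicts with the same `type`/`name`/`input` fields the SDK's block objects expose. `first_tool_call` and `is_misrouted` are hypothetical names, and the example block is made-up data:

```python
from typing import Optional


def first_tool_call(content: list) -> Optional[dict]:
    """Return the first tool_use block in an assistant message, if any."""
    for block in content:
        if block.get("type") == "tool_use":
            return block
    return None


def is_misrouted(content: list, expected: str) -> bool:
    """True when a tool was called, but not the one this step requires."""
    call = first_tool_call(content)
    return call is not None and call["name"] != expected


# With tool_choice 'any' and overlapping descriptions, this can happen:
reply = [{"type": "tool_use", "id": "toolu_1", "name": "lookup_order",
          "input": {"order_id": "A-1"}}]
print(is_misrouted(reply, expected="verify_customer"))  # True
```

The check only catches the symptom. Sharper descriptions (or forcing the tool at a mandatory gate) address the cause.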

How it works

When you call messages.create() with tool_choice, the request is validated before any generation happens. If thinking is enabled and tool_choice is "any" or a forced tool, the API returns a 400 error immediately and no model inference runs, so invalid combinations fail fast and cheaply. Valid combinations proceed to the model.
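A harness can mirror that rule client-side and fail before the network call, which gives a clearer error close to the code that built the request. A sketch, assuming request parameters are held in a plain dict; `check_thinking_compat` is hypothetical, and the API remains the authority. The check accepts "none" with thinking, as the API does.

```python
def check_thinking_compat(params: dict) -> None:
    """Raise early if extended thinking is combined with a forcing tool_choice."""
    thinking_on = params.get("thinking", {}).get("type") == "enabled"
    # A missing tool_choice behaves like 'auto' when tools are present
    choice = params.get("tool_choice", {"type": "auto"}).get("type")
    if thinking_on and choice in ("any", "tool"):
        raise ValueError(
            f"tool_choice {choice!r} is incompatible with extended thinking; "
            "use 'auto' or 'none'"
        )


# Passes silently: thinking + 'auto' is a valid combination
check_thinking_compat({"thinking": {"type": "enabled", "budget_tokens": 10000},
                       "tool_choice": {"type": "auto"}})
```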

During generation, the model sees the system prompt, the tool definitions, and the messages. With auto, Claude decides whether text is enough (stop_reason: "end_turn") or a tool is needed (stop_reason: "tool_use"). With any or a forced tool, the API prefills the assistant turn to force a tool_use block, so Claude does not emit explanatory text before the tool call, even if the prompt asks for it.

tool_choice does not introduce its own stop_reason: a forced tool call returns the ordinary stop_reason: "tool_use". The tool's name is guaranteed to be X. The input might be empty or malformed, but the structure is assured. This is why forced tool_choice is safe for mandatory architecture gates: it guarantees the attempt, not the validity. Argument validation is still your harness's job.
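Since the structure is guaranteed but the arguments are not, a harness can check the tool_use block against the tool's input_schema before running anything. This is a deliberately small sketch that checks only the name, required fields, and top-level primitive types. A real harness would more likely use a full JSON Schema validator, such as the `jsonschema` package. `validate_call` is a hypothetical helper.

```python
JSON_TYPES = {"string": str, "integer": int, "number": (int, float),
              "boolean": bool, "object": dict, "array": list}


def validate_call(block: dict, expected_name: str, schema: dict) -> list:
    """Return a list of problems with a tool_use block (empty list = OK)."""
    errors = []
    if block.get("name") != expected_name:
        errors.append(f"expected {expected_name}, got {block.get('name')}")
    args = block.get("input") or {}
    for field in schema.get("required", []):
        if field not in args:
            errors.append(f"missing required field: {field}")
    for field, spec in schema.get("properties", {}).items():
        if field not in args:
            continue
        value, json_type = args[field], spec.get("type")
        py_type = JSON_TYPES.get(json_type)
        # bool is a subclass of int in Python, so reject it for numeric fields
        bad_bool = isinstance(value, bool) and json_type in ("integer", "number")
        if py_type and (bad_bool or not isinstance(value, py_type)):
            errors.append(f"{field} should be {json_type}")
    return errors


schema = {"type": "object", "properties": {"email": {"type": "string"}},
          "required": ["email"]}
empty_call = {"type": "tool_use", "name": "verify_customer", "input": {}}
print(validate_call(empty_call, "verify_customer", schema))
# ['missing required field: email']
```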

Tool execution remains your responsibility. Even with tool_choice: "any", a tool call might have invalid input (missing required fields, wrong types). Your harness catches it, returns a structured error, and lets Claude retry. The difference is in branching: with auto, you code for text-or-tool branches and handle tool absence; with any, you code only for tool branches. Wrong inputs are handled identically in both.
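The branching difference and the error path can be sketched as one dispatch function. The sketch rests on three assumptions. First, the response is a plain dict with `stop_reason` and `content`; the SDK returns objects with the same fields. Second, `run_tool` is a hypothetical stand-in for your executor and validator. Third, invalid input is reported back as a `tool_result` block with `is_error: true` so Claude can see the problem and retry.

```python
from typing import Callable


def handle_response(resp: dict, run_tool: Callable[[dict], tuple]) -> dict:
    """Return the next user message (tool results) or a final-text marker.

    run_tool(block) -> (ok, text) is a hypothetical executor + validator.
    """
    if resp["stop_reason"] == "end_turn":
        # Expected only with tool_choice 'auto' (or 'none'): the text is the answer
        text = "".join(b["text"] for b in resp["content"] if b["type"] == "text")
        return {"final_text": text}
    if resp["stop_reason"] != "tool_use":
        raise RuntimeError(f"unhandled stop_reason: {resp['stop_reason']}")
    results = []
    for block in resp["content"]:
        if block["type"] != "tool_use":
            continue
        ok, text = run_tool(block)
        result = {"type": "tool_result", "tool_use_id": block["id"], "content": text}
        if not ok:
            result["is_error"] = True  # Claude sees the error and can retry
        results.append(result)
    return {"role": "user", "content": results}
```

With "any" or a forced tool, the `end_turn` branch should not normally trigger, but keeping it costs nothing and avoids a silent failure if the request settings change.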

Where you'll see it

Mandatory verification before refunds

The refund workflow forces verify_customer as the first call via tool_choice = {type:'tool', name:'verify_customer'}, which removes probabilistic skipping. Amount limits are enforced separately with PreToolUse policy hooks; prompt-only enforcement of the same policy is only about 70% reliable in production.
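The amount-limit check in that pattern has to run before the refund tool executes, because blocking a call means inspecting it first. A framework-neutral sketch in plain Python follows; it does not use the Claude Agent SDK's hook API. The `process_refund` tool, its `amount` field, the `customer_verified` state flag, and the 500 limit are illustrative assumptions.

```python
REFUND_LIMIT = 500.00  # illustrative policy limit


def pre_tool_use(tool_name: str, tool_input: dict, state: dict) -> tuple:
    """Return (allowed, reason) for a tool call before it executes."""
    if tool_name != "process_refund":
        return True, ""
    if not state.get("customer_verified"):
        return False, "verify_customer must succeed before any refund"
    amount = tool_input.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount <= 0:
        return False, "amount must be a positive number"
    if amount > REFUND_LIMIT:
        return False, f"amount {amount} exceeds the {REFUND_LIMIT} limit"
    return True, ""
```

When the gate denies a call, the reason can go back to Claude as an error `tool_result`, so the policy lives in code rather than in the prompt.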

Guaranteed structured extraction from unknown documents

Document type may be invoice / receipt / contract. Use tool_choice='any' with three extraction tools (one per type). Model picks the right one if descriptions are clear. 'auto' would return free text 30% of the time.
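A sketch of that setup as request parameters: three extraction tools whose descriptions each claim one document type, plus tool_choice "any". Tool names, descriptions, field lists, and the model string are illustrative assumptions. The dict would be sent with `client.messages.create(**params)`.

```python
def extraction_tool(doc_type: str, fields: list) -> dict:
    """One extraction tool per document type, with non-overlapping descriptions."""
    return {
        "name": f"extract_{doc_type}",
        "description": (f"Extract fields from a {doc_type}. Use ONLY when the "
                        f"document is a {doc_type}."),
        "input_schema": {
            "type": "object",
            "properties": {f: {"type": "string"} for f in fields},
            "required": list(fields),
        },
    }


def extraction_params(document_text: str) -> dict:
    tools = [
        extraction_tool("invoice", ["vendor", "invoice_number", "total"]),
        extraction_tool("receipt", ["merchant", "date", "total"]),
        extraction_tool("contract", ["parties", "effective_date"]),
    ]
    return {
        "model": "claude-opus-4-5",
        "max_tokens": 1024,
        "tools": tools,
        "tool_choice": {"type": "any"},  # must call one tool; the model picks which
        "messages": [{"role": "user", "content": document_text}],
    }
```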

Subagent must take action

The research subagent must call at least one tool (search / load / query). Use tool_choice='any' so text-only replies become impossible: the subagent always emits a tool call before returning.
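One caveat when wiring this into a loop: if every turn uses "any", the subagent can never finish with a text report, because each response must be a tool call. One possible arrangement, sketched here as an assumption rather than a prescribed pattern, forces a tool until the first call has happened and then returns to "auto". `subagent_tool_choice` is a hypothetical helper.

```python
def subagent_tool_choice(tool_calls_so_far: int) -> dict:
    """Force at least one tool call, then let the subagent decide when to stop."""
    if tool_calls_so_far == 0:
        return {"type": "any"}   # no text-only return before the first action
    return {"type": "auto"}      # free to keep calling tools or write its report
```

The loop passes the running count of executed tool calls into each request, so the "at least one action" guarantee holds without trapping the subagent in tool calls forever.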

Code examples

```python
from anthropic import Anthropic

client = Anthropic()

# Illustrative tool definitions (schemas are assumptions) so `tools` is defined
tools = [
    {"name": "verify_customer",
     "description": "Verify the customer's identity. Call before any refund action.",
     "input_schema": {"type": "object",
                      "properties": {"email": {"type": "string"}},
                      "required": ["email"]}},
    {"name": "lookup_order",
     "description": "Look up an order by its order ID.",
     "input_schema": {"type": "object",
                      "properties": {"order_id": {"type": "string"}},
                      "required": ["order_id"]}},
]
messages = [{"role": "user", "content": "Help me with a refund."}]

# Mode 1: 'auto' (default), model picks tool OR returns text
resp_auto = client.messages.create(
    model="claude-opus-4-5", max_tokens=512, tools=tools,
    tool_choice={"type": "auto"}, messages=messages,
)
# ~30% in this scenario: text only ("Sure, can you tell me your order number?")
# ~70%: tool_use block with verify_customer or lookup_order

# Mode 2: 'any', model MUST call a tool, picks which one
resp_any = client.messages.create(
    model="claude-opus-4-5", max_tokens=512, tools=tools,
    tool_choice={"type": "any"}, messages=messages,
)
# Always a tool_use block. Model picks (likely verify_customer first).

# Mode 3: forced, model MUST call this specific tool
resp_forced = client.messages.create(
    model="claude-opus-4-5", max_tokens=512, tools=tools,
    tool_choice={"type": "tool", "name": "verify_customer"}, messages=messages,
)
# Always a tool_use block calling verify_customer.
```

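```typescript
// Run as an ES module (uses top-level await)
import Anthropic from "@anthropic-ai/sdk";

const client = new Anthropic();

// Illustrative tool definition (schema is an assumption) so `tools` is defined
const tools: Anthropic.Tool[] = [
  {
    name: "verify_customer",
    description: "Verify the customer's identity. Call before any refund action.",
    input_schema: {
      type: "object",
      properties: { email: { type: "string" } },
      required: ["email"],
    },
  },
];

// ❌ This will fail: extended thinking + 'any' is rejected
try {
  await client.messages.create({
    model: "claude-opus-4-5",
    max_tokens: 16000,
    thinking: { type: "enabled", budget_tokens: 10000 },
    tools,
    tool_choice: { type: "any" }, // Validation error
    messages: [{ role: "user", content: "Analyze and act." }],
  });
} catch (e) {
  // 400: tool_choice 'any' or a specific tool is incompatible with thinking
  if (e instanceof Anthropic.APIError) console.error(e.status, e.message);
}

// ✓ Correct: extended thinking + 'auto' works
await client.messages.create({
  model: "claude-opus-4-5",
  max_tokens: 16000,
  thinking: { type: "enabled", budget_tokens: 10000 },
  tools,
  tool_choice: { type: "auto" }, // Compatible
  messages: [{ role: "user", content: "Analyze and act." }],
});
```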

Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

Use 'any' to guarantee deterministic output.

'any' forces a tool call but the model still picks which one. If two tool descriptions overlap, the wrong one can fire. To guarantee which tool is called, use forced ({type:'tool', name:X}).

Forced tool_choice removes the need for hooks.

Forced ensures the tool is called; it does NOT validate inputs. Add PreToolUse hooks for argument validation (e.g., refund amount within policy).

Use 'any' or forced when extended_thinking is on for safer behavior.

Both modes fail validation when thinking is enabled; only 'auto' (or 'none') is compatible. If you need both thinking and a guaranteed tool call, drop thinking for that turn or accept a text fallback.

Side-by-side

| Mode | Behavior | Use case | Extended thinking |
|---|---|---|---|
| auto (default) | Model picks: tool OR text | Open-ended assistance | Compatible |
| any | Must call a tool; model picks which | Subagent must act; structured-only flows | Incompatible |
| {type:'tool', name:X} | Must call this exact tool | Mandatory first steps (verify before refund) | Incompatible |
| none | Tools stay defined but cannot be called; model returns text | Rare: a text-only turn without removing tool definitions | Compatible |

Decision tree

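Is a SPECIFIC tool mandatory (verify-before-refund, identity gate)? Yes: forced, {type:'tool', name:X}. No: next question.

Must the model take SOME tool action (any tool)? Yes: 'any'. No: 'auto'.

Are you using extended_thinking? If so, only 'auto' (or 'none') is accepted. For a guaranteed call, drop thinking on that turn or accept a possible text reply.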
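The same tree can be written as a function. In this sketch the parameter names are hypothetical. It checks extended thinking first, because that constraint overrides the other two answers.

```python
from typing import Optional


def choose_tool_choice(mandatory_tool: Optional[str], must_act: bool,
                       thinking: bool) -> dict:
    """Walk the decision tree and return a tool_choice dict."""
    if thinking:
        # Only 'auto' (or 'none') is accepted with extended thinking, so a
        # guaranteed call needs a separate turn with thinking disabled.
        if mandatory_tool or must_act:
            raise ValueError("guaranteed tool call requested with thinking on: "
                             "drop thinking for this turn or accept 'auto'")
        return {"type": "auto"}
    if mandatory_tool:
        return {"type": "tool", "name": mandatory_tool}
    if must_act:
        return {"type": "any"}
    return {"type": "auto"}
```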

Question patterns

You enable extended_thinking and set `tool_choice: any`. The API returns a 400 error. Why?

Extended thinking is incompatible with any and forced tool_choice. With thinking enabled, use auto (or none): the model reasons, then decides whether to call a tool.

Your refund flow uses `tool_choice: any` to guarantee a tool call. The agent calls `lookup_order` instead of `verify_customer` first. Fix?

Force the specific tool ({type: "tool", name: "verify_customer"}). any lets the model pick; only forced guarantees a specific tool. Use forced for mandatory architecture gates.

The user asks a simple question and your forced `tool_choice` calls `process_refund` with empty input. What went wrong?

Forced tool_choice was applied to a turn that didn't need a tool, so the model had to call process_refund with nothing meaningful to pass. Use auto for general turns. Forced is for mandatory operations only.

Your support agent uses `tool_choice: auto`. 30% of the time it asks clarifying questions instead of calling tools. Is this a bug?

No, this is expected auto behavior. The model decides whether a tool is needed. If you need guaranteed tool calls, switch to any (model picks) or forced (you pick). Each has a tradeoff.

You set `tool_choice: any` but the agent calls a tool and immediately returns valid input. Then a clarifying tool... Why is it slower than expected?

Your forced `tool_choice` produces tool calls with hallucinated arguments. How do you guard against this?

Validate arguments in your harness (or a PreToolUse hook) and return an error tool_result if inputs are invalid. Claude then retries with corrected arguments. Forced tool_choice does not validate inputs.

Why is `tool_choice: any` rejected when extended_thinking is on, but allowed without it?

You want both deep reasoning and a guaranteed tool call. Is that possible?

Not in a single request. Use auto for the reasoning step, then a follow-up turn with thinking disabled and forced tool_choice if the model decides to act. Two turns, not one.