TLDR

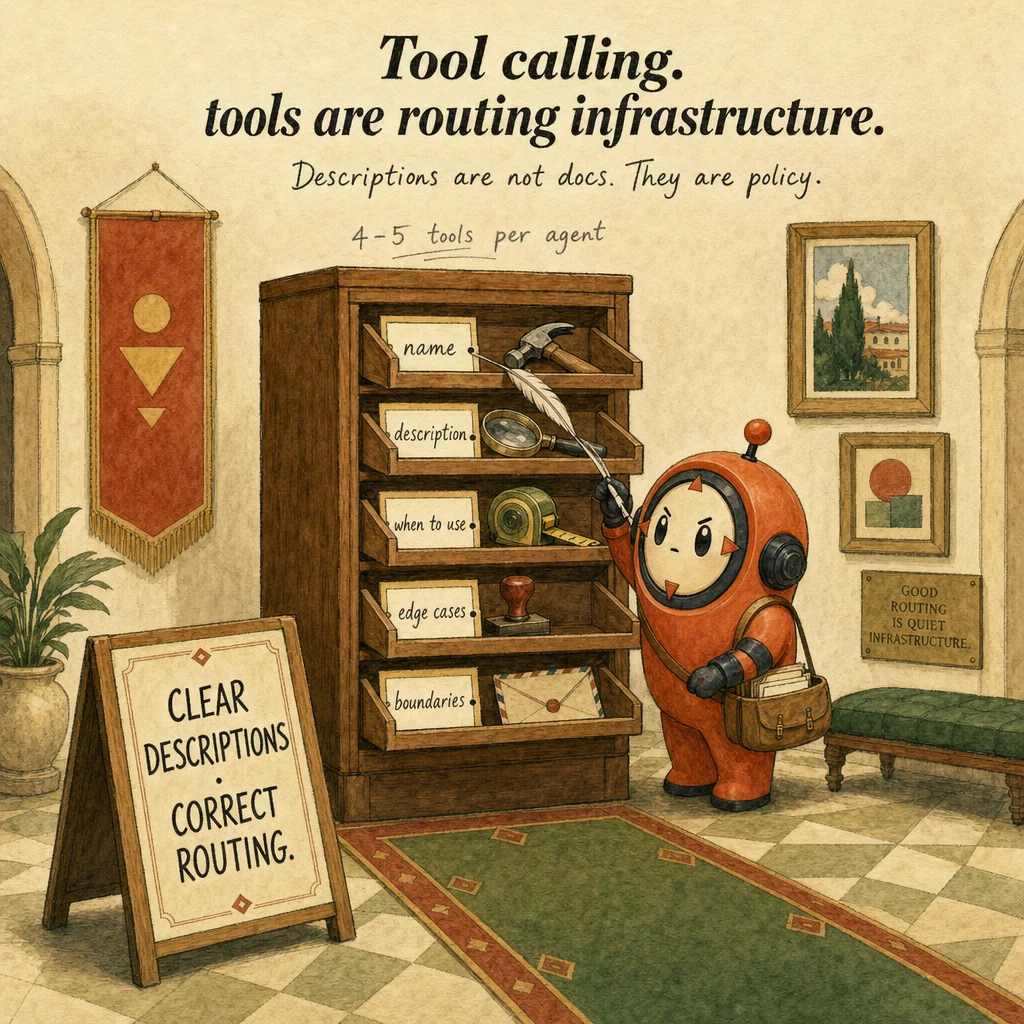

Tool calling is how Claude decides to invoke external functions and pass structured arguments. Tools are routing infrastructure: good descriptions reduce the need for classifiers or few-shot examples. The quality of tool design, not model size, is the primary lever for correct task routing.

What it is

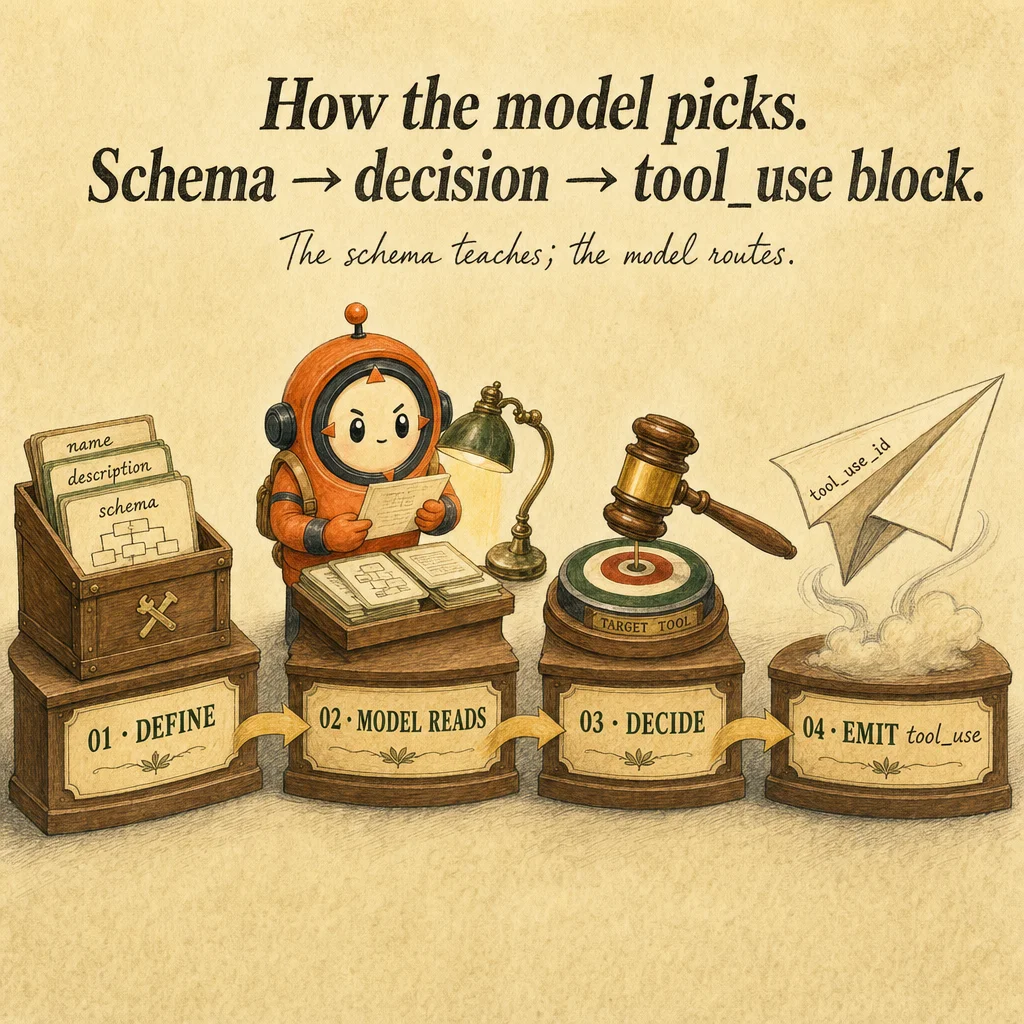

Tool calling is the mechanism that lets Claude request function execution. You define a tool schema (name, description, and a JSON input_schema), pass it to messages.create() with the tools parameter, and Claude decides whether to call a tool. If it does, a tool_use block lands in the response with the tool's name and input. Your harness executes the function, captures the result, and appends a tool_result block to the conversation. From Claude's perspective, tool calling is probabilistic negotiation: the model reads what's available and decides if any tool fits.
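A minimal sketch of the request side, assuming the Anthropic Python SDK's `client.messages.create()` call. The model ID is a placeholder and `build_request` is a hypothetical helper, not part of the SDK; the network call itself is left commented out so the snippet runs offline.

```python
# Tool schema: the name, description, and input_schema are all Claude sees.
VERIFY_CUSTOMER = {
    "name": "verify_customer",
    "description": "Verify customer identity against the auth service.",
    "input_schema": {
        "type": "object",
        "properties": {"customer_id": {"type": "string"}},
        "required": ["customer_id"],
    },
}

MODEL_ID = "your-model-id"  # placeholder: substitute a real model ID


def build_request(user_text: str) -> dict:
    """Assemble keyword arguments for client.messages.create() (hypothetical helper)."""
    return {
        "model": MODEL_ID,
        "max_tokens": 1024,
        "tools": [VERIFY_CUSTOMER],
        "messages": [{"role": "user", "content": user_text}],
    }


request = build_request("I need a refund for my last order.")
# With the SDK installed and an API key configured, the call would be:
#   from anthropic import Anthropic
#   resp = Anthropic().messages.create(**request)
#   tool_calls = [b for b in resp.content if b.type == "tool_use"]
```

Keeping the request assembly separate from the network call makes the tool list easy to inspect and test on its own.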

The description field is load-bearing. It is not documentation; it is a policy document Claude reads every turn to decide when and how to call the tool. Vague descriptions cause misrouting because Claude has to guess which tool is right. Strong descriptions have four parts: (1) what it does (one sentence), (2) when to use it (concrete trigger), (3) edge cases (what's excluded), and (4) boundaries (preconditions, ordering). A four-line description of this shape sharply reduces misrouting; bigger models do not fix vague descriptions.

The tool registry is your side of the contract. When Claude calls verify_customer, you look up the function in a registry, execute it, and catch all exceptions. Never let exceptions escape: Claude sees only what you put in tool_result.content. If you swallow errors silently, Claude retries the same broken call. If you return a structured error like {"error": "customer_not_found", "hint": "verify cus_ prefix"}, Claude can read it, adjust, and retry with a corrected call.

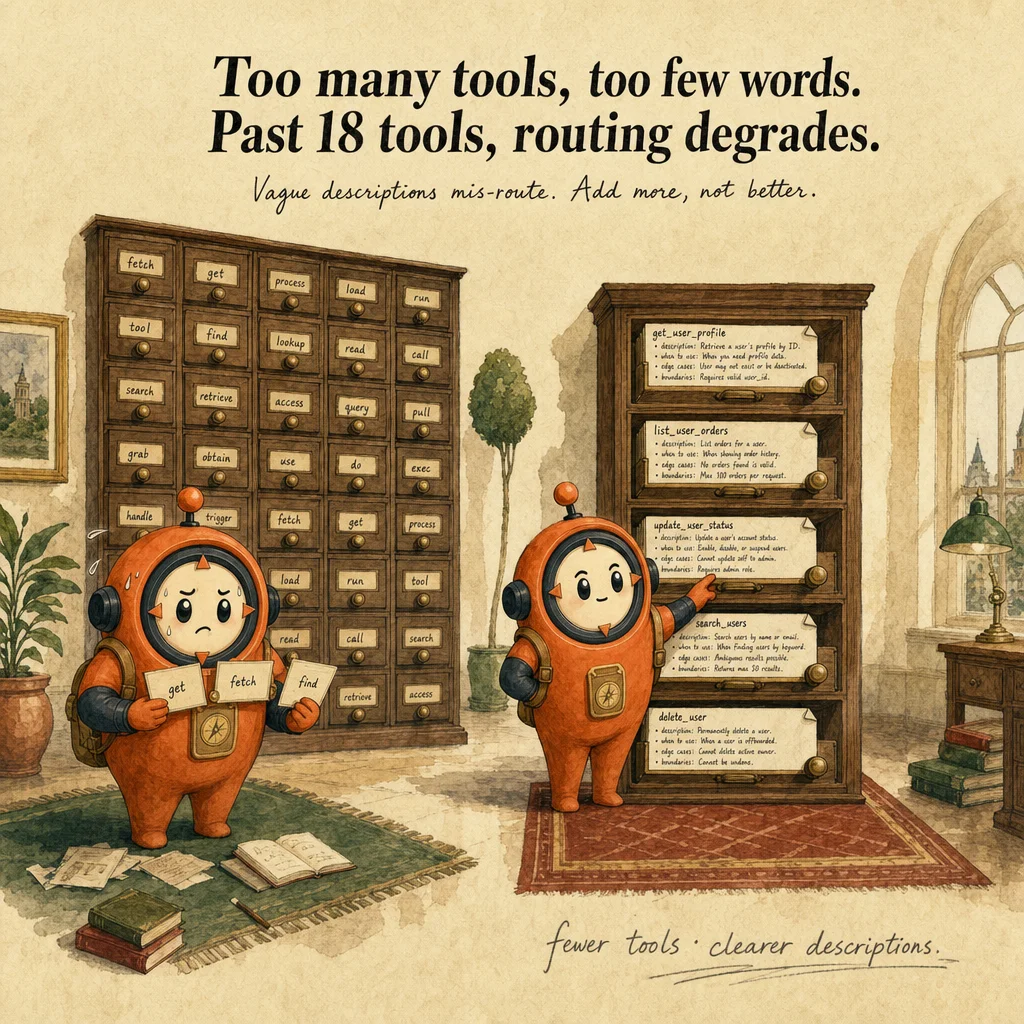

Production tool-calling fails in two predictable ways: description-shape misrouting (vague or overlapping descriptions) and tool proliferation (beyond ~18 tools, selection accuracy degrades sharply). The second is architectural: if you need 30 capabilities, split into specialized subagents. The exam drills both patterns. A common distractor: "upgrade the model to fix misrouting." The real fix is to rewrite the descriptions.
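One way to picture the subagent split is a per-role tool map with a guard on the tool count. This is a sketch only: the role names, `MAX_TOOLS_PER_AGENT`, and `tools_for_role` are illustrative assumptions, and the cap of 18 simply mirrors the approximate threshold quoted above.

```python
MAX_TOOLS_PER_AGENT = 18  # assumption: mirrors the ~18-tool threshold in the text

# Hypothetical roles; each subagent sees only its own small tool set.
TOOLS_BY_ROLE = {
    "identity": ["verify_customer"],
    "orders": ["lookup_order", "refund_policy"],
    "refunds": ["process_refund", "escalate"],
}


def tools_for_role(role: str) -> list[str]:
    """Return the tool names for one subagent, refusing oversized sets."""
    names = TOOLS_BY_ROLE[role]
    if len(names) > MAX_TOOLS_PER_AGENT:
        raise ValueError(f"{role} has {len(names)} tools; split it further")
    return names
```

A router (not shown) would pick the role first, then hand the conversation to an agent that only carries that role's tools.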

How it works

Tool definitions are structured metadata. Each tool has a name, description, and input_schema. The input_schema specifies field types, required fields, and field descriptions. Claude does not see the function body, only the schema. Precise schemas matter because they constrain what Claude can put in the input. A permissive schema like {data: any} forces the model to invent structure; a strict schema like {customer_id: string, method: enum} gives it a well-defined target.
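To make the contrast concrete, here is a small hand-rolled check of a tool input against a strict schema. It is a sketch covering only `required`, string types, and `enum`; `validate_input` is a hypothetical helper, and a production harness would more likely use a full JSON Schema validator.

```python
STRICT_SCHEMA = {
    "type": "object",
    "properties": {
        "customer_id": {"type": "string"},
        "method": {"type": "string", "enum": ["email_otp", "sms_otp"]},
    },
    "required": ["customer_id", "method"],
}


def validate_input(schema: dict, data: dict) -> list[str]:
    """Return a list of problems; an empty list means the input passed."""
    problems = []
    for field in schema.get("required", []):
        if field not in data:
            problems.append(f"missing required field: {field}")
    for field, value in data.items():
        spec = schema["properties"].get(field)
        if spec is None:
            problems.append(f"unexpected field: {field}")
            continue
        if spec.get("type") == "string" and not isinstance(value, str):
            problems.append(f"{field} must be a string")
        if "enum" in spec and value not in spec["enum"]:
            problems.append(f"{field} must be one of {spec['enum']}")
    return problems
```

With a schema like {data: any} there is nothing for a check like this to enforce, which is the practical cost of a permissive schema.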

When Claude decides to call a tool, the response includes a tool_use block: {type: "tool_use", id: "tooluse_xyz", name: "verify_customer", input: {customer_id: "cus_123", method: "email_otp"}}. Your harness looks up the tool by name, validates the input, executes the function, and appends a tool_result block: {type: "tool_result", tool_use_id: "tooluse_xyz", content: "{...result...}"}. The tool_use_id field matches the id of the tool_use block, so Claude knows which call produced which result.

The execution harness is critical. Wrap every tool call in try/except. If the tool throws, return a structured error string. Claude reads errors and adjusts, so your harness's job is to surface them, not hide them. Structured errors look like {error: "out_of_stock", detail: "qty exceeds available", retry_hint: "try smaller quantity"}. Claude responds to hints; opaque errors break the loop.
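The structured-error shape above can be sketched with a small exception type that carries its own hint. `ToolError`, `reserve_stock`, and `run_tool` are hypothetical names for illustration; the point is only that every failure path ends in a JSON string Claude can read.

```python
import json


class ToolError(Exception):
    """Raised by a tool to report a recoverable failure (hypothetical type)."""

    def __init__(self, code: str, detail: str, retry_hint: str):
        super().__init__(detail)
        self.code = code
        self.detail = detail
        self.retry_hint = retry_hint


def reserve_stock(args: dict) -> dict:
    """Hypothetical tool: only 3 units are available."""
    if args["qty"] > 3:
        raise ToolError("out_of_stock", "qty exceeds available", "try smaller quantity")
    return {"reserved": args["qty"]}


def run_tool(fn, args: dict) -> str:
    """Run a tool and always return a JSON string; never raise."""
    try:
        return json.dumps(fn(args))
    except ToolError as e:
        return json.dumps({"error": e.code, "detail": e.detail, "retry_hint": e.retry_hint})
    except Exception as e:  # unexpected failure: still surface it
        return json.dumps({"error": "tool_failure", "detail": str(e)})
```

Tools raise `ToolError` when they know how the caller could recover; anything else falls through to the generic branch, so the model still sees that something went wrong.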

Multiple tool_use blocks in a single response are independent. Claude might call verify_customer and lookup_order in the same turn. Your harness executes both, builds a tool_results array, and appends them as a single user-role message with all results. Don't append one at a time; batch them in a single {role: "user", content: [tool_result, tool_result, ...]} block. This is a frequent exam distractor.
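The batching rule can be sketched with plain dictionaries standing in for the SDK's content blocks. `build_tool_result_message` and the stub `execute` function are assumptions for illustration; with the SDK you would read `block.id`, `block.name`, and `block.input` as attributes instead.

```python
import json


def build_tool_result_message(content_blocks: list, execute) -> dict:
    """Run every tool_use block and return ONE user message holding all results."""
    results = []
    for block in content_blocks:
        if block["type"] != "tool_use":
            continue  # skip text blocks
        output = execute(block["name"], block["input"])
        results.append({
            "type": "tool_result",
            "tool_use_id": block["id"],  # links this result to its call
            "content": output,
        })
    return {"role": "user", "content": results}


# Stand-in for an assistant turn that requested two tools at once.
assistant_content = [
    {"type": "text", "text": "Checking both."},
    {"type": "tool_use", "id": "tooluse_a", "name": "verify_customer",
     "input": {"customer_id": "cus_123", "method": "email_otp"}},
    {"type": "tool_use", "id": "tooluse_b", "name": "lookup_order",
     "input": {"order_id": "ord_9"}},
]

message = build_tool_result_message(
    assistant_content,
    execute=lambda name, args: json.dumps({"tool": name, "ok": True}),
)
```

Both results travel in one user message, each tagged with the id of the call it answers.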

Where you'll see it

Customer support routing

The agent has five tools: verify_customer, lookup_order, refund_policy, process_refund, escalate. Each tool's description spells out when to use it and its edge cases. Misrouting drops from 30% to 4% when the descriptions become precise rather than vague.

Multi-source synthesis

A research agent has search_papers, search_blogs, query_database, and web_fetch. The tool descriptions disambiguate them (e.g., 'search_papers: peer-reviewed only; use when citation discipline matters'). Without the disambiguation, the agent calls web_fetch instead of search_papers and pollutes the results.

Sequential code review passes

Three sequential tool sets: pass 1 (security_scan), pass 2 (style_check), pass 3 (perf_check). Each pass dedicates the model's full attention to one concern. A single mega-tool with 20+ params dilutes attention and misses edge cases.
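A sketch of the three-pass layout, assuming a hypothetical `run_pass` callable that wraps one model call restricted to the given tools. The pass and tool names come from the text; everything else is illustrative.

```python
# Each pass exposes exactly one tool, so the model attends to one concern.
REVIEW_PASSES = [
    ("security", ["security_scan"]),
    ("style", ["style_check"]),
    ("performance", ["perf_check"]),
]


def review(diff: str, run_pass) -> dict:
    """Run the passes in order; run_pass(diff, tool_names) returns a list of findings."""
    findings = {}
    for concern, tool_names in REVIEW_PASSES:
        findings[concern] = run_pass(diff, tool_names)
    return findings


# Usage with a stub in place of a real model call.
report = review("def f(): pass", run_pass=lambda diff, tools: [f"ran {tools[0]}"])
```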

Code examples

```python
# Anatomy of a good tool description:
# 1. What it does (one verb-led sentence)
# 2. When to use (concrete trigger conditions)
# 3. Edge cases (what's excluded; what's risky)
# 4. Boundaries (preconditions; ordering with other tools)
verify_customer = {
    "name": "verify_customer",
    "description": (
        # 1. What it does
        "Verify customer identity against the auth service. "
        # 2. When to use
        "Call FIRST in any refund or account-modification workflow. "
        # 3. Edge cases
        "Returns is_verified=false for closed accounts; do not proceed. "
        # 4. Boundaries
        "Must be called before lookup_order or process_refund."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "customer_id": {
                "type": "string",
                "description": "Stripe customer ID (cus_xxx). Not email.",
            },
            "method": {
                "type": "string",
                "enum": ["email_otp", "sms_otp", "security_question"],
            },
        },
        "required": ["customer_id", "method"],
    },
}

# Anti-pattern (vague):
verify_customer_bad = {
    "name": "verify_customer",
    "description": "Verifies a customer.",  # Too vague, model guesses
    "input_schema": {
        "type": "object",
        "properties": {
            "customer": {"type": "string"},  # email? id? phone?
        },
    },
}
```

```python
import json

from anthropic import Anthropic

client = Anthropic()

# Stub implementations keyed by tool name; each takes the tool's input dict.
TOOL_REGISTRY = {
    "verify_customer": lambda i: {"is_verified": True, "tier": "gold"},
    "lookup_order": lambda i: {"order_id": i["order_id"], "amount": 247.83},
    "process_refund": lambda i: (
        {"status": "blocked", "reason": "exceeds_500"} if i["amount"] > 500
        else {"status": "ok", "refund_id": "R-789"}
    ),
}


def execute_tool(name: str, input_dict: dict) -> str:
    """Run a registered tool and always return a JSON string for tool_result."""
    if name not in TOOL_REGISTRY:
        return json.dumps({"error": "unknown_tool"})
    try:
        result = TOOL_REGISTRY[name](input_dict)
        return json.dumps(result)
    except KeyError as e:
        # The tool looked up an input field Claude did not supply.
        return json.dumps({"error": f"missing_field: {e}"})
    except Exception as e:
        # Never let an exception escape; surface it to Claude instead.
        return json.dumps({"error": "tool_failure", "detail": str(e)})


# In your loop:
# tool_results = []
# for block in resp.content:
#     if block.type == "tool_use":
#         result = execute_tool(block.name, block.input)
#         tool_results.append({"type": "tool_result",
#                              "tool_use_id": block.id, "content": result})
```

Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

| Looks right | What actually happens |
|---|---|
| Misrouting is fixed by upgrading the model. | Bigger models route slightly better but cannot recover from vague descriptions. Fix the description anatomy first (what / when / edge cases / boundaries); it is almost always the root cause. |
| Add 25 tools so the agent can handle every edge case. | Beyond ~18 tools, selection accuracy degrades sharply. Reduce to 4-5 core tools per agent role. If you need more capabilities, split into specialized subagents. |
| Use a permissive schema like `data: any` to accept any extraction shape. | Permissive schemas force the model to guess structure, so it returns null or fabricated values. Specify exact field types so the model has a well-defined target. |

Side-by-side

| Aspect | Tool calling | Structured outputs | MCP server |
|---|---|---|---|
| What it is | Mechanism (Claude calls funcs) | Pattern (force a tool with JSON schema) | Infrastructure (pre-built tool sets) |
| You write | Tool definitions per agent | Schema in tool definition + tool_choice forced | .mcp.json config; server is pre-built |
| Best for | Custom logic per agent | Reliable extraction | Standard integrations (GitHub, Slack) |
| Failure mode | Vague descriptions cause misrouting | Permissive schema causes fabrication | Stale server config; auth misalignment |
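The structured-outputs column can be sketched as request arguments that force one specific tool. The `tool_choice` shape follows the Anthropic Messages API; the `record_invoice` tool, its fields, and the model ID placeholder are assumptions made up for this example.

```python
RECORD_INVOICE = {
    "name": "record_invoice",  # hypothetical extraction tool
    "description": "Record the fields extracted from one invoice.",
    "input_schema": {
        "type": "object",
        "properties": {
            "invoice_number": {"type": "string"},
            "total": {"type": "number"},
            "currency": {"type": "string", "enum": ["USD", "EUR", "GBP"]},
        },
        "required": ["invoice_number", "total", "currency"],
    },
}

extraction_request = {
    "model": "your-model-id",  # placeholder: substitute a real model ID
    "max_tokens": 512,
    "tools": [RECORD_INVOICE],
    # Force this exact tool; {"type": "any"} would only force *some* tool.
    "tool_choice": {"type": "tool", "name": "record_invoice"},
    "messages": [{"role": "user", "content": "Invoice INV-7: total 120.50 EUR"}],
}
```

The extracted values would then arrive as the `input` of the resulting tool_use block, shaped by the strict schema.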

Decision tree

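Is the integration with a known service (GitHub, Slack, Postgres)? If yes, prefer a pre-built MCP server over custom tool definitions.

Does the agent have more than ~18 tools? If yes, split the capabilities across specialized subagents.

Is misrouting happening? If yes, rewrite the tool descriptions before considering a larger model.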

Question patterns

Your agent calls the wrong tool 30% of the time across 8 similar tools. What's the first fix?

You add 25 tools and selection accuracy drops sharply. Why?

A tool's `input_schema` is `{data: any}` and Claude returns null. What happened?

Your tool throws an exception. The agent retries the same call with the same input. Why?

The harness swallowed the exception, so nothing useful reached `tool_result`. Claude saw no error and re-requested. Return {error: "reason", hint: "how to fix"} so Claude can recover.

Two tools have similar names: `fetch_user` and `get_user`. The agent alternates between them. Fix?

Disambiguate the descriptions: "fetch_user: use only when you have an email. get_user: use when you have a numeric ID." Or merge them into one tool with an enum input.

You force `tool_choice: any` to guarantee structured output. The agent still returns empty results. Why?

`tool_choice: any` forces a tool call, but the model still picks which one and might pick wrong if descriptions overlap. Either force a specific tool or fix description disambiguation.

Your tool returns 50KB of JSON per call. The loop hits `max_tokens` after 3 iterations. Architectural fix?

Add a `fields` parameter to the tool's input schema so Claude can request specific fields. Or use a resource (read-only catalog) for catalog data.

A tool needs the customer ID but Claude calls it without one. The harness errors out. What should the schema enforce?

Mark `customer_id` as required in `input_schema`. Claude won't call without it. Combine with a clear description: "call only after verify_customer returns a customer_id."
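The `fields` idea from the 50KB question can be sketched as a projection step inside the tool. `get_customer_record`, the sample record, and the field names are hypothetical.

```python
import json

# Hypothetical full record; imagine many more keys in practice.
_RECORD = {
    "customer_id": "cus_123",
    "email": "a@example.com",
    "tier": "gold",
    "order_history": ["ord_1", "ord_2", "ord_3"],
}

GET_CUSTOMER_SCHEMA = {
    "type": "object",
    "properties": {
        "customer_id": {"type": "string"},
        "fields": {
            "type": "array",
            "items": {"type": "string", "enum": sorted(_RECORD)},
            "description": "Only these fields are returned.",
        },
    },
    "required": ["customer_id", "fields"],
}


def get_customer_record(args: dict) -> str:
    """Return only the requested fields so the result stays small."""
    wanted = args["fields"]
    return json.dumps({k: _RECORD[k] for k in wanted if k in _RECORD})
```

Because the schema lists the allowed field names as an enum, Claude can see what is available and ask for only what the current step needs.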