Quick answer

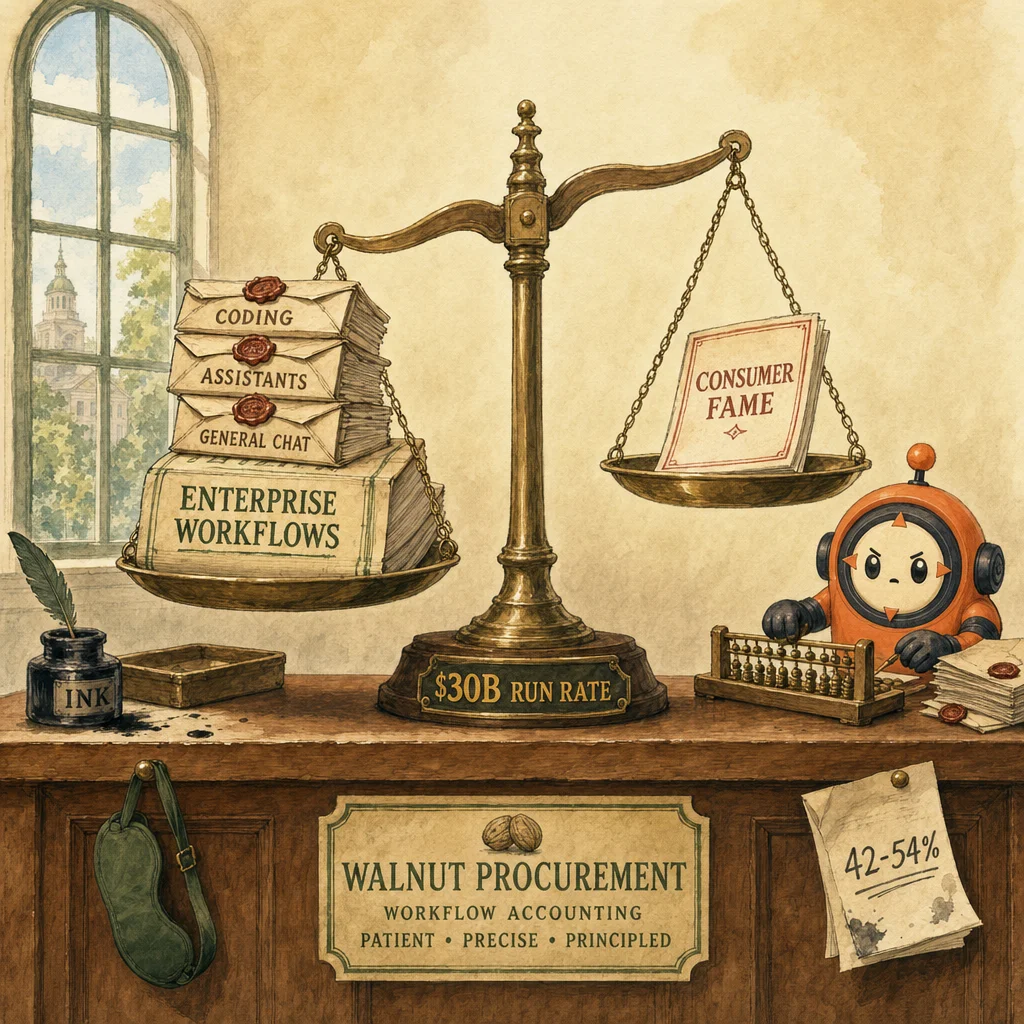

Workload-native procurement is the new pattern: score coding, assistants, and general chat as separate workloads, each on its own benchmarks. Claude's roughly 4.5% consumer share, set against a 29% enterprise assistant share and 42-54% of enterprise coding spend, shows that consumer fame is the wrong proxy for enterprise fit. Anthropic's reported $30B run rate tells buyers the market rewards narrower, higher-value workloads. The architectural lesson is the org-level expression of Delegation: pick the right model per task, not one model for everything.

The headline number, decoded

Anthropic at $30B annualized revenue just changed enterprise AI buying.

Not because $30B is intrinsically interesting — it's because of what produced it. The buyers aren't picking Claude because of brand or consumer enthusiasm. They're picking it for specific workloads, scored on specific evals, against specific governance and cost constraints. The aggregate revenue is the trailing indicator of a procurement-pattern shift.

The pattern: workload-native procurement

AI-forward teams no longer buy one model for everything. They practice what can be called workload-native procurement.

The challenge is simple. Yesterday's default was "ChatGPT first, exceptions later." That playbook doesn't hold when ChatGPT's share has slipped from roughly 87% to 60-68% while Gemini and Claude keep taking ground (Frontier News, May 07).

Leading teams approach this in four moves.

1. They don't standardize too early

Coding, internal assistants, and general chat get scored as separate workloads, each with its own benchmarks, cost envelope, and governance checklist. Standardization across workloads is treated as a deliberate trade-off, not the default.

2. They don't confuse consumer fame with enterprise fit

MindStudio's data is the cleanest illustration: Claude has about 4.5% consumer share, yet 29% enterprise assistant share and 42-54% enterprise coding spend. Consumer share measures chat preference and downloads. Enterprise share measures workload economics. They are different products.

3. They don't treat revenue as the only signal

Anthropic reportedly crossed a $30 billion run rate while growing faster than OpenAI. That tells buyers the market is rewarding narrower, higher-value use cases — Anthropic isn't winning more workloads; it's winning the workloads where the per-task economics matter most.

4. They don't ignore capital markets

When investors want Anthropic shares at a premium on secondary markets while OpenAI trades at a discount, vendor durability stops being finance gossip and becomes procurement data. Long-term roadmap velocity priced by people with skin in the game is a real signal about which vendor will still be best on your workload in 18 months.

The outcome

Fewer single-vendor rollouts. More quarterly model reviews. Budgets shifting to the model that wins a specific workflow.

But here's the part many teams still miss: enterprise AI now looks less like SaaS standardization and more like cloud workload placement. The best model isn't the loudest one. It's the one that clears governance, cost, and task accuracy at the same time — and that model is workload-specific.
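One way to make "clears governance, cost, and task accuracy at the same time" concrete is a per-workload gate check. The sketch below is illustrative only: the `Gate` and `Candidate` types, the model names, and every threshold and score are hypothetical placeholders, not figures from any vendor or benchmark.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Gate:
    """Bars one workload sets for any model (hypothetical values)."""
    min_accuracy: float       # minimum pass rate on this workload's own eval, 0..1
    max_cost_per_task: float  # cost envelope, in dollars per task


@dataclass(frozen=True)
class Candidate:
    """One model's measured results on one workload (made-up numbers)."""
    model: str
    accuracy: float
    cost_per_task: float
    governance_ok: bool       # passed the workload's governance checklist


def clears(c: Candidate, gate: Gate) -> bool:
    """A model qualifies only if it clears all three gates at once."""
    return (
        c.governance_ok
        and c.accuracy >= gate.min_accuracy
        and c.cost_per_task <= gate.max_cost_per_task
    )


def pick(candidates: Sequence[Candidate], gate: Gate) -> Optional[Candidate]:
    """Return the most accurate qualifying model, preferring the cheaper one on ties."""
    eligible = [c for c in candidates if clears(c, gate)]
    if not eligible:
        return None
    return max(eligible, key=lambda c: (c.accuracy, -c.cost_per_task))


coding_gate = Gate(min_accuracy=0.75, max_cost_per_task=0.10)
coding_candidates = [
    Candidate("model-a", accuracy=0.78, cost_per_task=0.08, governance_ok=True),
    Candidate("model-b", accuracy=0.82, cost_per_task=0.15, governance_ok=True),   # over budget
    Candidate("model-c", accuracy=0.90, cost_per_task=0.05, governance_ok=False),  # fails governance
]

winner = pick(coding_candidates, coding_gate)
print(winner.model if winner else "no model clears the gates")  # prints "model-a"
```

In this made-up data the most accurate model fails governance and the runner-up is over budget, so the workload goes to the model that clears all three gates. Each workload would carry its own `Gate` and its own candidate list.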

Two lessons worth stealing

- Track enterprise spend share separately from consumer usage. On the figures quoted above, Claude's enterprise share runs roughly 6x to 12x its consumer share, depending on the workload.

- Score vendors by workflow economics, not chatbot fame. A 78% pass rate on your coding eval beats a 95% sentiment score on consumer chat — every time.
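The size of the divergence is easy to check from the figures already quoted (4.5% consumer share, 29% enterprise assistant share, 42-54% of enterprise coding spend). A minimal sketch of the arithmetic, with variable names chosen here for illustration:

```python
# Share figures quoted earlier in this post, in percent.
consumer_share = 4.5
enterprise_shares = {
    "enterprise assistant": 29.0,
    "enterprise coding (low end)": 42.0,
    "enterprise coding (high end)": 54.0,
}

# Ratio of each enterprise figure to the consumer figure, rounded to one decimal.
ratios = {
    name: round(share / consumer_share, 1)
    for name, share in enterprise_shares.items()
}

for name, ratio in ratios.items():
    print(f"{name}: {ratio}x consumer share")
# enterprise assistant: 6.4x consumer share
# enterprise coding (low end): 9.3x consumer share
# enterprise coding (high end): 12.0x consumer share
```

The ratios compare shares measured on different bases, so they are a rough signal of divergence, not a like-for-like market comparison.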

How this shows up on the exam

The CCA-F probes the workload-native pattern primarily through D1 (Agentic Architecture) and D4 (Prompt Engineering).

D1 distractor: a question describes a multi-workload deployment and asks "which model do we standardize on?" The trap is to pick the popular answer. The architecturally correct answer is per-workload routing with explicit eval gates per route: different models can serve different agents within the same system, with the orchestrator routing by task type. This is the org-level expression of Delegation from the 4D framework.
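Per-workload routing with an eval gate on each route can be sketched as a small lookup that refuses to dispatch when a route's model has slipped below its bar. This is a minimal illustration, not a reference orchestrator: `Route`, `EvalGateError`, the task types, the model names, and the pass rates are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Route:
    model: str                 # hypothetical model identifier
    min_pass_rate: float       # eval gate for this route, 0..1
    measured_pass_rate: float  # latest result on this workload's eval (made up)


class EvalGateError(RuntimeError):
    """Raised when a route's model no longer clears its own eval gate."""


def route(task_type: str, routes: Dict[str, Route]) -> str:
    """Return the model for a task type, refusing routes that fail their gate."""
    if task_type not in routes:
        raise KeyError(f"no route defined for task type {task_type!r}")
    r = routes[task_type]
    if r.measured_pass_rate < r.min_pass_rate:
        raise EvalGateError(
            f"{r.model} is below the gate for {task_type!r}; re-evaluate before routing"
        )
    return r.model


ROUTES = {
    "coding": Route("model-a", min_pass_rate=0.75, measured_pass_rate=0.78),
    "support_assistant": Route("model-b", min_pass_rate=0.90, measured_pass_rate=0.93),
    "general_chat": Route("model-c", min_pass_rate=0.80, measured_pass_rate=0.72),  # failing its gate
}

print(route("coding", ROUTES))  # prints "model-a"
```

Failing loudly on a missed gate is a design choice made for this sketch; a real orchestrator might fall back to a second-choice model instead.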

D4 distractor: questions about prompt-library consistency. The trap is "one prompt library across all teams." The correct answer is workload-scoped prompt libraries tied to the model that wins that workload's eval. The reason: prompts optimized for one model rarely transfer cleanly to another, and standardization at the prompt level locks you out of switching models per workload — which is the whole point of workload-native procurement.
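A workload-scoped prompt library can be as simple as keying prompts by workload and model together, so that switching models forces a deliberate re-tune instead of a silent reuse. The sketch below assumes that shape; the keys, model names, and prompt texts are invented for illustration.

```python
from typing import Dict, Tuple

PromptKey = Tuple[str, str]  # (workload, model); both names are hypothetical

PROMPT_LIBRARY: Dict[PromptKey, str] = {
    ("coding", "model-a"): "Review the diff and list concrete bugs before style notes.",
    ("support_assistant", "model-b"): "Answer from the provided policy text and cite the section used.",
}


def get_prompt(workload: str, model: str,
               library: Dict[PromptKey, str] = PROMPT_LIBRARY) -> str:
    """Return the prompt tuned for this workload on this model, or fail clearly."""
    key = (workload, model)
    if key not in library:
        # No cross-model fallback: a prompt tuned for one model is not assumed to transfer.
        raise LookupError(f"no prompt tuned for {workload!r} on {model!r}")
    return library[key]


print(get_prompt("coding", "model-a"))
```

Asking for the coding prompt on `model-b` raises `LookupError`, which makes the missing tuning work visible when a workload moves to a different model.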

What's the counterargument to workload-native procurement in your stack?

The honest counterargument is operational overhead: more contracts, more SDKs, more failure modes to internalize. For small teams, single-vendor standardization may still be the right call — the workload-economics gain has to exceed the integration cost. The threshold is usually somewhere around 10 engineers and 3 distinct production workloads. Below that, standardize. Above it, score per workload.
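The rule of thumb above can be written as a small decision helper. This is a sketch under stated assumptions: it treats "around 10 engineers and 3 workloads" as both thresholds needing to be met, and it expects the caller to supply their own estimates of yearly gain and integration cost. The function name, parameters, and example numbers are hypothetical.

```python
def recommend(engineers: int, workloads: int,
              yearly_gain: float, yearly_integration_cost: float,
              min_engineers: int = 10, min_workloads: int = 3) -> str:
    """Suggest a procurement stance from team size, workload count, and economics."""
    # Assumption: both size thresholds must be met before per-workload scoring pays off.
    big_enough = engineers >= min_engineers and workloads >= min_workloads
    # The workload-economics gain has to exceed the integration cost.
    worth_it = yearly_gain > yearly_integration_cost
    return "score per workload" if big_enough and worth_it else "standardize"


print(recommend(engineers=4, workloads=2, yearly_gain=50_000, yearly_integration_cost=20_000))
# prints "standardize"
print(recommend(engineers=25, workloads=4, yearly_gain=300_000, yearly_integration_cost=120_000))
# prints "score per workload"
```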

Where this lands in the exam-prep map

Each blog post bridges into the evergreen pillars. These are the most relevant follow-ups for this story.

Concept

Evaluation

Workload-native procurement is evaluation discipline at the org level — scoring per workload, not aggregate vibes.

Scenario

Code generation with Claude Code

The 42-54% coding spend signal points to the scenario architecture your team is most likely to be tested on.

Scenario

Customer support resolution

29% enterprise assistant share lands in this scenario family — Claude wins customer-facing assistant workloads disproportionately.

Concept

4D framework

Workload-native procurement is the org-level expression of Delegation — choosing the right model per task rather than standardizing one model for everything.

6 questions answered

What is 'workload-native procurement'?

Why does Claude's 4.5% consumer share matter so little?

What does the $30B run rate actually tell buyers?

Should I switch off ChatGPT for my own workflow?

How does workload-native procurement map to the CCA-F?

What's the counterargument to workload-native procurement?

Synthesized from research output on 2026-05-12.

Last reviewed 2026-05-12.