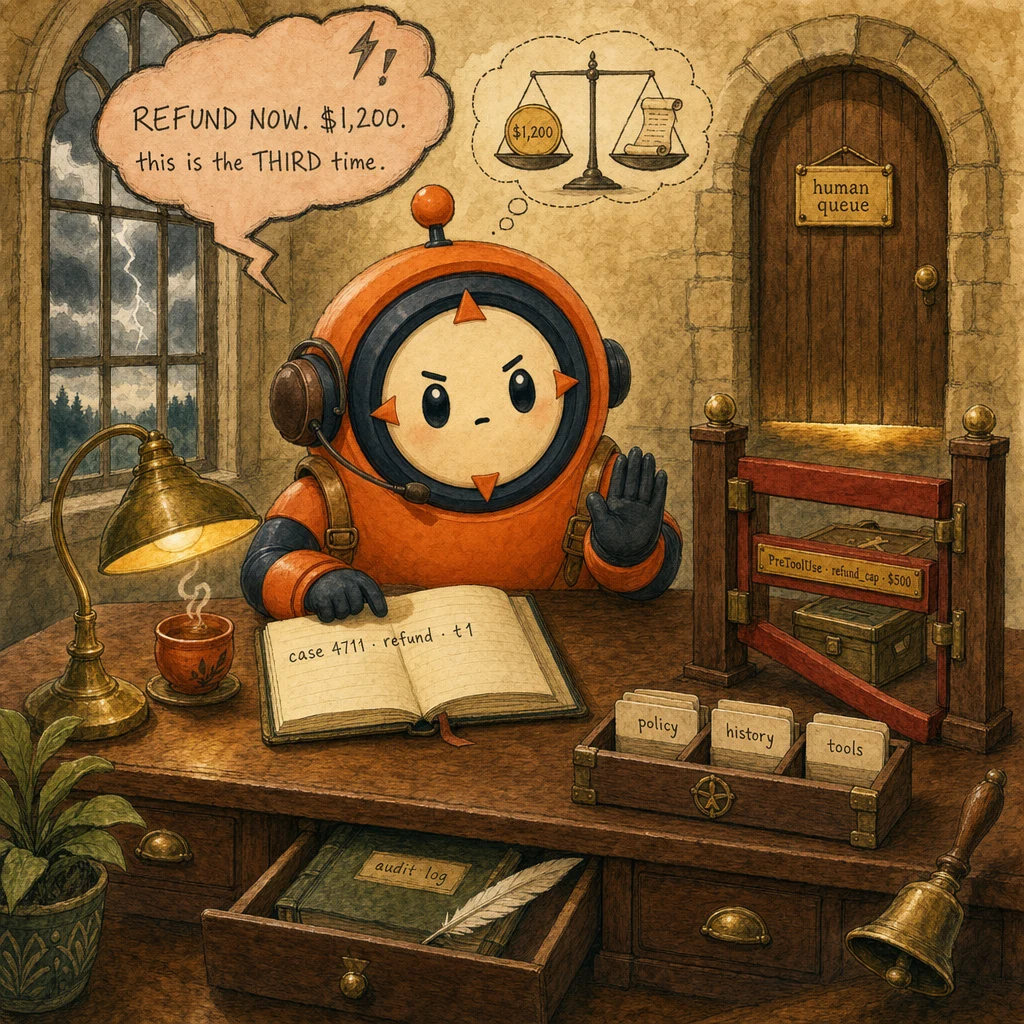

The problem

What the customer needs

- Resolve the request in one turn without multiple agent transfers.

- Get identity verified before any account-modifying action.

- See a clear path to a human when the agent can't help.

Why naive approaches fail

- Single-agent chatbots forget the customer ID by turn 8 (no case-facts pinning).

- Prompt-only refund-cap policy leaks 3% of refunds above the limit (no deterministic hook).

- Sentiment-triggered escalation creates false positives: angry users with valid policy denials get escalated unnecessarily.

Success criteria

- p95 resolution latency < 12 seconds end-to-end

- Refund-cap violations = 0 (hook-enforced, not prompt-enforced)

- Audit log entry per ticket with case-facts snapshot

- CSAT ≥ 4.2/5 across resolved tickets

The system

What each part does

Five components, each owning one concept.

Tool Registry

verify · lookup · process · escalate

Holds the 4-5 tools the specialist agent can call. Each tool has a clear description and JSON schema. Tool count stays low to keep routing accurate.

Configuration

tools: [verify_customer, lookup_order, process_refund, escalate_to_human]. tool_choice: auto. Each description is 4 lines: what it does, when to use, edge cases, ordering with peers.

PreToolUse Hook

policy gate · deterministic

Sits between Claude's tool_use request and actual tool execution. Enforces refund caps, escalation triggers, and time-of-day limits. Exits 2 (deny) on violation.

Configuration

Hook fires before process_refund. Reads tool_input.amount, compares to policy.refund_cap. Exit 2 with stderr message routes Claude to retry with adjusted args or escalate.

Case-Facts Block

pinned customer state

Pinned at the top of every system-prompt iteration. Holds customer_id, order_id, refund_amount, policy_limit. Survives summarization. Re-read every turn.

Configuration

system: f"CASE_FACTS: {customer_id} · {order_id} · ${amount} · cap=${cap}". Updated by hooks after state-changing tool calls.

Specialist Agent

the agentic loop

Runs the messages.create() loop. Reads stop_reason after every response: end_turn → exit, tool_use → execute + append result + continue, max_tokens → save partial.

Configuration

while True: resp = client.messages.create(...). if resp.stop_reason == "end_turn": break. if resp.stop_reason == "tool_use": execute_tools(...).

Escalation Queue

structured handoff

Receives calls blocked by the PreToolUse hook, plus low-confidence and sentiment-triggered escalations. Each entry has a structured context block (customer_id, reason, partial_status, recommended_action).

Configuration

queue.push({customer_id, intent, partial_state, blocked_tool, reason, recommended_action}). Human triages in ~10s vs 5min for transcript review.

Data flow

Eight steps to production

Define the system prompt with case-facts

Anchor the agent's role + constraints + the case-facts block at the very top of the system prompt. The case-facts block is the immutable truth about this customer + order + policy.

```python
from anthropic import Anthropic

client = Anthropic()

def build_system_prompt(case_facts: dict) -> str:
    return f"""You are a customer support agent for ACME.

CASE_FACTS (immutable; re-read every turn):
- customer_id: {case_facts['customer_id']}
- order_id: {case_facts['order_id']}
- refund_amount: ${case_facts['amount']}
- policy_cap: ${case_facts['cap']}

Constraints:
- Verify customer before ANY account-modifying call.
- Refunds above policy_cap MUST escalate (a hook enforces this).
- Branch on stop_reason. Never on response text."""
```

Define the 4-tool registry

Keep the tool count between 4 and 5. Each tool description is structured in 4 lines: what / when / edge cases / ordering. This is the primary lever for correct routing: fix the descriptions, not the model.
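One way to keep the four-line convention from drifting is to build each description from its four parts and lint the registry before it ships. The sketch below is illustrative only: `describe` and `lint_registry` are hypothetical helper names, and the `process_refund` wording and schema are assumed examples, not the registry's actual text.

```python
MAX_TOOLS = 5  # upper bound suggested in the text above

def describe(what: str, when: str, edge_cases: str, ordering: str) -> str:
    """Join the four parts into a four-line tool description."""
    parts = [what, when, edge_cases, ordering]
    if any(not p.strip() or "\n" in p for p in parts):
        raise ValueError("each part must be a single non-empty line")
    return "\n".join(parts)

def lint_registry(tools: list[dict]) -> list[str]:
    """Return a list of problems; an empty list means the registry passes."""
    problems = []
    if len(tools) > MAX_TOOLS:
        problems.append(f"{len(tools)} tools exceeds {MAX_TOOLS}")
    names = [t["name"] for t in tools]
    if len(set(names)) != len(names):
        problems.append("duplicate tool names")
    for t in tools:
        if len(t["description"].split("\n")) != 4:
            problems.append(f"{t['name']}: description is not 4 lines")
    return problems

# Hypothetical wording for process_refund (assumed, for illustration only).
example_tool = {
    "name": "process_refund",
    "description": describe(
        "Issue a refund for an order.",
        "Use after verify_customer and lookup_order have succeeded.",
        "Edge cases: amounts above the policy cap are denied by a hook.",
        "Always run after lookup_order; never before verify_customer.",
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string"},
            "amount": {"type": "number"},
        },
        "required": ["order_id", "amount"],
    },
}
```

Calling `lint_registry([example_tool])` returns an empty list; a tool whose description is a single line is reported by name.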

```python
tools = [
    {
        "name": "verify_customer",
        "description": (
            "Look up a customer by customer_id and confirm they are active.\n"
            "Use this BEFORE any other tool that mentions the customer.\n"
            "Edge cases: returns 'not_found' if customer_id is missing.\n"
            "Always run before lookup_order or process_refund."
        ),
        "input_schema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
    },
    # ... lookup_order, process_refund, escalate_to_human
]
```

Wire the PreToolUse policy hook

The hook is deterministic; prompt-only enforcement leaks 3% of cases past the cap. Exit 2 to deny, exit 0 to allow. On a deny, the SDK reads stderr and routes Claude back with that feedback.

```python
# .claude/hooks/refund_policy.py
import json
import os
import sys

POLICY_CAP = float(os.environ.get("REFUND_CAP", "500"))

def main():
    payload = json.loads(sys.stdin.read())
    if payload["tool_name"] != "process_refund":
        sys.exit(0)  # not our concern, allow
    try:
        amount = float(payload["tool_input"].get("amount", 0))
    except (TypeError, ValueError):
        print("refund amount is not a number, escalate", file=sys.stderr)
        sys.exit(2)  # fail closed on malformed input
    if amount > POLICY_CAP:
        print(f"refund ${amount} exceeds cap ${POLICY_CAP}, escalate", file=sys.stderr)
        sys.exit(2)  # DENY
    sys.exit(0)  # allow

if __name__ == "__main__":
    main()
```

Run the agent loop on stop_reason

Branch on the structured field, never the response text. end_turn → exit. tool_use → execute, append, continue. max_tokens → save partial. stop_sequence → custom termination.
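The loop for this step calls two helpers, `extract_text` and `execute_tool`, that the walkthrough does not define. A minimal sketch of what they might look like follows. The `TOOL_IMPLS` dispatch table and its stubbed `verify_customer` implementation are assumptions for illustration; the `tool_result` block shape (`type`, `tool_use_id`, `content`, `is_error`) follows the Messages API.

```python
import json
from types import SimpleNamespace

def extract_text(resp) -> str:
    """Concatenate the text blocks of a response, ignoring tool_use blocks."""
    return "".join(b.text for b in resp.content if b.type == "text")

# Hypothetical local implementations keyed by tool name (stub for illustration).
TOOL_IMPLS = {
    "verify_customer": lambda args: {"status": "active", "customer_id": args["customer_id"]},
}

def execute_tool(tool_use) -> dict:
    """Run one tool_use block and wrap the outcome as a tool_result block."""
    impl = TOOL_IMPLS.get(tool_use.name)
    if impl is None:
        content, is_error = f"unknown tool: {tool_use.name}", True
    else:
        try:
            content, is_error = json.dumps(impl(tool_use.input)), False
        except Exception as exc:
            # Report the failure to the model instead of crashing the loop.
            content, is_error = f"{type(exc).__name__}: {exc}", True
    return {
        "type": "tool_result",
        "tool_use_id": tool_use.id,  # must match the id of the tool_use block
        "content": content,
        "is_error": is_error,
    }

# Stand-ins for SDK content blocks, so the helpers can be exercised offline.
fake_resp = SimpleNamespace(content=[
    SimpleNamespace(type="text", text="Checking your account."),
    SimpleNamespace(type="tool_use", id="toolu_01", name="verify_customer",
                    input={"customer_id": "cus_42"}),
])
result = execute_tool(fake_resp.content[1])
```

Returning errors as `tool_result` blocks with `is_error` set keeps every `tool_use` paired with a result, so the model can see the failure and the conversation stays valid for the next request.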

```python
def run_agent_loop(user_msg: str, case_facts: dict, max_iter: int = 15):
    messages = [{"role": "user", "content": user_msg}]
    for _ in range(max_iter):
        resp = client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=4096,
            system=build_system_prompt(case_facts),
            tools=tools,
            messages=messages,
        )
        if resp.stop_reason == "end_turn":
            return extract_text(resp)
        if resp.stop_reason == "tool_use":
            tool_uses = [b for b in resp.content if b.type == "tool_use"]
            # execute_tool must return one tool_result block per tool_use
            results = [execute_tool(t) for t in tool_uses]
            messages.append({"role": "assistant", "content": resp.content})
            messages.append({"role": "user", "content": results})
            continue
        if resp.stop_reason == "max_tokens":
            return {"status": "partial", "text": extract_text(resp)}
        # stop_sequence or any other value: stop rather than resend the same request
        return {"status": "stopped", "stop_reason": resp.stop_reason}
    return {"status": "iteration_cap"}
```

Add the structured escalation block

When the hook denies a call or the agent finishes with low confidence, push a structured block, not the transcript, to the human queue. Triage time drops from 5 minutes to 10 seconds.
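The escalation block for this step calls `derive_recommendation(reason)`, which the walkthrough leaves undefined. One simple possibility is a lookup table with a safe default, sketched here. The reason codes `low_confidence` and `low_confidence_distressed` come from the gates in this walkthrough; `refund_over_cap`, `customer_requested_human`, and all of the recommendation wording are assumptions.

```python
# Hypothetical mapping from blocker reason to a suggested human action.
RECOMMENDATIONS = {
    "refund_over_cap": "Review the order and approve or decline the over-cap refund.",
    "low_confidence": "Read the structured context and reply to the customer directly.",
    "low_confidence_distressed": "Prioritize: contact the customer and resolve manually.",
    "customer_requested_human": "Take over the conversation.",
}
DEFAULT_RECOMMENDATION = "Triage manually; no recommendation is defined for this reason."

def derive_recommendation(reason: str) -> str:
    """Map a blocker reason code to a recommended action, with a safe default."""
    return RECOMMENDATIONS.get(reason, DEFAULT_RECOMMENDATION)
```

A table keeps the recommendation deterministic and reviewable, which matches the walkthrough's preference for structured fields over free text.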

```python
def escalate(case_facts: dict, reason: str, partial: dict) -> dict:
    return {
        "customer_id": case_facts["customer_id"],
        "order_id": case_facts["order_id"],
        "intent": partial.get("intent", "unknown"),
        "partial_status": partial.get("last_action"),
        "blocker": reason,
        "recommended_action": derive_recommendation(reason),
        "evidence": [partial.get("last_tool_result")],
    }
```

Wire the sentiment + confidence gates

Two final guards on the response: a sentiment monitor (orthogonal to policy: distress alone never triggers a refund) and a confidence threshold. Either gate can route to escalation.

```python
def post_response_gates(response: str, agent_confidence: float):
    sentiment = sentiment_score(response)
    if sentiment == "distressed" and agent_confidence < 0.7:
        return {"action": "escalate", "reason": "low_confidence_distressed"}
    if agent_confidence < 0.5:
        return {"action": "escalate", "reason": "low_confidence"}
    return {"action": "send"}
```

Cache the system prompt for cost

The system prompt + tool definitions don't change between turns. Mark them with cache_control: ephemeral and pay roughly 90% less for those tokens on every cache hit.
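Whether the cache is actually being hit can be checked from the `usage` object on each response, which reports `cache_creation_input_tokens` and `cache_read_input_tokens` alongside `input_tokens`. The helper below is a small sketch; `cache_read_fraction` is a hypothetical name, and the token counts in the stand-in objects are made up for illustration.

```python
from types import SimpleNamespace

def cache_read_fraction(usage) -> float:
    """Fraction of this request's input tokens that were served from cache."""
    read = getattr(usage, "cache_read_input_tokens", 0) or 0
    written = getattr(usage, "cache_creation_input_tokens", 0) or 0
    uncached = getattr(usage, "input_tokens", 0) or 0
    total = read + written + uncached
    return read / total if total else 0.0

# Stand-in usage objects with made-up token counts (illustrative only).
first_turn = SimpleNamespace(input_tokens=200, cache_creation_input_tokens=1800,
                             cache_read_input_tokens=0)
later_turn = SimpleNamespace(input_tokens=600, cache_creation_input_tokens=0,
                             cache_read_input_tokens=1800)

print(cache_read_fraction(first_turn))  # first request writes the cache
print(cache_read_fraction(later_turn))  # later requests read from it
```

With these numbers the first turn reads nothing from cache (0.0) and the later turn reads 1800 of 2400 input tokens (0.75). A fraction that stays at zero across turns usually means the cached prefix is changing between requests.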

```python
resp = client.messages.create(
    model="claude-sonnet-4-5",
    max_tokens=4096,
    system=[
        {
            "type": "text",
            "text": build_system_prompt(case_facts),
            "cache_control": {"type": "ephemeral"},
        },
    ],
    tools=tools,  # tools precede system in the cached prefix, so this breakpoint covers them
    messages=messages,
)
```

Audit log every resolution

Every closed ticket writes a row: customer_id, agent_path, tool_calls, escalation_reason (if any), elapsed_ms, CSAT. This is your replay tool when production breaks at turn 18.

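```python
from datetime import datetime, timezone

def audit_log(case_facts: dict, agent_path: list, elapsed_ms: int,
              csat: int | None, escalation_reason: str | None = None):
    # db is the application's datastore handle, defined elsewhere
    db.audit.insert({
        "ts": datetime.now(timezone.utc),
        "customer_id": case_facts["customer_id"],
        "order_id": case_facts["order_id"],
        "tool_calls": [c["name"] for c in agent_path if c["type"] == "tool_use"],
        "stop_reasons": [c["stop_reason"] for c in agent_path],
        "escalation_reason": escalation_reason,
        "elapsed_ms": elapsed_ms,
        "csat": csat,
    })
```

The four decisions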

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| tool_choice | "auto" (default) | "any" or {type:"tool",name:"X"} | Customer requests are open-ended, let Claude pick. Forced tools only for mandatory first steps. |
| stop_reason handling | branch on field; max_tokens = partial | parse response text for 'done' | Text-shape parsing is the most-tested distractor. The structured field is authoritative. |
| Session state | case-facts block + threaded messages | progressive summarization of customer_id | Transactional values (IDs, amounts) must be pinned, never paraphrased. |
| Cache TTL | ephemeral on system + tools | no caching | System prompt + tool defs are stable across turns. ~90% cost reduction on those bytes. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

Code checks response.text.includes('done') to decide termination.

Branch on stop_reason == 'end_turn'. Text + tool_use can co-exist in one response.

System prompt says 'never refund more than $500'. Production sees 3% violations.

AP-18: PreToolUse hook checks tool_input.amount <= 500. Deterministic gate.

Customer raises voice → agent escalates regardless of policy.

AP-22: Sentiment is orthogonal. Trigger only on policy exception, ambiguity, or explicit request.

By turn 8, agent has summarized cus_42 → 'a customer wanting a refund'.

AP-35: Pin CASE_FACTS block in system prompt. Re-read every turn. Never paraphrased.

Agent calls lookup_order first; pulls wrong record 12% of the time.

Programmatic prerequisite: verify_customer must run first. Tool-description ordering nudges the model toward this; a hook can enforce it.
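The last pair says a hook can enforce the verify-first ordering. A minimal sketch of that decision logic, written as a pure function so it can be tested without a live hook, is shown here. It assumes the application tracks which customer IDs have been verified in the current session and that gated tools carry `customer_id` in their input; `check_prerequisite` and `GATED_TOOLS` are hypothetical names.

```python
GATED_TOOLS = {"lookup_order", "process_refund"}  # tools that require prior verification

def check_prerequisite(tool_name: str, verified_customers: set, tool_input: dict):
    """Return (exit_code, message): 0 allows the call, 2 denies it."""
    if tool_name not in GATED_TOOLS:
        return 0, ""
    customer_id = tool_input.get("customer_id")
    if customer_id in verified_customers:
        return 0, ""
    return 2, f"{tool_name} blocked: call verify_customer for {customer_id!r} first"

# Usage: nothing verified yet, so lookup_order is denied.
code, message = check_prerequisite("lookup_order", set(), {"customer_id": "cus_42"})
```

The (exit code, message) pair mirrors the refund-cap hook's contract, so the same deny path can feed the message back to the agent.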

Cost & latency

8 avg turns × (system + tools + accumulating history). Cache hits ~70% on system+tools.

Pre-cache: ~$0.04. With ephemeral cache on system+tools: ~$0.018. ~55% reduction.

Streaming first token in ~150ms. Tool round-trips 1.5-2s each. 4 tool calls × 2s + 800ms compose.

5-min TTL on ephemeral. Continuous traffic keeps cache warm.
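The figures above can be checked with a few lines of arithmetic. This sketch uses only numbers stated in this section and the 12-second p95 target from the success criteria, taking the upper end of the tool round-trip range; the variable names are illustrative.

```python
TOOL_CALLS = 4
TOOL_ROUND_TRIP_S = 2.0   # upper end of the 1.5-2s range
COMPOSE_S = 0.8           # final response composition
P95_TARGET_S = 12.0

latency_s = TOOL_CALLS * TOOL_ROUND_TRIP_S + COMPOSE_S
headroom_s = P95_TARGET_S - latency_s

COST_UNCACHED = 0.04      # dollars per conversation, pre-cache
COST_CACHED = 0.018       # dollars per conversation, with ephemeral cache
reduction = (COST_UNCACHED - COST_CACHED) / COST_UNCACHED

print(f"latency estimate: {latency_s:.1f}s, headroom vs p95 target: {headroom_s:.1f}s")
print(f"cost reduction: {reduction:.0%}")
```

The estimate comes to 8.8 s, leaving 3.2 s of headroom against the 12 s target, and the cost reduction works out to 55%.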

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- System prompt anatomy: role · constraints · case-facts · escalation trigger

- Case-facts block pinned + re-read every turn

- 4-tool registry with structured 4-line descriptions

- PreToolUse hook for refund cap (deterministic)

- Loop branches on stop_reason, never on text

- Identity verification prerequisite enforced via tool ordering

- Structured escalation block (not transcript) on handoff

- Sentiment + confidence post-response gates

- Prompt caching on system + tools (cache_control: ephemeral)

- Audit log written per closed ticket

- Conversation history bounded by case-facts windowing

- Iteration cap (max_iter=15) as a safety net, not the primary control

Run-time

- Unit tests on every tool's input validation

- Integration test: end-to-end refund flow against test CRM

- Hook test: fire mock tool_input with amount > cap, verify exit 2

- Sentiment classifier evaluated against ≥ 200 labeled tickets

- Latency monitor: alert if p95 > 12s for ≥ 5 min

- Cost monitor: alert if per-conversation cost > $0.03

- Escalation queue dashboard with SLA breach alerts

- Runbook: top-5 escalation reasons + recommended human actions
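The hook test in the list above can be sketched as a small harness that feeds mock payloads to the hook on stdin and checks the exit code. To stay self-contained, this example writes a minimal copy of the refund-cap hook to a temporary file; in a real project `run_hook` would point at the actual hook script. The helper name `run_hook` and the sample amounts are assumptions.

```python
import json
import os
import subprocess
import sys
import tempfile
from pathlib import Path

# Minimal stand-in for the refund-cap hook, so the example runs on its own.
HOOK_SOURCE = '''
import json, os, sys
cap = float(os.environ.get("REFUND_CAP", "500"))
payload = json.loads(sys.stdin.read())
if payload["tool_name"] == "process_refund" and payload["tool_input"].get("amount", 0) > cap:
    print("refund exceeds cap, escalate", file=sys.stderr)
    sys.exit(2)
sys.exit(0)
'''

def run_hook(hook_path: Path, tool_name: str, tool_input: dict) -> subprocess.CompletedProcess:
    """Feed a mock payload to the hook on stdin and capture exit code and stderr."""
    payload = json.dumps({"tool_name": tool_name, "tool_input": tool_input})
    return subprocess.run(
        [sys.executable, str(hook_path)],
        input=payload, capture_output=True, text=True, timeout=10,
        env={**os.environ, "REFUND_CAP": "500"},  # pin the cap so the test is deterministic
    )

with tempfile.TemporaryDirectory() as tmp:
    hook = Path(tmp) / "refund_policy.py"
    hook.write_text(HOOK_SOURCE)
    over = run_hook(hook, "process_refund", {"amount": 750})   # above cap: expect deny
    under = run_hook(hook, "process_refund", {"amount": 120})  # below cap: expect allow
    other = run_hook(hook, "lookup_order", {"order_id": "ord_1"})  # not gated: expect allow
```

Running the hook as a subprocess exercises the same stdin, stderr, and exit-code contract that the hook runner relies on, which a direct function call would not.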

Five exam-pattern questions

Your refund agent uses prompt-only enforcement: 'never refund over $500'. Production logs show 3% of refunds violate the policy. What's the architectural fix?

A PreToolUse hook that checks tool_input.amount <= 500. The hook exits 2 (deny) on violation, providing deterministic policy enforcement. Prompt-only is probabilistic and leaks ~3-5% in production. Tagged to AP-18 in the anti-pattern catalog.

Your agent loop terminates after 7 turns by checking `response.text.includes('done')`. The customer says they're stuck. What's wrong?

Claude can return [text, tool_use] in the same response, where text is preamble and tool_use is the real next step. Branch on stop_reason == "end_turn". The text "I'm done" can appear while stop_reason is still tool_use. Tagged to AP-12.

By turn 8, the agent has lost the customer's order ID. What's the architectural fix?

Pin a CASE_FACTS block in the system prompt holding customer_id, order_id, amount, policy_cap. Re-read every turn. Transactional values (IDs, amounts) must never be summarized; only reasoning chains can be paraphrased. Tagged to AP-35.

An angry customer asks for a refund that exceeds the policy. Your agent escalates. Why is this wrong?

Sentiment is orthogonal to policy: trigger escalation only on a policy exception, ambiguity, or an explicit request. Tagged to AP-22.

You add a 6th tool to the registry and the agent's tool-selection accuracy drops 8%. What's happening?

Either (a) merge overlapping tools (lookup_order_details + lookup_order_status → lookup_order), or (b) move rare-use tools to a sub-agent. The Anthropic guide caps the optimum at ~5 tools per agent.

Frequently asked

Why a separate hook for refund cap instead of putting it in the system prompt?

tool_input fields and exit 2 to deny. For policy-bearing limits (refunds, escalation thresholds), determinism is required. Use prompts for tone and behavior; use hooks for hard policy.

How many tools should the registry have?

Should the system prompt include few-shot examples of past conversations?

CASE_FACTS block. Examples are for edge-case behavior; descriptions are for routing.

What's the difference between sentiment escalation and policy escalation?

How do I handle a customer who switches topics mid-conversation?

What's a good escalation queue SLA?

Should I cache the message history across turns?

When should I use a sub-agent instead of expanding this one?

How do I prevent infinite loops?

tool_result append, ambiguous tool descriptions, or two tools alternating. Raising the cap masks the real issue.

What should the audit log capture?

customer_id, order_id, full tool_call sequence (just names + timestamps), stop_reason per turn, elapsed_ms, escalation_reason (if any), csat (if surveyed). Skip the full transcript; the structured trace is enough to replay any failure. Store for 90 days minimum.