The problem

What the customer needs

- Generated code that matches the team's conventions automatically. Same file naming, same style, same architecture decisions across every developer's session.

- Plan a complex refactor before touching files so reviewers see the design first and rework drops below 10%.

- Review a 14-file PR without losing track of file 3's decisions by the time the reviewer reaches file 14.

Why naive approaches fail

- No CLAUDE.md → conventions drift across sessions; snake_case in one file, camelCase in another, even within the same PR.

- Skipping Plan Mode → Claude jumps to writing files; 40% of changes get reworked when the design turns out to be wrong.

- Single-context PR review → by file 14, lost-in-the-middle drops the conventions established on file 3; inline comments contradict each other.

What success looks like

- PR-level convention drift = 0 (CLAUDE.md + .claude/rules/ enforced)

- Plan-Mode usage = 100% of refactors > 5 files (developer norm, not optional)

- Two-pass PR review on every PR > 6 files (per-file local, then integration)

- Subagent code review on the auto-generated diff before commit
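The targets in the list above can be read as a small policy check. The sketch below is illustrative only: `required_gates` and the gate names are hypothetical, and the thresholds are taken from the list (plan first for refactors over 5 files, two-pass review for PRs over 6 files, subagent review on every generated diff).

```python
from __future__ import annotations

PLAN_MODE_MIN_FILES = 5  # "refactors > 5 files"
TWO_PASS_MIN_FILES = 6   # "every PR > 6 files"


def required_gates(files_changed: int, is_refactor: bool) -> list[str]:
    """Return the gates a change must pass under the targets above."""
    gates = ["subagent-review"]  # applies to every auto-generated diff
    if is_refactor and files_changed > PLAN_MODE_MIN_FILES:
        gates.append("plan-mode")
    if files_changed > TWO_PASS_MIN_FILES:
        gates.append("two-pass-review")
    return gates


if __name__ == "__main__":
    print(required_gates(14, is_refactor=True))
```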

The system

What each part does

5 components, each owns a concept.

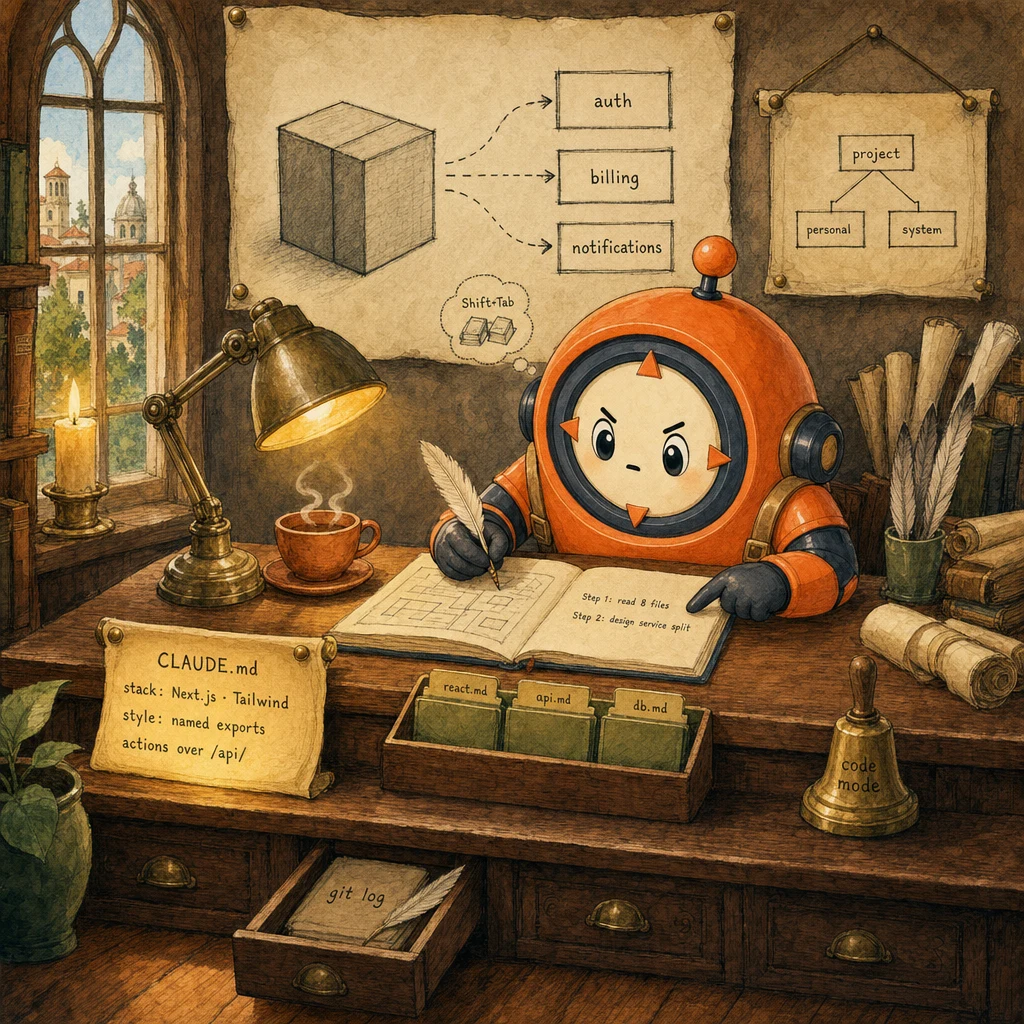

CLAUDE.md Hierarchy

three-level persistent memory

Anchors team conventions, personal preferences, and system-wide rules. Project-level CLAUDE.md is committed to the repo; personal CLAUDE.local.md is gitignored; system-level lives in ~/.claude. Claude Code reads them in order on every session. The project file always wins on conflicts.

Configuration

.claude/CLAUDE.md (project, committed): stack + commands + code style. .claude/CLAUDE.local.md (personal, gitignored): individual prefs. ~/.claude/CLAUDE.md (system): cross-project defaults. Conflicts: project > personal > system.
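One way to picture the stated precedence (project > personal > system) is a layered merge in which later layers overwrite earlier ones. This is only a mental model under that assumption: Claude Code reads these files as prose, not as key-value settings, and `resolve_conventions` and the sample keys are hypothetical.

```python
from __future__ import annotations

# Lowest to highest priority, following "project > personal > system".
PRECEDENCE = ["system", "personal", "project"]


def resolve_conventions(layers: dict[str, dict[str, str]]) -> dict[str, str]:
    """Merge convention layers so higher-priority layers win on conflicts."""
    merged: dict[str, str] = {}
    for level in PRECEDENCE:
        merged.update(layers.get(level, {}))
    return merged


layers = {
    "system": {"indent": "4 spaces", "exports": "default"},
    "personal": {"indent": "tabs"},
    "project": {"indent": "2 spaces", "exports": "named"},
}
resolved = resolve_conventions(layers)
```

Here `resolved` carries the project values for both keys, because the project layer is applied last.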

Plan Mode

exploration before execution

Shift+Tab puts Claude into a read-only state where it can explore files, sketch dependencies, and propose a design, but cannot edit anything until the developer approves. This single gate eliminates the most expensive refactor anti-pattern (jump-to-code-without-understanding-deps).

Configuration

Shift+Tab toggles Plan Mode. Claude responses include a structured plan section. Developer reviews, refines ("Adjust the plan to use Drizzle instead of Prisma"), then approves. Approval flips to Code Mode and execution begins.
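The approval gate can be modelled as a tiny state machine: read-only tools are always allowed, everything else waits for approval. This is a conceptual sketch, not Claude Code's implementation; `PlanGate` and the tool set are hypothetical.

```python
class PlanGate:
    """Toy model of Plan Mode: read-only until the developer approves."""

    READ_ONLY_TOOLS = {"Read", "Grep", "Glob"}

    def __init__(self) -> None:
        self.approved = False

    def approve(self) -> None:
        """Developer signs off on the plan; editing tools unlock."""
        self.approved = True

    def allows(self, tool: str) -> bool:
        """Read-only tools always pass; others need an approved plan."""
        return self.approved or tool in self.READ_ONLY_TOOLS


gate = PlanGate()
before = (gate.allows("Read"), gate.allows("Edit"))
gate.approve()
after = (gate.allows("Read"), gate.allows("Edit"))
```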

Skills Registry

domain-specific code generation

One Skill per domain (React components, API routes, DB queries). Each Skill has its own system-prompt additions, allowed-tools whitelist, and conventions. The developer invokes the right Skill per task and gets focused output without cross-domain context pollution.

Configuration

.claude/skills/react-components/SKILL.md (Tailwind + TS + named exports). .claude/skills/api-routes/SKILL.md (zod + middleware + structured returns). Each has frontmatter + allowed-tools list. Loaded conditionally.
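To make the frontmatter concrete, here is a minimal stdlib-only sketch that reads the `key: value` header of a SKILL.md. It assumes flat keys and `[a, b]` style lists only; a real setup would more likely use a YAML parser. `parse_skill_frontmatter` is a hypothetical helper, not part of Claude Code.

```python
from __future__ import annotations


def parse_skill_frontmatter(text: str) -> dict[str, object]:
    """Parse the flat key: value block between the two '---' lines."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' line")
    meta: dict[str, object] = {}
    for line in lines[1:]:
        if line.strip() == "---":  # closing delimiter: header is complete
            return meta
        if not line.strip():
            continue
        key, _, value = line.partition(":")
        value = value.strip()
        if value.startswith("[") and value.endswith("]"):
            # Inline list such as [Read, Grep]
            meta[key.strip()] = [v.strip() for v in value[1:-1].split(",") if v.strip()]
        else:
            meta[key.strip()] = value
    raise ValueError("frontmatter is not closed with '---'")


SAMPLE = """---
name: react-components
description: Generate production-grade React components (Tailwind + TS strict).
allowed-tools: [Read, Grep, Glob, Edit]
---
You are generating a React component for this Next.js 15 codebase.
"""
meta = parse_skill_frontmatter(SAMPLE)
```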

.claude/rules/ Globs

rules keyed by file path

Splits the monolithic CLAUDE.md into area-scoped rule files that load only when Claude is editing matching files. Prevents the prompt from carrying API conventions when editing React, or vice versa. The attention budget stays focused on what's relevant to the current edit.

Configuration

.claude/rules/react.md (globs: **/*.tsx, **/*.jsx). .claude/rules/api.md (globs: app/api/**). .claude/rules/db.md (globs: lib/db/**). Claude reads only the rule files matching the current edit's path.
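The selection step can be sketched as matching the edited path against each rule file's globs. This uses Python's `fnmatchcase`, whose `*` also crosses `/`, as a rough stand-in for whatever glob engine Claude Code actually uses; `RULE_GLOBS`, `matches`, and `rules_for` are hypothetical names.

```python
from __future__ import annotations

from fnmatch import fnmatchcase

RULE_GLOBS = {
    ".claude/rules/react.md": ["**/*.tsx", "**/*.jsx"],
    ".claude/rules/api.md": ["app/api/**"],
    ".claude/rules/db.md": ["lib/db/**"],
}


def matches(path: str, pattern: str) -> bool:
    """Approximate '**' globbing with fnmatch semantics."""
    if fnmatchcase(path, pattern):
        return True
    # Let "**/x" also match files sitting at the repo root.
    return pattern.startswith("**/") and fnmatchcase(path, pattern[3:])


def rules_for(path: str) -> list[str]:
    """Return the rule files whose globs match the file being edited."""
    return [
        rule
        for rule, globs in RULE_GLOBS.items()
        if any(matches(path, glob) for glob in globs)
    ]


if __name__ == "__main__":
    print(rules_for("app/api/users/route.ts"))
```

A file can match more than one rule file, for example a `.tsx` file under `app/api/`, in which case both sets of rules would load.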

Code-Review Subagent

scoped tools, fresh context, two-pass review

Spawned per PR with [Read, Grep, Bash] only. No Edit, no Write. Runs two passes: per-file local review against .claude/rules/, then a separate integration pass for cross-file consistency. Fresh context prevents lost-in-the-middle on long PRs.

Configuration

Subagent task: 'Review changed files [list]. Pass 1: per-file style + tests against .claude/rules/. Pass 2: integration (API boundaries, shared state, type alignment).' allowed-tools: ['Read','Grep','Bash']. Returns structured verdict.
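A structured verdict is only useful if something gates on it. The sketch below assumes the verdict arrives as JSON with `per_file`, `integration`, `blocker_count`, and `warning_count` keys (the shape used in the review prompt later in this document); `gate_on_verdict` is a hypothetical helper.

```python
import json

REQUIRED_KEYS = {"per_file", "integration", "blocker_count", "warning_count"}


def gate_on_verdict(raw: str) -> bool:
    """Return True when the review verdict has no blockers."""
    verdict = json.loads(raw)
    if not isinstance(verdict, dict):
        raise ValueError("verdict must be a JSON object")
    missing = REQUIRED_KEYS - set(verdict)
    if missing:
        raise ValueError(f"verdict is missing keys: {sorted(missing)}")
    return verdict["blocker_count"] == 0


clean = '{"per_file": [], "integration": [], "blocker_count": 0, "warning_count": 2}'
blocked = '{"per_file": [], "integration": [], "blocker_count": 1, "warning_count": 0}'
```

Rejecting a malformed verdict outright, rather than treating it as a pass, keeps the gate from failing open.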

Data flow

Eight steps to production

Create the project-level CLAUDE.md

At the repo root, write .claude/CLAUDE.md documenting stack, commands, and code style. Keep it tight. This file is read on every session and competes for attention with the actual task. 200-400 words is the sweet spot. Commit to version control so the whole team's Claude Code sessions inherit the same rules.

```markdown
# .claude/CLAUDE.md (committed to repo, ~300 words)

# Project
Next.js 15 App Router + TypeScript strict + Tailwind v4 + Drizzle ORM.

# Commands
- Dev: `pnpm dev`
- Tests: `pnpm test`
- Lint + typecheck: `pnpm lint && pnpm typecheck`
- Build: `pnpm build`

# Code Style
- Named exports only (no default exports)
- 2-space indent
- Server Actions in app/actions/. Prefer over /api/
- Drizzle queries in lib/db/queries/
- Components: server by default; "use client" only when state or effects needed

# Architecture
- Auth: middleware.ts at root; protect /account and /admin
- Schema: lib/db/schema.ts is the single source of truth
- Types: derive from schema where possible (z.infer / drizzle types)
```

Split conventions into .claude/rules/ globs

Once CLAUDE.md grows past ~500 words, split it. Move React conventions to .claude/rules/react.md with a glob **/*.tsx, API routes to .claude/rules/api.md (app/api/**), DB to .claude/rules/db.md (lib/db/**). Claude loads only the rule files matching the file being edited. Attention stays focused.

```text
# .claude/rules/react.md
# globs: ["**/*.tsx", "**/*.jsx"]
#
# ## React Component Rules
# - Tailwind for all styles; no CSS modules, no styled-components
# - Props: typed interface (no inline { foo: string })
# - Server Components by default
# - "use client" only when useState/useEffect/event handlers required

# .claude/rules/api.md
# globs: ["app/api/**"]
#
# ## API Route Rules
# - Always validate input with zod schema imported from lib/schemas/
# - Wrap handler in middleware that injects { user, request_id }
# - Return shape: { status: "ok", data } | { status: "error", error: { code, message } }
# - Log errors with request_id for trace correlation

# .claude/rules/db.md
# globs: ["lib/db/**"]
#
# ## Database Rules
# - Drizzle ORM only. No raw SQL except in migrations
# - Queries in lib/db/queries/ subfolder
# - Types: drizzle-zod for runtime + drizzle-orm InferSelectModel for static
```

Use Plan Mode for any complex refactor

Shift+Tab → Plan Mode. Ask Claude to analyze the codebase first: read the relevant files, sketch dependencies, identify shared state, propose the refactor approach. Review the plan; refine if needed; only then approve and switch to Code Mode. The 5-minute plan review cost is dwarfed by the 30+ minute rework cost it prevents.

```text
# Workflow: Shift+Tab → Plan Mode
#
# Developer prompt:
#   "Plan: split this monolith into 3 services: auth, billing, notifications.
#   Identify shared utilities, shared DB schemas, and call boundaries."
#
# Claude (in Plan Mode, read-only):
#   1. Reads app/, lib/, prisma/schema.prisma
#   2. Sketches dependency graph
#   3. Returns structured plan:
#      - auth/ owns: User, Session, AuthMethod
#      - billing/ owns: Customer, Invoice, Payment
#      - notifications/ owns: Email, Push, AuditLog
#      - shared/: lib/db/connection.ts, lib/utils/id.ts
#      - call boundaries: HTTP for sync, queue for async
#      - migration order: shared → auth → billing → notifications
#
# Developer reviews, asks: "Is the queue Redis or SQS?"
# Claude refines plan with the answer.
# Developer: "Approved. Execute step 1 only."
# Claude switches to Code Mode and executes that step.
```

Build Skills for domain-specific generation

Create one Skill per domain. Each Skill has frontmatter (name + description + when_to_use), system-prompt additions for that domain, and an allowed-tools whitelist. The developer invokes the right Skill per task. Skills replace the monolithic-prompt pattern that bloats context with irrelevant rules.

````markdown
# .claude/skills/react-components/SKILL.md
---
name: react-components
description: Generate production-grade React components (Tailwind + TS strict).
when_to_use: When asked to create or modify a .tsx component file.
allowed-tools: [Read, Grep, Glob, Edit]
---

You are generating a React component for this Next.js 15 codebase.

## Conventions
- Server Components by default. Use "use client" only when state/effects required.
- Props: typed interface, never inline.
- Tailwind for all styling; no CSS modules.
- Named export, never default.
- Include a JSDoc one-liner for any non-trivial prop.

## Pattern
```tsx
interface FooProps {
  // ...
}

export function Foo({ ... }: FooProps) {
  return ( ... );
}
```
````

Spawn a code-review Subagent on every PR

After Claude Code generates a diff, spawn a code-reviewer Subagent with [Read, Grep, Bash] only. No edits. The subagent runs in a fresh context and does two passes: per-file local review (style, tests) and integration (cross-file consistency, API boundaries). Fresh context = no lost-in-the-middle on long PRs.

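```python
# Spawn a code-review subagent
import subprocess

from anthropic import Anthropic

client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment

REVIEW_PROMPT = """You are a code reviewer. Two passes.

PASS 1. Per-file:
For each changed file, check:
- Conformance with .claude/rules/ matching this file's path
- Test coverage for changed lines
- No regressions in imports or exports

PASS 2. Integration:
Across all changed files:
- API boundaries consistent (caller signature == callee signature)
- Shared types aligned
- DB schema and ORM usage match

Return STRUCTURED verdict:
{
  "per_file": [{file, issues: [...]}],
  "integration": [{issue, files_affected: [...]}],
  "blocker_count": int,
  "warning_count": int
}"""


def tool(name: str, description: str, arg: str) -> dict:
    """Custom tool definition with one required string argument."""
    schema = {"type": "object", "properties": {arg: {"type": "string"}}, "required": [arg]}
    return {"name": name, "description": description, "input_schema": schema}


# Illustrative tool definitions (assumed); the caller must implement each tool.
READ_TOOL = tool("Read", "Read a file from the PR checkout", "path")
GREP_TOOL = tool("Grep", "Search the checkout for a pattern", "pattern")
BASH_TOOL = tool("Bash", "Run a shell command in the checkout", "command")

# Diff under review; the base branch name is an assumption.
pr_diff = subprocess.run(
    ["git", "diff", "origin/main...HEAD"],
    capture_output=True, text=True, check=True,
).stdout

# Spawn. Note allowed-tools is scoped Read/Grep/Bash only
resp = client.messages.create(
    model="claude-sonnet-4-5",  # API model IDs use hyphens, not "4.5"
    max_tokens=8192,
    system=REVIEW_PROMPT,
    tools=[READ_TOOL, GREP_TOOL, BASH_TOOL],
    messages=[{"role": "user", "content": f"Review PR diff: {pr_diff}"}],
)
# resp may contain tool_use blocks: run each tool, send back tool_result
# messages, and repeat until the model returns the final verdict.
```

Use @mentions to target context, not load the whole repo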

When asking about a specific area, use @path/to/file in the prompt. Claude pulls in only that file plus its imports, not the entire codebase. Pair with CLAUDE.md's Key Files section so the team has a documented set of high-value entry points to mention.
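For tooling built around this convention (for example, linting prompts in a team playbook), the @path mentions can be pulled out with a regular expression. This is a sketch of that idea only: `mentioned_paths` is a hypothetical helper and says nothing about how Claude Code itself parses mentions.

```python
from __future__ import annotations

import re

# "@" must start a token, so e-mail addresses are not treated as mentions.
MENTION = re.compile(r"(?<!\S)@([\w./-]+)")


def mentioned_paths(prompt: str) -> list[str]:
    """Return the file paths referenced as @mentions, in order."""
    # Strip sentence punctuation that may trail the last path.
    return [m.rstrip(".,;:") for m in MENTION.findall(prompt)]


if __name__ == "__main__":
    print(mentioned_paths("How does auth work? @middleware.ts @lib/auth.ts"))
```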

```text
# In CLAUDE.md, document the key files:
#
# # Key Files
# - Database schema: @lib/db/schema.ts
# - Auth middleware: @middleware.ts
# - API request validator: @lib/validators/api.ts
# - Server action helpers: @lib/actions/_helpers.ts
#
# Then in a prompt:
# "How does the rate limiter integrate with auth? @middleware.ts @lib/auth.ts"
#
# Claude pulls in only those two files + transitively their imports.
# Context stays focused; the whole repo doesn't bloat the message.
```

Sequence orthogonal tasks; never mix them in one session

Refactor + optimize is two tasks, not one. If the developer asks for both in a single session, expect ~40% rework, because the optimization feedback invalidates half the refactor. Run them as sequential sessions: refactor cleanly first, commit, then optimize the new structure with fresh context.

```text
# Wrong: one prompt, two orthogonal goals
# "Refactor this Redux store to Zustand and also optimize bundle size."
# Result: refactor 80% done, then optimization feedback causes rework.
# ~40% wasted work.

# Right: sequential sessions
#
# Session 1 (Plan Mode): refactor only
# "Plan: refactor app/store/ from Redux to Zustand. Pure structural;
# do not change file boundaries or optimize anything yet."
# Approve plan, execute, commit.
#
# Session 2 (new context, Plan Mode): optimize
# "Plan: now optimize bundle size on the new Zustand store. What dynamic
# imports? What tree-shake wins? Any code-split candidates?"
# Approve plan, execute, commit.
#
# Each session has clean context and one goal, so each PR is easier to review.
```

Save the rule when Claude makes a mistake

When Claude generates something the team doesn't want (default exports, wrong import order, /api/ instead of server actions), don't just correct it in chat. Ask Claude to save the rule: 'Save this to CLAUDE.md or the right .claude/rules/ file.' The next session, and every other team member's session, inherits the fix.

```python
# Developer flow:
#
# 1. Claude generates: `export default function Foo() {}`
# 2. Developer corrects: "Use named export. Save this to .claude/rules/react.md."
# 3. Claude:
#    - Edits .claude/rules/react.md, adds:
#        "## Exports
#        - Named exports only. Never `export default`."
#    - Updates the original file to use named export.
#    - Commits both in the same change.
#
# 4. Next session, for ANY developer on the team, Claude reads the rule
#    and never generates the default export again. Convention drift = 0.
import subprocess


def save_rule_to_repo(rule_text: str, target_file: str) -> None:
    """Pseudocode: Claude actually does this through Edit + Bash tools."""
    rule_path = f".claude/rules/{target_file}"
    # Append the rule so existing conventions in the file are preserved.
    with open(rule_path, "a", encoding="utf-8") as f:
        f.write(f"\n{rule_text}\n")
    # Stage the change; check=True surfaces git failures instead of hiding them.
    subprocess.run(["git", "add", rule_path], check=True)
```

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Where do team coding conventions live? | .claude/CLAUDE.md (project-level, committed); split to .claude/rules/*.md when it exceeds ~500 words | personal ~/.claude/CLAUDE.md (not shared) or inline comments in source files | Conventions have to be shared and version-controlled to survive team turnover. Personal config doesn't reach the next developer; inline comments rot. |
| Complex refactor (20+ files, multi-service) | Plan Mode first; review the plan; approve; only then execute. Sequential subtasks per session. | Jump to code; correct as you go; merge refactor + optimization in one prompt | Plan Mode's 5-minute review cost is dwarfed by the 30+ minute rework when the model guesses at structure. Mixed-goal sessions cause ~40% rework. |
| Multi-domain repo (React + API + DB) | One Skill per domain in .claude/skills/; .claude/rules/*.md keyed by file glob | One monolithic CLAUDE.md with every convention; one mega-tool list for everything | Attention budget per turn is finite. Loading API rules when editing React wastes attention and dilutes routing accuracy. |
| PR review on a 14-file PR | Two-pass via a code-review Subagent with [Read, Grep, Bash] only. Pass 1 per-file, pass 2 integration | Single-pass review in the same session that wrote the code | Same-session review inherits the writer's context bias. By file 14, lost-in-the-middle drops the conventions established on file 3. Fresh context fixes both. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

No CLAUDE.md. Each developer's Claude session generates code in a different style: snake_case in one file, camelCase in another, default exports in a repo of named exports.

AP-06: Create .claude/CLAUDE.md at the project root, commit it, and document stack + commands + code style. Claude reads it on every session. When you correct Claude, ask it to save the new rule to CLAUDE.md so the next session inherits it.

Developer asks Claude to 'split this monolith into microservices'. Claude jumps to creating new files without understanding shared state, dependencies, or boundaries. ~40% of the changes get reworked.

AP-07: Use Plan Mode (Shift+Tab) first. Have Claude analyze the monolith, identify boundaries, sketch the dependency graph, and present a plan. Review and approve, then switch to Code Mode for execution.

Single Claude Code session refactoring 50 files across React, API, and DB. Context bloats with file reads and assumptions; later edits are inconsistent with earlier ones.

AP-08: Split into Skills (react-components, api-routes, database-queries) with their own system-prompt additions and tool whitelists. Invoke the right Skill per task; each runs in a focused context.

GitHub Action reviews a 14-file PR in one Claude session. By file 14, lost-in-the-middle has dropped the conventions seen on file 3. Inline comments contradict each other.

AP-09: Spawn a code-review Subagent with scoped tools (Read + Grep + Bash). Two passes: per-file local against .claude/rules/, then integration for cross-file consistency. Fresh context, no carry-over noise.

Developer prompts: 'Refactor Redux to Zustand AND optimize bundle size.' Claude attempts both; refactor is 80% done when optimization feedback invalidates half. ~40% rework.

AP-11: Sequence orthogonal tasks. Session 1: refactor only, commit. Session 2: optimize the new structure with fresh context. Each session has one clear goal; each PR is easier to review; rework drops to <10%.

Cost & latency

Configuration files: project CLAUDE.md ~600 bytes; three .claude/rules/*.md ~400 bytes each; 1-2 Skills ~500 bytes each. Negligible repo bloat; inlined to system prompt at session start.

Plan Mode pass: Claude reads 6-10 key files (~15K input tokens) and returns a 1-2K-token plan. Cost ~$0.02-0.05. Saves ~$0.30+ in rework. ROI ~6-15×, depending on where the cost lands in that range.

Code generation: initial code is ~20K input tokens (codebase + rules) + 5K output. Tests + revisions: +$0.02-0.05. Total ~$0.08-0.15.

Code-review Subagent: scoped to changed files only (~5K tokens) + 1-2K verdict. Two-pass adds ~30%. Catches 70%+ of style + integration bugs before human review.

Skills are .md files that load into the system prompt only when invoked. Per-Skill ~500 bytes; loading <100ms. No extra API calls; just attention engineering.
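The Plan Mode return on investment quoted above follows from simple division. The sketch below reproduces it from the document's own figures (~$0.30 saved, $0.02-0.05 spent); `roi_range` is a hypothetical helper, and the dollar amounts are the section's estimates, not measured prices.

```python
from __future__ import annotations


def roi_range(savings: float, cost_low: float, cost_high: float) -> tuple[float, float]:
    """Return (worst-case, best-case) savings per dollar spent on planning."""
    return savings / cost_high, savings / cost_low


low, high = roi_range(savings=0.30, cost_low=0.02, cost_high=0.05)

if __name__ == "__main__":
    print(f"Plan Mode ROI: between {low:.0f}x and {high:.0f}x")
```

The ~15× figure corresponds to the cheapest plan; at the top of the cost range the same savings give roughly 6×.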

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Project-level .claude/CLAUDE.md committed; covers stack + commands + code style

- .claude/rules/*.md split by file glob once CLAUDE.md exceeds ~500 words

- Plan Mode (Shift+Tab) is the team norm for any refactor > 5 files

- Skills directory populated for each domain (react, api, db, review)

- Each Skill has a tight allowed-tools whitelist (no Bash + Edit unless needed)

- Code-review Subagent spawned per PR with [Read, Grep, Bash] only

- Two-pass review: per-file local + integration

- Key Files documented in CLAUDE.md so @mentions can target context efficiently

- Orthogonal tasks (refactor + optimize) sequenced into separate sessions

- Corrections saved to CLAUDE.md or .claude/rules/ on the spot, not just in chat

- CI/CD job runs the code-review Subagent on every PR pre-merge

Run-time

- CLAUDE.md exists, committed, < 500 words; pre-commit hook flags drift past that

- .claude/rules/*.md keyed by glob, each ≤ 200 words

- Plan Mode used in the last 5 multi-file refactors (audit via git log + chat history)

- Skills directory has at least one Skill per major domain

- Code-review Subagent runs in CI on every PR > 3 files

- Two-pass review verdict posts to PR as a structured comment

- No Skill has Edit + Bash + Write all granted (privilege bloat alert)

- Developer onboarding doc references .claude/CLAUDE.md and `claude skills list` on day one
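Two of the run-time checks above lend themselves to automation: the word budgets and the privilege-bloat alert. The sketch below is one possible pre-commit style audit under the limits stated in the list (CLAUDE.md under 500 words, each rule file at most 200 words, no Skill holding Edit + Bash + Write together). The function names are hypothetical, and the Skill tool lists are assumed to have been parsed from frontmatter elsewhere.

```python
from __future__ import annotations

from pathlib import Path

RISKY_COMBO = {"Edit", "Bash", "Write"}


def word_count(path: Path) -> int:
    """Count whitespace-separated words in a text file."""
    return len(path.read_text(encoding="utf-8").split())


def audit_word_budgets(root: Path) -> list[str]:
    """Flag a missing or oversized CLAUDE.md and oversized rule files."""
    problems = []
    claude_md = root / ".claude" / "CLAUDE.md"
    if not claude_md.is_file():
        problems.append("missing .claude/CLAUDE.md")
    elif word_count(claude_md) >= 500:
        problems.append(".claude/CLAUDE.md is not under 500 words")
    rules_dir = root / ".claude" / "rules"
    if rules_dir.is_dir():
        for rule in sorted(rules_dir.glob("*.md")):
            if word_count(rule) > 200:
                problems.append(f".claude/rules/{rule.name} exceeds 200 words")
    return problems


def overprivileged(skills: dict[str, list[str]]) -> list[str]:
    """Return Skills that hold Edit, Bash, and Write all at once."""
    return sorted(name for name, tools in skills.items() if RISKY_COMBO <= set(tools))


if __name__ == "__main__":
    for problem in audit_word_budgets(Path(".")):
        print(problem)
```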

Five exam-pattern questions

Your team is using Claude Code on the same Next.js codebase. Each developer's session generates code with different style. Some default exports, some named; some snake_case files, some kebab-case. What is the most maintainable way to enforce one set of conventions across the team?

.claude/CLAUDE.md at the project root. Document stack, commands, and code style in 200-400 words. Commit it to version control so every team member's Claude Code session reads the same rules. When Claude generates something inconsistent, correct it AND ask Claude to save the corrected rule to CLAUDE.md (or the relevant .claude/rules/ file) so the next session inherits the fix. One source of truth, version-controlled, shared via git pull.

You're restructuring a monolith (20+ services, 100+ files) into microservices. Claude Code begins making changes immediately on the first prompt. What should you have done first, and why?

[…] Use Drizzle instead of Prisma), and approves; only then does Claude switch to Code Mode and execute. The 5-minute review cost prevents the 30+ minute rework when Claude guesses at structure.

Your repo has React components (Tailwind), API routes (zod + middleware), and database queries (Drizzle). Should Claude Code use one big tool list and one CLAUDE.md, or specialised setup?

[…] react-components (Tailwind + TS), api-routes (zod + middleware), database-queries (Drizzle patterns). Each Skill has its own system-prompt additions and an allowed-tools whitelist. Pair with .claude/rules/*.md keyed by file glob (react.md for **/*.tsx, api.md for app/api/**). The developer invokes the right Skill per task; attention stays focused on the relevant domain.

GitHub Actions runs Claude Code to review a 14-file PR. The review feedback on file 14 contradicts the decision on file 3. Why, and how do you fix it architecturally?

[…] [Read, Grep, Bash]) and run two passes. Pass 1 reviews each file in isolation against .claude/rules/; pass 2 reviews integration (cross-file consistency, API boundaries). Fresh, scoped context. No carry-over noise.

Should `.claude/CLAUDE.md` be committed to the repository, and who owns updating it?

[…] .claude/CLAUDE.local.md (gitignored) is for personal preferences that shouldn't be team-wide.

Frequently asked

Why split CLAUDE.md into .claude/rules/ instead of keeping it monolithic?

[…] .claude/rules/*.md keyed by file glob loads only the rules matching the current edit. The prompt stays focused on what's actually relevant.

What's the difference between Plan Mode and just asking Claude to read the code first?

Can I use Skills and Subagents together?

[…] code-reviewer Skill that defines the review rubric. Subagent owns the context boundary; Skill owns the domain expertise.

How small should a Skill be?

[…] api-routes-rest vs api-routes-graphql, or react-components-server vs react-components-client). Smaller Skills route more accurately and load faster.

When should I use a Subagent vs. just continuing in the current session?

What's the fastest way to discover what Skills the team has?

[…] claude skills list from the repo root. It scans .claude/skills/ (project), ~/.claude/skills/ (personal), and reports each Skill's name, description, and when_to_use. Pair with a CLAUDE.md ## Available Skills section that points to the canonical Skill set so new developers see them on day one.

Should I commit `.claude/skills/` to the repo?

[…] .claude/skills/ and are committed. They're team conventions, just like .claude/rules/. Personal experiments live in ~/.claude/skills/ (system-wide) and stay out of the repo until they're proven and ready to be promoted.