Quick answer

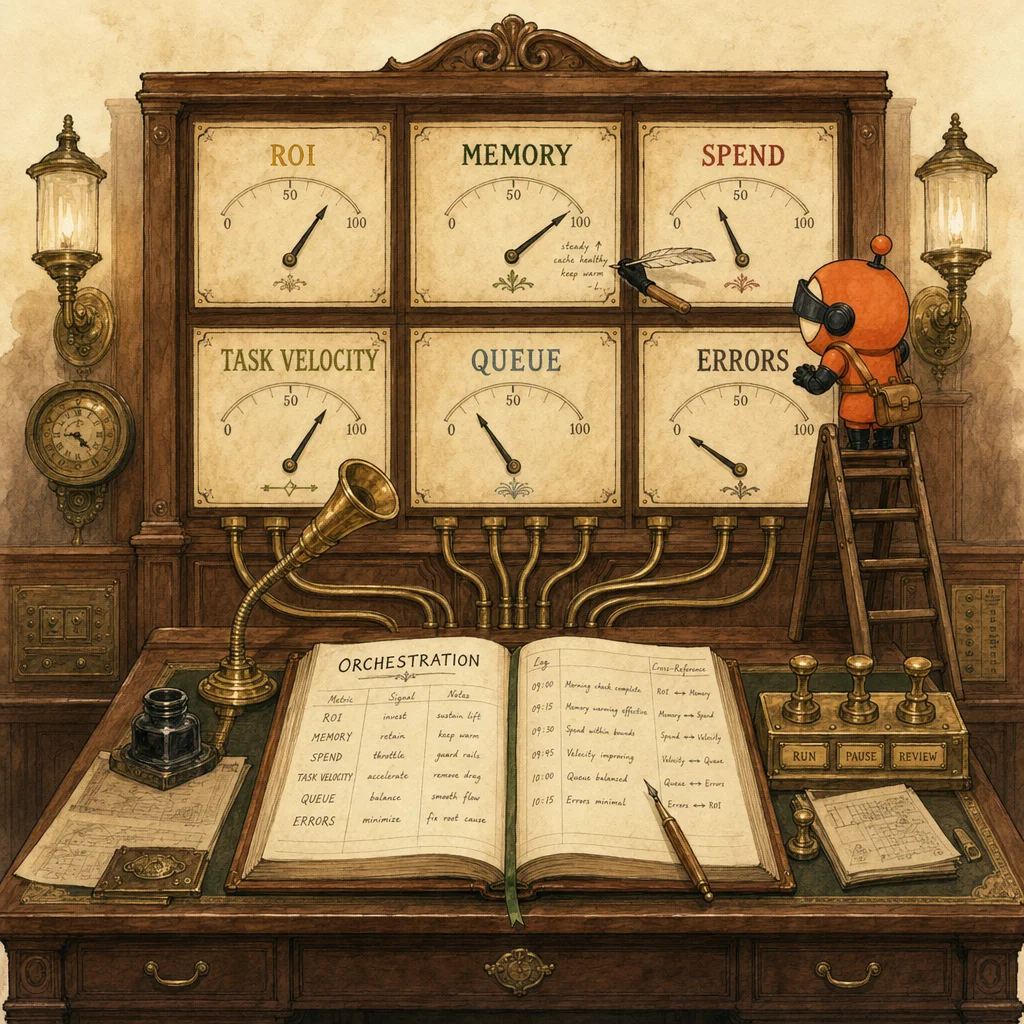

Intelligence Control Plane = the centralized observability layer over a multi-agent Claude Code deployment. One view: which agent ran, what skill it used, what it remembered, what it cost, what value it produced. Pattern is borrowed from cloud infrastructure. The proof points: 4:1 ROI, 45% lower CAC, 40-85% token reduction. Most teams don't have an AI capability problem; they have an AI observability problem. Orchestration now beats prompting.

The stat that frames the shift

Teams are reportedly shipping incremental AI-generated features for $37.50 each in model spend, not thousands in dev hours (Stormy AI, May 10).

That number is the headline because it inverts the cost model most teams still budget for. If features cost dollars, not engineer-weeks, the question stops being "can we afford to build this?" and starts being "can we measure what we built and prove it's working?"

Raw model quality isn't the hard part anymore. The Intelligence Control Plane is.

The four proof points worth tracking

- 4:1 ROI for growth teams using a centralized Claude Code framework (Stormy AI)

- 45% lower CAC from automating data pipelines and creative testing (Stormy AI)

- 40-85% lower token use from model routing and context compaction in multi-agent workflows (MorphLLM, May 11)

- A broader market shift from disconnected chat tools toward centralized visual intelligence systems (The Crunch, May 7)

These aren't vibes. They're the cost-side proof that the orchestration layer pays for itself within months, not years.
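The two levers named in the token-reduction figure, model routing and context compaction, can be sketched in a few lines. This is a minimal illustration, not MorphLLM's method: the tier names, the 500-token threshold, the four-characters-per-token heuristic, and the stub summary line are all assumptions made for the example.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic (an assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def route(task: str, needs_reasoning: bool) -> str:
    """Send short, simple tasks to the cheap tier; everything else to the large one.

    "small" and "large" are hypothetical tier labels, not real model names.
    """
    if not needs_reasoning and estimate_tokens(task) < 500:
        return "small"
    return "large"


def compact(history: list[str], keep_last: int = 3) -> list[str]:
    """Keep the most recent turns verbatim and collapse older ones into one stub line."""
    if len(history) <= keep_last:
        return list(history)
    dropped = len(history) - keep_last
    # A real system would insert an actual summary here; the stub only marks the spot.
    return [f"[summary of {dropped} earlier turns]"] + history[len(history) - keep_last:]


# Ten long turns, compacted down to a stub plus the last three.
history = [f"turn {i}: " + "x" * 400 for i in range(10)]
tokens_before = sum(estimate_tokens(t) for t in history)
tokens_after = sum(estimate_tokens(t) for t in compact(history))
reduction = 1 - tokens_after / tokens_before
print(f"routed to: {route('rename a variable', needs_reasoning=False)}")
print(f"token reduction from compaction: {reduction:.0%}")
```

In a real deployment the token counts would come from the provider's usage data rather than a character heuristic; the point is only that both levers can be measured per run.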

Why this matters

The new visual Claude Code agentic OS pattern isn't just adding a nicer interface. It's pushing a centralized visual system that lets you track skills, memory, costs, and ROI in one view.

And that's the real shift.

Most teams don't have an AI capability problem. They have an AI observability problem. They can't see which agent used what context, what it remembers, or whether the spend produced anything useful. The agents run; the dashboard doesn't roll them up.

But once disconnected pockets of intelligence are pulled into one overview, performance gets easier to tune. Cost doesn't hide. Memory doesn't reset silently. ROI isn't just vibes in a Slack thread.
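A minimal sketch of what "one overview" could mean in data terms, assuming each agent run emits a record with the fields below. The `AgentRun` shape, the field names, the sample agents, and the idea that value can be attributed per run in dollars are all assumptions for illustration, not a schema from any specific tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRun:
    """One row per agent run (hypothetical record shape)."""
    agent: str
    skill: str
    memory_keys: tuple[str, ...]  # which memory entries the run read
    cost_usd: float
    value_usd: float  # attributed value; how to attribute it is out of scope here


def roll_up(runs):
    """Aggregate runs per agent: count, cost, value, memory touched, ROI."""
    totals = defaultdict(
        lambda: {"runs": 0, "cost_usd": 0.0, "value_usd": 0.0, "memory_keys": set()}
    )
    for run in runs:
        row = totals[run.agent]
        row["runs"] += 1
        row["cost_usd"] += run.cost_usd
        row["value_usd"] += run.value_usd
        row["memory_keys"].update(run.memory_keys)

    overview = {}
    for agent, row in totals.items():
        # ROI is undefined when nothing was spent.
        roi = row["value_usd"] / row["cost_usd"] if row["cost_usd"] else None
        overview[agent] = {**row, "memory_keys": sorted(row["memory_keys"]), "roi": roi}
    return overview


runs = [
    AgentRun("researcher", "web-search", ("brief",), 1.5, 4.0),
    AgentRun("researcher", "summarize", ("brief", "sources"), 0.5, 4.0),
    AgentRun("writer", "draft-post", ("brief",), 3.0, 6.0),
]
overview = roll_up(runs)
for agent, row in overview.items():
    print(agent, row)
```

The design choice worth noting is that cost, memory, and value sit on the same record, so the rollup needs no joins across separate logs.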

Two non-obvious implications

- The bottleneck moves from prompting to orchestration. Two years ago, the winning team had the best prompts. Today the prompts are mostly good enough; the wins and losses concentrate in which agent runs when, on what context, against what cost budget, with what fallback.

- The winning teams won't just use better models — they'll measure better systems. Vendor-level model differences are shrinking; orchestration-level architecture differences are widening. The leverage is at the higher layer.
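The orchestration decisions listed above (which agent runs when, on what context, against what cost budget, with what fallback) can be made explicit in code. The sketch below is one possible shape under stated assumptions: `Step`, `orchestrate`, the per-step cost estimates, and the rule that a primary attempt is charged even if it fails are all hypothetical choices for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One stage of a pipeline (hypothetical shape)."""
    name: str
    run: Callable[[str], str]       # primary agent
    fallback: Callable[[str], str]  # cheaper alternative, assumed not to fail
    cost_usd: float                 # estimated cost of the primary
    fallback_cost_usd: float


def orchestrate(steps, context, budget_usd):
    """Run steps in order, using the fallback when the budget or the primary fails."""
    spent = 0.0
    trace = []
    for step in steps:
        if spent + step.cost_usd <= budget_usd:
            spent += step.cost_usd  # assumption: an attempt is charged even if it fails
            try:
                context = step.run(context)
                trace.append((step.name, "primary"))
                continue
            except RuntimeError:
                pass  # fall through to the fallback
        # Simplification: the fallback is not budget-checked.
        spent += step.fallback_cost_usd
        context = step.fallback(context)
        trace.append((step.name, "fallback"))
    return context, spent, trace


def flaky_review(context: str) -> str:
    raise RuntimeError("agent failed")


steps = [
    Step("draft", lambda c: c + " draft", lambda c: c + " draft-lite", 0.5, 0.125),
    Step("review", flaky_review, lambda c: c + " review-lite", 0.25, 0.125),
    Step("polish", lambda c: c + " polish", lambda c: c + " polish-lite", 0.5, 0.125),
]
result, spent, trace = orchestrate(steps, "spec", budget_usd=1.0)
print(result, spent, trace)
```

The returned trace is the part a control plane would ingest: it records, per step, which path actually ran and what it cost.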

In other words: the valuable screen may not be the model output. It's the one that shows what every agent is doing, remembering, and costing.

What's been harder in your stack so far?

The honest answer most CTOs give privately: proving ROI, tracking memory, or seeing where model spend actually goes — usually all three at once. The teams that solve this layer first earn the 4:1 ROI. The teams that don't will hit a ceiling at one or two agents and stall there for a year.

How this shows up on the CCA-F exam

D1 (Agentic Architecture, 27% of the exam) tests orchestration as a first-class architectural layer. The classic distractor: a question describes an agent that produces inconsistent results, and the trap answers are "use a better model" or "improve the prompt." The correct answer is almost always an architectural change at the orchestration layer — explicit subagent boundaries, structured handoff via case-facts blocks, policy hooks before destructive actions, eval gates between stages. The Intelligence Control Plane is the management-tier name for that architecture; the exam tests the engineering-tier components.
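Two of those components, a policy hook before destructive actions and an eval gate between stages, can be sketched as plain functions. This is illustrative Python only, not Claude Code's hook configuration: the `DESTRUCTIVE_PREFIXES` list, the function names, and the check names are assumptions.

```python
# Hypothetical deny-list; a real policy would be far more careful than prefix matching.
DESTRUCTIVE_PREFIXES = ("rm ", "drop table", "git push --force")


def policy_hook(action: str) -> bool:
    """Return True if the action may proceed, False if it looks destructive."""
    return not action.strip().lower().startswith(DESTRUCTIVE_PREFIXES)


def eval_gate(output: str, checks) -> list[str]:
    """Return the names of the checks that the output fails."""
    return [name for name, check in checks.items() if not check(output)]


def run_stage(action, execute, checks):
    """Hook first, then execute, then gate the output before handing it on."""
    if not policy_hook(action):
        return {"status": "blocked", "action": action}
    output = execute(action)
    failures = eval_gate(output, checks)
    if failures:
        return {"status": "rejected", "failed_checks": failures}
    return {"status": "passed", "output": output}


checks = {
    "non_empty": lambda out: bool(out.strip()),
    "no_todo": lambda out: "TODO" not in out,
}
blocked = run_stage("rm -rf build/", lambda a: "done", checks)
rejected = run_stage("generate report", lambda a: "TODO: fill in", checks)
passed = run_stage("generate report", lambda a: "Report ready", checks)
print(blocked, rejected, passed, sep="\n")
```

Note that neither a better model nor a better prompt appears anywhere in the fix; the consistency comes from the boundaries around the agent.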

D3 (Claude Code Configuration) tests the same pattern at the configuration layer. The CLAUDE.md hierarchy is the schema the control plane reads. Skills are the capability units the control plane meters. Plan mode is the explicit-orchestration discipline the control plane requires. Questions in this domain that ask "where does X belong" frequently have correct answers at the configuration or hierarchy layer, not at the model or prompt layer. Internalize that the visible artifacts (CLAUDE.md, Skills frontmatter, slash commands) are what make the agent observable.
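To make "Skills are the capability units the control plane meters" concrete, here is a small sketch that reads the frontmatter of a skill file into a dictionary a dashboard could index. It assumes the file opens with a `---`-delimited block of simple `key: value` lines; the parser handles only that flat case, and the sample skill name and description are invented for the example.

```python
def read_frontmatter(text: str) -> dict[str, str]:
    """Parse a leading '---' block of flat 'key: value' lines.

    Returns an empty dict if the block is missing or never closed.
    Nested YAML, lists, and quoting are deliberately not handled.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        if sep and key.strip():
            fields[key.strip()] = value.strip()
    return {}  # no closing delimiter found


# Hypothetical skill file contents.
SKILL_TEXT = """---
name: release-notes
description: Draft release notes from merged pull requests
---
Body of the skill instructions.
"""

skill = read_frontmatter(SKILL_TEXT)
print(skill)
```

Once every skill resolves to a named record like this, usage and cost can be keyed to the skill name rather than inferred from prompts.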

The buy-vs-build call

For most teams in 2026: buy the dashboards, build the orchestration. Dashboards are commodity now — pick a tool that already rolls up agent runs, costs, and memory state. Orchestration logic — which agent runs when, on what context, with what fallback — is your differentiator and has to be authored.

The mistake most teams make is the inverse: they build dashboards from scratch and outsource orchestration to whatever the vendor's default flow is. Wrong way around. The dashboards are off-the-shelf. The control plane logic is the moat.

Where this lands in the exam-prep map

Each blog post bridges into the evergreen pillars. These are the most relevant follow-ups for this story.

Concept

Evaluation

The Intelligence Control Plane is evaluation infrastructure: continuous measurement of every agent run rather than per-launch benchmarks.

Concept

Skills

Tracking skill usage and cost per skill is the gauge that turns skill libraries from craft into managed capability.

Concept

CLAUDE.md hierarchy

Centralized memory observation requires structured memory at the source — the hierarchy is the schema the control plane reads.

Scenario

Agent skills for developer tooling

Centralizing Claude Code into a control plane is the production shape of this scenario family; observability is what the scenario actually rewards.

7 questions answered

What is the Intelligence Control Plane?

Why is observability harder than capability?

What numbers actually back this pattern?

What does 'orchestration beats prompting' actually mean?

How does this map to the CCA-F?

Is this just better tooling, or a real architectural shift?

Should I build this myself or buy?

Synthesized from research output on 2026-05-12.

Last reviewed 2026-05-12.