
TLDR

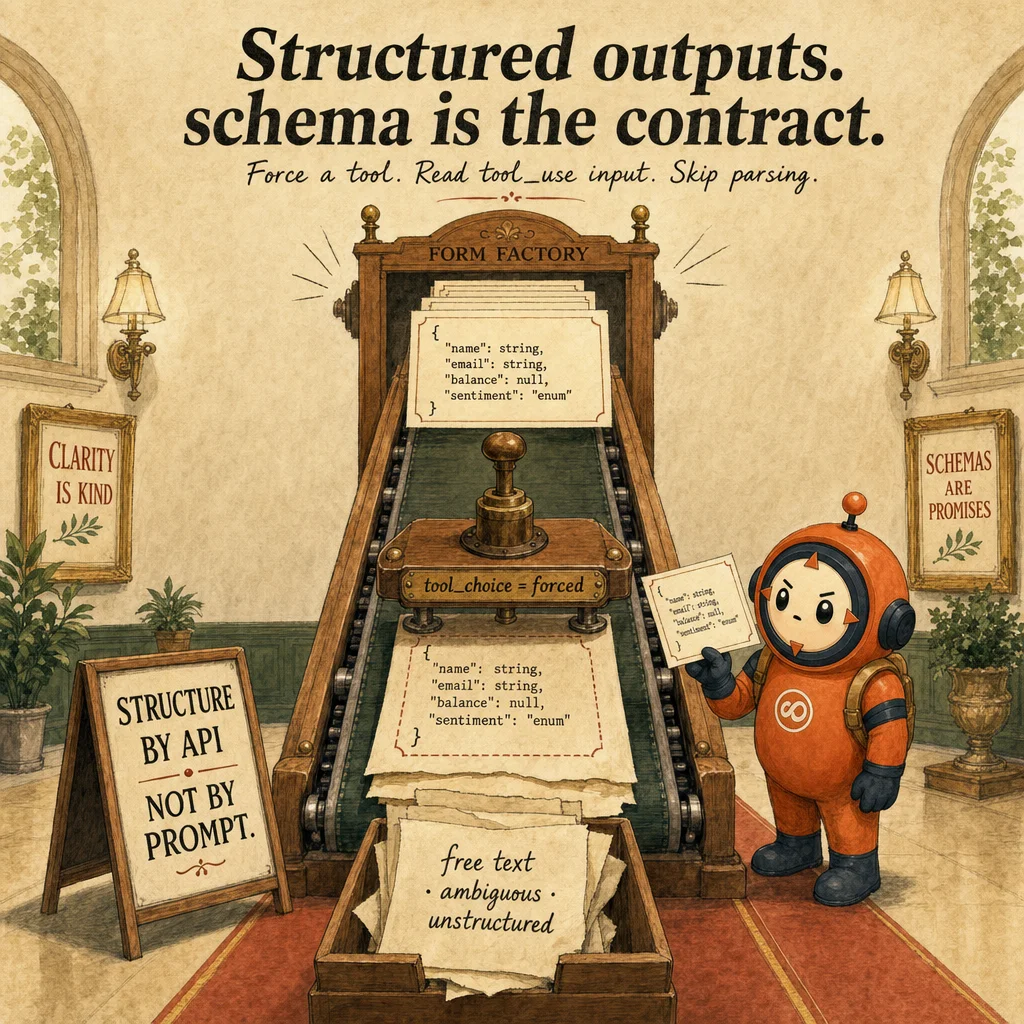

Structured outputs guarantee that Claude returns JSON matching a schema instead of natural language. Force a tool call with a JSON schema so that the API, not your parser, enforces structure. Schema design (nullable fields, 'unclear' enum values) prevents fabrication.

What it is

Structured output is a deterministic contract between the model and your application: Claude returns data in a specific JSON shape, guaranteed to match a schema you define. Without structured output, Claude returns prose wrapped in whatever Markdown it picks: sometimes with explanatory text, sometimes with code blocks, sometimes with tangents. With structured output, you pass a JSON schema and Claude returns valid JSON matching it, or the request fails. Pattern: User → Prompt + Schema → Claude → Valid JSON.
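To make the parsing burden concrete, here is a minimal sketch of the best-effort parser that prompt-only pipelines tend to accumulate. `parse_prose_reply` is a hypothetical helper. Each branch is a guess about formatting that the model is free to change from one reply to the next.

```python
import json
import re

FENCE = "`" * 3  # a Markdown code fence, built here so the example stays readable
FENCED_JSON = re.compile(FENCE + r"(?:json)?\s*(\{.*?\})\s*" + FENCE, re.DOTALL)


def parse_prose_reply(reply: str) -> dict:
    """Best-effort JSON recovery from a free-text reply (hypothetical helper)."""
    try:
        return json.loads(reply)  # works only if the reply is bare JSON
    except json.JSONDecodeError:
        pass
    # Common case: the JSON sits inside a Markdown code fence after some prose.
    match = FENCED_JSON.search(reply)
    if match:
        return json.loads(match.group(1))
    # Prose with no JSON at all: nothing to recover.
    raise ValueError("no JSON object found in reply")
```

Structured output removes this whole function. The reply is already a parsed object, so there is no wrapper to guess at.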

The power of structured output is that it shifts the burden from output parsing (your problem) to token generation (Claude's problem). You don't extract fields from messy prose, and you don't reconstruct structure after the fact. Instead, you pass a schema (a tool definition plus a forced tool_choice, or a JSON-output mode where one exists), and Claude returns data in that shape. Most of the work is schema design: bad schemas cause fabrication (e.g., inventing a refund reason when the contract is silent).

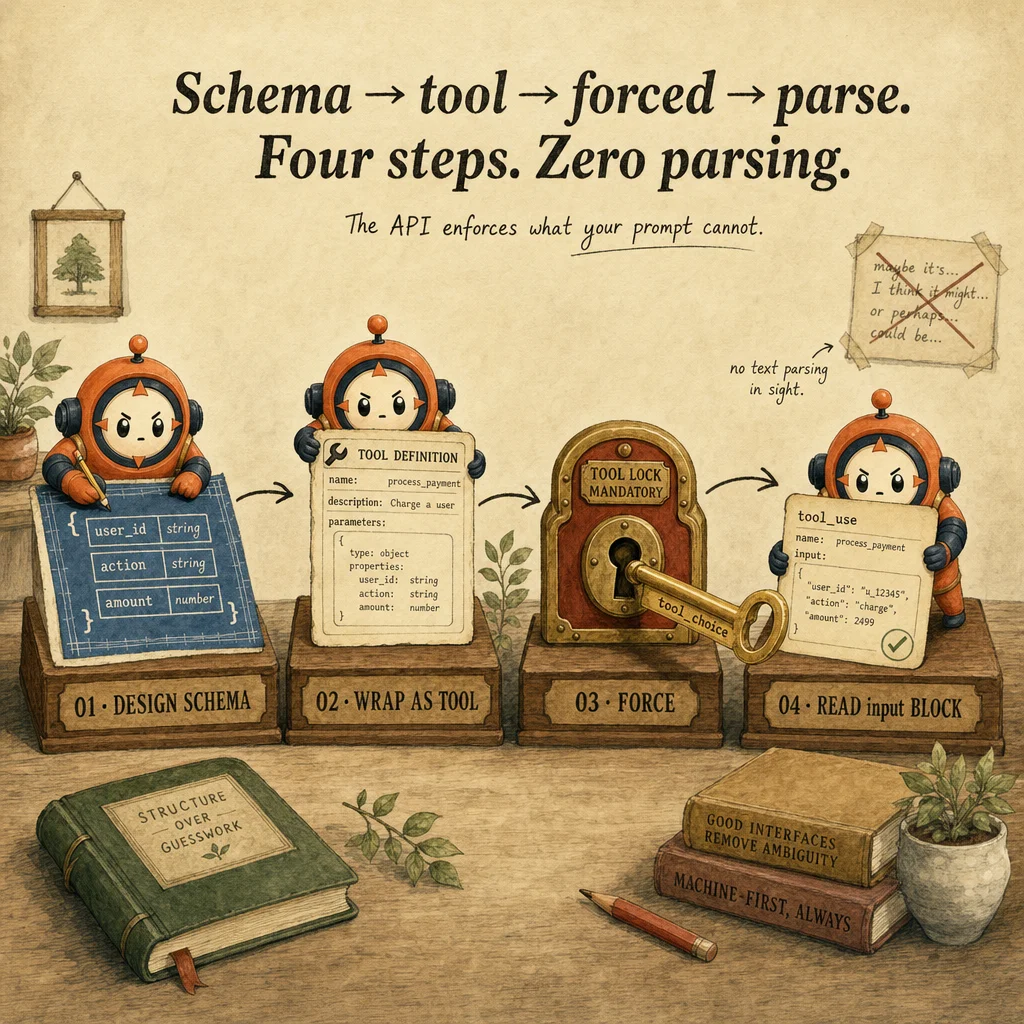

Three patterns exist. (1) Tool use: define a pseudo-tool (e.g. extract_fields) whose input_schema is your schema, and Claude returns its answer as that tool's arguments. (2) Forced tool_choice: the same tool, plus tool_choice {type: "tool", name: "extract_fields"}, so Claude must call it. (3) A dedicated JSON-output mode, where the API or platform offers one, which returns only JSON. Tool use is the recommended route: it's transparent, supports retries, and integrates naturally with agentic loops.
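Patterns (1) and (2) differ only in the `tool_choice` field. The following minimal sketch builds the keyword arguments you would pass to `client.messages.create`. The helper name, the tool name `extract_fields`, and the model id are illustrative assumptions. Pattern (3) is provider-specific and is not shown.

```python
def build_extraction_request(schema: dict, text: str, force: bool = True) -> dict:
    """Return kwargs for client.messages.create (sketch; model id is an assumption)."""
    tool = {
        "name": "extract_fields",  # hypothetical pseudo-tool name
        "description": "Record the fields extracted from the document.",
        "input_schema": schema,    # your JSON Schema becomes the tool's input
    }
    request = {
        "model": "claude-opus-4-5",
        "max_tokens": 1024,
        "tools": [tool],
        "messages": [{"role": "user", "content": f"Extract:\n{text}"}],
    }
    if force:
        # Pattern (2): name the tool so Claude must call it.
        request["tool_choice"] = {"type": "tool", "name": tool["name"]}
    else:
        # Pattern (1) alone: Claude decides whether to call the tool.
        request["tool_choice"] = {"type": "auto"}
    return request
```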

The validation-retry loop is the structural answer to schema violations and fabrication. When Claude returns invalid JSON or a fabricated value, you don't blame the model. You send the original document, the invalid extraction, and the specific error back to Claude and ask it to correct them. The loop is typically capped at 2-3 attempts, and each retry removes roughly 70-80% of the remaining errors. Without the loop, you silently accept garbage.
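Here is a sketch of the feedback message for one retry. It assumes a plain-text user turn that carries all three ingredients: the document, the failed extraction, and the specific error. `build_correction_turn` is a hypothetical helper. When the extraction arrived as a tool call, the same text can go inside a `tool_result` block instead.

```python
import json


def build_correction_turn(document: str, failed: dict, error: str) -> dict:
    """Assemble the user turn for one retry (hypothetical helper, plain-text variant)."""
    return {
        "role": "user",
        "content": (
            "Your previous extraction failed validation.\n\n"
            f"Original document:\n{document}\n\n"
            f"Your extraction:\n{json.dumps(failed, indent=2, sort_keys=True)}\n\n"
            f"Error: {error}\n"
            "Return a corrected extraction. Use null for fields the document does not state."
        ),
    }
```

The error string should be specific (which field, what was wrong, what is expected). A bare "invalid" gives Claude nothing to act on.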

How it works

The tool_use + schema pattern uses Claude's native tool calling. You define a tool whose input_schema is a JSON Schema: {type: "object", properties: {...}, required: [...]}. Claude sees the tool, reads the schema, and calls it with arguments shaped to match. Your code receives them as an already-parsed tool_use block. How hard the guarantee is depends on the API. With constrained decoding (a strict schema mode, where offered), token generation can only produce schema-valid JSON. Without it, conformance is very high but not absolute, and nothing regenerates invalid output for you, so check the input in your harness.
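Whatever the API promises about structure, a cheap check in the harness costs little. It catches missing keys or wrong types before they reach downstream code. The following minimal sketch covers only `required` and top-level `type`, including unions like `["string", "null"]`. It is not a full JSON Schema validator; a library such as `jsonschema` is the usual production choice. `check_input` is a hypothetical name.

```python
JSON_TYPES = {
    "object": dict,
    "array": list,
    "string": str,
    "number": (int, float),
    "integer": int,
    "boolean": bool,
    "null": type(None),
}


def _matches(value, type_spec) -> bool:
    """True if value matches a JSON Schema type or list of types."""
    types = type_spec if isinstance(type_spec, list) else [type_spec]
    for t in types:
        # bool is a subclass of int in Python; don't let True count as a number.
        if isinstance(value, bool) and t in ("number", "integer"):
            continue
        if isinstance(value, JSON_TYPES[t]):
            return True
    return False


def check_input(data: dict, schema: dict) -> list[str]:
    """Check required keys and top-level property types (a subset of JSON Schema)."""
    errors = [f"missing required field '{k}'" for k in schema.get("required", []) if k not in data]
    for key, prop in schema.get("properties", {}).items():
        if key in data and "type" in prop and not _matches(data[key], prop["type"]):
            errors.append(f"field '{key}' has wrong type: {data[key]!r}")
    return errors
```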

Forcing tool_choice adds determinism by removing the choice. Instead of asking "should I call this tool?", you assert "you MUST." The request sets tool_choice: {type: "tool", name: "extract_fields"}. Claude skips deciding whether to call the tool and emits the tool_use block directly, with no text preamble, so the tool is guaranteed to fire. Use a forced choice for extraction pipelines where the tool is always the goal. Use tool_choice {type: "auto"} when the model should decide.
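The choice reduces to a small rule. The following sketch uses a hypothetical helper, `pick_tool_choice`. It also encodes one API constraint: with extended thinking enabled, only `auto` (or `none`) is accepted, so a forced choice has to be dropped. `{"type": "any"}` sits in between the two: some tool must be called, but Claude picks which.

```python
def pick_tool_choice(tool_name: str, always_extract: bool, thinking: bool) -> dict:
    """Choose a tool_choice value for an extraction call (sketch)."""
    if thinking:
        # Extended thinking supports only "auto" (or "none"); forcing a specific
        # tool or using "any" is rejected by the API.
        return {"type": "auto"}
    if always_extract:
        # Forced: Claude must call this exact tool, with no text preamble.
        return {"type": "tool", "name": tool_name}
    # Let the model decide whether a tool call is needed at all.
    return {"type": "auto"}
```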

The validation-retry loop structure: (1) Send user input + document + extraction schema. (2) Claude extracts and calls the tool with JSON. (3) Validate the JSON against the schema AND check for fabrication (required fields that are null, generic strings when the source is specific). (4) If valid: done. (5) If invalid: send back the original + failed extraction + specific error ("field 'refund_amount' should be null when document is silent, not '0.00'"). (6) Claude retries with feedback in context.
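Steps (1)-(6) map onto a short loop. In the following offline sketch, `call_model` stands in for the API call (a hypothetical wrapper that returns the tool input), and `validate` returns an error string or `None`. Turns are plain text so the loop can be exercised without a network. The API version with `tool_result` blocks appears in the code examples.

```python
from typing import Callable, Optional

CallModel = Callable[[list], dict]          # messages -> extracted fields
Validate = Callable[[dict], Optional[str]]  # fields -> error message or None


def validation_retry(document: str, call_model: CallModel, validate: Validate,
                     max_attempts: int = 3) -> dict:
    # (1) Send the document with the extraction request.
    messages = [{"role": "user", "content": f"Extract:\n{document}"}]
    error: Optional[str] = None
    for _ in range(max_attempts):
        data = call_model(messages)      # (2) Claude extracts via the tool
        error = validate(data)           # (3) schema + fabrication checks
        if error is None:
            return data                  # (4) valid: done
        # (5) Send back the failed extraction and the specific error;
        # the original document is already in the first turn of the context.
        messages.append({"role": "assistant", "content": f"Extraction: {data!r}"})
        messages.append({"role": "user", "content": f"Validation error: {error}. Fix and resubmit."})
        # (6) The next iteration retries with this feedback in context.
    raise RuntimeError(f"still invalid after {max_attempts} attempts: {error}")
```

Raising after the cap, rather than returning the last attempt, keeps the "silently accept garbage" failure mode out of the pipeline.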

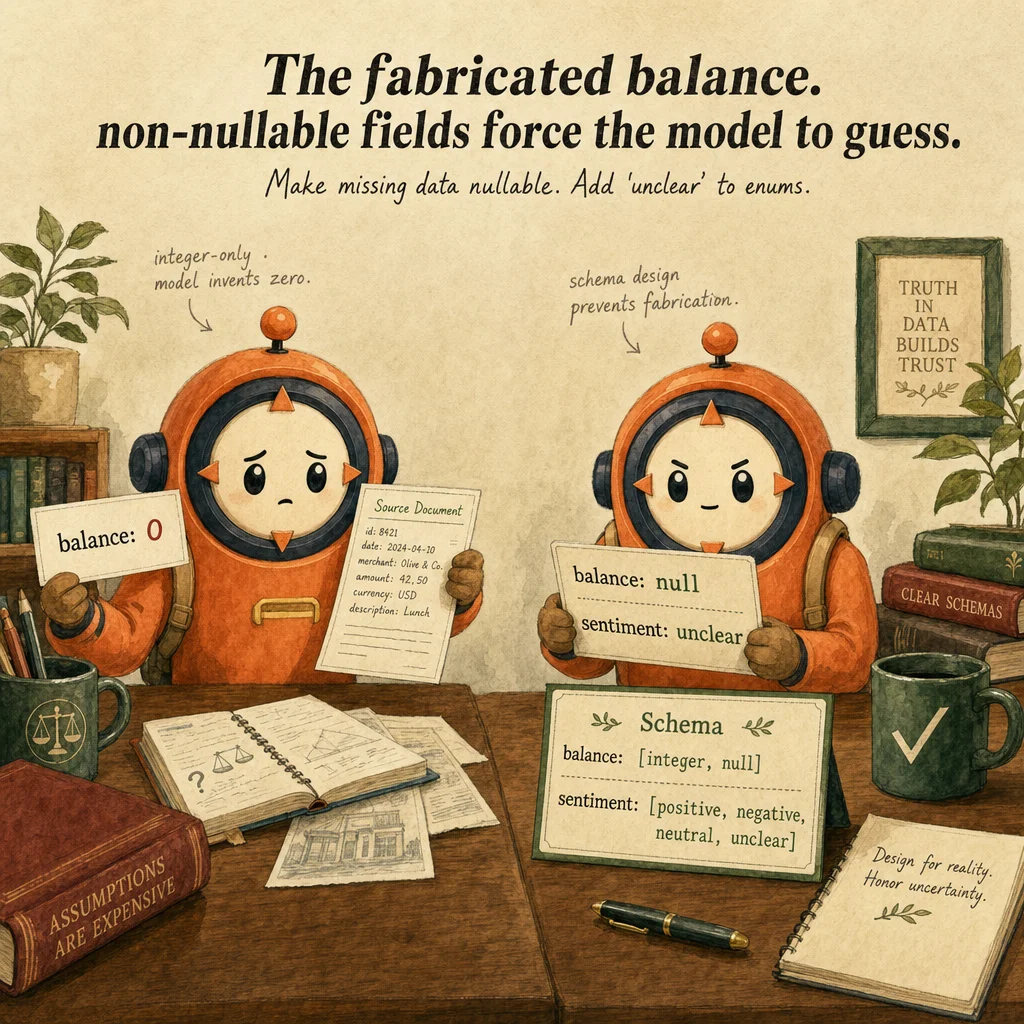

Fabrication happens when the schema doesn't protect against it. Example: {refund_reason: {type: "string"}} without a nullable field or enum. Claude sees a required string and fills it with something plausible, even if the contract never states a reason. The fix: {refund_reason: {type: ["string", "null"]}, confidence: {type: "number"}} and instruct Claude: "set null if silent." Nullable fields + escape hatches (enum with "unclear") are the schema anti-fabrication pattern.
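Here is a sketch of the anti-fabrication pattern as a complete input_schema. The `refund_type` field and the 0-1 bounds on `confidence` are illustrative assumptions, and `enum_violations` is a hypothetical harness check. Note the design choice: `refund_reason` stays in `required` but accepts `null`. Claude must address the field explicitly, and the honest answer for a silent contract is `null` rather than an invented reason.

```python
REFUND_SCHEMA = {
    "type": "object",
    "properties": {
        # Nullable: Claude can return null instead of inventing a reason.
        "refund_reason": {"type": ["string", "null"]},
        # Escape hatch: "unclear" is a legal, honest answer.
        "refund_type": {"type": "string", "enum": ["refund", "replacement", "unclear"]},
        # Assumed 0-1 scale so low-confidence answers can be routed for review.
        "confidence": {"type": "number", "minimum": 0, "maximum": 1},
    },
    "required": ["refund_reason", "refund_type", "confidence"],
}


def enum_violations(data: dict, schema: dict) -> list[str]:
    """List fields whose value falls outside the schema's enum (harness-side check)."""
    out = []
    for key, prop in schema["properties"].items():
        if "enum" in prop and key in data and data[key] not in prop["enum"]:
            out.append(f"{key}={data[key]!r} not in {prop['enum']}")
    return out
```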

Where you'll see it

Healthcare intake form extraction

Patient intake notes flow through tool_use with a JSON schema (patient_id, dob, complaint, meds[], allergies[]). Validation-retry: invalid date format → Claude sees the error → fixes input → resubmits. Error rate drops from 8% (prompt-only) to 0.3% with the schema + retry.

Refund authorization with policy hooks

Customer service agent extracts refund_amount, customer_id, and reason via structured output. A PreToolUse hook on the refund tool validates the call: is the amount within policy? Is the customer eligible? The hook blocks out-of-policy refunds before they execute. Compliance reaches 100% on the rules the hook checks; prompt-only achieves ~70%.
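The following sketch shows the policy check a hook would run before the refund call executes. In Claude Code's hook model, a gate that runs before execution is a PreToolUse hook. The limit, the eligibility set, and `check_refund` are all hypothetical. Wiring it into a real hook (reading the event, returning a block decision) depends on your framework and is omitted.

```python
from typing import Optional

MAX_REFUND = 500.00                  # hypothetical policy limit
ELIGIBLE_CUSTOMERS = {"cus_abc123"}  # hypothetical eligibility list


def check_refund(tool_input: dict) -> Optional[str]:
    """Return a reason to block the refund call, or None if it is within policy."""
    amount = tool_input.get("refund_amount")
    # Reject strings like "0.00" and booleans; the amount must be a real number.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        return f"refund_amount {amount!r} is not a number"
    if amount <= 0 or amount > MAX_REFUND:
        return f"refund_amount {amount} outside policy range (0, {MAX_REFUND}]"
    if tool_input.get("customer_id") not in ELIGIBLE_CUSTOMERS:
        return f"customer {tool_input.get('customer_id')!r} is not eligible"
    return None
```

Because the check is ordinary code, it applies every time, which is why a hook can reach full compliance on the rules it encodes where prompt instructions cannot.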

Research claim extraction with provenance

Literature review returns JSON: [{claim, evidence_tier, sources: [{title, url, date}]}]. Schema requires every claim to have ≥1 source. PreToolUse checks tier label; PostToolUse deduplicates. Output has a consistent, audit-ready structure for clinicians.
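The following sketch expresses that schema as a tool input_schema, plus the deduplication step a PostToolUse hook might run. Several details are assumptions: the list is wrapped under a `claims` key because tool inputs are JSON objects; `date` is nullable; and "duplicate" means the same claim text after lowercasing and collapsing whitespace. `dedupe_claims` is a hypothetical helper.

```python
CLAIMS_SCHEMA = {
    "type": "object",
    "properties": {
        "claims": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "claim": {"type": "string"},
                    "evidence_tier": {"type": "string"},
                    "sources": {
                        "type": "array",
                        "minItems": 1,  # every claim needs at least one source
                        "items": {
                            "type": "object",
                            "properties": {
                                "title": {"type": "string"},
                                "url": {"type": "string"},
                                "date": {"type": ["string", "null"]},
                            },
                            "required": ["title", "url"],
                        },
                    },
                },
                "required": ["claim", "evidence_tier", "sources"],
            },
        }
    },
    "required": ["claims"],
}


def dedupe_claims(claims: list[dict]) -> list[dict]:
    """Merge claims with the same normalized text, unioning their sources by URL."""
    merged: dict[str, dict] = {}
    for c in claims:
        key = " ".join(c["claim"].lower().split())
        if key not in merged:
            merged[key] = {**c, "sources": list(c["sources"])}  # copy; don't mutate input
            continue
        seen = {s["url"] for s in merged[key]["sources"]}
        for s in c["sources"]:
            if s["url"] not in seen:
                merged[key]["sources"].append(s)
                seen.add(s["url"])
    return list(merged.values())
```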

Code examples

```python
from datetime import datetime

from anthropic import Anthropic

client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment

extract_intake = {
    "name": "save_patient_intake",
    "description": "Save structured patient intake data with field validation.",
    "input_schema": {
        "type": "object",
        "properties": {
            "patient_id": {"type": "string"},
            "dob": {"type": "string", "format": "date"},
            "complaint": {"type": "string"},
            "medications": {"type": "array", "items": {"type": "string"}},
            "allergies": {"type": "array", "items": {"type": "string"}},
            "balance": {"type": ["number", "null"]},  # nullable prevents fabrication
        },
        "required": ["patient_id", "dob", "complaint"],
    },
}


def extract(intake_text: str) -> dict:
    resp = client.messages.create(
        model="claude-opus-4-5",
        max_tokens=1024,
        tools=[extract_intake],
        # Force the tool: the reply is a tool_use block, not free text
        tool_choice={"type": "tool", "name": "save_patient_intake"},
        messages=[{"role": "user", "content": f"Extract:\n{intake_text}"}],
    )
    if resp.stop_reason != "tool_use":
        # e.g. "max_tokens" if the output was cut off mid-call
        raise ValueError(f"expected tool_use, got {resp.stop_reason}")
    tool_block = next(b for b in resp.content if b.type == "tool_use")
    return tool_block.input  # already a dict, no JSON parsing needed


def extract_with_retry(text: str, max_attempts: int = 3) -> dict:
    messages = [{"role": "user", "content": f"Extract intake:\n{text}"}]
    error = None
    for _ in range(max_attempts):
        resp = client.messages.create(
            model="claude-opus-4-5",
            max_tokens=1024,
            tools=[extract_intake],
            tool_choice={"type": "tool", "name": "save_patient_intake"},
            messages=messages,
        )
        tool_block = next(b for b in resp.content if b.type == "tool_use")
        data = tool_block.input
        # The schema constrains SHAPE; we still need to validate semantics.
        error = validate_semantically(data)
        if error is None:
            return data
        # Feed the specific error back so Claude can fix it on the next attempt
        messages.append({"role": "assistant", "content": resp.content})
        messages.append({
            "role": "user",
            "content": [{
                "type": "tool_result",
                "tool_use_id": tool_block.id,
                "content": f"Validation error: {error}. Fix and resubmit.",
                "is_error": True,
            }],
        })
    raise RuntimeError(f"failed after {max_attempts} attempts: {error}")


def validate_semantically(data: dict) -> str | None:
    dob = data.get("dob")
    try:
        datetime.strptime(dob, "%Y-%m-%d")
    except (TypeError, ValueError):  # TypeError if dob is missing or not a string
        return f"dob {dob!r} is not in YYYY-MM-DD format"
    complaint = data.get("complaint")
    if not isinstance(complaint, str) or not complaint.strip():
        return "complaint is missing or empty"
    return None
```

Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

Just write 'output JSON' in the prompt, Claude is good enough.

Prompt-only JSON output is ~85% reliable. The model occasionally inserts narrative ('Sure, here is the JSON: ...') or omits fields. Forced tool_use with a schema returns the arguments as a parsed tool_use block, so there is no narrative to strip and fields follow the schema.

Forced tool_use guarantees the output is correct.

Forced tool_use guarantees STRUCTURE, not CONTENT correctness. The model can still emit semantically wrong values. Add validation-retry for semantic checks.

Use non-nullable fields so missing data is treated as an error.

Non-nullable forces the model to fabricate when data is missing. Mark optional fields as nullable ([type, 'null']); add an 'unclear' enum value. Honest non-answers beat invented ones.

Side-by-side

| Approach | Reliability | Audit | Best for |
|---|---|---|---|
| Prompt-only ('output JSON') | ~85% | Weak (free text) | Casual extraction; non-critical |
| tool_use + schema (forced) | ~99% structure | Good (logged input) | Most extraction tasks |
| + validation-retry | ~99%+ structure and semantics | Excellent (retry trail) | Healthcare, finance, legal |
| + PreToolUse policy hook | 100% on the rules the hook encodes | Audit-grade (pre-execution gate) | Compliance-critical workflows |

Decision tree

Do you need guaranteed JSON structure?

Do extraction errors have business consequences?

Is this a compliance-critical workflow (refund, medical, legal)?
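One reading of these three questions takes the branches from the rows of the side-by-side table. In that reading, each "yes" stacks another layer, and the policy layer is a hook that gates the call before it executes. The following sketch uses `recommend_layers`, a hypothetical helper.

```python
def recommend_layers(needs_structure: bool, errors_costly: bool,
                     compliance_critical: bool) -> list[str]:
    """Walk the three questions in order and return the layers to stack (sketch)."""
    if not needs_structure:
        # "No" to the first question ends the walk: casual, non-critical use.
        return ["prompt-only"]
    layers = ["forced tool_use + schema"]
    if errors_costly or compliance_critical:
        layers.append("validation-retry")
    if compliance_critical:
        layers.append("PreToolUse policy hook")
    return layers
```

If extraction errors have business consequences, the answer to the first question is effectively "yes" as well.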

Question patterns

You prompt Claude for JSON and 15% of responses include explanatory text. How do you fix this for production?

tool_use with a JSON schema, and tool_choice forcing that tool. The arguments come back as a parsed tool_use block, so there is no explanatory text to strip. Prompt-only approaches are probabilistic.

Your schema has `{refund_reason: {type: "string"}}`. Claude returns `"reason: unable to determine"` when the contract is silent. What's wrong?

The field is a non-nullable string, so Claude has to put something in it. Make it nullable: {refund_reason: {type: ["string", "null"]}}. Or add an enum: ["refund", "replacement", "unclear"]. Give Claude a way to say "I don't know."

A validation-retry loop runs 5 times and still fails. What's the next step?

You force `tool_choice: forced` for extraction. Sometimes Claude returns `tool_use` with empty input. Why?

Your extraction tool returns confidence: 0.4 for a critical field. What should production do?

You use `tool_choice: forced` with extended_thinking. The API returns 400. Why?

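Extended thinking only supports tool_choice auto (or none); forcing a specific tool or using any tool_choice returns an error. Use auto when thinking is on. If extraction must be guaranteed, drop thinking; if reasoning matters more, accept that Claude may answer in text instead of calling the tool.

Schema validation passes but the extracted `customer_id` is `"customer_001"` when the document says `"cus_abc123"`. What's the gap?

The schema checks type, not value, so any string passes. Constrain the format with `"pattern": "^cus_[a-z0-9]+$"`, or validate semantically in your harness and trigger validation-retry on mismatch.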
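The answer implies two checks, sketched below with `check_customer_id` as a hypothetical harness function. The regex enforces what a schema `pattern` would. The grounding check covers what no schema can: a well-formed ID that does not appear in the source. A non-`None` return would trigger the validation-retry loop.

```python
import re
from typing import Optional

CUSTOMER_ID = re.compile(r"^cus_[a-z0-9]+$")  # same format as the pattern above


def check_customer_id(value: str, document: str) -> Optional[str]:
    """Format check (what a schema pattern catches) plus a grounding check (what it can't)."""
    if not CUSTOMER_ID.fullmatch(value):
        return f"customer_id {value!r} does not match {CUSTOMER_ID.pattern}"
    # A well-formed ID can still be invented: require it as a whole token in the source.
    if not re.search(rf"\b{re.escape(value)}\b", document):
        return f"customer_id {value!r} not found in the document"
    return None
```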