On this page

TLDR

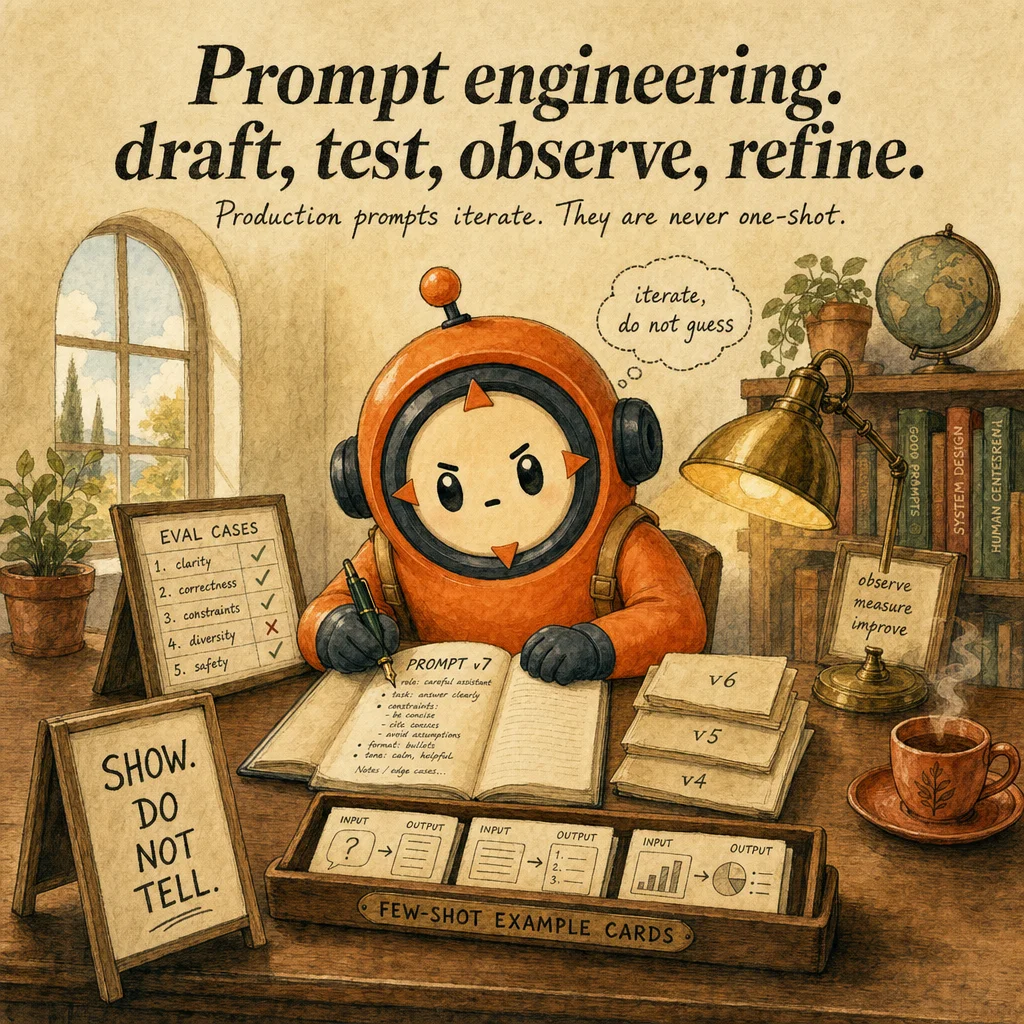

Prompt engineering is a craft of seven techniques that turn a brittle one-shot prompt into a production-grade contract: few-shot examples, iterative refinement against an eval suite, anti-fabrication schemas, structured templates, explicit do-don't constraints, output anchoring via tools, and test-driven prompt edits. The exam trap is treating any one technique as 'the answer'; production prompts compose all seven. Anthropic best-practice

What it is

Prompt engineering is the discipline of shaping Claude's output by writing a prompt, measuring it against test cases, observing where it fails, and tightening the prompt until the eval score plateaus. It is not writing one clever sentence. A production prompt converges over 5-15 iterations against a frozen suite of 20-50 cases, of which 3-5 are known-failure cases that anchor the regression boundary. Per ASC-A01 Course 11 §16, prompt v1 scoring 7.66 climbs to 8.7 in v2 only because the eval gave a quantitative delta to chase.

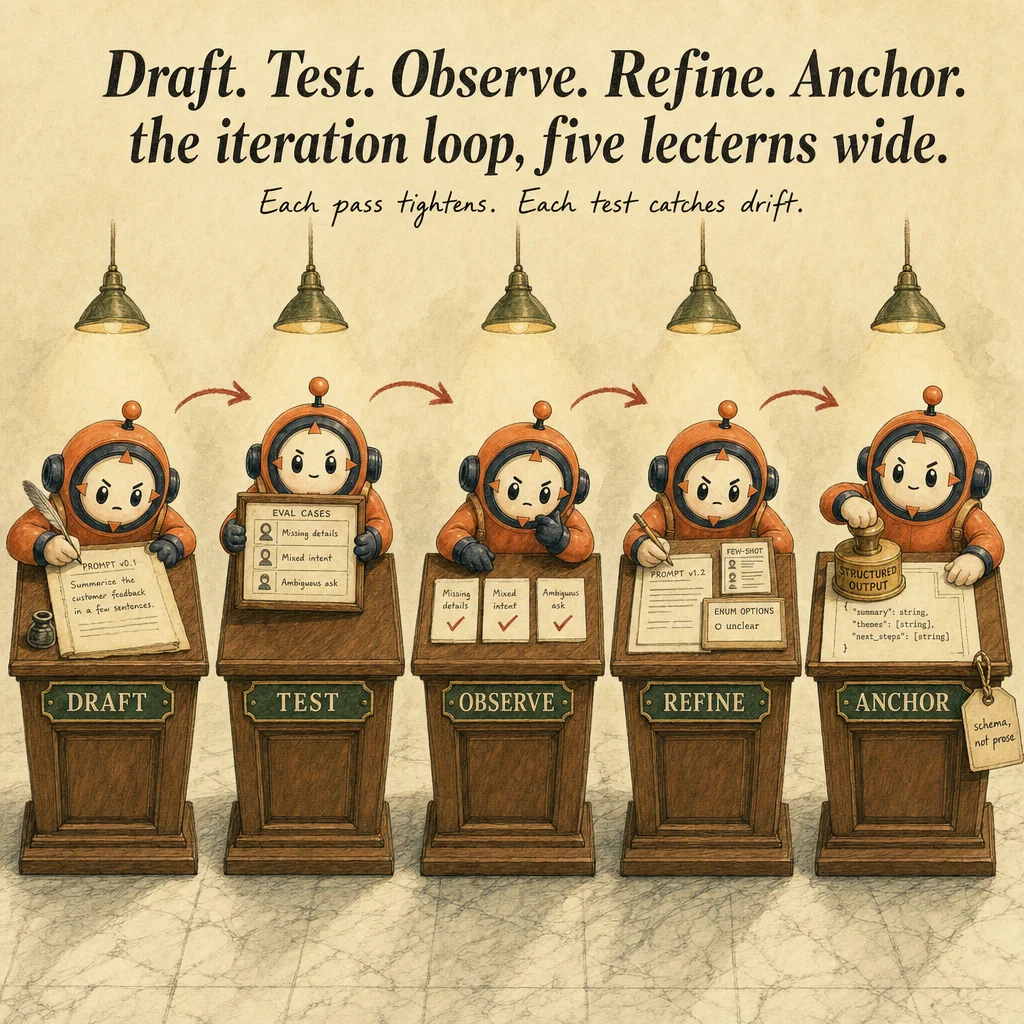

The seven load-bearing techniques are few-shot prompting (showing 2-5 input-output pairs so Claude can pattern-match the harder cases), iterative refinement (the Draft → Test → Observe → Refine → Anchor loop), anti-fabrication patterns (nullable fields, unclear enum values, citation-required answers), structured templates (role + objective + tone + tools + constraints + format + escalation), constraint elicitation (concrete do-don't lists with worked examples), output anchoring (force a tool_use schema, do not ask 'output JSON' in prose), and test-driven prompting (every change validated against a frozen eval suite, regression-free or roll back).

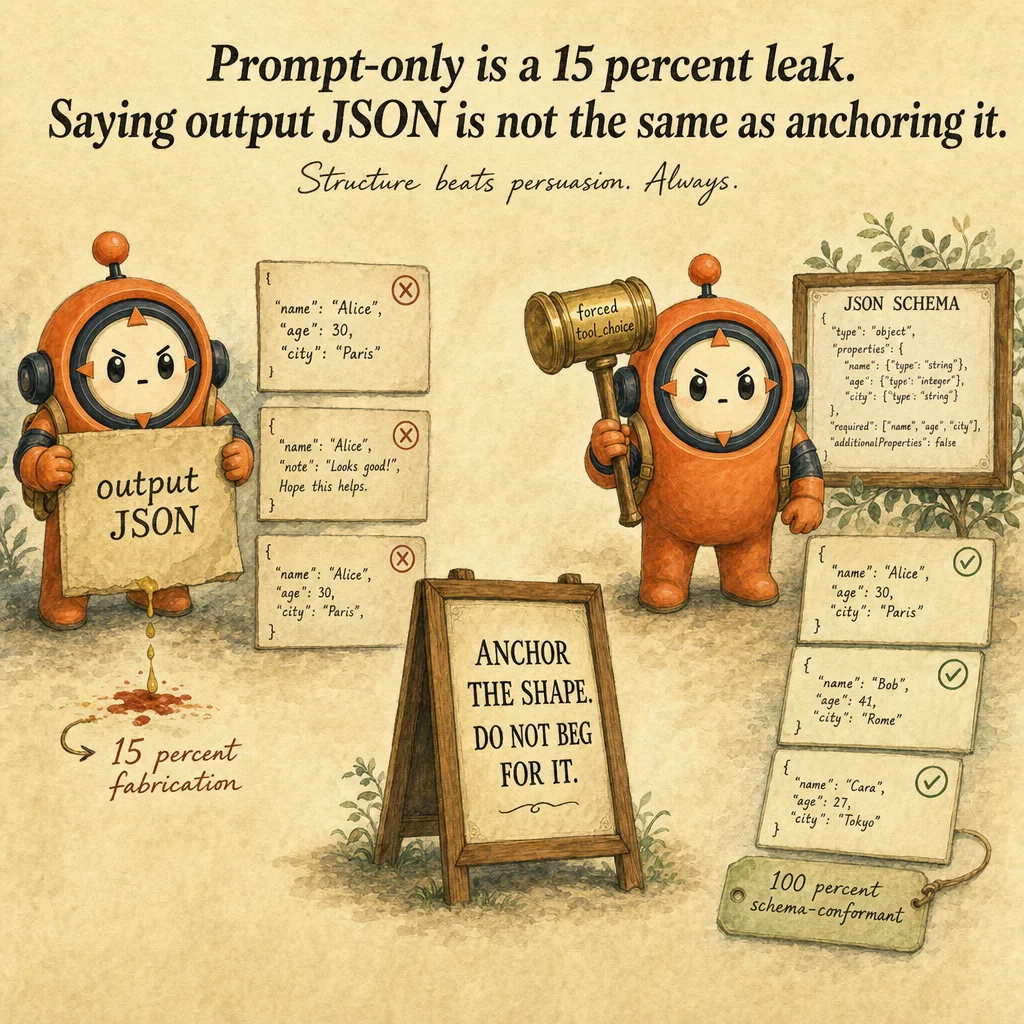

The reason every Domain 4 question on the exam is a composition question, never a single-technique question, is that real production prompts are layered. A refund agent uses a structured system-prompt template, three few-shot examples covering the sarcasm-style edge case, an unclear enum option for the refund-reason field, a forced tool_choice for output anchoring, and a 50-case eval that gates every prompt PR. Strip any one layer and the leak rate climbs above 5%. Per ACP-T03 §4.4, natural-language 'output JSON' prompts leak structure ~15% of the time; tool-anchored prompts leak 0%.

How it works

Iterative refinement is the spine. You start with a baseline prompt that almost works, build a small eval set (20-50 cases is the sweet spot, of which 3-5 are deliberate failure cases you have already seen Claude get wrong), and run the prompt against the suite to get a score. Score is the only signal that matters; subjective 'this feels better' is theater. Per ASC-A01 Course 12 §22, the score itself isn't inherently good or bad. What matters is whether you can improve it by refining your prompts. Each refinement targets the lowest-scoring case, you write a tighter instruction or add an example, then re-run the entire suite to make sure no other case regressed.

Few-shot prompting is the highest-ROI lever inside the loop. Per ASC-A01 Course 12 §29, you wrap each example in <sample_input> and <ideal_output> XML tags, you choose 2-5 examples that cover the failure modes (sarcasm, ambiguity, edge formats), and you optionally add a one-line explanation of *why* the example is ideal. A 2-3 example before-after pair reduces misclassification by 30-60%, the single biggest single-edit accuracy gain you can make. The mistake is using examples Claude already gets right; always pick examples that match the cases you have observed Claude fail on.

Output anchoring and anti-fabrication are where natural-language prompting reaches its ceiling. Asking 'please output JSON' in prose leaks structure roughly 15% of the time under load. The fix is tool_use with a JSON schema and tool_choice: forced, the API constrains token generation to match the schema, so structure is guaranteed at 100%. Fabrication is a separate problem, schemas guarantee shape, not truth. Per ACP-T03 §4.4 and the structured-data-extraction scenario doc, when a source is genuinely silent, give the model two honest exits: nullable fields (`type: ['string', 'null']`) and an `unclear` / `not_provided` enum value. Without these escape hatches, required-string fields force fabrication and the leak rate climbs above 5%.

Where you'll see it

Refund agent prompt PR gate

Every prompt change PR runs against a 60-case golden suite (20 happy path, 20 edge, 10 escalations, 10 known-failure). The CI fails the PR if the average score drops or any previously-passing case regresses. Per ASC-A01 Course 11 §15, the alternative, 'test once and decide it's good enough', carries a significant risk of breaking in production. Three iterations typically take a prompt from 80% to 95%.

Sarcasm-aware sentiment classifier

A social-listening team's first prompt scored 71% on a sarcasm-heavy suite. Adding three <sample_input> / <ideal_output> pairs that explicitly covered Plan-9-style ironic praise lifted the score to 88%. The team picked the examples directly from the eval's lowest-scoring cases, which per ASC-A01 Course 12 §29 is the canonical way to source few-shot examples. No model upgrade required.

Contract-extraction anti-fabrication

A legal-ops extractor was fabricating termination_clause text when the contract was silent. The fix was schema-level, not prompt-level: nullable strings plus an unclear enum option in the tool input schema. Per the structured-data-extraction scenario doc, fabrication rate dropped from 8% to under 0.5% once the model had an honest exit. The system prompt remained almost unchanged.

Code examples

from anthropic import Anthropic

import json

client = Anthropic()

SYSTEM = """You classify tweet sentiment as positive, negative, or unclear.

DO emit one of: positive | negative | unclear.

DON'T add commentary or explanation.

DON'T pick positive when the tone is sarcastic.

Here are example input-output pairs:

<sample_input>I love how my flight was delayed three hours.</sample_input>

<ideal_output>negative</ideal_output>

<sample_input>Best coffee I've had all week, genuinely.</sample_input>

<ideal_output>positive</ideal_output>

<sample_input>idk what to make of this product</sample_input>

<ideal_output>unclear</ideal_output>"""

def classify(tweet: str) -> str:

resp = client.messages.create(

model="claude-opus-4-5",

max_tokens=10,

system=SYSTEM,

messages=[{"role": "user", "content": tweet}],

)

return resp.content[0].text.strip().lower()

def run_eval(cases_path: str) -> float:

cases = [json.loads(line) for line in open(cases_path)]

correct = sum(1 for c in cases if classify(c["tweet"]) == c["expected"])

score = correct / len(cases)

print(f"Score: {score:.2%} ({correct}/{len(cases)})")

return score

# Iterate: edit SYSTEM, re-run, compare. Ship only on regression-free improvement.

v1_score = run_eval("sentiment_cases.jsonl")from anthropic import Anthropic

client = Anthropic()

# Anti-fabrication: nullable + 'unclear' enum exit.

EXTRACT_TOOL = {

"name": "extract_refund_decision",

"description": "Extract refund decision from a customer ticket.",

"input_schema": {

"type": "object",

"properties": {

"decision": {

"type": "string",

"enum": ["approve", "deny", "escalate", "unclear"],

},

"refund_reason": {"type": ["string", "null"]},

"amount_usd": {"type": ["number", "null"]},

"confidence": {"type": "number", "minimum": 0, "maximum": 1},

},

"required": ["decision", "confidence"],

},

}

def extract(ticket: str) -> dict:

resp = client.messages.create(

model="claude-opus-4-5",

max_tokens=512,

tools=[EXTRACT_TOOL],

tool_choice={"type": "tool", "name": "extract_refund_decision"},

messages=[{"role": "user", "content": ticket}],

)

for block in resp.content:

if block.type == "tool_use":

return block.input

raise RuntimeError("forced tool_choice did not fire")

# 'unclear' + nullable fields let the model say 'I don't know' honestly.

result = extract("Customer wants a refund. No order ID provided.")

# Expected: {"decision": "escalate", "refund_reason": null, "amount_usd": null, "confidence": 0.3}Looks right, isn't

Each row pairs a plausible-looking pattern with the failure it actually creates. These are the shapes exam distractors are built from.

Adding 'output valid JSON, do not include any other text' to the system prompt to enforce structure.

Natural-language prompting for structure leaks ~15% of the time under load. Per ACP-T03 §4.4, the only structural guarantee is tool_use with a JSON schema and tool_choice: forced. Prompt instructions are advice, not enforcement.

Spending three days hand-tuning the wording of the system prompt to fix a 12% accuracy gap.

Per ASC-A01 Course 12 §29, adding 2-3 well-chosen few-shot examples typically yields a 30-60% misclassification reduction in a single edit. Prose tweaks deliver diminishing returns; concrete examples deliver step-changes.

Using Claude itself to grade 100% of your eval cases because it scales better than humans.

Per the evaluation concept (docid #f86eb3), model-based grading is not reproducible across model versions and fails on deterministic checks (tool sequences, JSON schemas). Use code-based grading for anything measurable; reserve model grading for subjective qualities like tone.

Required-string field for refund_reason so the schema always returns a value the downstream code can parse.

Required strings force fabrication when the source is silent. Per the structured-data-extraction scenario, fabrication climbs above 5% without an unclear enum option or a nullable type. Always give the model an honest exit.

Iterating the prompt 20+ times against the same 10 hand-picked test cases until the score reaches 100%.

Per the evaluation concept §Q3, that's overfitting. Score plateau on a tiny fixed suite masks production blind spots. Grow the suite to 50+ externally-validated cases and pair with shadow-mode production evals.

Decision tree

Are you anchoring the output format?

tool_use with a JSON schema and tool_choice: forced. Structure is guaranteed at 100%, no parsing risk.Do you have a frozen 20-50 case eval suite, of which 3-5 are known-failure cases?

Is your accuracy gap concentrated in specific edge cases (sarcasm, ambiguity, format variance)?

<sample_input> / <ideal_output> examples that cover those exact cases. Per ASC-A01 Course 12 §29, this is the highest-ROI single edit.Is the model fabricating values when the source is silent?

unclear / not_provided enum option. Per the structured-data-extraction scenario, this drops fabrication from 8% to under 0.5%.required list.Question patterns

Your extraction prompt says 'output valid JSON'. 12% of responses include explanatory prose. Best fix?

"add 'do not include any text outside the JSON' to the system prompt". Per ACP-T03 §4.4, prompt-only is probabilistic (~15% leak); tool-anchored output is structurally guaranteed (0% leak).Your sentiment classifier misses sarcastic tweets ~30% of the time. The cheapest fix?

<sample_input> / <ideal_output> XML tags. Per ASC-A01 Course 12 §29, this technique reduces misclassification by 30-60%. The distractor is "upgrade to a larger model"; the right answer is one prompt edit with concrete failure-case examples.A teammate edited the system prompt and shipped to production. A week later, accuracy dropped 6 points. What was missing from their workflow?

"more A/B testing in production". Right answer: gate prompt PRs on offline eval delta, not on subjective review.Your refund agent's `refund_reason` field returns fabricated reasons when the contract is silent. Schema-level fix?

unclear enum option: {type: ['string', 'null'], enum: ['refund', 'replacement', 'unclear', null]}. Per the structured-data-extraction scenario doc, required-string fields force fabrication when the source is silent. The distractor is "add a temperature: 0 setting"; the actual fix is giving the model an honest exit in the schema.Your prompt iterates 47 times and the eval score keeps oscillating between 78% and 82%. What's the underlying problem?

"use a more capable model". Right answer: grow the suite to 50+ externally-validated cases, then iterate. Without golden data, evals measure consistency, not correctness.Your system prompt uses vague guidance like 'be careful with refunds' and policy violations stay at 5%. Why doesn't the model improve when you add 'be especially careful'?

"DO escalate refunds > $500. DON'T auto-approve any refund without an order_id."). Distractor: "add stronger language like NEVER". Right answer: replace adjectives with concrete rules and worked examples.You add a great new few-shot example and the targeted case finally passes, but two unrelated cases regress. What do you do?

"ship anyway because the targeted case was the priority". Right answer: the new example is teaching Claude something that conflicts with the others; either add a counter-example for the regressing cases or rewrite the new example to be more specific.A new prompt scores 92% on your 50-case suite. You ship. Production failures climb. Why was the eval misleading?

"you should have raised the bar to 95%". Right answer: pair offline evals with shadow-mode evaluation on real traffic, and grow the golden suite from production failures.Frequently asked

Why XML tags for few-shot examples instead of plain bullets?

<sample_input> and <ideal_output> tags create unambiguous boundaries Claude can attend to. Plain bullets blur into the surrounding instructions.