The problem

What the customer needs

- Guaranteed structure on every output. A downstream pipeline must never see a record missing a field.

- Honest non-answers when source data is genuinely missing. Better an explicit 'unclear' than a fabricated value.

- Bulk extraction at acceptable cost. 1000 documents/night at <$5 total.

Why naive approaches fail

- Prompt 'output JSON' → 15% leak with prose wrapping ('Sure, here's the JSON:'); downstream parser breaks.

- Required fields with no nullable option → model fabricates values when source is silent (refund_reason becomes 'customer dissatisfied' even when the email said nothing).

- Single-pass extraction with no retry → semantic errors slip through (date as 'next Tuesday', amount as -50, customer_id with embedded whitespace).
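The first failure mode is easy to reproduce offline. The sketch below uses two hypothetical reply strings (not real model output) and shows how a strict JSON parser treats a reply with a prose preamble.

```python
import json
from typing import Optional

# Hypothetical replies to a prompt-only "output JSON" instruction.
CLEAN_REPLY = '{"customer_id": "cust_1042", "refund_amount": 25.0}'
WRAPPED_REPLY = "Sure, here's the JSON:\n" + CLEAN_REPLY


def parse_strict(reply: str) -> Optional[dict]:
    """Parse a reply the way a naive downstream consumer would."""
    try:
        return json.loads(reply)
    except json.JSONDecodeError:
        # The prose preamble makes the whole reply unparseable.
        return None


print(parse_strict(CLEAN_REPLY))    # parsed dict
print(parse_strict(WRAPPED_REPLY))  # None
```

Stripping the preamble with a regex is possible, but it only treats the symptom; the sections below remove the prose at the source.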

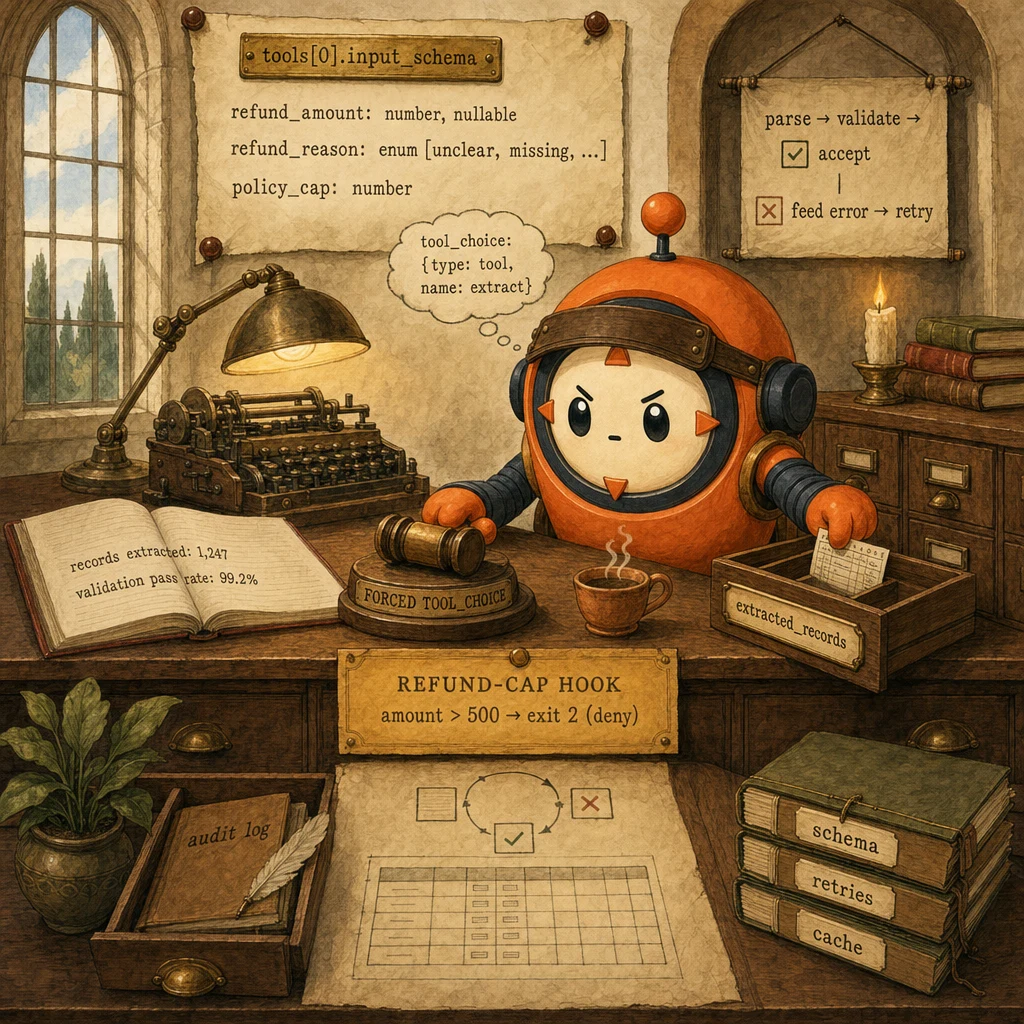

- Schema conformance = 100% (forced tool_use guarantees shape)

- Fabrication rate < 1% (nullable + enum escapes give the model an honest opt-out)

- Validation-retry convergence ≥ 95% within 3 attempts; remainder routed to human review

- Bulk runs use Batch API (50% discount, 24h SLA) for non-blocking volume

- Schema cached with cache_control: ephemeral for ~90% savings on sustained traffic
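The convergence target above can be checked from per-record attempt counts. This is a minimal sketch; the function name and the sample distribution are illustrative assumptions, not measurements.

```python
def convergence_report(attempts_used, max_attempts=3):
    """Summarise validation-retry convergence.

    attempts_used holds one entry per record: the attempt number on which
    validation passed, or None if the record never passed and went to
    human review.
    """
    total = len(attempts_used)
    converged = sum(
        1 for a in attempts_used if a is not None and a <= max_attempts
    )
    return {
        "total": total,
        "converged": converged,
        "human_review": total - converged,
        "convergence_rate": converged / total if total else 0.0,
    }


# Illustrative sample: 90 pass first time, 6 on attempt 2, 1 on attempt 3,
# and 3 never converge.
sample = [1] * 90 + [2] * 6 + [3] * 1 + [None] * 3
report = convergence_report(sample)
print(report["convergence_rate"] >= 0.95)
```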

The system

What each part does

5 components, each owns a concept.

JSON Schema Definition

the contract, in input_schema

The output shape lives inside a tool definition, not as freeform text instruction. Required vs nullable, enum vs string, integer vs number. Every property is explicit. The model can only emit a tool_use call that matches; the SDK rejects anything else.

Configuration

tools = [{ name: "extract_record", input_schema: { type: "object", properties: { customer_id: { type: "string", pattern: "^cust_[0-9]+$" }, refund_amount: { type: ["number", "null"] }, refund_reason: { type: "string", enum: ["damage", "wrong_item", "late", "other", "unclear"] } }, required: ["customer_id", "refund_amount", "refund_reason"] } }]

Forced tool_choice

tool_choice: { type: 'tool', name: ... }

Setting tool_choice to a specific tool name guarantees the model fires that tool. No prose wrapping, no 'I'd be happy to help' preamble, no probabilistic adherence. This is the single biggest reliability lever; it converts 85% prompt-only adherence into 100% structural adherence.

Configuration

tool_choice: { type: 'tool', name: 'extract_record' }. Use 'auto' only for open-ended flows where the model decides whether to call any tool. Forced is for mandatory extraction.

Validation-Retry Loop

parse → validate → feed-error-back

Schema enforcement guarantees STRUCTURE. Semantic validation (date format, amount sign, ID pattern, business rules) runs in code after parse. On failure, the harness feeds a specific error message back to the model ('refund_amount is -50, must be ≥ 0') and retries. Typically converges in ≤ 2 retries. Generic 'try again' doesn't work; specific errors do.

Configuration

loop: extract → parse → validate_semantically → if invalid, append { role: 'user', content: 'Validation failed: <specific error>. Re-extract.' } → retry. Max retries: 3. After 3, route to human review.
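The loop above can be exercised without any API call by injecting the model as a callable. In this sketch `run_with_retry`, `fake_model`, and `stubborn_model` are hypothetical stand-ins, and the transcript is simplified to plain user messages.

```python
from typing import Callable


def validate(record: dict) -> list[str]:
    """Semantic check: return specific error messages (empty = valid)."""
    errors = []
    amount = record.get("refund_amount")
    if amount is not None and amount < 0:
        errors.append(f"refund_amount is {amount}, must be >= 0")
    return errors


def run_with_retry(call_model: Callable[[list[dict]], dict],
                   source_text: str, max_retries: int = 3) -> dict:
    """extract -> validate -> feed the specific error back -> retry."""
    messages = [{"role": "user", "content": source_text}]
    last_errors: list[str] = []
    for attempt in range(1, max_retries + 1):
        record = call_model(messages)
        last_errors = validate(record)
        if not last_errors:
            return {"status": "ok", "record": record, "attempts": attempt}
        messages.append({
            "role": "user",
            "content": f"Validation failed: {'; '.join(last_errors)}. Re-extract.",
        })
    # Out of retries: hand the original and the last error to a human.
    return {"status": "human_review", "source": source_text,
            "last_errors": last_errors, "attempts": max_retries}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in that corrects itself once it sees a specific error."""
    saw_error = any("Validation failed" in m["content"] for m in messages)
    return {"refund_amount": 50.0 if saw_error else -50.0}


def stubborn_model(messages: list[dict]) -> dict:
    """Stand-in that never corrects itself."""
    return {"refund_amount": -50.0}


print(run_with_retry(fake_model, "refund request")["attempts"])
print(run_with_retry(stubborn_model, "refund request")["status"])
```

Keeping the model behind a callable means the retry and routing logic can be unit-tested separately from the real client.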

Nullable Fields + Enum Escape Hatches

the anti-fabrication architecture

When a source genuinely doesn't contain a value, the model has two honest options: emit null (if the schema allows nullable) or emit a designated 'unclear' / 'not_provided' enum value. Without these escape hatches, required-string fields force the model to invent. Fabrication rate climbs above 5%. With them, fabrication drops below 1%.

Configuration

Field types: ["string", "null"] for optional values. Enums always include "unclear" or "other" as the last option. Few-shot examples explicitly show the model emitting unclear when the source is silent, which anchors the behaviour.
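One way to check that the escape hatches are actually used is to run fixtures whose source is known to be silent on certain fields and flag any non-escape value. This is a sketch under that assumption; the helper names and fixture records are hypothetical.

```python
# Values that count as an honest non-answer (taken from the text above).
ESCAPES = {None, "unclear", "not_provided", "other"}


def fabricated_fields(record: dict, silent_fields: set[str]) -> list[str]:
    """Fields the source was silent on but the model filled in anyway."""
    return sorted(f for f in silent_fields if record.get(f) not in ESCAPES)


def fabrication_rate(cases: list[tuple[dict, set[str]]]) -> float:
    """Share of records with at least one fabricated field."""
    if not cases:
        return 0.0
    bad = sum(1 for record, silent in cases if fabricated_fields(record, silent))
    return bad / len(cases)


# Hypothetical fixtures: the email mentioned neither an amount nor a reason.
silent = {"refund_amount", "refund_reason"}
honest = {"refund_amount": None, "refund_reason": "unclear"}
invented = {"refund_amount": None, "refund_reason": "customer dissatisfied"}

print(fabricated_fields(honest, silent))
print(fabricated_fields(invented, silent))
```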

Schema Caching + Batch API

cost discipline at volume

The schema is the largest stable token cost (~500-2000 tokens depending on complexity). Mark the tools array with cache_control: ephemeral; the 5-min TTL keeps it warm across sustained traffic, dropping schema-token cost ~90%. For overnight bulk runs, the Batch API gives a flat 50% discount on every token. Stacked, the two bring schema tokens down to roughly 5% of their naive sync price (0.5 × ~0.1).

Configuration

Sync API: tools array with cache_control: { type: 'ephemeral' }. Cache hit rate ≥ 70% within 5-min windows. Batch API: submit 1000+ extractions overnight, results within 24h, no real-time retries (resubmit failures in the next batch).
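The savings figures are easier to reason about as multipliers on the schema-token price. The sketch below uses only the ratios quoted in the text (cache reads at ~10% of the fresh price, a flat 50% batch discount); it deliberately ignores any cache-write surcharge, and the function name is an assumption.

```python
CACHE_READ_MULTIPLIER = 0.10  # cached input tokens at ~10% of the fresh price
BATCH_MULTIPLIER = 0.50       # flat 50% Batch API discount


def schema_cost_multiplier(cache_hit_rate: float, batch: bool) -> float:
    """Schema-token cost relative to a naive, uncached sync call (= 1.0)."""
    per_token = (cache_hit_rate * CACHE_READ_MULTIPLIER
                 + (1.0 - cache_hit_rate) * 1.0)
    return per_token * (BATCH_MULTIPLIER if batch else 1.0)


# Perfect cache hits inside a batch: the best case for schema tokens.
print(round(schema_cost_multiplier(1.0, batch=True), 4))
# A 70% hit rate on sync traffic.
print(round(schema_cost_multiplier(0.7, batch=False), 4))
```

The ~95% figure is the best case for schema tokens only (0.5 × 0.1 = 0.05 of the naive price). Email-body and output tokens are not cached across requests, so they receive only the batch discount.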

Data flow

Eight steps to production

Author the JSON schema as a tool definition

Define the output shape in tools[0].input_schema, a JSON Schema object. Every required field is listed in required[]. Every optional field has ["<type>", "null"] so the model can emit null. Every constrained string is an enum with an explicit escape (unclear, not_provided, other). Add a pattern regex on IDs that have a known format.

```python
from anthropic import Anthropic

client = Anthropic()

EXTRACT_TOOL = {
    "name": "extract_record",
    "description": "Extract a structured record from a customer email.",
    "input_schema": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string", "pattern": "^cust_[0-9]+$"},
            "refund_amount": {"type": ["number", "null"]},
            "refund_reason": {
                "type": "string",
                "enum": ["damage", "wrong_item", "late", "other", "unclear"],
            },
            "urgency": {
                "type": "string",
                "enum": ["low", "medium", "high", "unclear"],
            },
        },
        "required": ["customer_id", "refund_amount", "refund_reason", "urgency"],
    },
}
```

Force tool_choice to the extraction tool

tool_choice: { type: 'tool', name: 'extract_record' } is the structural contract. The model has no choice but to fire the tool with arguments matching the schema. Any prose preamble or wrapping disappears. This single setting turns 85% prompt-only adherence into 100% structural adherence.

```python
def extract_one(email_text: str) -> dict:
    resp = client.messages.create(
        model="claude-sonnet-4-5",  # API model IDs use hyphens, not dots
        max_tokens=1024,
        tools=[EXTRACT_TOOL],
        tool_choice={"type": "tool", "name": "extract_record"},
        messages=[{"role": "user", "content": email_text}],
    )
    # The model MUST emit a tool_use block matching EXTRACT_TOOL.input_schema
    for block in resp.content:
        if block.type == "tool_use" and block.name == "extract_record":
            return block.input  # already a dict matching the schema shape
    raise RuntimeError("forced tool_choice did not yield tool_use (SDK bug?)")
```

Add nullable types and enum escape hatches

Every field that might be genuinely missing in the source gets ["<type>", "null"]. Every constrained string includes an explicit unclear / not_provided / other option. This gives the model an honest exit when the source is silent. Without it, required-string fields force fabrication. Pair with a few-shot example showing the model correctly emitting unclear.

```python
# Few-shot: show the model how to emit 'unclear' on a silent source
FEW_SHOT_EXAMPLES = [
    {
        "role": "user",
        "content": "I want a refund for my order. Thanks.",
    },
    {
        "role": "assistant",
        "content": [{
            "type": "tool_use",
            "id": "...",  # required by the API; must equal tool_use_id below
            "name": "extract_record",
            "input": {
                "customer_id": "cust_unknown",  # signals: pattern won't match, route to human
                "refund_amount": None,
                "refund_reason": "unclear",
                "urgency": "unclear",
            },
        }],
    },
    {
        "role": "user",
        "content": [{
            "type": "tool_result",
            "tool_use_id": "...",
            "content": "ok",
        }],
    },
]

# Then your real message follows:
# messages = FEW_SHOT_EXAMPLES + [{"role": "user", "content": email_text}]
```

Wrap extraction in a validation-retry loop

Schema guarantees structure; semantics need code. After parsing the tool_use input, validate semantically: refund_amount ≥ 0, customer_id matches the canonical pattern beyond the regex, urgency-vs-amount sanity (a $50K refund tagged 'low' is suspicious). On failure, feed a specific error back to the model and retry. Specific errors converge; a generic 'try again' tends to reproduce the same bad output until the retry budget runs out.

```python
import re

def validate(record: dict) -> list[str]:
    """Returns a list of specific error messages (empty = valid)."""
    errors = []
    amount = record.get("refund_amount")  # may be None: the schema allows null
    if amount is not None and amount < 0:
        errors.append(f"refund_amount is {amount}, must be >= 0")
    if not re.fullmatch(r"cust_\d{4,}", record.get("customer_id", "")):
        errors.append("customer_id must match cust_<4+ digits>")
    # Guard against None first: `None > 1000` raises TypeError
    if amount is not None and amount > 1000 and record.get("urgency") == "low":
        errors.append("refund_amount > 1000 with urgency='low' is suspicious")
    return errors

def extract_with_retry(email_text: str, max_retries: int = 3) -> dict:
    messages = [{"role": "user", "content": email_text}]
    for attempt in range(max_retries):
        resp = client.messages.create(
            model="claude-sonnet-4-5",  # API model IDs use hyphens, not dots
            max_tokens=1024,
            tools=[EXTRACT_TOOL],
            tool_choice={"type": "tool", "name": "extract_record"},
            messages=messages,
        )
        tool_use = next(b for b in resp.content if b.type == "tool_use")
        record = tool_use.input
        errors = validate(record)
        if not errors:
            return record
        # Feed the SPECIFIC error back; generic 'retry' doesn't converge
        messages.append({"role": "assistant", "content": resp.content})
        messages.append({
            "role": "user",
            "content": [{
                "type": "tool_result",
                "tool_use_id": tool_use.id,
                "content": f"Validation failed: {'; '.join(errors)}. Re-extract.",
                "is_error": True,
            }],
        })
    # Callers should catch this and route the record to human review
    raise ValueError(f"extraction did not converge in {max_retries} attempts")
```

Cache the schema with cache_control: ephemeral

The schema is the largest stable token cost in steady-state extraction (~500-2000 tokens for non-trivial shapes). Mark the tools array with cache_control: { type: 'ephemeral' }. The 5-min TTL keeps it warm across sustained traffic; cached input tokens cost ~10% of fresh tokens. Hit rate stays ≥ 70% with continuous extraction; ~90% schema-token savings.

```python
def extract_with_cache(email_text: str) -> dict:
    resp = client.messages.create(
        model="claude-sonnet-4-5",  # API model IDs use hyphens, not dots
        max_tokens=1024,
        tools=[
            {
                **EXTRACT_TOOL,
                "cache_control": {"type": "ephemeral"},  # 5-min TTL
            },
        ],
        tool_choice={"type": "tool", "name": "extract_record"},
        messages=[{"role": "user", "content": email_text}],
    )
    # Inspect cache stats for observability
    print(f"cache_creation: {resp.usage.cache_creation_input_tokens}")
    print(f"cache_read: {resp.usage.cache_read_input_tokens}")
    return next(b.input for b in resp.content if b.type == "tool_use")
```

Add a PreToolUse hook on policy bounds

When extraction touches policy-bearing values (refund cap, transaction limits), don't trust the model. Wrap it in a PreToolUse hook that exits 2 on violation. The hook reads tool_input.refund_amount and compares to the known cap; on breach, it returns an error to the model with the cap reference, and the model re-extracts with the constraint visible. Deterministic policy enforcement, not probabilistic.

```python
# .claude/hooks/extract_policy.py
import sys, json, os

REFUND_CAP = float(os.environ.get("REFUND_CAP", "500"))

payload = json.loads(sys.stdin.read())

if payload["tool_name"] != "extract_record":
    sys.exit(0)

amount = payload["tool_input"].get("refund_amount") or 0

if amount > REFUND_CAP:
    print(
        f"refund_amount ${amount} exceeds policy cap ${REFUND_CAP}; "
        f"emit refund_amount=null and refund_reason='unclear' instead",
        file=sys.stderr,
    )
    sys.exit(2)  # DENY: the model sees the error and re-extracts with the bound

sys.exit(0)
```

Use Batch API for bulk overnight runs

Sync API is the right call when latency matters. For overnight backfills (1000+ documents), the Batch API gives a flat 50% discount with a 24h SLA. Stacked with schema caching, schema tokens drop to roughly 5% of their naive sync price. The Batch API is asynchronous, so there is no real-time retry inside a batch; resubmit failures as a new batch the next morning.

```python
def submit_batch_extraction(emails: list[dict]) -> str:
    """Submit a batch of extraction requests for overnight processing."""
    requests = []
    for email in emails:
        requests.append({
            "custom_id": f"extract-{email['id']}",
            "params": {
                "model": "claude-sonnet-4-5",  # API model IDs use hyphens, not dots
                "max_tokens": 1024,
                "tools": [EXTRACT_TOOL],
                "tool_choice": {"type": "tool", "name": "extract_record"},
                "messages": [{"role": "user", "content": email["body"]}],
            },
        })
    batch = client.messages.batches.create(requests=requests)
    print(f"Batch {batch.id} submitted with {len(requests)} extractions")
    # expires_at is the batch's expiry deadline, not a completion estimate
    print(f"Batch expires at: {batch.expires_at}")
    return batch.id

# Next morning: fetch results, validate each, requeue failures
def harvest_batch(batch_id: str):
    batch = client.messages.batches.retrieve(batch_id)
    if batch.processing_status != "ended":
        return {"status": "not_ready"}
    results = client.messages.batches.results(batch_id)
    accepted, rejected = [], []
    for r in results:
        if r.result.type == "succeeded":
            tu = next(b for b in r.result.message.content if b.type == "tool_use")
            if not validate(tu.input):  # empty error list = valid
                accepted.append(tu.input)
                continue
        # Errored, expired, or semantically invalid: resubmit in the next batch
        rejected.append(r.custom_id)
    return {"accepted": accepted, "rejected_for_retry": rejected}
```

Stratified accuracy reporting (not aggregate)

A 95% aggregate accuracy can hide a 60% accuracy on a critical document type. Track validation pass rate stratified by source: by document type (email vs PDF vs HTML), by sender domain, and by extraction date. Bad strata surface fast. Aggregate metrics lie; stratified ones tell the truth.

```python
from collections import defaultdict

def stratified_accuracy(records: list[dict]) -> dict:
    """Group validation results by document type, surface weak strata."""
    by_type = defaultdict(lambda: {"pass": 0, "fail": 0})
    for r in records:
        errors = validate(r["extracted"])
        bucket = "pass" if not errors else "fail"
        by_type[r["doc_type"]][bucket] += 1
    report = {}
    for doc_type, counts in by_type.items():
        total = counts["pass"] + counts["fail"]
        report[doc_type] = {
            "total": total,
            "pass_rate": counts["pass"] / total if total else 0,
            "fail_count": counts["fail"],
        }
    # Sort by pass_rate ascending: worst strata first
    return dict(sorted(report.items(), key=lambda kv: kv[1]["pass_rate"]))

# Output:
# {
#   "html_email": {"total": 200, "pass_rate": 0.62, "fail_count": 76},  # WEAK
#   "pdf": {"total": 150, "pass_rate": 0.91, "fail_count": 14},
#   "plain_text": {"total": 650, "pass_rate": 0.99, "fail_count": 6},
# }
```

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Output shape guarantee | Forced tool_choice with input_schema as the contract | Prompt instruction 'output JSON' or 'respond with valid JSON' | Prompt instruction is probabilistic (~85% adherence in production); forced tool_use is structural (100%). The cost difference is negligible; the reliability difference is decisive. |
| Field that might be missing in the source | ["<type>", "null"] AND/OR enum with explicit 'unclear' option | Required field with no nullable type and no escape, which forces the model to invent | Without an honest exit, the model fabricates. Fabrication rate climbs above 5% on required-string fields. Nullable + enum escapes drop it below 1%. |
| Validation failure | Validation-retry loop with the SPECIFIC error fed back | Generic 'please try again' or single-pass with no retry | Specific errors (e.g. 'refund_amount is -50, must be ≥ 0') converge in ≤ 2 retries. Generic retries don't converge; the model regenerates the same bad output. |
| 1000 extractions overnight | Batch API + schema caching (schema tokens at 0.5 × ~0.1 = ~5% of the naive price) | Sync API with caching, or sync API without caching | Bulk + non-blocking = Batch API by default. The constraint is the 24h SLA. For latency-critical extractions, stay sync + cached; for backfill, switch to Batch. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

System prompt says 'respond with JSON only'. ~15% of responses include prose wrapping ('Sure, here's the JSON:'); the downstream parser breaks on roughly every seventh document.

AP-SDE-01: Forced tool_choice + input_schema. The model has no choice but to fire the tool with arguments matching the schema. 100% structural adherence.

refund_reason is required string. Source email says nothing about a reason. Model fabricates 'customer dissatisfied' to satisfy the schema. Fabrication rate ~7%.

AP-SDE-02: Make the field nullable AND/OR add an explicit 'unclear' enum option. Few-shot one example showing the model emit 'unclear' on a silent source. Fabrication drops below 1%.

Validation fails (refund_amount = -50). The pipeline drops the record entirely. Operator sees 5% silent loss; nobody notices for two weeks.

AP-SDE-03: Validation-retry loop: feed the specific error back to the model, retry up to 3 times. 70-80% of validation failures converge within 2 retries.

Schema accepts refund_amount: -50 (number type passes). Schema accepts customer_id: 'cust_ 42' (string type passes). Bad data ships downstream.

AP-SDE-04: Validate semantically AFTER schema parse: bounds checks (amount ≥ 0), regex on IDs (no embedded whitespace), business rules (urgency-vs-amount sanity). Schema enforces shape; code enforces meaning.
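The gap between shape and meaning can be shown with the two bad values from this failure pair. In this sketch `passes_type_check` is a hypothetical stand-in for what a type-only schema expresses, not a real schema validator.

```python
import re


def passes_type_check(record: dict) -> bool:
    """Shape only: number-or-null amount, string customer_id."""
    amount = record.get("refund_amount")
    amount_ok = amount is None or (
        isinstance(amount, (int, float)) and not isinstance(amount, bool)
    )
    return amount_ok and isinstance(record.get("customer_id"), str)


def semantic_errors(record: dict) -> list[str]:
    """Meaning: bounds and ID format, checked in code after the parse."""
    errors = []
    amount = record.get("refund_amount")
    if amount is not None and amount < 0:
        errors.append(f"refund_amount is {amount}, must be >= 0")
    if not re.fullmatch(r"cust_[0-9]+", record.get("customer_id", "")):
        errors.append("customer_id must be cust_<digits> with no whitespace")
    return errors


bad = {"customer_id": "cust_ 42", "refund_amount": -50}
print(passes_type_check(bad))  # the types are fine
print(semantic_errors(bad))    # the values are not
```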

Refund cap is enforced in the system prompt ('never extract refund_amount > 500'). Production sees 3% violations leak through; auditor flags.

AP-SDE-05: PreToolUse hook on extract_record: reads tool_input.refund_amount, compares to policy cap, exits 2 on breach with a specific error message. Deterministic, not probabilistic.
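Because the hook is deterministic, its decision logic can be pulled into a pure function and unit-tested without spawning a subprocess. This is a sketch: `decide` is a hypothetical helper, and the payload keys (`tool_name`, `tool_input`) follow the hook script shown earlier in this guide.

```python
def decide(payload: dict, refund_cap: float) -> tuple[int, str]:
    """Return (exit_code, stderr_message) for a PreToolUse-style payload."""
    if payload.get("tool_name") != "extract_record":
        return 0, ""  # not our tool: allow
    amount = payload.get("tool_input", {}).get("refund_amount") or 0
    if amount > refund_cap:
        # Exit code 2 denies the call; the message goes back to the model.
        return 2, f"refund_amount {amount} exceeds policy cap {refund_cap}"
    return 0, ""


over = {"tool_name": "extract_record", "tool_input": {"refund_amount": 750}}
under = {"tool_name": "extract_record", "tool_input": {"refund_amount": 120}}
null = {"tool_name": "extract_record", "tool_input": {"refund_amount": None}}

for case in (over, under, null):
    print(decide(case, refund_cap=500.0))
```

The script itself then reduces to reading stdin, calling the function, writing the message to stderr, and exiting with the returned code.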

Cost & latency

Schema ~1500 tokens at cache-read price (~$0.0001) + email body ~500 input tokens + ~150 output tokens. Sustained traffic with ≥70% cache hit rate keeps per-record cost predictable.

5-10% of records retry once; 1-2% retry twice. Specific-error feedback converges quickly. Overall pipeline cost up ~5% to gain ~99% schema-conformance + ~99% semantic-conformance.

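The Batch API's flat 50% discount stacks with ~90% schema-caching savings, so schema tokens cost ~5% of their naive price. Against sync calls that are already cached, the batch discount halves the bill: 1000 documents @ ~$0.0008 each (sync cached) drops to ~$0.0004 each (batch + cache), i.e. $0.80 vs $0.40 per 1000.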
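The per-1000 arithmetic can be checked against the nightly budget stated at the top (1000 documents for under $5). The per-document prices below are the figures quoted in this section, and the 5% retry overhead is the estimate given above; both are taken as given rather than verified.

```python
DOCS_PER_NIGHT = 1000
BUDGET_USD = 5.00
SYNC_CACHED_PER_DOC = 0.0008   # quoted figure: sync + cache
BATCH_CACHED_PER_DOC = 0.0004  # quoted figure: batch + cache


def nightly_cost(per_doc_usd: float, docs: int = DOCS_PER_NIGHT,
                 retry_overhead: float = 0.05) -> float:
    """Total nightly spend, including the ~5% validation-retry overhead."""
    return per_doc_usd * docs * (1.0 + retry_overhead)


for label, price in (("sync+cache", SYNC_CACHED_PER_DOC),
                     ("batch+cache", BATCH_CACHED_PER_DOC)):
    cost = nightly_cost(price)
    print(f"{label}: ${cost:.2f} (within budget: {cost < BUDGET_USD})")
```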

PreToolUse hook is a Python/TS subprocess reading stdin and exiting 0/2. No LLM call. Cost is purely syscall-level latency.

Real production extraction at typical complexity. Batch + cache halves it. Adding human review of unconverged records adds operator-time cost but recovers the long tail.

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Schema lives in tools[0].input_schema (not in the prompt) ↗ structured-outputs

- tool_choice forced to the extraction tool name ↗ tool-choice

- Every optional field uses ["<type>", "null"] or includes an 'unclear' enum ↗ structured-outputs

- Few-shot example demonstrates the 'unclear' / null exit on silent source

- Validation-retry loop with SPECIFIC errors fed back; max_retries = 3 ↗ evaluation

- Schema cached with cache_control: ephemeral; hit rate monitored ≥ 70% ↗ prompt-caching

- PreToolUse hook on policy-bearing fields (cap, threshold) ↗ hooks

- Batch API used for bulk overnight runs (>100 docs) ↗ batch-api

- Stratified accuracy reporting (by doc_type, sender, date). Never aggregate-only

- Failed-after-retries records routed to human review queue with original + last error

- Telemetry: schema cache hit rate, validation pass rate, retry distribution, fabrication rate

Run-time

- Schema versioned in source control; PR-reviewed before deploy

- Few-shot 'unclear' / null example in every extraction prompt

- Validation-retry loop with specific-error feedback; max_retries = 3

- PreToolUse hook on policy-bearing fields with unit tests

- Schema cache hit rate monitored; alert if drops below 50%

- Batch API job for nightly backfill with auto-resubmit on transient failures

- Stratified accuracy dashboard updated daily; alert on any stratum < 90%

- Human-review queue for records that fail after 3 retries; SLA documented
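The two alert thresholds in the run-time list (cache hit rate below 50%, any stratum below 90%) fit in one small check. This is a minimal sketch; `check_alerts` and its message format are assumptions, and the sample pass rates are the illustrative ones from the stratified report earlier.

```python
def check_alerts(cache_hit_rate: float,
                 stratum_pass_rates: dict[str, float],
                 min_cache_hit: float = 0.50,
                 min_stratum: float = 0.90) -> list[str]:
    """Return human-readable alerts, worst stratum first."""
    alerts = []
    if cache_hit_rate < min_cache_hit:
        alerts.append(
            f"cache hit rate {cache_hit_rate:.0%} below {min_cache_hit:.0%}"
        )
    for name, rate in sorted(stratum_pass_rates.items(), key=lambda kv: kv[1]):
        if rate < min_stratum:
            alerts.append(
                f"stratum {name} pass rate {rate:.0%} below {min_stratum:.0%}"
            )
    return alerts


rates = {"html_email": 0.62, "pdf": 0.91, "plain_text": 0.99}
for alert in check_alerts(cache_hit_rate=0.72, stratum_pass_rates=rates):
    print(alert)
```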

Five exam-pattern questions

Your prompt-only JSON extractor leaks ~15% with prose wrapping ('Sure, here is the JSON: ...'). Downstream parser breaks every 7th document. What's the architectural fix?

AP-SDE-01.

Schema has `refund_reason: { type: 'string' }` (required). Source email says nothing about a reason. Production logs show ~7% of records have invented reasons like 'customer dissatisfied'. How do you stop the model from fabricating?

Make the field nullable (type: ['string', 'null']) or use an enum that includes an explicit 'unclear' option. Pair with one few-shot example showing the model correctly emit 'unclear' on a silent source. Fabrication rate drops from ~7% to <1% because the model now has a structurally-correct way to say 'I don't know'. Tagged to AP-SDE-02.

Validation fails on a record (refund_amount = -50). Your pipeline drops the record silently. What architectural change recovers most of these without human intervention?

tool_result with is_error: true and the specific error message ('refund_amount is -50, must be ≥ 0'); retry up to 3 times. 70-80% of validation failures converge within 2 retries because the model now sees what's wrong. Generic 'try again' messages don't converge. Tagged to AP-SDE-03.

You're processing 1000 customer emails overnight for a backfill. What API choice minimizes cost without sacrificing reliability?

cache_control: ephemeral saves another ~90% on the schema-token cost. Combined: ~95% savings on schema tokens vs naive sync calls. Resubmit failed records as a fresh batch the next morning; the Batch API is asynchronous, so there is no real-time retry inside the batch.

Aggregate accuracy is 95%. Your CTO wants to know if it's safe to ship. What additional view do you produce before answering?

html_email (which dominates volume) while plain-text scores 99%. Surface the worst stratum. Aggregate metrics lie; stratified metrics tell the truth and surface the documents that need targeted few-shots, schema tweaks, or human review.

Frequently asked

Why not just prompt 'output JSON', now that Claude is good at it?

tool_choice is structural. The SDK rejects anything that doesn't match the schema. The cost is identical; the reliability difference is decisive. Use prompts for tone; use forced tools for shape.

Can I cache the schema if it changes between calls?

How does Batch API interact with the validation-retry loop?

What's the difference between the schema's `pattern` regex and code-level validation?

pattern: '^cust_[0-9]+$' rejects malformed IDs at parse time: faster and structural. Semantic checks (the customer_id must exist in our DB, the refund_amount must be ≤ the original purchase total) need code; they're business rules, not syntax. Use pattern for cheap structural rejection; use code for everything that requires lookups or business logic.

Should I run extended_thinking with structured extraction?

tool_choice: 'auto' and accept ~95% reliability, OR run a sync pre-pass to disambiguate, then a forced extraction on the cleaned input. Don't try to combine extended_thinking with forced tool_choice in one call.

How do I test that nullable + enum escapes are working?

null and 'unclear', never invented values. If you see invented values (e.g. a refund_reason that's not in the source), the few-shot or the schema doesn't yet give the model an honest exit. Iterate.

Does forced tool_choice work with multi-tool registries?

tool_choice: { type: 'tool', name: 'extract_record' } forces THAT tool. The other 4 are inert for this call. Use this when extraction is mandatory but the broader agent has other tools available; for extraction-only, the registry can have just one tool.
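A small guard keeps the forced name and the registry in sync. In this sketch the four non-extraction tool names are hypothetical and `forced_request` is an illustrative helper; it only builds the request arguments and makes no API call.

```python
def _tool(name: str) -> dict:
    """Minimal tool definition with an empty object schema."""
    return {"name": name, "description": f"{name} tool",
            "input_schema": {"type": "object", "properties": {}}}


# Hypothetical five-tool registry; only extract_record comes from this guide.
REGISTRY = [_tool(n) for n in (
    "extract_record", "lookup_order", "issue_refund", "send_email", "escalate",
)]


def forced_request(tools: list[dict], tool_name: str, user_text: str) -> dict:
    """Build request arguments that force one tool out of a larger registry."""
    names = [t["name"] for t in tools]
    if tool_name not in names:
        raise ValueError(f"{tool_name!r} is not in the registry: {names}")
    return {
        "tools": tools,
        "tool_choice": {"type": "tool", "name": tool_name},
        "messages": [{"role": "user", "content": user_text}],
    }


request = forced_request(REGISTRY, "extract_record", "I want a refund.")
print(request["tool_choice"])
```

The returned dict can be unpacked into the messages call alongside model and max_tokens; failing fast on an unknown name catches registry drift before a request is sent.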