The problem

What the customer needs

- Find every reference to a function across a 1,000-file repo without reading every file.

- Review the agent's proposed changes with a fresh perspective so confirmation bias doesn't pass through.

- Generate up-to-date docs that reflect the actual code, not last quarter's spec.

Why naive approaches fail

- Monolithic single agent with 15 tools trying to do everything → routing accuracy drops 8% per tool past 5; the agent alternates between similar tools and misses obvious matches.

- Read every file first to 'understand the codebase' → context floods at file 80; the agent has lost track of the original task by file 200.

- Same session generates AND reviews the code → confirmation bias passes through; the reviewer agrees with the writer because it shares the writer's context.

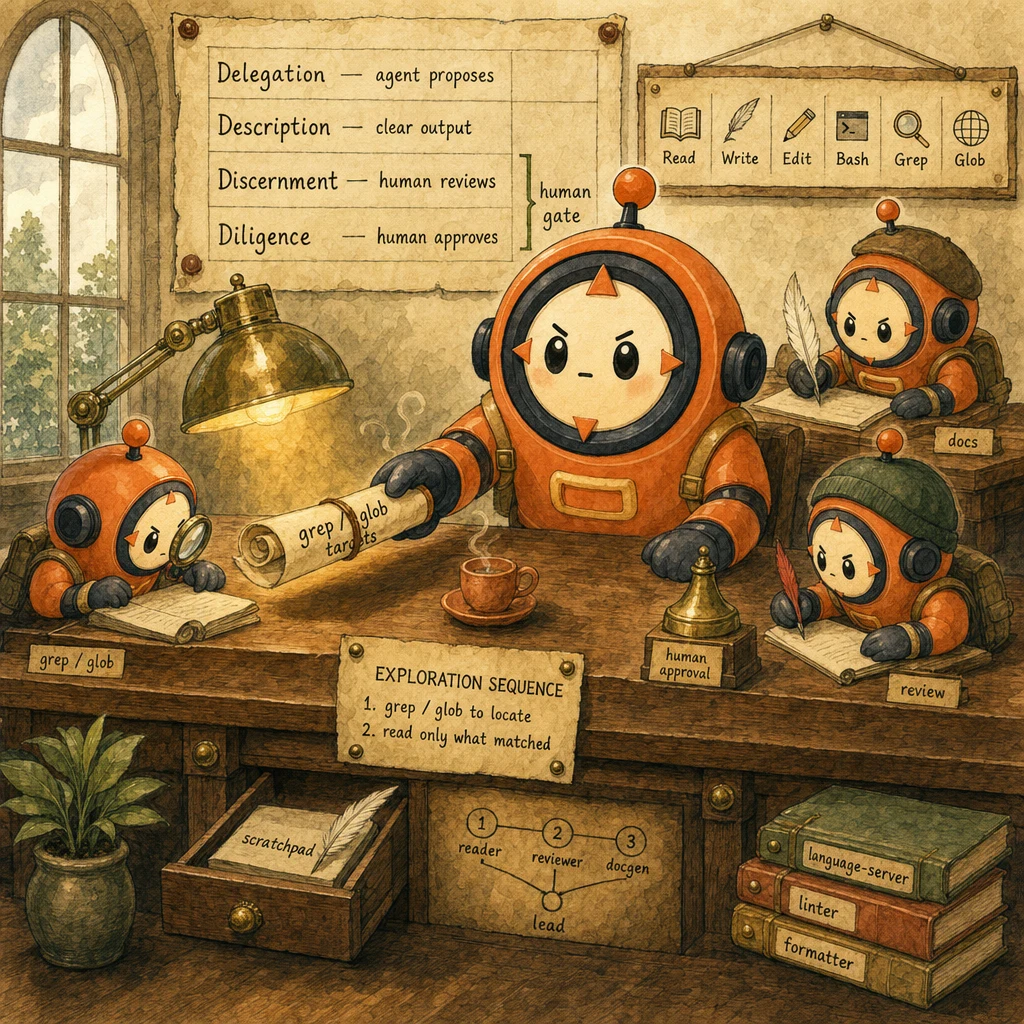

- Grep / Glob locates matched files first; Read opens only those files

- Code review runs in an independent subagent with fresh context

- Tool count ≤ 5 per subagent; specialists distributed across reader / reviewer / docgen

- 4D Framework approval gate before any auto-merge or destructive action

- Bash structured-output flags (

--format json,--porcelain) replace fragile regex parsing

The system

What each part does

5 components, each owns a concept. Click any card to drill into the underlying primitive.

Built-in Tool Suite

Read · Write · Edit · Bash · Grep · Glob

The six built-in tools cover almost every productivity task. Use Grep + Glob to locate before Read; use Edit (not Write) for in-place changes to existing files; reserve Bash for actual commands (compile, test, run). Never as a fallback for file I/O. The grep-then-read sequence is the single biggest token-efficiency lever.

Configuration

Tool whitelist per subagent: Reader=[Read,Grep,Glob]; Reviewer=[Read,Grep,Bash(test,lint)]; Doc-gen=[Read,Write,Glob]. Never grant 15 tools to one agent. Accuracy drops 8% per tool past 5.

Codebase Context Loader

imports · dependencies · architecture

Walks the repo at session start: parses package.json / pyproject.toml, reads README.md + .claude/CLAUDE.md, builds a dependency graph (top 50 imports), surfaces the architecture-decisions doc. Loaded once into the lead agent's context so it doesn't re-discover on every turn.

Configuration

Run on first invocation: project_type, key_dirs, top_imports[], architecture_summary. Persist as .claude/context-cache.json with hash-based invalidation. Refresh on package.json change.

Code-Review Subagent

independent · fresh context

Spawned per change-set with [Read, Grep, Bash(test,lint)] only. No Edit, no Write. Fresh context, no inherited history from the writing agent. Reviews against .claude/rules/ and the codebase context. Returns { verdict, issues: [{ line, severity, message }], summary }. Independence is the architectural point. Same-session review just rubber-stamps.

Configuration

system: 'You are a code reviewer. Read only. Check against .claude/rules/ and existing patterns.' tools: [Read, Grep, Bash(npm test, npm run lint)]. Receives diff + context summary; returns structured verdict.

Doc-Generator Subagent

writes from code, not from spec

Specialised subagent that reads source files and generates documentation that reflects what the code actually does, not what last quarter's spec said it would do. Writes JSDoc / docstrings inline (Edit), README sections (Write), or external API docs (Write to docs/). Tools scoped to read source + write docs only.

Configuration

system: 'Generate docs from source. Use the code as ground truth.' tools: [Read, Write(docs/**, *.md), Glob]. Run after code-review-subagent passes; auto-attached as part of the PR.

MCP Server Integrations

language servers · linters · formatters

Optional but high-leverage in multi-language repos. Hook in pyright for Python type info, tsc --noEmit for TS, eslint/ruff for lint, prettier/black for formatting. Each MCP server adds language-specific context the agent can query without re-implementing it. Selected per file extension; not all loaded at once.

Configuration

MCP registry per workspace: { '.ts': ['tsc', 'eslint'], '.py': ['pyright', 'ruff'], '.go': ['gopls'] }. Routed by file extension; agent calls mcp.lint(file) and gets structured diagnostics.

Data flow

Eight steps to production

Profile the codebase before any task

On first invocation, run a one-time codebase-context loader: language(s), framework, top entry points, dependency graph. Cache to .claude/context-cache.json. The lead agent loads this on every subsequent task instead of re-discovering. Saves ~4K tokens per turn.

# scripts/profile_codebase.py

import json, subprocess

from pathlib import Path

from collections import Counter

def profile() -> dict:

profile = {"languages": [], "framework": None, "key_dirs": [], "top_imports": []}

if Path("package.json").exists():

pkg = json.loads(Path("package.json").read_text())

profile["languages"].append("typescript")

deps = list((pkg.get("dependencies") or {}).keys())

if "next" in deps: profile["framework"] = "next.js"

elif "react" in deps: profile["framework"] = "react"

if Path("pyproject.toml").exists():

profile["languages"].append("python")

# Top 50 imported modules across the repo

imports = Counter()

for ext, regex in [("ts", r"^import .* from ['\"]"), ("py", r"^(import|from) ")]:

for f in Path(".").rglob(f"*.{ext}"):

if "node_modules" in f.parts or ".venv" in f.parts:

continue

for line in f.read_text(errors="ignore").splitlines()[:50]:

if line.startswith(("import", "from")):

imports[line.split()[1].rstrip(',')] += 1

profile["top_imports"] = [m for m, _ in imports.most_common(50)]

profile["key_dirs"] = [

p.name for p in Path(".").iterdir()

if p.is_dir() and not p.name.startswith(".") and p.name not in ("node_modules", "dist")

]

Path(".claude/context-cache.json").write_text(json.dumps(profile, indent=2))

return profile

if __name__ == "__main__":

print(json.dumps(profile(), indent=2))Always Grep + Glob before Read

The single biggest exploration anti-pattern is Read on every file in a directory. Instead, Grep finds the symbol or pattern across the repo (returns matched files + line numbers); Glob narrows by path; only Read the files that matched. On a 1000-file repo searching for a function name, this is the difference between 1000 Reads and 12 Reads.

# Wrong. Read everything, hope for the best

# for f in glob('**/*.ts'):

# content = read_file(f) # 1000 reads, context floods, agent gets lost

# Right. Grep first to locate, then Read only matched

def find_function_usages(name: str) -> list[dict]:

"""Grep + Glob first, Read only what matched."""

# Step 1: Grep finds the pattern across the repo

grep_result = run_tool("Grep", {

"pattern": rf"\b{name}\b",

"type": "ts",

"output_mode": "files_with_matches", # NOT 'content'. Too verbose

})

matched_files = grep_result["files"] # e.g. 12 files, not 1000

# Step 2: Glob filters further if needed

if len(matched_files) > 30:

# Too many. Narrow by path

matched_files = [f for f in matched_files if "src/" in f]

# Step 3: Read only the files that matched

file_contents = {}

for f in matched_files[:20]: # cap at 20 to bound context

file_contents[f] = run_tool("Read", {"file_path": f})

return [{"file": f, "content": c} for f, c in file_contents.items()]Distribute tools across 2-3 specialised subagents

One 15-tool agent routes worse than three 5-tool specialists. Define Reader (Grep + Glob + Read), Reviewer (Read + Grep + Bash test/lint), Doc-Generator (Read + Write + Glob). Each runs in its own context. The lead agent coordinates and merges results. Routing accuracy stays high because each specialist has a clear domain.

# Specialist subagent definitions

SPECIALISTS = {

"reader": {

"system": "You are a codebase reader. Use Grep+Glob to locate, "

"then Read only what matched. Never modify files.",

"tools": ["Read", "Grep", "Glob"],

},

"reviewer": {

"system": "You are an independent code reviewer. Fresh context, "

"no carry-over from the writer. Check against .claude/rules/.",

"tools": ["Read", "Grep", "Bash(npm test, npm run lint, ruff check)"],

},

"docgen": {

"system": "You generate docs from source. Use the code as ground truth.",

"tools": ["Read", "Write(docs/**, *.md)", "Glob"],

},

}

def spawn_specialist(role: str, task: str) -> dict:

spec = SPECIALISTS[role]

return client.messages.create(

model="claude-sonnet-4.5",

max_tokens=2048,

system=spec["system"],

tools=load_tools(spec["tools"]),

messages=[{"role": "user", "content": task}],

)

# Lead agent coordinates

def refactor_function(name: str, new_name: str):

locations = spawn_specialist("reader", f"Find all usages of {name}")

proposed_diff = generate_renames(locations, new_name)

review = spawn_specialist("reviewer", f"Review this diff: {proposed_diff}")

if review["verdict"] != "approve":

return {"status": "review_failed", "issues": review["issues"]}

return {"status": "ready_to_apply", "diff": proposed_diff, "review": review}Spawn the reviewer in a SEPARATE session

If the reviewer shares context with the writer, it inherits the writer's assumptions and rubber-stamps. The fix is structural: the reviewer subagent runs with a fresh messages array, fresh system prompt, no inherited tool history. It sees only the diff + the project rules. The independence is the entire point. Confirmation bias is structural, not philosophical.

def review_diff_independently(diff: str, file_paths: list[str]) -> dict:

"""Spawn a fresh-context reviewer. NO carry-over from the writer."""

REVIEWER_SYSTEM = """You are an independent code reviewer.

You have NO context from the agent that wrote this diff. You see only:

1. The diff itself

2. The current state of the affected files

3. The project rules in .claude/rules/

Check for:

- Style conformance (matches .claude/rules/)

- Test coverage (does the diff change behavior without test changes?)

- Type safety (any unsafe casts or 'any' added?)

- API breakage (did a public signature change?)

Return JSON: { verdict: 'approve'|'request_changes', issues: [{ file, line, severity, message }], summary }"""

# CRITICAL: fresh messages array, fresh system, fresh tool list

return client.messages.create(

model="claude-sonnet-4.5",

max_tokens=2048,

system=REVIEWER_SYSTEM,

tools=[READ_TOOL, GREP_TOOL, BASH_TEST_LINT_TOOL], # no Edit/Write

messages=[{

"role": "user",

"content": f"Diff to review:\n{diff}\n\nAffected files: {file_paths}",

}],

)Use a scratchpad file for cross-turn state

Long productivity tasks (rename across 50 files, refactor 3 services) span many turns. Don't try to keep all state in the message history. Write it to .claude/scratchpad.md. The lead agent reads it at the start of every turn, updates it after each subagent returns, and trims old entries. The scratchpad is the agent's working memory; the message history is the audit trail.

# .claude/scratchpad.md. Agent working memory across turns

SCRATCHPAD = Path(".claude/scratchpad.md")

def update_scratchpad(section: str, content: str):

"""Append-and-replace by section. Trims old entries past 20."""

if not SCRATCHPAD.exists():

SCRATCHPAD.write_text("# Agent scratchpad\n\n")

text = SCRATCHPAD.read_text()

# Replace existing section or append

marker = f"## {section}"

if marker in text:

# Replace existing section

before, _, rest = text.partition(marker)

_, _, after = rest.partition("\n## ")

new = before + marker + "\n" + content + "\n## " + after if "\n## " in rest else before + marker + "\n" + content

else:

new = text + "\n" + marker + "\n" + content

SCRATCHPAD.write_text(new)

# Usage in the agent loop

update_scratchpad(

"Rename Task: FooBar -> BazQux",

"Files matched: 12. Already updated: 8. Remaining: src/old.ts, src/legacy.ts.",

)Wire MCP servers per language extension

Multi-language repos benefit from language-specific MCP servers. pyright for Python types, tsc --noEmit for TS, eslint and prettier for JS, ruff for Python lint. Don't load all MCP servers at session start. Route by file extension when a tool needs them. Keeps context lean, reduces overhead.

# Per-extension MCP routing

MCP_BY_EXTENSION = {

".ts": ["tsc", "eslint", "prettier"],

".tsx": ["tsc", "eslint", "prettier"],

".py": ["pyright", "ruff", "black"],

".go": ["gopls", "gofmt"],

".rs": ["rust-analyzer", "rustfmt"],

}

def select_mcp_servers(file_paths: list[str]) -> list[str]:

"""Pick the minimum set of MCP servers needed for these files."""

extensions = {Path(f).suffix for f in file_paths}

servers = set()

for ext in extensions:

for server in MCP_BY_EXTENSION.get(ext, []):

servers.add(server)

return sorted(servers)

# In the lead agent before spawning the reviewer

def review_with_mcp(diff: str, files: list[str]):

mcp_servers = select_mcp_servers(files)

return spawn_specialist(

"reviewer",

f"Review diff: {diff}\nUse MCP servers: {mcp_servers}",

)Implement the 4D approval gate

Delegation (agent proposes), Description (clear output), Discernment (human reviews), Diligence (human approves). The 4D Framework. Code-changing actions are gated behind explicit human approval. The agent presents the diff + the reviewer's verdict + a one-paragraph summary; the human clicks approve or rejects. No auto-merge for productivity tasks; the gate exists because the cost of a bad merge is far higher than the friction of one click.

from typing import Literal, TypedDict

class ApprovalRequest(TypedDict):

delegation: str # what the agent proposes (1 sentence)

description: str # the diff + summary of changes

discernment: dict # reviewer subagent's verdict + issues

risk_level: Literal["low", "medium", "high"]

def request_approval(req: ApprovalRequest) -> Literal["approve", "reject"]:

"""4D gate. ALWAYS waits for human input. No auto-approve."""

print("=" * 60)

print(f"DELEGATION: {req['delegation']}")

print(f"\nDESCRIPTION:\n{req['description']}")

print(f"\nDISCERNMENT (review verdict): {req['discernment']['verdict']}")

if req['discernment'].get('issues'):

print(f" Issues:")

for issue in req['discernment']['issues']:

print(f" - {issue['severity']}: {issue['message']}")

print(f"\nRISK LEVEL: {req['risk_level']}")

print("=" * 60)

print("DILIGENCE: Approve? [y/N]: ", end="")

return "approve" if input().strip().lower() == "y" else "reject"

# Usage in the lead agent

def apply_refactor_safely(diff: str, files: list[str], reviewer_verdict: dict):

decision = request_approval({

"delegation": f"Apply rename refactor across {len(files)} files",

"description": f"Diff:\n{diff}",

"discernment": reviewer_verdict,

"risk_level": "medium" if len(files) > 20 else "low",

})

if decision == "approve":

run_tool("Edit", {"diff": diff})

else:

print("Refactor rejected by human; no files changed.")Use Bash structured output, not regex

Tools that emit JSON (gh pr diff --json, git log --pretty=format:%H%x09%s, npm test --reporter json, eslint --format json) replace fragile prose-parsing. Even simple commands have structured-output flags (git status --porcelain, ls -la --time-style=long-iso). Always prefer them. Regex on free-form output is the reason most agent pipelines break in the third week of production.

# Wrong. Regex on prose output

# output = run("git log --oneline -10")

# commits = re.findall(r"^([a-f0-9]{7,}) (.+)$", output, re.M)

# # Breaks when commit message contains a colon or special chars

# Right. Structured output, parse JSON

import json, subprocess

def recent_commits(n: int = 10) -> list[dict]:

"""git log with structured output, no regex needed."""

raw = subprocess.check_output(

["git", "log", f"-{n}",

"--pretty=format:%H%x09%h%x09%s%x09%an%x09%aI"],

text=True,

)

return [

dict(zip(["sha", "short", "subject", "author", "date"], line.split("\t")))

for line in raw.splitlines() if line

]

def lint_results(file: str) -> list[dict]:

"""eslint --format json gives a structured contract."""

raw = subprocess.check_output(

["npx", "eslint", "--format", "json", file],

text=True,

)

return json.loads(raw) # array of { filePath, messages: [{ ruleId, severity, line, message }] }

def pr_diff_files() -> list[str]:

"""gh pr diff --name-only. One file per line, no parsing needed."""

return subprocess.check_output(

["gh", "pr", "diff", "--name-only"],

text=True,

).strip().split("\n")The four decisions

| Decision | Right answer | Wrong answer | Why |

|---|---|---|---|

| Multi-file refactoring task (20+ files) | Distribute work across 2-3 specialist subagents (reader → reviewer → applier); 4-5 tools each | One monolithic 15-tool agent does everything in one session | Routing accuracy drops ~8% per tool past 5; 15 tools degrades quality dramatically. Specialist subagents with narrow tool whitelists keep accuracy high and contexts clean. |

| Exploring a 1000-file codebase for usages of a function | Grep + Glob first to locate matched files, then Read only those | Read every file to 'understand the codebase' before deciding | Read-everything-first floods context and the agent loses track of the original task. Grep returns matched files in seconds with structured output; Read narrows to ~10-20 files instead of ~1000. |

| Reviewing the agent's own code changes | Spawn an independent reviewer subagent with fresh context, no inherited history | Same session generates AND reviews the code | Same-session review inherits the writer's assumptions and rubber-stamps. Confirmation bias is structural here, not philosophical. Independence at the architectural layer is the only fix. |

| Auto-merging the agent's code changes | 4D Framework: Delegation → Description → Discernment → Diligence (human click) | Auto-merge if reviewer subagent says 'approve' | The cost of a bad merge (broken main, rolled-back deploy, lost time across the team) is far higher than the friction of one human click. The 4D gate exists because trust in agent output is best earned incrementally with humans in the loop on destructive actions. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural, deterministic gates, structured fields, pinned state.

Single agent has 15 tools (Read, Write, Edit, Bash, Grep, Glob, WebSearch, gh, npm, jest, eslint, prettier, git, jq, sed). Tool selection accuracy drops; the agent calls Bash(cat) when Read would do, alternates between similar tools, and occasionally hangs comparing options.

AP-25Distribute tools across 2-3 specialised subagents (Reader, Reviewer, Doc-gen). Each subagent has 4-5 tools maximum. Routing accuracy stays high because each specialist has a clear tool set and a clear job.

Agent generates a refactor diff, the reviewer subagent approves it, and the workflow auto-merges. A subtle regression ships to main. Rollback takes 40 min; the team's trust in the agent drops for the rest of the quarter.

AP-264D Framework: Delegation (agent proposes) → Description (clear output) → Discernment (reviewer verdict) → Diligence (human clicks approve). The human gate is non-negotiable for code-changing actions.

Same Claude session writes the code AND reviews it. The reviewer agrees with the writer on every choice. Same context, same assumptions, same blind spots. Confirmation bias passes through; bugs reach production.

AP-27Spawn the reviewer as an independent subagent: fresh messages array, fresh system prompt, no inherited history. It sees only the diff + the project rules. Independence is structural, not philosophical.

Agent runs Read on every file in src/ to 'understand the codebase'. By file 80, context is full of irrelevant content; the agent has lost the original question. Returns generic recommendations instead of specific matches.

Grep + Glob first to locate; Read only matched files. The exploration sequence is non-negotiable: locate → narrow → read. On a 1000-file repo, this is the difference between 1000 Reads and 12.

Workflow parses git log --oneline output with regex to extract commit SHAs. A commit message containing parentheses or a colon breaks the regex; the workflow silently misses commits or attributes them to the wrong author.

Use structured output flags (git log --pretty=format:%H%x09%s, npm test --reporter json, eslint --format json, gh pr diff --json). Parse JSON or fixed-delimiter output. Regex over prose is the reason most agent pipelines break in the third week.

Cost & latency

Grep is one tool call (~200 tokens output, files-with-matches mode). Read on 10-15 files at ~2K tokens each = ~25K input + ~500 output. ~$0.005 typical. Avoiding read-everything saves orders of magnitude on big repos.

Reviewer reads the diff (~3K tokens) + affected files (~6K tokens) + .claude/rules (~500 tokens) + emits structured verdict (~1K). ~$0.012 at Sonnet 4.5 prices. Cheap insurance against a bad merge.

Doc-gen reads source (~4K) + writes JSDoc/docstring (~2K output) + writes README section if asked (~3K output). ~$0.024 typical. Worth running on every commit-back-to-main to keep docs current.

Profile + Grep + Read 12 files + propose diff + Review + Approve + Apply. Sums to ~$0.05 typical. The 4D gate adds zero token cost (human input); reviewer subagent is the dominant line item.

MCP context (language-server output, lint diagnostics) adds ~500-2K tokens per call. Routed per file extension so the cost only applies when language-specific context actually helps.

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Codebase context loader runs once per session, caches to .claude/context-cache.json↗ claude-md-hierarchy

- Grep + Glob exploration sequence enforced; never Read-first across many files↗ context-window

- Specialist subagents defined: Reader, Reviewer, Doc-gen. Each with 4-5 tools↗ subagents

- Reviewer subagent runs in fresh context (no inherited writer history)↗ evaluation

- Tool whitelists scoped per specialist (no Edit/Write on Reader; no Edit on Reviewer)↗ tool-calling

- Scratchpad file (.claude/scratchpad.md) persists cross-turn agent state

- MCP servers selected per file extension; not all loaded at session start↗ mcp

- 4D Framework approval gate before any auto-merge or destructive action↗ 4d-framework

- Bash structured-output flags used everywhere (--format json, --porcelain, etc.)

- Tool count audit per subagent: ≤ 5 tools, no exceptions

- Per-task telemetry: tool selection accuracy, reviewer verdict distribution, approval-gate latency

Run-time

- Codebase context loader runs in <2s on first session; cached for subsequent sessions

- Grep+Glob+Read sequence enforced (lint check on subagent system prompts)

- Specialist subagent definitions reviewed via PR. Tool whitelists are an audit gate

- Reviewer subagent independence verified by integration test (no shared message history)

- 4D Framework approval gate fires on every code-changing action; auto-merge disabled

- Scratchpad TTL + size-bound: archive old entries past 20 sections

- MCP server health checks per session start; degraded mode on outage

- Telemetry: tool-selection accuracy, reviewer verdict distribution, approval-gate p95 latency

Five exam-pattern questions

A developer-productivity agent explores a 1,000-file codebase looking for references to a function. It uses `Read` on every file and runs out of context after 200 files. How should you fix this?

Read-everything with the Grep + Glob first, Read only matched sequence. Step 1: Grep(pattern: '\\bFunctionName\\b', output_mode: 'files_with_matches') returns the 12 files that actually contain the symbol. Step 2: Glob narrows further if needed (e.g. only src/**). Step 3: Read only the matched files. On a 1,000-file repo this is the difference between 1,000 Reads and 12. Orders of magnitude on tokens and the difference between a focused agent and a context-flooded one. Tagged to AP-28.An agent auto-generates and auto-reviews code in a single Claude session. The reviewer always agrees with the writer, even when bugs slip through. What architectural change fixes this?

messages array, fresh system prompt, and a tool whitelist scoped to [Read, Grep, Bash(test, lint)]. No Edit, no Write. The reviewer sees only the diff + the project rules; it has zero context from the writer. Confirmation bias is structural in same-session review; only architectural independence eliminates it. Tagged to AP-27.You're building a documentation agent that processes 12 source files across React components, API routes, and database queries. Your first attempt is one agent with 15 tools. How many specialised subagents should you create?

AP-25.Your productivity agent successfully rewrites a critical function and immediately auto-merges the change to main. A subtle regression ships to production. What gate was missing?

AP-26.An agent uses `git log --oneline` and parses the output with regex to extract commit SHAs and messages. After a commit message containing parentheses ships, the workflow silently misattributes commits. What's the rule?

git log --oneline parsed by regex with git log --pretty=format:%H%x09%h%x09%s%x09%an%x09%aI (tab-delimited, no ambiguity). Or --pretty=format:%H then git show for full info. Same applies to npm test --reporter json, eslint --format json, gh pr diff --json. Regex over prose is the reason most agent pipelines break in their third week. Structured output is a contract; regex on prose is a guess. Tagged to AP-29.Frequently asked

Should I use Read or Grep to explore a large codebase?

Can an agent review code it just generated in the same session?

How many tools should a developer-productivity agent have?

Do I need MCP servers for code-productivity workflows?

pyright for Python types, tsc --noEmit for TS, ruff for Python lint, eslint for JS. Route per file extension. Don't load all MCP servers at session start.Should productivity agents auto-merge code?

How do you handle multi-language codebases?

--type ts or --type py to narrow searches by language. The Reviewer subagent loads pyright for .py files and tsc for .ts files. The Doc-Generator emits language-appropriate doc syntax (JSDoc for JS/TS, docstrings for Python). One agent topology, multiple language plug-ins.