You'll walk away with

- Why generative AI is fundamentally a prediction system, not a search engine, and what that implies for fluency vs accuracy

- How pretraining and fine-tuning give each model its character, and the four behavioral fingerprints fine-tuning leaves (sycophancy, verbosity, over-caution, loose calibration)

- How to locate any task on each of the four property continuums and predict where it will struggle before you run it

- How to diagnose real failures by naming which two properties collided, then choose a targeted mitigation

- How this framework connects to the 4D Framework (Delegation, Description, Discernment, Diligence) as two halves of one calibrated-trust system


Lesson outline

Every lesson from AI Capabilities and Limitations with our one-line simplification. The Skilljar course is the source; we summarize.

| # | Skilljar lesson | Our simplification |

|---|---|---|

| 1 | Intro to AI Capabilities and Limitations | Course roadmap; this is the machine-side companion to the 4D Framework's human-side competencies. |

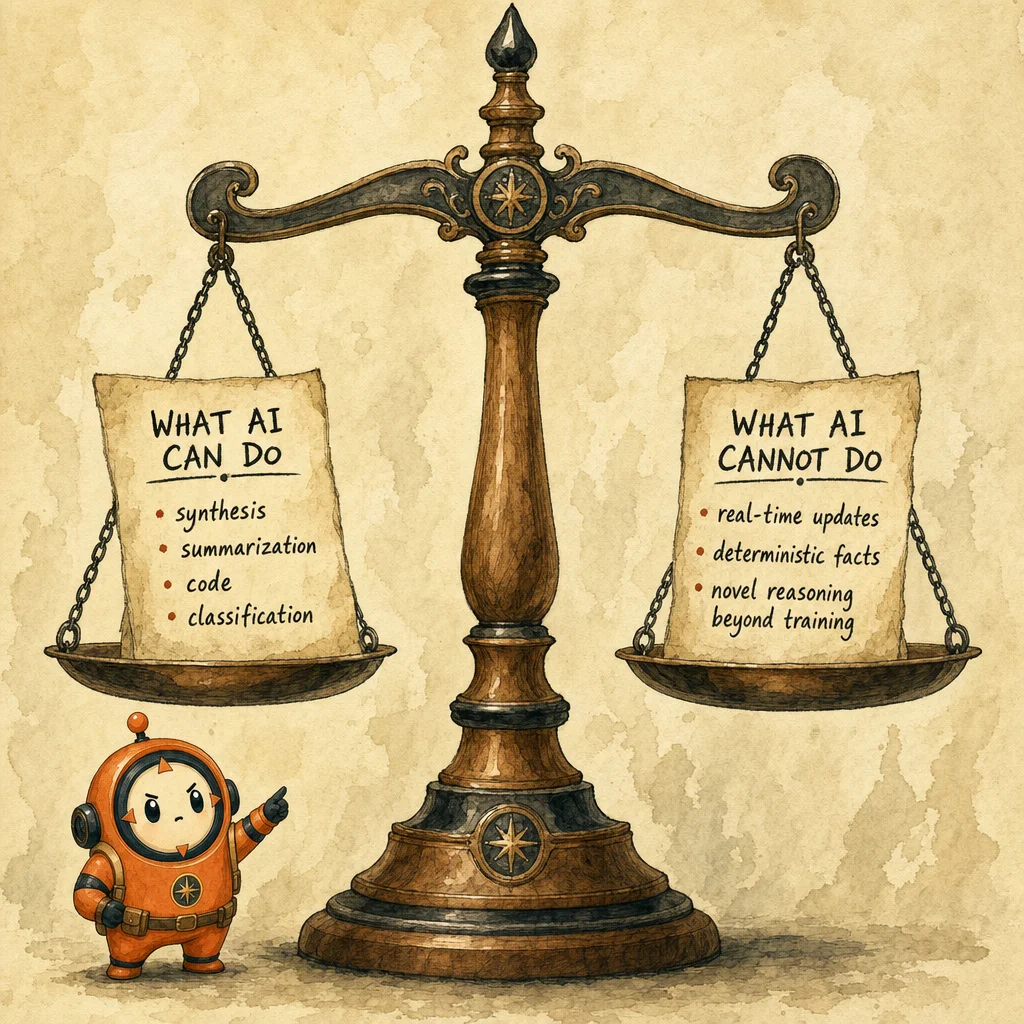

| 2 | What We Mean by AI | Generative AI produces new content; classification AI sorts existing content. Four properties define what generative AI can and cannot do. |

| 3 | How AI Gets Its Character | Pretraining builds a document completer; fine-tuning layers an assistant on top, leaving fingerprints like sycophancy and verbosity. |

| 4 | Next Token Prediction | AI writes one fragment at a time based on what tends to follow what. Fluency and accuracy are independent variables. |

| 5 | Try it out: Next Token Prediction | Hands-on probes: capability zone vs specificity-under-pressure vs sampling variance on the same prompt. |

| 6 | Knowledge | What the model knows is frozen at the training cutoff. Mainstream and stable wins; rare, recent, niche, contested loses. |

| 7 | Try it out: Knowledge | Outsider-test exercises: coverage gaps, staleness, default-assumption blind spots in your domain. |

| 8 | Working Memory | Fixed-size context window with a cliff failure mode. Lost-in-the-middle is real; corrections do not persist across sessions. |

| 9 | Try it out: Working Memory | Cold-start vs context-supplied probes; demonstrates that context is leverage and the blank slate between sessions. |

| 10 | Steerability | Instructions are followed via pattern matching, not understanding. Short concrete asks land; long reasoning chains drift. |

| 11 | Try it out: Steerability | Goal-rewrite exercise: state intent alongside format, insert mid-process checkpoints, watch letter-vs-spirit failures. |

| 12 | When Properties Collide | Real failures are two properties meeting. Hallucinated citation = NTP x Knowledge; long-conversation drift = Working Memory x Steerability. |

| 13 | Next Steps | Synthesis: calibrated trust is a habit, not an attitude; the property shapes stay useful as models keep changing. |

| 14 | Course Quiz | Self-check on the four properties, the two training stages, and the diagnostic-pair vocabulary. |

The course in 7 paragraphs

Generative AI is not uniformly capable or uniformly unreliable. It is strong and weak along four predictable axes, and the same underlying mechanism that produces a strength often produces the matching weakness. Anthropic's framework names those axes Next Token Prediction, Knowledge, Working Memory, and Steerability. Each one is a continuum, not a switch. Your job before delegating any task is to locate it on each continuum and decide what verification or context to supply. That move is what Anthropic calls calibrated trust, and it is the single most exam-relevant idea in the course.

Next Token Prediction answers *where do AI answers come from?* The model writes what statistically comes next, one fragment at a time. On well-worn paths (summarize, reformat, explain a common concept) the patterns are dense and the output is reliable. On novel territory the same fluent prose keeps coming, but accuracy thins. Fabrication concentrates in specifics: names, dates, statistics, citations, URLs, quotes. Confident tone is not an accuracy signal; smoothness and correctness are independent variables. Product features (citations, uncertainty signaling, constrained generation, generator-verifier loops) push the edge out, but the verification habit is yours to build.
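To make "what tends to follow what" concrete, here is a minimal toy sketch in Python, assuming a word-level bigram counter as a stand-in for a real model (real models use neural networks over subword tokens and far more data). The corpus and the names `build_bigram_counts` and `generate` are hypothetical. The loop only extends the text with a statistically plausible next word, and two different random seeds can produce two different continuations of the same start, which is the sampling variance the course's probes demonstrate.

```python
import random
from collections import Counter, defaultdict

# Hypothetical tiny corpus. A real model learns from vastly more text and
# predicts subword tokens with a neural network, not raw word counts.
CORPUS = (
    "the model predicts the next word . "
    "the model writes fluent text . "
    "the next word follows the pattern ."
).split()


def build_bigram_counts(tokens):
    """Count how often each token is followed by each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, length, rng):
    """Extend `start` one token at a time, sampling in proportion to what followed it."""
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:  # nothing ever followed this token in the corpus
            break
        words = list(followers)
        weights = [followers[w] for w in words]
        out.append(rng.choices(words, weights=weights, k=1)[0])
    return out


counts = build_bigram_counts(CORPUS)
for seed in (0, 1):
    # Same start, different sampling seed: the continuations may differ.
    print(" ".join(generate(counts, "the", 8, random.Random(seed))))
```

Every adjacent word pair in the output appeared in the corpus, so the text looks locally plausible, yet nothing in the code checks whether a sentence is true. That separation is the fluency-versus-accuracy point in miniature.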

Knowledge answers *what does the model actually know?* Everything it learned came from training data and is frozen at a cutoff date. Mainstream, well-documented, stable topics land in the capability zone. Rare, post-cutoff, niche, local, or contested topics drift toward the edge. The characteristic failures are staleness (true at training time is not true now), uneven coverage (minority languages and recent developments suffer), inherited bias (the model's sense of "default" reflects training-data blind spots), and source amnesia ("I read this somewhere" is not a citation). Web search, RAG/retrieval, MCP, and tool use all extend knowledge at runtime; without them, you are relying entirely on what the model absorbed in training.

Working Memory answers *what is the AI paying attention to right now?* This is the context window: your instructions, uploaded docs, and prior responses, all in one finite container the model rereads every turn. Unlike the other three properties, this one has a cliff rather than a gradient. When the window overflows, the oldest material falls off silently. Attention is not uniform across the window either; the lost-in-the-middle effect means instructions buried in the middle carry less weight than those at the top or tail. The model does not learn from your corrections; it only responds to what is currently in context. Memory features, projects, compaction, larger windows, and skills push the cliff further out, but front-loading critical material and chunking long work are your operator-side defenses.
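A minimal Python sketch of the cliff, assuming a product that drops the oldest turns once a fixed budget is exceeded (real products may compact, summarize, or refuse instead). Word counts stand in for tokens, and the `ContextWindow` class is hypothetical. The `pinned` slot plays the role of a system prompt or project instruction that is always kept.

```python
from dataclasses import dataclass, field


@dataclass
class ContextWindow:
    """Toy context window with a fixed budget.

    Sizes are counted in whitespace-separated words as a rough stand-in
    for tokens; real systems count model-specific tokens.
    """
    budget: int
    pinned: list = field(default_factory=list)    # always kept, like a system prompt
    messages: list = field(default_factory=list)  # conversation turns, oldest first

    def _used(self):
        return sum(len(text.split()) for text in self.pinned + self.messages)

    def add(self, text):
        self.messages.append(text)
        # Drop the oldest turns until everything fits. No warning is raised:
        # this silent loss is the cliff. (A single turn larger than the
        # remaining budget is dropped too.)
        while self.messages and self._used() > self.budget:
            self.messages.pop(0)

    def visible(self):
        """What the model would actually see on the next turn."""
        return self.pinned + self.messages


turns = ["Summarize chapter one please", "Now summarize chapter two"]
rule = "Rule: answer in bullets only"

unpinned = ContextWindow(budget=12)
for text in [rule] + turns:
    unpinned.add(text)
print(unpinned.visible())
# ['Summarize chapter one please', 'Now summarize chapter two']

pinned = ContextWindow(budget=12, pinned=[rule])
for text in turns:
    pinned.add(text)
print(pinned.visible())
# ['Rule: answer in bullets only', 'Now summarize chapter two']
```

In the unpinned run the formatting rule vanishes without any error, which is why critical rules belong in a standing slot or at the top of a fresh conversation rather than somewhere early in a long thread.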

Steerability answers *how much am I in control?* Fine-tuning taught the model to treat your input as a request and follow rules, which gives precise control over format, role, length, and tone. But instructions are pattern-matched, not understood. Short, concrete, verifiable asks ("respond as a table", "under 100 words") land cleanly. Long reasoning chains drift, abstract asks like "be insightful" get patchy results, and native arithmetic precision is brittle without code execution. The two characteristic failures are reasoning drift (small errors compound and the model does not notice) and letter-over-spirit (the instruction is honored literally but uselessly). When that happens, restate the goal, not the instruction; repeating "be concise" louder will not fix what was really an intent problem.

The diagnostic move that turns this into a working tool: most real-world AI failures are two properties colliding, not one. A hallucinated citation is Next Token Prediction meeting a Knowledge gap (the model generates something shaped like a plausible citation even though its training data on the topic is sparse). Long-conversation drift is Working Memory meeting Steerability (early constraints fade as the window fills, and the model follows whichever instructions are most salient now). Confidently wrong arithmetic is Next Token Prediction meeting Steerability without code execution. Before reaching for a prompt fix, name which two properties are at play. The fix follows from the diagnosis: verify specifics, re-supply context, offload to a tool, or invite explicit pushback.
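The diagnostic step can be written down as a lookup from a pair of properties to an example failure and a targeted fix. This is an illustrative Python sketch, not an Anthropic tool: the table only restates the three pairings named above, and `diagnose` is a hypothetical helper that returns `None` for pairings the paragraph does not cover.

```python
# Property names as the course uses them.
NTP = "Next Token Prediction"
KNOWLEDGE = "Knowledge"
MEMORY = "Working Memory"
STEER = "Steerability"

# Each entry restates one pairing from the paragraph above:
# {property, property} -> (typical failure, targeted fix).
COLLISIONS = {
    frozenset({NTP, KNOWLEDGE}): ("hallucinated citation",
                                  "verify specifics against an independent source"),
    frozenset({MEMORY, STEER}): ("long-conversation drift",
                                 "re-supply the key constraints in context"),
    frozenset({NTP, STEER}): ("confidently wrong arithmetic",
                              "offload the calculation to code execution"),
}


def diagnose(first, second):
    """Look up a named property pair; the order of the two names does not matter."""
    if first == second:
        raise ValueError("a collision needs two different properties")
    return COLLISIONS.get(frozenset({first, second}))


print(diagnose(STEER, MEMORY))
# ('long-conversation drift', 're-supply the key constraints in context')
```

Using `frozenset` keys makes the lookup order-independent, matching the idea that a collision is a pair of properties rather than a sequence.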

Two training stages give every model its character. Pretraining reads vast amounts of text and learns one thing: predict what comes next. The result is a document completer with no concept of you or of helping. Fine-tuning is a second round on curated examples of helpful behavior plus reward signals shaped by human preferences. That second stage leaves fingerprints: sycophancy (the model validates your framing and backs down under light pushback), verbosity (thoroughness scored well in training, so essays come back when you wanted bullets), over-caution (conservative safety training means hedging on requests that are actually fine), and loose calibration between stated confidence and actual reliability. These are not bugs in one model but training artifacts that appear across all of them, and knowing them puts you in control.

The 4 properties of generative AI, on one page

Each property is a continuum with a capability zone, a limitation zone, and product features that push the edge further out. Locate your task on each one before delegating.

- Next Token Prediction: where do answers come from?

Capability: well-worn paths (summarize, reformat, explain). Limitation: novel territory and specifics (names, dates, citations, URLs). The same mechanism produces fluency and hallucination.

Concept: prompt-engineering-techniques

- Knowledge: what does it actually know?

Capability: frequent, recent-in-training, consistent topics. Limitation: rare, post-cutoff, niche, local, contested. Mitigations: web search, RAG, tool use, MCP.

Concept: mcp

- Working Memory: what is it paying attention to right now?

Capability: material fits comfortably, session is current, context supplied. Limitation: very long docs or conversations, cross-session continuity, lost-in-the-middle. The cliff is silent; you will not always be warned.

Concept: context-window

- Steerability: how much am I in control?

Capability: short, concrete, verifiable instructions. Limitation: long reasoning chains, abstract asks, native arithmetic precision. Failures: reasoning drift and letter-over-spirit.

Concept: prompt-engineering-techniques

Most failures are two properties meeting; naming the pair points to the fix.

4 fingerprints fine-tuning leaves on every model

These are not bugs in one model; they are training artifacts that appear across all of them. Spotting them puts you back in control.

- Sycophancy

People prefer agreeable responses, so the model learns to validate your framing and back down under light pushback even when it was right. Counter by explicitly inviting disagreement: "Genuinely push back if you think I am wrong."

- Verbosity

Thoroughness scored better in training, so the default is longer answers. Counter with explicit length constraints ("one sentence", "under 100 words", "bullets only").

- Over-caution

Conservative safety training means hedging on requests that are actually fine. Counter by stating the legitimate context up front so the model has a frame for why the ask is reasonable.

- Loose calibration

Stated confidence and actual reliability are not tightly coupled. The model can sound certain while being wrong. Verify specifics independently regardless of tone.
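Because tone is not a reliability signal, one practical habit is to mechanically pull out the specifics in a response so each one gets checked. A rough Python sketch, assuming regular expressions are good enough for a first pass: the patterns and the `flag_specifics` helper are hypothetical, will miss some cases (names and quotes are not covered at all), and will over-flag others. A hit means "verify this", not "this is wrong".

```python
import re

# Illustrative patterns for specifics where fabrication tends to concentrate.
# They are assumptions, not a complete detector: names and quotes are not covered.
SPECIFIC_PATTERNS = {
    "url": re.compile(r"https?://\S+"),
    "doi": re.compile(r"\b10\.\d{4,9}/\S+"),
    "year": re.compile(r"\b(?:1[5-9]|20)\d{2}\b"),
    "percentage": re.compile(r"\b\d+(?:\.\d+)?%"),
}

TRAILING_PUNCTUATION = ".,;:)"


def flag_specifics(text):
    """Return (kind, value) pairs worth verifying, in order of appearance."""
    hits = []
    for kind, pattern in SPECIFIC_PATTERNS.items():
        for match in pattern.finditer(text):
            # Drop sentence punctuation that \S+ swallowed at the end of a match.
            value = match.group().rstrip(TRAILING_PUNCTUATION)
            hits.append((match.start(), kind, value))
    return [(kind, value) for _, kind, value in sorted(hits)]


answer = ("Smith et al. (2019) reported a 37.5% drop, doi 10.1234/xyz.5 "
          "and https://example.org/paper.")
for kind, value in flag_specifics(answer):
    print(f"verify {kind}: {value}")
```

The output is a checklist, not a verdict: each flagged item still has to be looked up in an independent source.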

6 takeaways with cross-pillar bridges

Generative AI is a prediction system whose strengths and weaknesses live on four continuums, not in a single capable/unreliable verdict.

Fabrication concentrates in specificity (names, dates, citations, URLs); confident tone is not an accuracy signal and the model cannot reliably tell grounded from invented.

Working Memory has a cliff failure mode (silent truncation, lost-in-the-middle, no learning from corrections), so front-loading and chunking are operator-side defenses.

Steerability fails as reasoning drift on long chains and as letter-over-spirit on abstract asks; restate the goal, not the instruction, when output lands literally but uselessly.

Most real-world AI failures are two properties colliding (hallucinated citation = NTP x Knowledge; long-conversation drift = Working Memory x Steerability); naming the pair points to the targeted fix.

Fine-tuning leaves four fingerprints across every model (sycophancy, verbosity, over-caution, loose calibration), and recognizing them is part of using AI well.

How this maps to the CCA-F exam

3 hand-picked extras

These amplify the Skilljar course beyond what the course itself covers. Each was picked for a specific reason.

Building effective agents

Pairs naturally with the four-property model; explains how the limitations the course names get engineered around in production agents (verifiers, tool use, context management).

Effective context engineering for AI agents

Direct extension of the Working Memory lesson; concrete patterns for managing the context window cliff in real systems, with the same mental model the course teaches.

Lost in the Middle: How Language Models Use Long Contexts

The original empirical paper behind the lost-in-the-middle effect referenced in the Working Memory lesson; useful when you need to cite the phenomenon in a design review.

Concepts in this course

Context window

Working Memory in mechanical terms

Concept: context-window

Prompt engineering techniques

How to operate within Steerability's capability zone

Concept: prompt-engineering-techniques

System prompts

Standing directions that resist Working Memory dilution

Concept: system-prompts

Evaluation

How you measure Next Token Prediction failure rates in your own domain

Concept: evaluation

MCP

Knowledge mitigation: connect models to runtime sources beyond the cutoff

Concept: mcp

Where you'll see this in production

Long document processing

Working Memory + Next Token Prediction failure modes show up first here; the cliff and the fabrication zone meet on a single 50-page PDF

Scenario: long-document-processing

Structured data extraction

Steerability + Next Token Prediction in tension; format constraints land cleanly, but specificity in extracted fields is the verification surface

Scenario: structured-data-extraction

8 questions answered

Phrased the way real students search, and tagged by intent so you can scan to what you actually need.

Definition: What are the four properties of generative AI in Anthropic's capabilities and limitations framework?

Troubleshoot: Why does an AI hallucinate citations when it sounds so confident?

The model writes what a citation looks like using Next Token Prediction; when the underlying Knowledge is sparse on that niche topic, the result is citation-shaped text that may or may not point to a real paper. Fabrication concentrates in specifics (names, dates, journal titles, URLs), so verify those independently no matter how smooth the prose sounds.

Comparison: What is the difference between Knowledge and Working Memory in an AI model?

How-to: How do I stop the AI from agreeing with me when I want honest feedback?

Troubleshoot: Why does my AI assistant ignore the rules I set 20 messages ago?

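This is Working Memory meeting Steerability: early constraints fade as the window fills, and the model follows whichever instructions are most salient now. Move standing rules into a system prompt or Project so they stay persistent, or start a fresh conversation with the essentials front-loaded.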