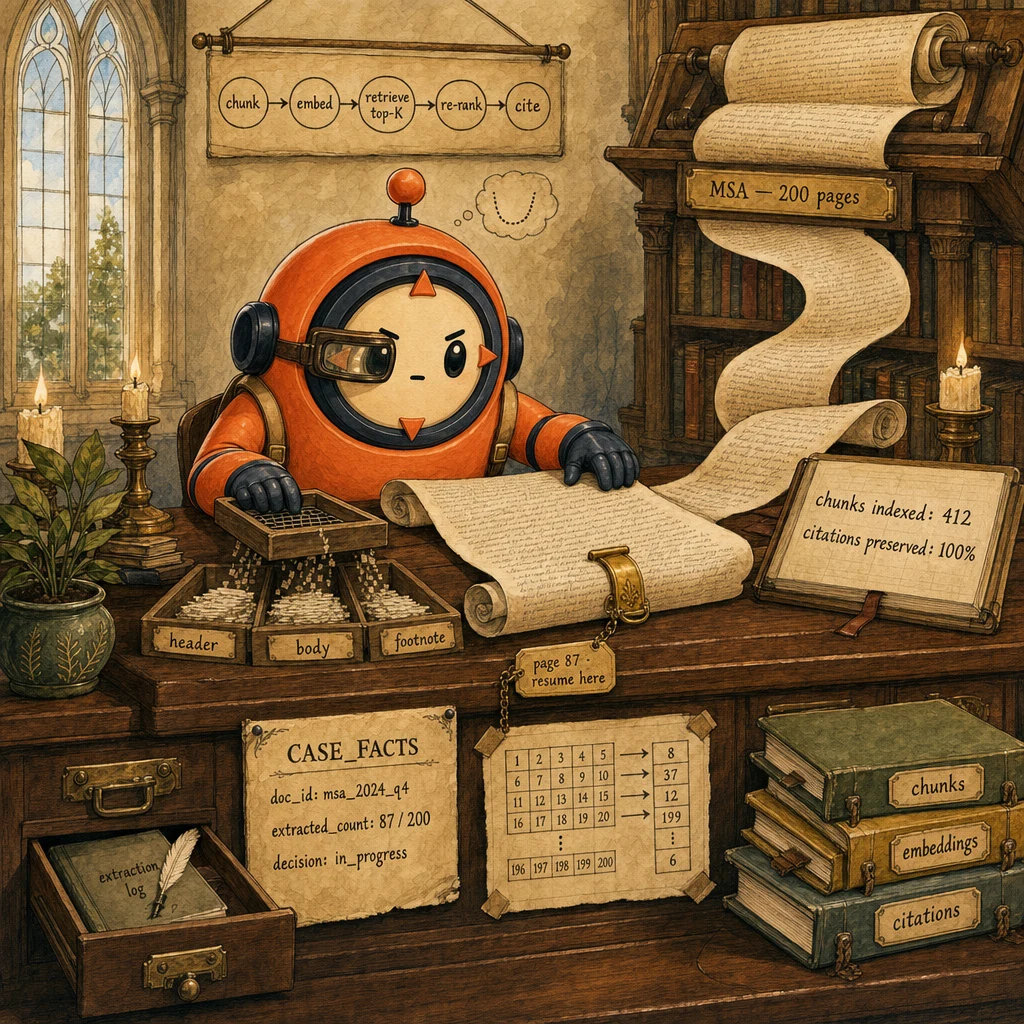

The problem

What the customer needs

- Process 200-page contracts without max_tokens errors and without losing the order/case ID partway through.

- Audit-grade citations. Every extracted clause traces back to a specific chunk and page number.

- Bulk overnight runs for backfills. 1000 documents in one batch, results next morning.

Why naive approaches fail

- Stuff the whole document into the prompt → max_tokens at page 120; lost-in-the-middle drops the order ID established on page 1.

- Progressive summarization of facts → '$247.83' becomes '~$250' in the case-facts; audit fails because exact values were paraphrased.

- RAG without citation tracking → model hallucinates source pages; auditor can't verify any claim against the original document.

What the architecture does instead

- Semantic chunking (paragraph + section boundaries), not fixed-size, with 10-20% overlap

- Top-K retrieval (K = 5 typical) returns only relevant chunks; full document never enters context

- CASE_FACTS block pinned at the top of every prompt. Never summarized; only the conversation history is

- Checkpoint-and-resume on max_tokens: state saved, fresh session, resume from checkpoint

- Citations (chunk_id + page) propagate through every extraction; auditor can verify each claim

- Batch API for bulk overnight extraction (≥ 100 docs at 50% off)

The system

What each part does

Five components, each owning one concept.

Semantic Chunker

paragraph + section boundaries, not fixed-size

Splits the document at natural boundaries (sections, paragraphs, list items) rather than at fixed byte counts. Preserves the meaning of each chunk; a sentence is never cut in half. Adds 10-20% overlap between adjacent chunks so a clause that spans a boundary still appears whole in at least one chunk. Fixed-size chunking destroys context; semantic chunking preserves it.

Configuration

Chunk size: 500-2000 tokens (≈400-1500 words). Overlap: 10-20%. Boundary precedence: section header → paragraph → sentence (never break mid-sentence). Each chunk gets a deterministic chunk_id and a page number for citation.
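The boundary precedence above can be sketched in a few lines. This is a minimal illustration, assuming plain-text input with blank-line paragraphs; `SECTION_HEADER` is a hypothetical pattern standing in for whatever header styles your contracts actually use, and overlap and page tracking are left out so the precedence logic stays visible. A new chunk starts early at a section header once the buffer reaches `min_tokens`; an oversized paragraph falls back to sentence splits, and a single sentence is never cut, even if it alone exceeds the limit.

```python
import re

# Hypothetical header styles: markdown '#', 'ARTICLE ...', 'Section 3 ...'
SECTION_HEADER = re.compile(r"^(#{1,6} |ARTICLE |Section \d)", re.IGNORECASE)
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def approx_tokens(text: str) -> int:
    return len(text) // 4  # rule of thumb: ~4 characters per token


def units(text: str, max_tokens: int) -> list[tuple[str, str]]:
    """(boundary_kind, text) pairs: paragraphs, or sentences for oversized paragraphs."""
    out: list[tuple[str, str]] = []
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        kind = "section" if SECTION_HEADER.match(para) else "paragraph"
        if approx_tokens(para) <= max_tokens:
            out.append((kind, para))
        else:
            # Lowest-precedence boundary: split at sentence ends, never inside a sentence
            sentences = [s for s in SENTENCE_END.split(para) if s]
            out.append((kind, sentences[0]))
            out.extend(("sentence", s) for s in sentences[1:])
    return out


def pack(text: str, min_tokens: int = 500, max_tokens: int = 2000) -> list[str]:
    """Greedy packing that prefers to break at section headers."""
    chunks: list[str] = []
    buf: list[str] = []
    for kind, unit in units(text, max_tokens):
        too_big = approx_tokens("\n\n".join(buf + [unit])) > max_tokens
        # Highest-precedence boundary: start a new chunk at a section header
        # once the current chunk is big enough to stand on its own
        at_section = kind == "section" and approx_tokens("\n\n".join(buf)) >= min_tokens
        if buf and (too_big or at_section):
            chunks.append("\n\n".join(buf))
            buf = []
        buf.append(unit)
    if buf:
        chunks.append("\n\n".join(buf))
    return chunks
```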

Retrieval Index (top-K)

embeddings + cosine similarity

Embeds every chunk once at ingest time; stores embeddings in a vector index (FAISS, pgvector, Pinecone). At extraction time, the agent's question gets embedded; the index returns the top-K most-similar chunks (K = 5 typical). Only those K chunks enter context. The full document never does. Latency p95 < 100ms even on 10K-chunk documents.

Configuration

Embedding model: Voyage-3 or OpenAI text-embedding-3-small (OpenAI lists text-embedding-3-small at ~$0.02 / M tokens). Distance: cosine similarity. K: 5 (the sweet spot: bigger dilutes context, smaller misses relevant chunks). If precision matters, re-rank the top-20 down to the top-5 with Claude Haiku.
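For intuition about what the index computes, here is brute-force cosine top-K over toy vectors in pure Python. It is a sketch of the scoring rule only: FAISS, pgvector and Pinecone use approximate nearest-neighbour structures to reach the stated latency, and `top_k` / `cosine` are illustrative names, not any library's API.

```python
import heapq
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], index: dict[str, list[float]],
          k: int = 5) -> list[tuple[str, float]]:
    """Score every chunk embedding against the query; keep the k best (chunk_id, score)."""
    scored = ((chunk_id, cosine(query, vec)) for chunk_id, vec in index.items())
    return heapq.nlargest(k, scored, key=lambda item: item[1])
```

With unit-normalized embeddings, cosine similarity reduces to a plain dot product, which is why many indexes store normalized vectors.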

CASE_FACTS Block

immutable anchor at prompt top

Pinned at the very top of every system prompt iteration. Holds doc_id, extracted_count, decisions already made, policy_cap. Survives every chunk swap. NEVER summarized. Exact values like '$247.83' stay exact across hundreds of turns. The conversation history below it CAN be summarized; case-facts cannot. The architectural difference between 'reliable extraction' and 'paraphrased nonsense'.

Configuration

system: "CASE_FACTS (immutable; re-read every turn): doc_id={doc_id}, extracted_count={count}, last_clause_id={cid}". Updated by hooks after state-changing tool calls.
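One way to make "immutable, updated only by hooks" structural is a frozen dataclass that is replaced, never mutated, after each state-changing tool call. A minimal sketch under assumptions: the field set mirrors the configuration line plus `policy_cap`, `after_tool_call` is a hypothetical hook name, and money is a `Decimal` so '$247.83' cannot drift the way a float or a summary can.

```python
from dataclasses import dataclass, replace
from decimal import Decimal


@dataclass(frozen=True)
class CaseFacts:
    doc_id: str
    policy_cap: Decimal  # exact decimal, never a float
    extracted_count: int = 0
    last_clause_id: str = "none"


def render_case_facts(cf: CaseFacts) -> str:
    """Rendered verbatim at the top of every system prompt; never summarized."""
    return (
        "CASE_FACTS (immutable; re-read every turn): "
        f"doc_id={cf.doc_id}, extracted_count={cf.extracted_count}, "
        f"last_clause_id={cf.last_clause_id}, policy_cap=${cf.policy_cap}"
    )


def after_tool_call(cf: CaseFacts, tool_name: str, tool_input: dict) -> CaseFacts:
    """Hypothetical post-tool hook: only state-changing tools produce new facts."""
    if tool_name != "extract_clause":
        return cf  # read-only tools leave the facts untouched
    return replace(cf, extracted_count=cf.extracted_count + 1,
                   last_clause_id=tool_input["clause_id"])
```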

Checkpoint-and-Resume

the architectural fix for max_tokens

When the model returns stop_reason: max_tokens, the harness writes the current case-facts + last extracted record + chunk position to a durable store (Convex DB, S3, local JSONL), then starts a FRESH session and reads the checkpoint as its case-facts. The new session continues from where the old left off. No data loss; no re-processing; no manual intervention.

Configuration

On stop_reason==max_tokens: persist({doc_id, extracted_count, last_chunk_id, partial_extraction}). New session: system_prompt loads case-facts from checkpoint. Idempotent on the chunk_id key.
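A minimal sketch of the two properties this configuration asks for, assuming a local JSON file as the durable store. `persist` writes to a temporary file in the same directory and renames it over the target with `os.replace`, so a crash leaves either the old checkpoint or the new one, never a torn file. `merge_extraction` keys extractions by chunk_id, so replaying a chunk after a resume overwrites rather than duplicates. Both function names are hypothetical.

```python
import json
import os
import tempfile


def persist(state: dict, path: str) -> None:
    """Atomically replace the checkpoint at `path` with `state`."""
    directory = os.path.dirname(os.path.abspath(path))
    os.makedirs(directory, exist_ok=True)
    # Temp file in the same directory, so the rename stays on one filesystem
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
            f.flush()
            os.fsync(f.fileno())  # data on disk before the rename
        os.replace(tmp, path)  # atomic rename on POSIX filesystems
    except BaseException:
        os.unlink(tmp)  # never leave a half-written temp file behind
        raise


def merge_extraction(state: dict, chunk_id: str, extraction: dict) -> dict:
    """Idempotent on chunk_id: replaying a chunk overwrites, never duplicates."""
    done = dict(state.get("extractions", {}))
    done[chunk_id] = extraction
    return {**state, "extractions": done, "last_chunk_id": chunk_id,
            "extracted_count": len(done)}
```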

Citation Tracker

chunk_id + page on every output

Every tool result includes the chunk_id(s) and page number(s) that supported the extraction. The model's output schema requires citations: [{chunk_id, page, span?}]; downstream consumers can click any extracted value and see the exact paragraph in the original document. Audit-grade provenance, structurally enforced.

Configuration

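extract_clause input_schema (the tool input is the model's output): { clause_text, clause_type, citations: [{ chunk_id, page, span?: 'character offsets within chunk' }] }, with citations required (minItems: 1). A clause without at least one citation fails schema validation; validate tool inputs in the harness too, rather than assuming the model always complies.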
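Because a schema is only as strong as whatever validates it, a harness-side check is a useful second gate. The sketch below assumes `retrieved` maps each chunk_id that was actually in context to its page and text; `validate_citations` is a hypothetical helper, and the verbatim check assumes extractions quote the chunk text exactly (relax it if you normalize whitespace).

```python
def validate_citations(extraction: dict, retrieved: dict[str, dict]) -> list[str]:
    """Return a list of problems; an empty list means the citations check out."""
    problems: list[str] = []
    cites = extraction.get("citations") or []
    if extraction.get("clause_text") == "not_found":
        return ["not_found must carry empty citations"] if cites else []
    if not cites:
        return ["clause emitted without any citation"]
    for c in cites:
        chunk = retrieved.get(c.get("chunk_id"))
        if chunk is None:  # model cited a chunk it never saw
            problems.append(f"cites chunk {c.get('chunk_id')!r}, which was not retrieved")
            continue
        if c.get("page") != chunk["page"]:
            problems.append(f"page {c.get('page')} != chunk page {chunk['page']}")
        span = c.get("span")
        if span is not None:  # expected form: 'start-end' character offsets
            start, _, end = span.partition("-")
            if not (start.isdigit() and end.isdigit()
                    and int(start) < int(end) <= len(chunk["text"])):
                problems.append(f"span {span!r} falls outside the chunk")
    # Exact values must be quoted, not paraphrased
    cited_texts = [retrieved[c["chunk_id"]]["text"]
                   for c in cites if c.get("chunk_id") in retrieved]
    if not any(extraction.get("clause_text", "") in t for t in cited_texts):
        problems.append("clause_text does not appear verbatim in any cited chunk")
    return problems
```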

Data flow

Eight steps to production

Semantic chunking with overlap

Walk the document; split at section / paragraph / sentence boundaries (in that precedence). Aim for 500-2000-token chunks; add 10-20% overlap between adjacent chunks so a clause spanning a boundary stays whole in at least one chunk. Each chunk gets a deterministic chunk_id (hash of content) and a page number for citation.

```python
import hashlib
from typing import TypedDict


class Chunk(TypedDict):
    chunk_id: str
    page: int  # page on which the chunk's first paragraph starts
    text: str


def split_into_paragraphs(page_text: str) -> list[str]:
    return [p.strip() for p in page_text.split("\n\n") if p.strip()]


def approx_tokens(text: str) -> int:
    return len(text) // 4  # rule of thumb: ~4 characters per token


def _emit(buffer: list[tuple[int, str]]) -> Chunk:
    text = "\n\n".join(p for _, p in buffer)
    return {
        "chunk_id": hashlib.sha256(text.encode()).hexdigest()[:12],  # deterministic: hash of content
        "page": buffer[0][0],
        "text": text,
    }


def chunk_document(pages: list[str], target_tokens: int = 1200,
                   overlap: float = 0.15) -> list[Chunk]:
    """Paragraph-boundary chunking with paragraph-level overlap.

    Simplified: splits at paragraphs only. Section-header precedence and a
    sentence-level fallback for oversized paragraphs are not shown here.
    """
    chunks: list[Chunk] = []
    buffer: list[tuple[int, str]] = []  # (page_num, paragraph)

    for page_num, page_text in enumerate(pages, start=1):
        for paragraph in split_into_paragraphs(page_text):
            candidate = "\n\n".join([p for _, p in buffer] + [paragraph])
            # If adding this paragraph would exceed the target, emit the buffer
            if buffer and approx_tokens(candidate) > target_tokens:
                chunks.append(_emit(buffer))
                # Carry forward whole trailing paragraphs (up to `overlap` of the
                # target) so the overlap never starts mid-sentence
                budget = int(target_tokens * overlap)
                tail: list[tuple[int, str]] = []
                for item in reversed(buffer):
                    if approx_tokens("\n\n".join(p for _, p in [item, *tail])) > budget:
                        break
                    tail.insert(0, item)
                buffer = tail
            buffer.append((page_num, paragraph))

    if buffer:
        chunks.append(_emit(buffer))
    return chunks
```

Embed and index every chunk

At ingest time, embed each chunk once with a strong embedding model (Voyage-3 or OpenAI text-embedding-3-small) and store it in a vector index. Index by chunk_id; the embedding becomes the search key. Re-embedding only fires on content change (hash-keyed cache). For a 200-page document at ~500 chunks, embedding is a ~$0.06 one-time cost.

```python
# embed_and_index.py
import voyageai

vo = voyageai.Client()  # picks up VOYAGE_API_KEY from the environment

# Voyage caps both the number of texts and the total tokens per request;
# 64 is an assumed safe batch size for ~1200-token chunks (check current limits).
EMBED_BATCH = 64

# `Chunk` is the TypedDict from the chunking step. `vector_store` is a
# placeholder for a pgvector / Pinecone / FAISS wrapper (pseudo-API below).


def embed_and_index(chunks: list[Chunk], collection: str) -> int:
    """Embed every chunk; store in the vector DB keyed by chunk_id."""
    embeddings: list[list[float]] = []
    # Batched embedding: far fewer API calls than one call per chunk
    for i in range(0, len(chunks), EMBED_BATCH):
        texts = [c["text"] for c in chunks[i:i + EMBED_BATCH]]
        result = vo.embed(texts, model="voyage-3", input_type="document")
        embeddings.extend(result.embeddings)

    for chunk, embedding in zip(chunks, embeddings):
        vector_store.upsert(
            collection=collection,
            id=chunk["chunk_id"],
            vector=embedding,
            metadata={
                "page": chunk["page"],
                "text_full": chunk["text"],           # read back by retrieve_top_k
                "text_preview": chunk["text"][:200],  # used by the Haiku re-ranker
            },
        )
    return len(embeddings)


def smart_reindex(doc_id: str, new_chunks: list[Chunk]) -> None:
    """Re-embedding cache: chunk_id is a content hash, so unchanged chunks are skipped."""
    existing_ids = {c["id"] for c in vector_store.list_chunks(collection=doc_id)}
    new_ids = {c["chunk_id"] for c in new_chunks}
    to_delete = existing_ids - new_ids
    to_add = [c for c in new_chunks if c["chunk_id"] not in existing_ids]
    if to_delete:
        vector_store.delete_many(collection=doc_id, ids=list(to_delete))
    if to_add:
        embed_and_index(to_add, doc_id)
```

Retrieve top-K chunks; never the full document

When the agent asks a question (e.g., 'what's the indemnification clause?'), embed the question, retrieve the top-K=5 most-similar chunks, and pass ONLY those into context. The full document never enters the prompt. K=5 is the sweet spot. Bigger K dilutes context with marginally-relevant chunks; smaller K misses the right one. Re-rank top-20 with Claude Haiku if precision matters.

```python
import json

import anthropic

client = anthropic.Anthropic()  # picks up ANTHROPIC_API_KEY
# `vo` and `vector_store` come from the embed-and-index step.


def retrieve_top_k(question: str, doc_id: str, k: int = 5,
                   rerank: bool = True) -> list[dict]:
    """Top-K retrieval. The full document never enters context."""
    q_embed = vo.embed([question], model="voyage-3", input_type="query").embeddings[0]
    # Over-fetch 20 candidates so the optional re-rank has room to reorder
    candidates = vector_store.search(collection=doc_id, vector=q_embed, top_k=20)
    if rerank and len(candidates) > k:
        candidates = rerank_with_haiku(question, candidates, k)
    return [
        {
            "chunk_id": c["id"],
            "page": c["metadata"]["page"],
            "text": c["metadata"]["text_full"],  # rehydrate from chunk store
            "score": c["score"],
        }
        for c in candidates[:k]
    ]


def rerank_with_haiku(question: str, candidates: list[dict], k: int = 5) -> list[dict]:
    """Use Haiku to re-rank embedding candidates (cheap, focused)."""
    listing = "\n\n".join(
        f"[{i}] {c['metadata']['text_preview']}" for i, c in enumerate(candidates)
    )
    prompt = (
        f"Question: {question}\n\n{listing}\n\n"
        f"Return the indices of the {k} most relevant chunks as a JSON array "
        "of integers, most relevant first. JSON only."
    )
    resp = client.messages.create(
        model="claude-haiku-4-5-20251001",
        max_tokens=64,
        messages=[{"role": "user", "content": prompt}],
    )
    try:
        indices = json.loads(resp.content[0].text)
    except json.JSONDecodeError:
        return candidates[:k]  # fall back to embedding order
    # Ignore out-of-range or non-integer indices the model may return
    ranked = [candidates[i] for i in indices
              if isinstance(i, int) and 0 <= i < len(candidates)]
    return ranked[:k] or candidates[:k]
```

Pin CASE_FACTS at the prompt top. Never summarize

Every prompt iteration starts with a CASE_FACTS block: doc_id, extracted_count, last_clause_id, decisions already made. The block is rebuilt from durable state every turn. It survives summarization, model swaps, and session resets. Critically, EXACT VALUES ($247.83, not ~$250; cust_4711, not the customer) stay verbatim. The conversation history below it CAN be summarized; the case-facts cannot.

```python
def build_system_prompt(case_facts: dict, retrieved_chunks: list[dict]) -> str:
    """Pin CASE_FACTS at the top; retrieved chunks below; the conversation goes in `messages`."""
    chunks_text = "\n\n".join(
        f"### CHUNK {c['chunk_id']} (page {c['page']})\n{c['text']}"
        for c in retrieved_chunks
    )
    return f"""You are a long-document extraction agent.

CASE_FACTS (immutable; re-read every turn; values are EXACT, never paraphrased):
- doc_id: {case_facts['doc_id']}
- doc_type: {case_facts.get('doc_type', 'unknown')}
- extracted_count: {case_facts.get('extracted_count', 0)}
- last_clause_id: {case_facts.get('last_clause_id', 'none')}
- policy_cap: ${case_facts.get('policy_cap', 0):,.2f}

Constraints:
- Cite every extraction with chunk_id + page from the chunks below.
- Never paraphrase exact values from CASE_FACTS or chunk text.
- Don't try to finish in one turn; if output runs out (max_tokens), the harness saves state and resumes.

RETRIEVED CHUNKS (top-K; only these are in context):
{chunks_text}"""


def update_case_facts(case_facts: dict, new_extraction: dict) -> dict:
    """Hook-style update after an extract_clause call; existing values are copied verbatim."""
    return {
        **case_facts,
        "extracted_count": case_facts.get("extracted_count", 0) + 1,
        "last_clause_id": new_extraction["clause_id"],
        "last_extraction": new_extraction,  # read by the silent-source eval
    }
```

Checkpoint on max_tokens; resume in a fresh session

When stop_reason == 'max_tokens', the harness writes the current case-facts + last extracted record + chunk position to a durable store, then starts a fresh session and re-loads the checkpoint as its case-facts. Because case-facts are at the prompt top, the new session continues exactly where the old left off. No data loss; no manual intervention; no need for the agent to even know.

```python
import json
import os
from datetime import datetime, timezone

CHECKPOINT_DIR = ".checkpoints"
# `client`, `retrieve_top_k`, `build_system_prompt`, `update_case_facts` and
# `EXTRACT_CLAUSE_TOOL` are defined in the other steps.


def save_checkpoint(case_facts: dict, last_record: dict, position: dict) -> str:
    """Persist state. Idempotent on doc_id: one file per document, overwritten."""
    os.makedirs(CHECKPOINT_DIR, exist_ok=True)
    path = os.path.join(CHECKPOINT_DIR, f"{case_facts['doc_id']}.json")
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump({
            "case_facts": case_facts,
            "last_record": last_record,
            "position": position,  # e.g. {"chunk_ids": [...]} of the last retrieval
            "saved_at": datetime.now(timezone.utc).isoformat(),
        }, f)
    os.replace(tmp, path)  # atomic rename: never a half-written checkpoint
    return path


def load_checkpoint(doc_id: str) -> dict | None:
    path = os.path.join(CHECKPOINT_DIR, f"{doc_id}.json")
    if not os.path.exists(path):
        return None
    with open(path) as f:
        return json.load(f)


def extract_with_resume(doc_id: str, question: str, max_iter: int = 50) -> dict:
    """Top-level loop with automatic checkpoint-and-resume.

    Every iteration is a fresh session: the only state carried over is
    CASE_FACTS, rebuilt into the system prompt each time.
    """
    checkpoint = load_checkpoint(doc_id) or {}
    case_facts = checkpoint.get("case_facts") or {"doc_id": doc_id}
    last_record = checkpoint.get("last_record", {})

    for _ in range(max_iter):
        chunks = retrieve_top_k(question, doc_id, k=5)
        position = {"chunk_ids": [c["chunk_id"] for c in chunks]}
        resp = client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=4096,
            system=build_system_prompt(case_facts, chunks),
            tools=[EXTRACT_CLAUSE_TOOL],
            messages=[{"role": "user", "content": question}],
        )
        if resp.stop_reason == "end_turn":
            return {"status": "complete", "case_facts": case_facts}
        if resp.stop_reason == "max_tokens":
            # Save, then the next iteration starts a fresh session from the checkpoint
            save_checkpoint(case_facts, last_record, position)
            continue
        if resp.stop_reason == "tool_use":
            for block in resp.content:
                if block.type == "tool_use" and block.name == "extract_clause":
                    last_record = block.input
                    case_facts = update_case_facts(case_facts, last_record)
            # Checkpoint after every state change, not only on max_tokens
            save_checkpoint(case_facts, last_record, position)
    return {"status": "iteration_cap", "case_facts": case_facts}
```

Citations: chunk_id + page on every output

Every extraction tool emits a citations: [{ chunk_id, page }] array; the schema makes citations REQUIRED. The model can't extract a clause without pointing at the chunks that supported it. Downstream consumers (auditors, reviewers, regulators) click any extracted value and see the exact paragraph in the original. Audit-grade provenance, structurally enforced.

```python
EXTRACT_CLAUSE_TOOL = {
    "name": "extract_clause",
    "description": (
        "Extract a contractual clause from the retrieved chunks.\n"
        "Use this when the user asks for a specific clause type.\n"
        "Edge cases: if the clause type is not present in any retrieved chunk, "
        "emit clause_text='not_found' with empty citations.\n"
        "ALWAYS cite chunk_id and page for every extraction."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "clause_id": {"type": "string"},
            "clause_type": {
                "type": "string",
                "enum": ["indemnification", "termination", "payment", "ip", "other"],
            },
            "clause_text": {"type": "string"},
            "citations": {
                "type": "array",
                "items": {
                    "type": "object",
                    "properties": {
                        "chunk_id": {"type": "string"},
                        "page": {"type": "integer", "minimum": 1},
                        "span": {
                            "type": "string",
                            "description": "character offsets within the chunk, e.g. '128-340'",
                        },
                    },
                    "required": ["chunk_id", "page"],
                },
            },
        },
        "required": ["clause_id", "clause_type", "clause_text", "citations"],
        # A real clause needs at least one citation; the 'not_found' sentinel must
        # have none. An unconditional minItems: 1 would forbid the not_found case.
        "if": {"properties": {"clause_text": {"const": "not_found"}}},
        "then": {"properties": {"citations": {"maxItems": 0}}},
        "else": {"properties": {"citations": {"minItems": 1}}},
    },
}
# Don't rely on the API alone to enforce this schema; re-validate tool inputs in the harness.
```

Bulk extraction via Batch API (50% off, 24h)

When the use case is 'extract every payment clause from 1000 contracts overnight', the Batch API earns its 50% discount. Submit at 6 PM, results ready by 6 AM. No real-time retry inside the batch; failures get resubmitted in the next batch with their specific error in the next message. Combined with prompt caching on the system prompt + tool registry, input tokens that hit the cache cost up to ~95% less than naive sync calls (the 50% batch discount on top of ~90% off cache reads); output tokens get only the batch discount.
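The resubmission rule can be isolated as a small pure function. A sketch, assuming each original request is kept as the `{custom_id, params}` dict that was submitted and that the first user message's `content` is a plain string; `build_retry_request` and the `-r{attempt}` suffix are hypothetical conventions, chosen so attempts stay traceable in the next morning's results.

```python
import copy


def build_retry_request(original: dict, error_message: str, attempt: int) -> dict:
    """Clone a failed batch request and append its specific error to the prompt."""
    retry = copy.deepcopy(original)  # never mutate the record of what was sent
    # Traceable attempt id; custom_id length is limited, so keep base ids short
    retry["custom_id"] = f"{original['custom_id']}-r{attempt}"
    first_user = retry["params"]["messages"][0]
    first_user["content"] = (
        f"{first_user['content']}\n\n"
        f"A previous attempt at this request failed with: {error_message}\n"
        "Avoid that failure this time."
    )
    return retry
```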

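```python
# `client`, `retrieve_top_k`, `load_checkpoint`, `build_system_prompt` and
# `EXTRACT_CLAUSE_TOOL` come from the earlier steps.


def submit_bulk_extraction(docs: list[dict], clause_type: str) -> str:
    """Submit a batch of clause-extraction requests for overnight processing."""
    requests = []
    for doc in docs:
        chunks = retrieve_top_k(f"Find {clause_type} clauses", doc["id"], k=5)
        checkpoint = load_checkpoint(doc["id"]) or {}
        case_facts = checkpoint.get("case_facts") or {"doc_id": doc["id"]}
        requests.append({
            "custom_id": f"clause-{doc['id']}-{clause_type}",
            "params": {
                "model": "claude-sonnet-4-5",
                "max_tokens": 2048,
                # Cache breakpoints. The tool definition is identical across requests;
                # the system text (CASE_FACTS + chunks) differs per document, so it only
                # hits the cache when the same document is re-run. Prefixes shorter than
                # the model's minimum cacheable length are not cached at all.
                "system": [{
                    "type": "text",
                    "text": build_system_prompt(case_facts, chunks),
                    "cache_control": {"type": "ephemeral"},
                }],
                "tools": [{**EXTRACT_CLAUSE_TOOL, "cache_control": {"type": "ephemeral"}}],
                "tool_choice": {"type": "tool", "name": "extract_clause"},
                "messages": [{"role": "user", "content": f"Extract all {clause_type} clauses."}],
            },
        })
    batch = client.messages.batches.create(requests=requests)
    return batch.id


# Next morning: fetch and harvest
def harvest_bulk(batch_id: str) -> dict:
    batch = client.messages.batches.retrieve(batch_id)
    if batch.processing_status != "ended":
        raise RuntimeError(f"batch {batch_id} is still {batch.processing_status}")
    accepted, retry_queue = [], []
    for r in client.messages.batches.results(batch_id):
        if r.result.type == "succeeded":
            # "Extract all ..." can yield several tool_use blocks; keep every one
            accepted.extend(b.input for b in r.result.message.content if b.type == "tool_use")
        else:
            # errored / canceled / expired: resubmit in the next batch
            retry_queue.append({"custom_id": r.custom_id, "reason": r.result.type})
    return {"accepted": accepted, "retry": retry_queue}
```

Stratified accuracy + adversarial 'silent source' tests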

Aggregate accuracy hides per-document-type weakness. Stratify by doc_type (MSA vs DPA vs SOW), by clause_type, by page-section (front/middle/back). Surface the worst stratum. Pair with an adversarial test set of 50 documents where the requested clause is GENUINELY absent. The right behaviour is clause_text='not_found' with empty citations, NEVER an invented clause. Hallucinated extractions = audit-fail.

```python
from collections import defaultdict

# `validate_extraction` and `load_silent_source_docs` are project-specific;
# `extract_with_resume` comes from the checkpoint step.


def page_section(page: int) -> str:
    # Thresholds assume ~200-page documents; scale them for other lengths
    return "front" if page <= 30 else "back" if page > 150 else "middle"


def stratified_accuracy(extractions: list[dict]) -> dict:
    """Pass rate by doc_type × clause_type × page-section, worst stratum first."""
    buckets = defaultdict(lambda: {"pass": 0, "fail": 0})
    for e in extractions:
        cites = e.get("citations") or []
        # not_found extractions have no citations, hence no page to stratify by
        section = page_section(cites[0]["page"]) if cites else "none"
        key = (e["doc_type"], e["clause_type"], section)
        buckets[key]["pass" if validate_extraction(e) else "fail"] += 1

    report = {}
    for (doc_type, clause_type, section), counts in buckets.items():
        total = counts["pass"] + counts["fail"]
        report[f"{doc_type}/{clause_type}/{section}"] = {
            "total": total,
            "pass_rate": counts["pass"] / total if total else 0.0,
        }
    return dict(sorted(report.items(), key=lambda kv: kv[1]["pass_rate"]))


def adversarial_silent_source_test() -> float:
    """50 docs where the requested clause is GENUINELY absent."""
    docs = load_silent_source_docs()  # known to NOT contain the clause
    correct = 0
    for doc in docs:
        result = extract_with_resume(doc["id"], "Find the indemnification clause")
        last = result.get("case_facts", {}).get("last_extraction", {})
        # Right: clause_text='not_found' with empty citations
        # Wrong: ANY invented clause text
        if last.get("clause_text") == "not_found" and not last.get("citations"):
            correct += 1
    return correct / len(docs) if docs else 0.0  # target: ≥ 95%
```

The four decisions

| Decision | Right answer | Wrong answer | Why |

|---|---|---|---|

| 200-page document into the prompt | Chunk + index + retrieve top-K (K=5) | Stuff the whole document into one prompt | Stuffing hits max_tokens around page 120 and triggers lost-in-the-middle. Top-K retrieval keeps the prompt small and focused. The full document never enters context, so length is bounded by chunk count not document size. |

| Storing transactional values across many turns | CASE_FACTS block. Exact values, never paraphrased | Progressive summarization that paraphrases the conversation including facts | Summarization erodes precision. '$247.83' becomes '~$250'; 'cust_4711' becomes 'the customer'. CASE_FACTS keeps exact values verbatim; conversation history can be summarized, facts cannot. |

| Hit max_tokens mid-document | Save state + start fresh session + reload from checkpoint | Increase max_tokens or just retry from scratch | Larger windows defer the problem; checkpoint-and-resume permanently solves it. Restarting from scratch loses everything extracted so far. The architectural pattern scales to documents of any length. |

| Bulk overnight processing of 1000 documents | Batch API + cached system prompt + cached tool registry | Sync API in a tight loop | Batch API gives a flat 50% discount with a 24h completion window. Fine for non-blocking backfill. Cache reads cost ~10% of the base input price, so cached system + tools tokens end up ~95% cheaper than naive sync; output tokens get only the 50%. Sync API is for latency-critical extraction only. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

Try to paste a 150-page contract into a single prompt. Hits max_tokens at page 120; lost-in-the-middle drops the order ID established on page 1; agent makes contradictory recommendations on later pages.

AP-LDP-01 fix: Chunk + index + top-K retrieval. Only K=5 chunks ever enter context. The full document is searchable but never present. Length is bounded by retrieval, not document size.

Long conversation summarizes every 10 turns. Refund amount '$247.83' becomes '~$250' in the summary; customer ID 'cust_4711' becomes 'the customer'. Audit fails because exact values were paraphrased.

AP-LDP-02 fix: CASE_FACTS block at every prompt top. Never summarized. Holds exact values verbatim. Only the message history below is summarized; the case-facts persist verbatim across every iteration.

Long batch job processes 200 pages, hits max_tokens at turn 15. The whole pipeline aborts; everything extracted so far is lost; operator has to restart from page 1.

AP-LDP-03 fix: Checkpoint-and-resume: on stop_reason: max_tokens, persist state (case_facts + last extraction + chunk position) and start a fresh session that reloads the checkpoint as its case-facts. Idempotent on chunk_id.

Agent retrieves chunks and emits extracted clauses without saying which chunk supported each one. Auditor asks 'where does this come from?' and there's no answer; auditor flags the run as un-verifiable.

AP-LDP-04 fix: Citation tracker: every extraction emits citations: [{ chunk_id, page }] with minItems: 1 in the schema. The model can't extract a clause without pointing at the supporting chunks. Audit-grade provenance is structurally enforced.

Fixed-size chunks (every 1000 characters) split mid-sentence and mid-paragraph. A clause that spans a chunk boundary appears truncated in both adjacent chunks; retrieval misses it; extraction is wrong.

AP-LDP-05 fix: Semantic chunking with overlap. Split at section / paragraph / sentence boundaries (in that precedence). Add 10-20% overlap between adjacent chunks so a boundary-spanning clause stays whole in at least one chunk. Each chunk is meaning-complete.

Cost & latency

Ingest (one-time embedding): 500 chunks × ~1000 tokens = ~500K tokens; at $0.13/M tokens ≈ $0.06. One-time cost at ingest; never re-paid unless content changes.

Per interactive query: embedding query (~$0.0001) + vector lookup (~$0.0001) + 5 chunks × ~1000 tokens (cached) + ~500 output tokens. At a 70% cache hit rate, cache reads (~10% of the base input price) cut input-token cost by ~63% (0.7 × 90%); getting close to 80% needs a ~90% hit rate. Output tokens are not discounted.

Bulk overnight (Batch API): the 50% batch discount on top of prompt caching's ~90% off the system + tools = ~95% off naive sync for those cached input tokens. 1000 extractions @ $0.008 each = $8 total overnight.

Checkpoint storage: JSON dump of case_facts + last extraction + chunk position. Negligible per document; at 1000 docs in flight, <15MB total. Idempotent re-loads add no cost.

Interactive latency: embed query (50ms) + vector lookup (50ms) + Claude call (2-3s with cache hit). Acceptable for interactive review of contracts; bulk uses the Batch API.
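The discount arithmetic above is easy to get wrong, so here is a sketch that computes the effective input-token price as a fraction of the base sync price. The default multipliers (cache reads at 0.10×, 5-minute cache writes at 1.25×, batch at 0.5×) reflect Anthropic's published pricing at the time of writing; treat them as assumptions and check the current pricing page. `input_cost_multiplier` is a hypothetical helper, and output tokens are deliberately excluded because caching never discounts them.

```python
def input_cost_multiplier(cache_hit_rate: float, batch: bool = False,
                          read_mult: float = 0.10, write_mult: float = 1.25) -> float:
    """Effective input-token price as a fraction of the base sync price.

    Assumes every cache hit is billed as a cache read (read_mult) and every
    miss as a cache write (write_mult); the batch discount multiplies on top.
    """
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be in [0, 1]")
    mult = cache_hit_rate * read_mult + (1 - cache_hit_rate) * write_mult
    return mult * (0.5 if batch else 1.0)
```

With a 100% hit rate inside a batch the multiplier is 0.05 (the ~95% figure); at a 70% hit rate with every miss paying the write surcharge, sync input costs about 0.445× base.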

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Semantic chunking (paragraph + section boundaries) with 10-20% overlap

- Each chunk has a deterministic chunk_id and a page number

- Embeddings indexed once at ingest; re-embedding only on content change

- Top-K retrieval (K=5 typical); full document never enters context

- CASE_FACTS block pinned at every prompt top. Exact values, never paraphrased

- Checkpoint-and-resume on max_tokens; idempotent on chunk_id

- Citations REQUIRED in every extraction tool's schema (minItems: 1)

- System prompt + tool registry cached with cache_control: ephemeral

- Batch API for bulk overnight runs (≥ 100 docs)

- Stratified accuracy: doc_type × clause_type × page-section

- Adversarial 'silent source' test (≥ 50 docs known to lack the clause); target ≥ 95% not_found rate

Run-time

- Semantic chunker tested on 5 representative document types (e.g. contracts, papers, manuals)

- Embedding cache by chunk content hash; rebuild only on change

- CASE_FACTS schema versioned; migration plan documented

- Checkpoint write is atomic; idempotency tested with deliberate restarts

- Citations schema enforced (minItems: 1); CI lint catches schemas missing the constraint

- Batch-API job retries failures in the next batch, with the specific error in the next message

- Stratified accuracy dashboard updated daily; alert on any stratum < 90%

- Adversarial 'silent source' eval runs weekly; ≥ 95% not_found rate required
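The "CI lint" gate in the run-time list can be a plain function run by your test suite over the tool registry. A sketch, assuming tools are the dicts passed to the Messages API; `lint_citation_schemas` is a hypothetical name. It also accepts a `minItems` placed in a JSON Schema `else` branch, for schemas that exempt a not-found sentinel from the citation requirement.

```python
def _citations_min_items(schema: dict) -> int:
    """minItems on citations, either unconditional or in the schema's `else` branch."""
    direct = schema.get("properties", {}).get("citations", {}).get("minItems", 0)
    branch = (schema.get("else", {}).get("properties", {})
              .get("citations", {}).get("minItems", 0))
    return max(direct, branch)


def lint_citation_schemas(tools: list[dict]) -> list[str]:
    """CI gate: every tool that emits citations must require at least one."""
    errors = []
    for tool in tools:
        schema = tool.get("input_schema", {})
        cites = schema.get("properties", {}).get("citations")
        if cites is None:
            continue  # tool does not emit citations
        name = tool.get("name", "<unnamed>")
        if cites.get("type") != "array":
            errors.append(f"{name}: citations must be an array")
        if _citations_min_items(schema) < 1:
            errors.append(f"{name}: citations needs minItems >= 1")
        if "citations" not in schema.get("required", []):
            errors.append(f"{name}: citations must be in required")
    return errors
```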

Five exam-pattern questions

150-page contract; the agent processes it in one prompt and dies at page 120 with `max_tokens`. Recovery without losing everything?

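Checkpoint-and-resume. On stop_reason: max_tokens, persist {case_facts, last_extraction, chunk_position} to durable storage; start a FRESH session whose system prompt loads the checkpoint as its CASE_FACTS; continue from chunk_position. The new session has clean context but inherits exactly the state of the old one. Idempotent on chunk_id: re-running a chunk just produces the same extraction. Tagged to AP-LDP-03.

RAG: should you retrieve all chunks similar to the query, or top-K ranked?

Top-K ranked (K = 5 typical). Bigger K dilutes context with marginally-relevant chunks; smaller K misses the right one. Only those K chunks enter context; the full document never does.

Chunking strategy: fixed-size or semantic?

Semantic, with 10-20% overlap. Split at section / paragraph / sentence boundaries (in that precedence) so no sentence is cut in half and a boundary-spanning clause stays whole in at least one chunk. Tagged to AP-LDP-05.

How do you preserve citations through long-document extraction?

Make citations REQUIRED in the extraction tool's schema (minItems: 1). Every extracted clause emits citations: [{ chunk_id, page, span? }]. The model cannot return a schema-valid clause without pointing at the supporting chunks. Downstream auditors click any extracted value and see the exact paragraph in the original document. Audit-grade provenance, structurally enforced. The model has no way to forget it. Tagged to AP-LDP-04.

Long conversation summarized at every 10 turns. Customer ID 'cust_4711' becomes 'the customer'; refund amount '$247.83' becomes '~$250'. The audit fails. What's the architectural fix?

A CASE_FACTS block pinned at the top of every prompt. It holds exact values verbatim and is never summarized; only the conversation history below it is. Tagged to AP-LDP-02.

Frequently asked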

What's the optimal chunk size?

Should I retrieve all matching chunks or top-K?

How do I prevent lost-in-the-middle?

Does prompt caching help with RAG?

Where do I store the checkpoint?

Any durable store (Convex DB, S3, local JSONL), keyed by doc_id. The checkpoint write must be atomic; partial writes confuse the resume path. Retain checkpoints until the document is fully processed; delete on completion or after 30 days, whichever comes first.

Can I combine Batch API with checkpoint-and-resume?

Yes. When a batch result stops on max_tokens, the harvest step writes a checkpoint and includes that document in the NEXT batch with the checkpoint as case-facts. Two batches usually finish a long document; rare cases need three.

How do I handle a 500-page doc that exceeds even the chunked + paged max_tokens cap?

Checkpoint-and-resume handles repeated max_tokens automatically; at 500 pages you'll see ~5-8 checkpoint events across multiple sessions. Each session is bounded; the document length isn't.