The problem

What the customer needs

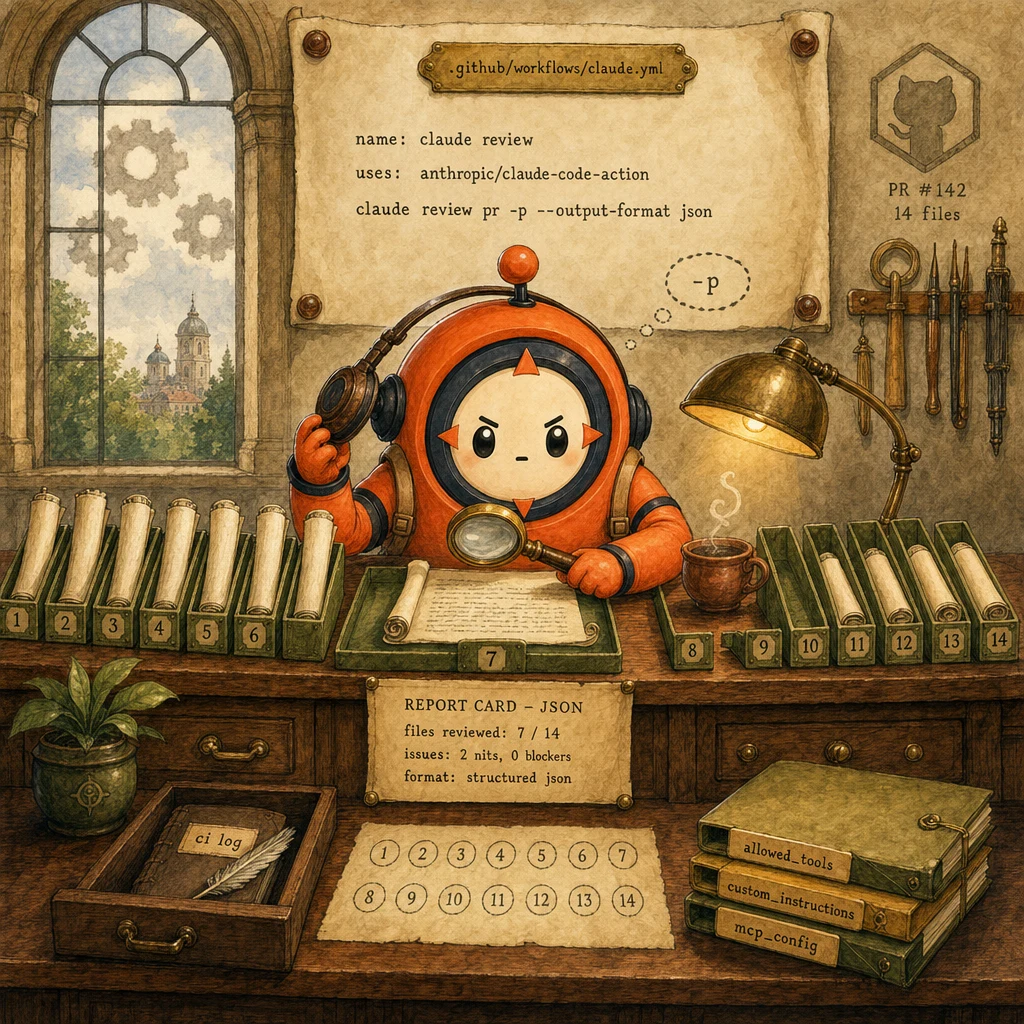

- PR review on every pull request without a human running the CLI manually: Claude Code triggered by GitHub Actions on pull_request.

- Per-file feedback that doesn't lose track of conventions established earlier in the diff. File 14 must agree with file 3 on style and tests.

- Structured output the workflow can parse into PR review comments, not free-form prose with brittle regex.

Why naive approaches fail

- Single session for all 14 files → context pollutes → file 14 contradicts file 3 (lost-in-the-middle).

- Forgetting -p → workflow hangs waiting for interactive input → CI timeout after 6 hours, no review posted.

- Free-form text output → next step uses regex to extract issues → brittle, breaks on every Claude wording change.

- Per-file PR review fires on every pull_request event

- Each file reviewed in its own claude -p session (isolated context)

- Output is JSON (--output-format json); workflow parses with jq, posts comments via gh CLI

- allowed_tools is an explicit list in YAML (no * wildcard)

- Project context flows in via custom_instructions reading .github/claude-context.md

The system

What each part does

Five components, each owning one concept.

GitHub Actions Workflow

.github/workflows/claude.yml

Triggers on pull_request events. Authenticates with the Claude Code GitHub App. Loops over the changed files (via gh pr diff --name-only) and dispatches a per-file claude -p invocation. Owns retries, concurrency, and the eventual gh pr review post.

Configuration

on: pull_request. Steps: actions/checkout → install Claude Code CLI → for each changed file, run claude review pr -p --output-format json --custom-instructions .github/claude-context.md. Concurrency: max 4 parallel files; the rest queue.

claude -p Headless Invocation

non-interactive, one-shot per file

Runs Claude Code in headless mode. The -p flag disables the interactive REPL; the agent processes a single task, emits output to stdout, and exits. No human at a keyboard, no waiting on prompts. This is the CI primitive. Without -p, the workflow hangs.

Configuration

claude review pr -p --output-format json --custom-instructions ".github/claude-context.md" --files "src/auth/login.ts" --max-turns 6
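A headless call can still stall, so a CI wrapper usually adds a hard timeout rather than relying on the runner's 6-hour limit. The following is a minimal sketch, not part of the original workflow: `run_headless` is a hypothetical helper, and the stand-in command at the bottom replaces the real claude -p command line so the example runs anywhere.

```python
import subprocess
import sys


def run_headless(argv, timeout_s=600):
    """Run a one-shot command with no stdin and a hard timeout.

    Returns (exit_code, stdout). A timeout is reported as exit code 124
    with empty output, so the caller can fail fast instead of hanging.
    """
    try:
        proc = subprocess.run(
            argv,
            stdin=subprocess.DEVNULL,  # nothing to wait on, even if the tool tries to prompt
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return 124, ""
    return proc.returncode, proc.stdout


if __name__ == "__main__":
    # Stand-in command; in CI, argv would be the claude -p command line.
    code, out = run_headless([sys.executable, "-c", "print('ok')"], timeout_s=30)
    print(code, out.strip())
```

Closing stdin and bounding the run time turns a silent hang into an ordinary failed step that the workflow can report.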

Per-File Session Isolation

one claude -p invocation per changed file

Each changed file gets its OWN headless session. No shared context across files. This is the single biggest architectural decision: 14 isolated sessions × small context > 1 session × 14 files of accumulated context. The latter triggers lost-in-the-middle by file 8-10; the former does not.

Configuration

Loop in workflow YAML: for f in $(gh pr diff --name-only); do claude review pr -p --files "$f" > "review-$(echo "$f" | tr / _).json"; done. The tr call flattens slashes so each path maps to a single output filename. Each file's review is independent; the parent workflow aggregates JSON.
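The isolation pattern itself does not depend on the shell. As a rough sketch, the loop can be written so that each file is handed to a fresh call and nothing is carried between iterations. `review_each_file` and the `review_one` callable are hypothetical names; in CI, `review_one` would wrap a single claude -p invocation.

```python
import json
from pathlib import Path


def review_each_file(files, review_one, out_dir):
    """Run review_one(path) once per file and write one JSON file per result.

    review_one is any callable returning a dict. No state is shared
    between calls, which is the whole point of per-file isolation.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    results = []
    for path in files:
        try:
            result = review_one(path)
        except Exception:
            # Fallback record so one failed review does not break aggregation
            result = {"file": path, "verdict": "comment",
                      "issues": [], "summary": "review failed"}
        # Slashes in the path would otherwise be read as directories
        out_file = out_dir / (path.replace("/", "_") + ".json")
        out_file.write_text(json.dumps(result))
        results.append(result)
    return results
```

Returning the list as well as writing the files lets the caller aggregate in memory or from disk, whichever the next step prefers.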

Structured JSON Output Contract

--output-format json + jq parsing

Claude emits a structured object per file: { file, verdict (approve | request_changes | comment), issues: [{ line, severity, message, suggestion? }], summary }. The workflow's next step parses with jq and posts inline comments through the pull request review-comments REST endpoint (gh api); gh pr review only posts the summary verdict and has no per-line option. No regex parsing of free-form prose.

Configuration

Schema (per file): { file: string, verdict: 'approve'|'request_changes'|'comment', issues: [{line: int, severity: 'blocker'|'nit'|'praise', message: string, suggestion?: string}], summary: string }
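Because the next step trusts these fields, it helps to check each object against the schema before anything is posted. The sketch below is one possible validator using only the standard library; `validate_review` is a hypothetical name, and the rules are taken directly from the schema line above.

```python
VERDICTS = {"approve", "request_changes", "comment"}
SEVERITIES = {"blocker", "nit", "praise"}


def validate_review(obj):
    """Return a list of schema violations; an empty list means obj conforms."""
    if not isinstance(obj, dict):
        return ["review must be a JSON object"]
    errors = []
    if not isinstance(obj.get("file"), str):
        errors.append("file must be a string")
    verdict = obj.get("verdict")
    if not (isinstance(verdict, str) and verdict in VERDICTS):
        errors.append("verdict must be approve, request_changes or comment")
    if not isinstance(obj.get("summary"), str):
        errors.append("summary must be a string")
    issues = obj.get("issues")
    if not isinstance(issues, list):
        return errors + ["issues must be a list"]
    for i, issue in enumerate(issues):
        if not isinstance(issue, dict):
            errors.append(f"issues[{i}] must be an object")
            continue
        line = issue.get("line")
        # bool is a subclass of int in Python, so exclude it explicitly
        if not isinstance(line, int) or isinstance(line, bool):
            errors.append(f"issues[{i}].line must be an integer")
        severity = issue.get("severity")
        if not (isinstance(severity, str) and severity in SEVERITIES):
            errors.append(f"issues[{i}].severity must be blocker, nit or praise")
        if not isinstance(issue.get("message"), str):
            errors.append(f"issues[{i}].message must be a string")
        # suggestion is optional, but must be a string when present
        if "suggestion" in issue and not isinstance(issue["suggestion"], str):
            errors.append(f"issues[{i}].suggestion must be a string")
    return errors
```

Collecting every violation instead of stopping at the first makes the CI log more useful when a review object is malformed.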

PR Comment Poster + Allowed-Tools Gate

gh pr review + explicit tool whitelist

Final workflow step reads the aggregated JSON, posts one inline comment per issue through the pull request review-comments REST endpoint (gh api), and runs gh pr review --request-changes --body "$SUMMARY" for the verdict. Critical: the claude -p invocation declares --allowed-tools with Read, Grep, Glob, and a narrowly scoped Bash (no wildcard, no Edit, no Write, no open-ended Bash). CI agents never need write access to the repo; they read and report.

Configuration

--allowed-tools "Read,Grep,Glob,Bash(git diff,git log,gh pr diff)". Wildcards in CI are a red flag. They expand the blast radius of any prompt-injection in PR content.
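The rule is mechanical enough to lint. The following sketch checks a tool list in the comma-separated form used above and flags wildcards, write tools, and unscoped Bash. `split_tools` and `audit_allowed_tools` are hypothetical helpers, and the list syntax is assumed to be exactly what the text shows.

```python
FORBIDDEN = {"Edit", "Write"}


def split_tools(spec):
    """Split on commas that are not inside parentheses."""
    parts, depth, cur = [], 0, ""
    for ch in spec:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
        if ch == "," and depth == 0:
            parts.append(cur.strip())
            cur = ""
        else:
            cur += ch
    if cur.strip():
        parts.append(cur.strip())
    return parts


def audit_allowed_tools(spec):
    """Return a list of problems; an empty list means the spec passes."""
    problems = []
    for tool in split_tools(spec):
        if "*" in tool:
            problems.append(f"wildcard: {tool}")
        elif tool in FORBIDDEN:
            problems.append(f"write tool: {tool}")
        elif tool == "Bash":
            problems.append("unscoped Bash")
    return problems
```

Running a check like this over the workflow and script files in a separate CI step keeps the gate from eroding as the tool list is edited.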

Data flow

Eight steps to production

Scaffold the GitHub Actions workflow

Create .github/workflows/claude.yml. Trigger on pull_request events. Install the Claude Code GitHub App via /install-github-app (one-time, generates the OAuth token stored as secrets.CLAUDE_CODE_OAUTH_TOKEN). Checkout the PR head ref, install the CLI, then dispatch the per-file review loop.

    # .github/workflows/claude.yml
    name: Claude PR review
    on:
      pull_request:
        types: [opened, synchronize]
    jobs:
      review:
        runs-on: ubuntu-latest
        permissions:
          contents: read
          pull-requests: write
        steps:
          - uses: actions/checkout@v4
            with:
              ref: ${{ github.event.pull_request.head.sha }}
              fetch-depth: 2
          - name: Install Claude Code CLI
            run: npm i -g @anthropic-ai/claude-code
          - name: Per-file review
            env:
              CLAUDE_CODE_OAUTH_TOKEN: ${{ secrets.CLAUDE_CODE_OAUTH_TOKEN }}
              GH_TOKEN: ${{ github.token }}
            run: ./.github/scripts/per-file-review.sh

Run claude -p with --output-format json per file

Loop over the changed files (gh pr diff --name-only). For each one, run claude review in headless mode (-p), with --output-format json, --allowed-tools explicit, and --custom-instructions pointing at a markdown file that holds the project's CLAUDE.md context. Capture each file's JSON output to disk; aggregate at the end.

    #!/usr/bin/env bash
    # .github/scripts/per-file-review.sh
    set -euo pipefail

    REVIEW_DIR=$(mktemp -d)
    echo "files=$REVIEW_DIR" >> "$GITHUB_OUTPUT"

    # Per-file independent sessions, NOT one big session.
    # Read names line by line so paths containing spaces survive.
    gh pr diff --name-only | while IFS= read -r f; do
      echo "::group::Reviewing $f"
      out="$REVIEW_DIR/$(echo "$f" | tr '/' '_').json"
      # stdin comes from /dev/null so the CLI cannot swallow the file list
      claude review pr \
        -p \
        --output-format json \
        --custom-instructions ".github/claude-context.md" \
        --allowed-tools "Read,Grep,Glob,Bash(git diff,git log,gh pr diff)" \
        --files "$f" \
        --max-turns 6 \
        < /dev/null > "$out" \
        || jq -n --arg file "$f" \
             '{file: $file, verdict: "comment", issues: [], summary: "review failed"}' > "$out"
      echo "::endgroup::"
    done

    # Aggregate to one file the next step parses
    jq -s '.' "$REVIEW_DIR"/*.json > review-aggregate.json

Inject project context via custom_instructions

Don't pass the entire project CLAUDE.md to claude -p. Too much. Instead, create .github/claude-context.md (committed to the repo) with the CI-relevant slice: stack, code style, what counts as a blocker vs a nit, what to skip (generated files, lockfiles). The --custom-instructions flag injects this into every per-file session.

    # .github/claude-context.md (committed; loaded by --custom-instructions)
    # Project review rubric
    # Stack: Next.js 15 + TypeScript strict + Drizzle. We use Server Actions
    # instead of API routes; named exports only; 2-space indent.
    #
    # Severity rubric:
    #   blocker = type unsafe, secret leaked, missing test for changed code,
    #             breaks API contract, server-action without zod validation
    #   nit     = naming inconsistency, missing JSDoc, slightly verbose
    #   praise  = clever simplification, good test, well-named refactor
    #
    # Skip (do NOT review):
    #   - pnpm-lock.yaml
    #   - public/scenarios/*.svg (generated)
    #   - any file under .next/, dist/, build/, node_modules/
    #
    # Output format reminder:
    #   Always emit JSON: { file, verdict, issues[], summary }.
    #   verdict ∈ {approve, request_changes, comment}.
    #   issues[].severity ∈ {blocker, nit, praise}.

Lock allowed_tools. No wildcards in CI

In CI, the agent processes content from PR authors, including authors outside your org. That content is untrusted. A wildcard --allowed-tools '*' lets a prompt-injection in a PR body or commit message escalate to write access on your repo. Always declare an explicit list: Read, Grep, Glob, and a narrow Bash whitelist. Never Edit, Write, or open-ended Bash.

    # WRONG. Open invitation to prompt injection
    # claude review pr -p --allowed-tools "*"
    #
    # WRONG. Bash with no whitelist
    # claude review pr -p --allowed-tools "Read,Bash"
    #
    # RIGHT. Every tool explicit, Bash narrowly scoped to read-only commands
    ALLOWED='Read,Grep,Glob,Bash(git diff,git log --oneline -20,gh pr diff,gh pr view)'
    claude review pr -p \
      --allowed-tools "$ALLOWED" \
      --files "$f" \
      --max-turns 6 \
      --output-format json
    #
    # Periodically audit: grep workflows for any "*" or unscoped Bash
    # rg -n 'allowed-tools.*"[^"]*\*' .github/workflows/

Parse JSON and post inline PR comments

Aggregate the per-file JSON outputs, then transform them into GitHub calls: one inline comment per issue (file + line + body) and one summary review at the end (approve / request_changes / comment based on whether any blockers fired). gh pr review posts the summary; it has no per-line option, so inline comments go through the pull request review-comments REST endpoint, called with gh api. Either way, you just feed it structured input.
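The transformation is small enough to state precisely. As an illustrative sketch (function names are hypothetical), each issue must be paired with the file it came from, and the summary verdict depends only on whether any blocker exists:

```python
def inline_comments(aggregate):
    """Flatten per-file reviews into one {file, line, body} dict per issue."""
    return [
        {"file": review["file"], "line": issue["line"], "body": issue["message"]}
        for review in aggregate
        for issue in review["issues"]
    ]


def summary_verdict(aggregate):
    """Return request_changes if any issue is a blocker, otherwise approve."""
    has_blocker = any(
        issue["severity"] == "blocker"
        for review in aggregate
        for issue in review["issues"]
    )
    return "request_changes" if has_blocker else "approve"
```

Keeping the file name attached while iterating over that file's own issues is the step that is easy to get wrong: iterating files and issues independently produces a cross product, with every issue posted against every file.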

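    #!/usr/bin/env bash
    # .github/scripts/post-comments.sh
    set -euo pipefail

    # Aggregate file from previous step
    agg=review-aggregate.json

    # PR number and head commit come from the event payload; the inline-comment endpoint needs both
    pr=$(jq -r .pull_request.number "$GITHUB_EVENT_PATH")
    sha=$(jq -r .pull_request.head.sha "$GITHUB_EVENT_PATH")

    # Per-issue inline comments: pair every issue with its own file
    jq -c '.[] | .file as $f | .issues[] | {file: $f, line: .line, body: .message}' "$agg" \
      | while IFS= read -r row; do
          file=$(jq -r .file <<<"$row")
          line=$(jq -r .line <<<"$row")
          body=$(jq -r .body <<<"$row")
          # gh pr review has no per-line option, so call the REST endpoint directly
          gh api "repos/{owner}/{repo}/pulls/$pr/comments" \
            -f body="$body" -f commit_id="$sha" -f path="$file" \
            -F line="$line" -f side=RIGHT > /dev/null \
            || echo "::warning::could not comment on $file:$line"
        done

    # Summary verdict
    blockers=$(jq '[.[] | .issues[] | select(.severity=="blocker")] | length' "$agg")
    if [ "$blockers" -gt 0 ]; then
      gh pr review "$pr" --request-changes --body "$blockers blocker(s) found. See inline comments."
    else
      gh pr review "$pr" --approve --body "LGTM. Inline nits/praise where applicable."
    fi

Cap concurrency and add a per-PR cost budget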

Per-file fan-out parallelizes naturally, but uncapped parallelism can exhaust the GitHub Actions concurrent-job limit and stack up token spend. Cap at ~4 parallel files. Add a per-PR token budget (env var checked before the fan-out starts) that skips the review if the PR would exceed the cap. Cost predictability beats marginal latency wins.
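The two controls are independent: a budget check that runs once up front, and a worker pool that bounds how many reviews are in flight. A minimal sketch follows, assuming the 200,000-token budget and 5,000-token per-file floor used in this step; `within_budget`, `review_in_parallel`, and `review_one` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor


def within_budget(n_files, token_budget=200_000, min_per_file=5_000):
    """True if an even split of the budget leaves enough tokens per file."""
    if n_files == 0:
        return True
    return token_budget // n_files >= min_per_file


def review_in_parallel(files, review_one, max_workers=4):
    """Run review_one over files with at most max_workers calls in flight.

    pool.map preserves input order, so results line up with files.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(review_one, files))
```

Threads are sufficient here because each worker spends its time waiting on an external process or network call rather than computing.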

    # In per-file-review.sh: per-PR token budget guard (runs before the fan-out)
    TOKEN_BUDGET=${CLAUDE_PR_TOKEN_BUDGET:-200000}
    n_files=$(gh pr diff --name-only | wc -l)
    # Tokens available per file if the budget is split evenly
    per_file=$(awk -v b="$TOKEN_BUDGET" -v n="$n_files" 'BEGIN { print (n > 0) ? int(b / n) : b }')
    if [ "$per_file" -lt 5000 ]; then
      echo "::warning::PR has too many files for budget $TOKEN_BUDGET; consider splitting"
      exit 0
    fi

    # Bounded concurrency via xargs -P
    export REVIEW_DIR   # the bash -c children need it in their environment
    gh pr diff --name-only \
      | xargs -P 4 -I {} bash -c '
          file="$1"
          out="$REVIEW_DIR/$(echo "$file" | tr / _).json"
          claude review pr -p --output-format json --files "$file" \
            --custom-instructions ".github/claude-context.md" \
            --allowed-tools "Read,Grep,Glob,Bash(git diff,git log,gh pr diff)" \
            --max-turns 6 > "$out"
        ' _ {}

Use Batch API for nightly audits, sync API for blocking review

PR review is blocking: the developer is waiting, so the sync API is the right call. But you also want a nightly audit pass (drift detection, security regression scan) that doesn't need to finish in minutes. That's where the Batch API earns its 50% discount: submit overnight, results within 24 hours, review the next morning. Two different APIs for two different latency budgets.

    # Sync API. Pre-merge blocking review (latency matters)
    # (this is what the per-file-review.sh script above uses)

    # Batch API. Overnight audit job (latency doesn't matter, cost does)
    import hashlib

    import anthropic

    client = anthropic.Anthropic()

    # Build a batch from yesterday's merged PRs.
    # get_merged_prs() is a placeholder for your own helper; it is not part of the SDK.
    requests = []
    for pr in get_merged_prs(since="24h"):
        for f in pr.changed_files:
            # custom_id allows only letters, digits, "_" and "-" (max 64 chars),
            # so hash the path instead of embedding it
            path_id = hashlib.sha1(f.path.encode()).hexdigest()[:12]
            requests.append({
                "custom_id": f"audit-{pr.number}-{path_id}",
                "params": {
                    "model": "claude-sonnet-4-5",
                    "max_tokens": 1024,
                    "messages": [{"role": "user", "content": f.diff}],
                },
            })

    batch = client.messages.batches.create(requests=requests)
    print(f"Submitted batch {batch.id}; results arrive within 24h")
    # Tomorrow morning, fetch results, write to a Slack #audit-drift channel.

Add a CI cost-guard hook + alerting

Guard the claude -p session with a PreToolUse hook that denies tool calls when the PR is over a token-budget threshold (e.g. >200K tokens of diff). This protects you from a runaway 10-million-line PR exhausting your monthly Claude budget in one CI run. Pair it with a workflow alert to a Slack channel when the hook fires.

    # .claude/hooks/ci_cost_guard.py
    import json
    import subprocess
    import sys

    MAX_TOKENS_PER_PR = 200_000
    # A PreToolUse payload names the tool about to run; guard the tools
    # the review session is allowed to use.
    GUARDED_TOOLS = {"Read", "Grep", "Glob", "Bash"}

    def main():
        payload = json.loads(sys.stdin.read())
        if payload.get("tool_name") not in GUARDED_TOOLS:
            sys.exit(0)
        diff = subprocess.check_output(["gh", "pr", "diff"], text=True)
        estimated = len(diff) // 4  # ~4 chars per token rule of thumb
        if estimated > MAX_TOKENS_PER_PR:
            print(
                f"PR exceeds token budget: ~{estimated} tokens > "
                f"{MAX_TOKENS_PER_PR}. Split the PR or raise the limit "
                f"deliberately.",
                file=sys.stderr,
            )
            sys.exit(2)  # DENY
        sys.exit(0)

    if __name__ == "__main__":
        main()

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Reviewing a 14-file PR | Per-file independent claude -p sessions, aggregated at the end | One claude -p session reviewing all 14 files together | Single-session review hits lost-in-the-middle by file 8-10; conventions established on file 3 drop out of attention by file 14. Per-file isolation eliminates that failure mode entirely. |
| Output format from claude -p in CI | --output-format json (parsed with jq → gh pr review) | Free-form prose, parsed downstream with regex | Regex over prose is brittle. Every Claude wording change breaks the pipeline. A JSON contract survives wording changes; validate each output against the schema before posting. |
| Tool access in CI | Explicit --allowed-tools list (Read, Grep, Glob, narrow Bash) | Wildcard --allowed-tools '*' or unscoped Bash | PR content is untrusted (external contributors, prompt injection vectors). Wildcards expand the blast radius; explicit whitelists cap it. CI agents never need write access. They read and report. |
| Pre-merge review vs nightly audit | Sync API for blocking pre-merge; Batch API for non-blocking overnight | Use one API for both | Pre-merge review is latency-critical (developer waiting); sync API is right. Nightly audit is cost-sensitive but not latency-bound; Batch API at 50% discount earns its keep. Two budgets, two APIs. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

Workflow runs ONE claude -p over all 14 changed files. By file 14, lost-in-the-middle has dropped the conventions established on file 3. Inline comments on file 14 contradict the comments on file 3.

Per-file independent sessions. Loop the 14 files, run one claude -p invocation per file, aggregate the JSON outputs at the end. Each file gets a fresh, focused context.

Workflow invokes claude review without -p. The CLI starts in interactive mode, waits for input, and the GitHub Actions runner times out after 6 hours with no review posted.

AP-16: Always pass -p for non-interactive headless execution. CI hangs are silent failures; the -p flag is what makes Claude Code CI-safe in the first place.

Workflow asks Claude to 'review the file and post inline comments'. Output is free-form prose. Next step uses regex to extract issues. Every Claude phrasing change breaks the regex; the workflow silently posts nothing.

AP-17: Always pass --output-format json. The output is a structured contract: { file, verdict, issues[], summary }. Workflow parses with jq, posts inline comments through the review-comments REST endpoint (gh api), and posts the verdict via gh pr review.

Workflow uses --allowed-tools '*' for convenience. A prompt-injection in a PR description tricks the agent into running Bash(rm -rf .) or writing a malicious file. Repo state corrupted; PR reviewer can't tell what happened.

Always declare an explicit allowed-tools list: Read,Grep,Glob,Bash(git diff,git log,gh pr diff). CI agents never need write tools. Wildcards expand the prompt-injection blast radius; explicit lists cap it.

claude -p runs without --custom-instructions. Claude doesn't know the project's stack, code style, or what counts as a blocker. Reviews are generic; flags style-correct code as 'should use named exports' in a default-exports codebase.

Commit .github/claude-context.md with the CI-relevant slice of CLAUDE.md (stack, severity rubric, files to skip). Pass it via --custom-instructions .github/claude-context.md on every claude -p invocation.

Cost & latency

8 files × ~10K input tokens (file diff + context-md) + ~2K output (JSON verdict) ≈ $0.04-0.10 + parallel overhead. Cap at ~$0.15 with the cost-guard hook to bound runaway 50-file PRs.

50 PRs × 8 files × ~5K tokens (audit-only, lighter prompt) at Batch API 50% discount. Result ready next morning; no developer waiting.

.github/claude-context.md is stable across all per-file invocations within a single PR. Mark it cache_control: ephemeral; 5-min TTL keeps it warm across files in one workflow run.
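For a review call made directly against the Messages API, the cache marker sits on the system block that holds the shared rubric. The sketch below only builds the request parameters; `build_review_request` is a hypothetical helper, the model ID is supplied by the caller, and the `max_tokens` value is an arbitrary placeholder.

```python
def build_review_request(context_md, file_diff, model):
    """Build Messages API params with the shared rubric marked cacheable."""
    return {
        "model": model,
        "max_tokens": 2048,  # placeholder; size it to the expected JSON verdict
        "system": [
            {
                "type": "text",
                "text": context_md,  # identical across files, so it can be cached
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The per-file diff varies, so it stays outside the cached prefix
        "messages": [{"role": "user", "content": file_diff}],
    }
```

The resulting dict can be passed as `client.messages.create(**params)`. Note that very short prompts fall below the minimum cacheable length and are processed without caching, so a compact rubric may see little benefit.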

8 files at ~8-15s each, 4 in parallel = 2 waves ≈ 16-30s, plus JSON aggregation and posting the review comments. Fast enough that the developer doesn't context-switch away while waiting.

A 200-file PR (rare but real, e.g. lockfile bumps) without the hook would burn ~$3-6 in one workflow run. The hook denies before the loop starts; cost reverts to $0.
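The arithmetic behind these estimates can be made explicit. In the sketch below, `estimate_pr` is a hypothetical helper and the per-million-token rates are parameters the caller must supply from current pricing. The values in the usage line are placeholders, not real prices.

```python
import math


def estimate_pr(n_files, in_tokens_per_file, out_tokens_per_file,
                usd_per_mtok_in, usd_per_mtok_out,
                parallel=4, seconds_per_file=(8, 15)):
    """Estimate cost and wall-clock latency for one per-file PR review."""
    total_in = n_files * in_tokens_per_file
    total_out = n_files * out_tokens_per_file
    cost = (total_in * usd_per_mtok_in + total_out * usd_per_mtok_out) / 1_000_000
    # Files run in waves of `parallel`; each wave takes one per-file duration
    waves = math.ceil(n_files / parallel)
    low, high = seconds_per_file
    return {
        "cost_usd": round(cost, 4),
        "waves": waves,
        "latency_s": (waves * low, waves * high),
    }


if __name__ == "__main__":
    # Placeholder rates of 1.0 and 5.0 USD per million tokens
    print(estimate_pr(8, 10_000, 2_000, 1.0, 5.0))
```

Cost scales linearly with file count while latency scales with the number of waves, which is why the concurrency cap affects wait time but not spend.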

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- .github/workflows/claude.yml exists; triggers on pull_request opened+synchronize

- Claude Code GitHub App installed; CLAUDE_CODE_OAUTH_TOKEN in repo secrets

- Workflow runs claude review with -p (headless) on every invocation

- Per-file loop. Each changed file in its own claude -p session

- --output-format json on every invocation; jq parses the aggregate

- --allowed-tools is an explicit list (no wildcards, no Edit/Write)

- .github/claude-context.md committed; passed via --custom-instructions

- Concurrency capped (xargs -P 4 or simple JS semaphore)

- PreToolUse cost-guard hook denies PRs over the token budget

- Nightly audit job uses Batch API (50% discount, 24h SLA)

- PR comments posted as inline review comments (REST endpoint via gh api) + summary verdict via gh pr review

Run-time

- .github/workflows/claude.yml committed and triggers on pull_request

- Smoke test: open a 1-file PR; review posts within 60s with structured comments

- Stress test: open a 30-file PR; concurrency cap prevents runner exhaustion

- Cost-guard test: open a synthetic 200-file PR; hook denies before loop starts

- Schema lint: validate every JSON output against the file-review schema

- Permissions audit: workflow permissions: block is contents:read + pull-requests:write only

- Tools audit: no --allowed-tools '*' anywhere in .github/workflows/

- Nightly audit Batch API job runs and posts to #audit-drift on completion

Five exam-pattern questions

A GitHub Actions CI pipeline runs Claude Code to review PRs. It processes all 14 modified files in a SINGLE claude -p session. After 8 files the output becomes repetitive and misses issues. By file 14, an inline comment contradicts a decision made on file 3. Why?

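Lost-in-the-middle: one session accumulates context across all 14 files, so conventions established on file 3 have dropped out of attention by file 14. Run one claude -p invocation per file, aggregate the JSON outputs at the end. Each file gets fresh, focused context. Tagged to AP-15.

Your CI/CD workflow hangs when calling `claude review pr.md`. The GitHub Actions runner eventually times out after 6 hours with no review posted. What flag is missing?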

-p (or --print) puts the CLI into headless one-shot mode: it processes a single task, emits output to stdout, and exits. Always pass -p in any non-interactive context. Tagged to AP-16.

A GitHub Action posts inline review comments on PR lines. Claude outputs free-form prose; the workflow uses regex to extract issues, line numbers, and severities. After every Claude wording update, the regex breaks and reviews silently fail. What architectural change fixes this for good?

Pass --output-format json and treat the output as a structured contract: { file, verdict, issues: [{ line, severity, message, suggestion? }], summary }. The workflow parses with jq and posts inline comments from the structured fields through the review-comments REST endpoint (gh api). Regex over prose is brittle by construction; structured-output contracts are the only durable answer. Tagged to AP-17.

Your CI/CD pipeline uses `--allowed-tools '*'` because the team didn't want to maintain a list. A PR description includes a prompt-injection that tricks Claude into running `Bash(rm -rf .)`. Repo state corrupted. What's the rule?

Always declare an explicit list: --allowed-tools "Read,Grep,Glob,Bash(git diff,git log,gh pr diff,gh pr view)". CI review agents never need Edit, Write, or unscoped Bash; they read and report. Tagged to AP-18.

Your Claude Code CI action runs without project context. Reviews flag style-correct code as 'should use named exports' in a default-exports codebase. What YAML field provides the project-specific rubric?

custom_instructions (pointing at .github/claude-context.md). The file documents the project's stack, severity rubric (what counts as a blocker vs nit), and files to skip (lockfiles, generated assets). Don't pass the entire CLAUDE.md: too much context. Pass the CI-relevant slice. Tagged to AP-19.

Frequently asked

Is claude -p the same as the SDK?

claude -p is for shell-driven workflows (CI, cron, dev scripts) where the input is a prompt and the output is a structured response. CI workflows almost always want -p; bespoke automation almost always wants the SDK.

What's the maximum file count per PR before this approach breaks down?

Can I run claude -p on the same PR every time it's updated?

Yes. Use on: pull_request: types: [opened, synchronize]. Synchronize fires on every push to the PR head ref. Idempotency: each run reviews the *current* diff, so old comments stay until they're stale; if you want to dismiss outdated reviews, add a step that dismisses reviews on commits no longer at HEAD through the REST API's review-dismissal endpoint (gh pr review has no dismiss option).

How do I keep the cost predictable if the team merges 100+ PRs a day?

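Combine the three cost controls from the steps above: the per-PR token budget, the cost-guard hook, and a concurrency cap (xargs -P 4 or JS semaphore) that bounds parallel Claude API calls in flight. With all three, monthly cost variance stays inside ±10%.

Should the CI workflow have write access to the repo?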

No, not to repository contents. Use a minimal permissions: block: contents: read, pull-requests: write. The CI agent reads code, runs gh pr diff, posts comments. It never needs to push commits. Same logic that bans write tools in --allowed-tools applies at the GitHub permission layer.

What's in `.github/claude-context.md` vs the project's main CLAUDE.md?

.github/claude-context.md is the trimmed CI rubric: stack one-liner, severity definitions, files to skip, output-format reminder (~40-80 lines). Smaller context = faster review + cheaper tokens.

Can I run claude -p reviews with custom Skills?

Yes. Define .claude/skills/code-reviewer/SKILL.md with the team's review rubric, allowed tools, and output schema. The CI workflow invokes the Skill explicitly: claude review pr -p --skill code-reviewer .... Skills are version-controlled (live in the repo), so the rubric evolves with the codebase and CI behaviour stays in sync.