The problem

What the customer needs

- Run real Python or shell scripts as part of the agent's workflow, not just simulate them.

- Untrusted code stays contained. A misbehaving script does not destroy the host's filesystem or exhaust its memory.

- Predictable termination. A runaway loop or infinite recursion stops at exactly the configured time limit.

- Consistent output shape. The agent sees a predictable JSON contract regardless of which tool ran or how the script printed.

Why naive approaches fail

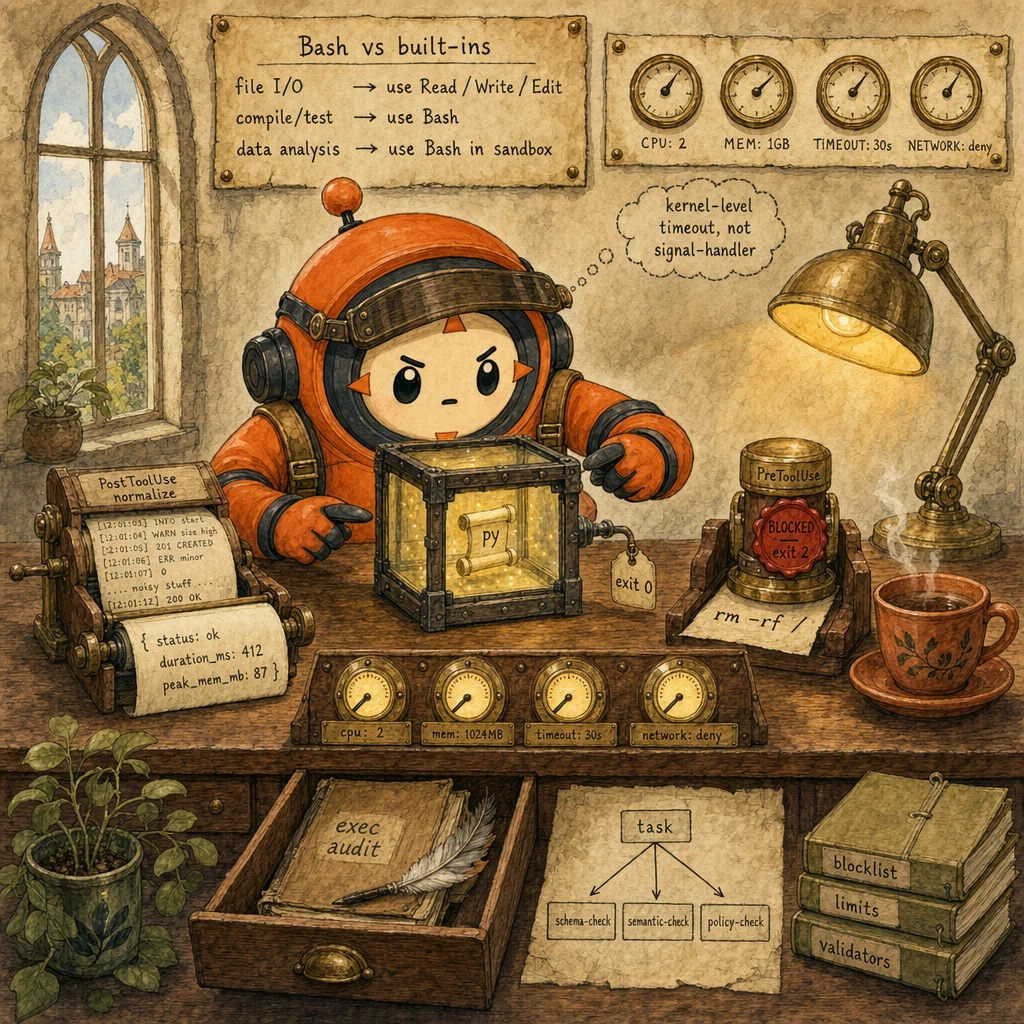

- Use Bash for everything (including `cat file.txt` instead of Read). Audit trail is opaque, file I/O and execution conflate.

- No PreToolUse blocklist. A clever prompt-injection in the alert text gets `rm -rf /prod` to execute.

- No resource limits. A loop allocates 10 GB or runs forever; the sandbox runner is exhausted.

- Heterogeneous raw output passed to the agent. The agent parses inconsistently and routes wrong.

- Schema-only validation. The output matches `{status: string}` but `status` is `'banana'`. The agent acts on nonsense.

What works instead:

- File I/O routes to Read / Write / Edit. Bash is reserved for actual command execution.

- PreToolUse hook on Bash with a destructive blocklist (regex). Exit 2 on match.

- Sandbox runtime (Docker or Firecracker) with kernel-level limits: CPU 2, memory 1GB, timeout 30s, network deny.

- PostToolUse hook normalizes raw output to JSON: `{status, stdout, stderr, duration_ms, peak_memory_mb}`.

- Semantic validator confirms result shape matches the task type.

- Audit log: every code-exec invocation writes an append-only row.

The system

What each part does

5 components, each owns a concept.

Bash Tool with Destructive Blocklist

PreToolUse gate, regex-driven

Bash sits behind a PreToolUse hook with a compiled regex blocklist (rm -rf, sudo, drop database, kill -9, chmod 777, curl ... | sh). Match exits 2 with a model-readable stderr message; agent observes the deny as tool_result: is_error: true and re-plans. No prompt-injection bypass: the blocklist is in code, not in the prompt.

Configuration

matcher: 'Bash'. Blocklist regex compiled at hook-load time. Allowlist of safe binaries (kubectl, docker, journalctl, jq, ps, df, top). Exit 2 with stderr.
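A minimal sketch of how the configuration above could collapse into one decision function. The function name `decide` and the exact regex are illustrative assumptions; the pattern list is not exhaustive, and the allowlist is copied from the configuration line above.

```python
import re

# Destructive patterns named in the configuration (illustrative, not exhaustive).
# Compiled once, at import time, which mirrors "compiled at hook-load time".
BLOCKLIST = re.compile(
    r"\brm\s+-rf\b"
    r"|\bsudo\s"
    r"|\bdrop\s+database\b"
    r"|\bkill\s+-9\b"
    r"|\bchmod\s+777\b"
    r"|\bcurl\b[^|]*\|\s*sh\b",
    re.IGNORECASE,
)

# Allowlist of safe binaries from the configuration.
ALLOWLIST_BINS = {"kubectl", "docker", "journalctl", "jq", "ps", "df", "top"}


def decide(command: str) -> tuple[int, str]:
    """Return (exit_code, stderr_message): 0 allows the command, 2 denies it."""
    cmd = command.strip()
    if BLOCKLIST.search(cmd):
        return 2, f"BLOCKED: destructive pattern in {cmd!r}"
    first = cmd.split()[0] if cmd else ""
    if first not in ALLOWLIST_BINS:
        return 2, f"BLOCKED: binary {first!r} is not on the allowlist"
    return 0, ""
```

The blocklist is checked before the allowlist, so an allowlisted binary that pipes into `sudo` is still denied. An empty command is denied as well, because the empty string is not an allowlisted binary.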

Sandbox Runtime (Docker or Firecracker)

fresh sandbox per invocation

Each code-exec invocation runs in a freshly spawned isolated environment. Docker for most cases; Firecracker for stronger isolation when running fully untrusted user code. The image is cached so spin-up stays fast (~500ms warm). The sandbox is destroyed after the run; no state leaks between invocations.

Configuration

Sandbox config: { image: code-exec:latest, cpus: 2, memory_mb: 1024, timeout_sec: 30, network: deny, ipc: private, pid: private }. Image is read-only with a small writable tmpfs scratch space.
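One way the sandbox config above might map onto `docker run` flags, as a sketch. `SandboxConfig`, `docker_argv`, and the 128 MB tmpfs size are assumptions for illustration; the config only says the scratch space is "small". Docker gives each container its own PID namespace by default, so `pid: private` needs no flag, and `timeout_sec` has no `docker run` equivalent, so the caller has to enforce it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SandboxConfig:
    image: str = "code-exec:latest"
    cpus: int = 2
    memory_mb: int = 1024
    timeout_sec: int = 30   # enforced by the caller, not by a docker flag
    tmpfs_mb: int = 128     # assumed size for the writable scratch space

    def docker_argv(self, command: str) -> list[str]:
        """Build the `docker run` argument list for one invocation."""
        return [
            "docker", "run", "--rm",
            "--cpus", str(self.cpus),
            "--memory", f"{self.memory_mb}m",
            "--memory-swap", f"{self.memory_mb}m",  # equal to --memory: no swap
            "--network", "none",                     # network: deny
            "--ipc", "private",                      # ipc: private
            "--read-only",                           # read-only root filesystem
            "--tmpfs", f"/tmp:size={self.tmpfs_mb}m",
            self.image,
            "bash", "-c", command,
        ]
```

Keeping the limits in one frozen dataclass means a change to the caps is a single reviewed diff rather than a scattered edit across call sites.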

Resource Limit Enforcement

kernel-level via cgroups or systemd

CPU, memory, time, and network limits are enforced from outside the running code (cgroups for Docker, jailer for Firecracker, `systemd-run --property=RuntimeMaxSec=30s` for a raw process spawn). The code under test cannot catch or ignore a kernel-enforced limit. Python signal-handler-based timeouts are the canonical wrong answer: the script can reinstall the handler or swallow the exception with a bare `try`/`except`, and the handler does not run while a long C-extension call is in progress.

Configuration

cgroup limits: cpu.max="200000 100000" (2 CPUs), memory.max=1G, network deny via iptables egress rule. Timeout via systemd-run --property=RuntimeMaxSec=30s. Process exit code 137 (128 + SIGKILL) is treated as OOM; 124 (the GNU `timeout` convention) is reported for a timeout.

PostToolUse Output Normalizer

raw bytes to structured JSON

Real shell output is messy: mixed Unix timestamps and ISO 8601, mixed status code conventions, multiline stack traces, ANSI color codes. The PostToolUse hook normalizes everything into a stable contract: {status, stdout, stderr, duration_ms, peak_memory_mb, exit_code}. Timestamps converted to ISO 8601 UTC. Color codes stripped. Long stdout truncated.

Configuration

matcher: 'Bash'. Hook reads stdin: {tool_name, tool_input, tool_result, latency_ms, peak_memory_mb}. Returns normalized JSON. Truncates stdout > 4 KB. Strips ANSI codes. Always exits 0.

Semantic Result Validator

task-aware sanity check

Schema validation guarantees shape; semantic validation guarantees meaning. Given the task context, check that the normalized result is sensible: passed + failed + skipped equals total; counts are non-negative. Failed semantic validation routes the agent back with a specific error message; it does NOT propagate bad data.

Configuration

Per-task validators registered by Skill. Test-runner validator: passed + failed + skipped == total. Data-analysis validator: row count > 0; required columns present.
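A sketch of what "validators registered per task" could look like. The registry, the `register` decorator, and the data-analysis field names (`row_count`, `columns`, `required_columns`) are assumptions made for illustration; only the checks themselves come from the configuration above.

```python
from typing import Callable

Validator = Callable[[dict], list[str]]
VALIDATORS: dict[str, Validator] = {}


def register(task_type: str):
    """Decorator that registers a validator under a task type."""
    def wrap(fn: Validator) -> Validator:
        VALIDATORS[task_type] = fn
        return fn
    return wrap


@register("test-runner")
def validate_test_runner(summary: dict) -> list[str]:
    errors = []
    counts = {k: summary.get(k, 0) for k in ("passed", "failed", "skipped", "total")}
    if any(v < 0 for v in counts.values()):
        errors.append(f"negative counts: {counts}")
    if counts["passed"] + counts["failed"] + counts["skipped"] != counts["total"]:
        errors.append(f"passed + failed + skipped != total: {counts}")
    return errors


@register("data-analysis")
def validate_data_analysis(result: dict) -> list[str]:
    errors = []
    if result.get("row_count", 0) <= 0:
        errors.append("row count must be > 0")
    missing = set(result.get("required_columns", [])) - set(result.get("columns", []))
    if missing:
        errors.append(f"missing required columns: {sorted(missing)}")
    return errors


def validate(task_type: str, result: dict) -> list[str]:
    """Return a list of specific failures; an empty list means the result is sane."""
    validator = VALIDATORS.get(task_type)
    if validator is None:
        return [f"no validator registered for task type {task_type!r}"]
    return validator(result)
```

Returning the list of failed checks, rather than a bare boolean, is what lets the agent receive a specific error message instead of silently acting on bad data.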

Data flow

Eight steps to production

Route file I/O to built-in tools; reserve Bash for execution

The first layer of safety is tool selection. cat file.txt should be a Read call, not a Bash call. grep -r foo should be a Grep call. find . -name '*.py' should be a Glob call. Bash is reserved for what the built-ins cannot do: compile code, run tests, execute a Python data-analysis script. This single distinction shrinks the Bash blast-radius by ~80%.

```python
# Wrong: Bash for everything
# tool_use: Bash, command: "cat config.json"
# tool_use: Bash, command: "grep -r 'TODO' src/"

# Right: built-in tools for I/O; Bash only for execution
# tool_use: Read, file_path: "config.json"
# tool_use: Grep, pattern: "TODO", path: "src/"
# tool_use: Glob, pattern: "**/*.py"

# Bash legitimately for execution:
# tool_use: Bash, command: "pytest tests/ --json-report"
# tool_use: Bash, command: "python analyze.py --input data.csv"
```

```python
import re

# Commands that are really file I/O and have a built-in tool equivalent.
FILE_IO_VIA_BASH = re.compile(
    r"^\s*(cat|head|tail|less|more|grep|find|ls|wc|sort|uniq|cut|awk|sed)\s",
)


def warn_on_io_via_bash(tool_name: str, command: str) -> str | None:
    """Return a warning when Bash is used for plain file I/O, else None."""
    if tool_name != "Bash":
        return None
    if FILE_IO_VIA_BASH.match(command):
        first = command.strip().split()[0]
        return (
            f"Bash command starts with {first!r}. "
            f"For file I/O prefer Read / Grep / Glob; reserve Bash for execution."
        )
    return None
```

Wire the PreToolUse blocklist hook on Bash

The destructive blocklist runs before the sandbox is even spawned. Compiled regex against rm -rf, sudo, drop database, kill -9, chmod 777, curl | sh. Match exits 2; the agent sees the deny as a tool_result with is_error: true and re-plans.

```python
# .claude/hooks/codeexec_blocklist.py
import json
import re
import sys

# Compiled once at hook-load time. Word boundaries are applied per pattern:
# wrapping the whole alternation in \b...\b would miss "sudo -i" and the
# common "echo x > /etc/..." redirect, because neither ends or starts on a
# word boundary.
BLOCKLIST = re.compile(
    r"\brm\s+-rf\b"
    r"|\bsudo\s+"
    r"|\bdrop\s+(database|table)\b"
    r"|\bkill\s+-9\b"
    r"|\bchmod\s+777\b"
    r"|>\s*/(etc|usr|var)/"
    r"|\bcurl\s+[^|]+\|\s*sh\b",
    re.IGNORECASE,
)

ALLOWLIST_BINS = {
    "python", "python3", "pytest", "node", "npm", "pnpm",
    "tsc", "eslint", "prettier", "ruff", "black", "go", "cargo",
    "kubectl", "docker", "jq",
}


def main():
    payload = json.loads(sys.stdin.read())
    if payload["tool_name"] != "Bash":
        sys.exit(0)

    cmd = (payload["tool_input"].get("command") or "").strip()

    # Deny on any destructive pattern; exit code 2 surfaces stderr to the model.
    if BLOCKLIST.search(cmd):
        print(f"BLOCKED: command matches destructive pattern. command={cmd!r}", file=sys.stderr)
        sys.exit(2)

    # Deny any binary that is not explicitly allowlisted.
    first = cmd.split()[0] if cmd else ""
    if first and first not in ALLOWLIST_BINS:
        print(f"BLOCKED: binary {first!r} not on the code-exec allowlist.", file=sys.stderr)
        sys.exit(2)

    sys.exit(0)


if __name__ == "__main__":
    main()
```

Spawn the sandbox with kernel-level limits

Once the blocklist allows the command, the sandbox runs the actual code. Docker is the default; Firecracker for stronger isolation. The sandbox is fresh per invocation, runs read-only with a tmpfs scratch space, and enforces CPU / memory / time / network limits at the kernel level via cgroups.

```python
import subprocess
import time
import uuid


def run_in_sandbox(command: str, timeout_s: int = 30) -> dict:
    """Run command in a Docker sandbox with kernel-level limits."""
    # Unique name so the container itself can be killed on timeout.
    name = f"code-exec-{uuid.uuid4().hex[:12]}"
    start = time.monotonic()
    try:
        result = subprocess.run(
            [
                "docker", "run", "--rm", "--name", name,
                "--cpus", "2",
                "--memory", "1024m",
                "--memory-swap", "1024m",   # equal to --memory: no swap
                "--network", "none",
                "--read-only",
                "--tmpfs", "/tmp:size=128m",
                "--ipc", "private",
                # The PID namespace is private by default; `--pid` only
                # accepts "host" or "container:<id>", so no flag is passed.
                "code-exec:latest",
                "bash", "-c", command,
            ],
            capture_output=True, text=True,
            timeout=timeout_s,
        )
        return {
            "status": "ok" if result.returncode == 0 else "exit_nonzero",
            "exit_code": result.returncode,
            "stdout": result.stdout,
            "stderr": result.stderr,
            "duration_ms": int((time.monotonic() - start) * 1000),
        }
    except subprocess.TimeoutExpired:
        # The timeout above only kills the docker CLI process; the container
        # keeps running unless it is killed explicitly.
        subprocess.run(["docker", "kill", name], capture_output=True)
        return {
            "status": "timeout",
            "exit_code": 124,
            "stdout": "",
            "stderr": f"command exceeded {timeout_s}s timeout",
            "duration_ms": timeout_s * 1000,
        }
```

Use kernel timeouts, not Python signal handlers

The canonical wrong answer to 'how do I time out a script after 30 seconds?' is signal.signal(signal.SIGALRM, handler). The script under test can swallow the resulting exception (try: ... except: pass), can reinstall or ignore the handler, and the handler does not run while a C extension is executing. Enforce the limit from outside the process instead: systemd-run --property=RuntimeMaxSec=30s, or a supervisor that kills the container at the deadline. A SIGKILL delivered from outside cannot be caught.

```python
# Wrong: Python signal handler
# import signal
# def handler(signum, frame):
#     raise TimeoutError("timed out")
# signal.signal(signal.SIGALRM, handler)
# signal.alarm(30)
# # User script can do try/except and swallow the TimeoutError.

# Right: timeout enforced from outside the process via systemd-run
import subprocess


def run_with_kernel_timeout(command: str, timeout_s: int = 30) -> int:
    result = subprocess.run(
        [
            "systemd-run", "--user", "--scope",
            # RuntimeMaxSec caps total run time (scope units accept it on
            # recent systemd versions); TimeoutStartSec only bounds start-up.
            f"--property=RuntimeMaxSec={timeout_s}s",
            "--property=MemoryMax=1G",
            "--property=CPUQuota=200%",
            "bash", "-c", command,
        ],
    )
    # At the deadline systemd stops the scope (SIGTERM first, then SIGKILL
    # once the stop timeout elapses); the user script cannot prevent that.
    # A non-zero return code is therefore expected for a timed-out command.
    return result.returncode
```

PostToolUse output normalizer

Real shell output is messy. The PostToolUse hook normalizes everything into a stable contract before the agent sees it. Strip ANSI color codes, convert timestamps to ISO 8601 UTC, truncate stdout / stderr above 4 KB.

```python
import datetime
import json
import re
import sys

ANSI = re.compile(r"\x1b\[[0-9;]*m")
# 10-digit integers starting 16-19 are assumed to be Unix timestamps (2020-2033).
UNIX_TS = re.compile(r"\b1[6-9]\d{8}\b")
# Exit-code to status mapping used by the normalized contract.
STATUS_BY_EXIT = {0: "ok", 137: "oom", 124: "timeout"}


def _to_iso_utc(match: re.Match) -> str:
    ts = datetime.datetime.fromtimestamp(int(match.group()), tz=datetime.timezone.utc)
    return ts.strftime("%Y-%m-%dT%H:%M:%SZ")


def normalize_stdout(text: str, max_bytes: int = 4096) -> str:
    text = ANSI.sub("", text)            # strip ANSI color codes
    text = UNIX_TS.sub(_to_iso_utc, text)  # Unix timestamps -> ISO 8601 UTC
    # Length is measured in characters, a close proxy for bytes on ASCII output.
    if len(text) > max_bytes:
        head = text[: max_bytes // 2]
        tail = text[-max_bytes // 2 :]
        omitted_lines = text[max_bytes // 2 : -max_bytes // 2].count("\n")
        text = f"{head}\n... truncated ({omitted_lines} more lines) ...\n{tail}"
    return text


def main():
    payload = json.loads(sys.stdin.read())
    if payload["tool_name"] != "Bash":
        print(json.dumps(payload))
        sys.exit(0)

    raw_result = payload.get("tool_result") or {}
    exit_code = raw_result.get("exit_code", -1)
    normalized = {
        "status": STATUS_BY_EXIT.get(exit_code, raw_result.get("status", "unknown")),
        "exit_code": exit_code,
        "stdout": normalize_stdout(raw_result.get("stdout", "")),
        "stderr": normalize_stdout(raw_result.get("stderr", "")),
        "duration_ms": raw_result.get("duration_ms"),
        "peak_memory_mb": raw_result.get("peak_memory_mb"),
    }
    payload["tool_result"] = normalized
    print(json.dumps(payload))
    sys.exit(0)  # this hook shapes output; it never denies


if __name__ == "__main__":
    main()
```

Validate the result semantically

Schema validation guarantees shape; semantic validation guarantees meaning. After normalization, run a task-specific validator. For a test runner: passed + failed + skipped == total; counts are non-negative. Failed validation returns is_error: true with the specific check that failed.

```python
import json
from typing import TypedDict


class ValidationResult(TypedDict):
    valid: bool
    errors: list[str]


def validate_pytest_output(result: dict) -> ValidationResult:
    errors = []
    try:
        report = json.loads(result["stdout"])
    except json.JSONDecodeError:
        errors.append("pytest stdout is not valid JSON")
        return {"valid": False, "errors": errors}

    summary = report.get("summary", {})
    passed = summary.get("passed", 0)
    failed = summary.get("failed", 0)
    skipped = summary.get("skipped", 0)
    total = summary.get("total", 0)

    if any(v < 0 for v in (passed, failed, skipped, total)):
        errors.append(f"negative test counts in summary: {summary}")
    if passed + failed + skipped != total:
        errors.append(f"counts inconsistent: passed={passed} + failed={failed} + skipped={skipped} != total={total}")
    if total == 0 and result.get("exit_code") == 0:
        errors.append("pytest reported total=0 but exited 0; expected tests to run")

    return {"valid": len(errors) == 0, "errors": errors}
```

Test resource limits and timeouts adversarially

Ship the sandbox config; then break it on purpose. Run scripts that allocate 10 GB; verify the kernel kills them at 1 GB with exit code 137. Run busy loops; verify timeout at 30s with exit code 124. Run network-egress attempts; verify the deny rule fires.

```python
# Both tests call run_in_sandbox() from the sandbox step.

def adversarial_oom_test() -> bool:
    """Allocate 10 GB. Sandbox should kill at 1 GB cap."""
    code = "x = bytearray(10 * 1024 * 1024 * 1024)"
    result = run_in_sandbox(f"python3 -c {code!r}")
    if result["exit_code"] != 137:
        print(f"FAIL: expected exit 137 (OOM kill), got {result['exit_code']}")
        return False
    print("PASS: OOM kill at 1 GB cap")
    return True


def adversarial_timeout_test() -> bool:
    """Busy loop. Sandbox should kill at 30s cap."""
    result = run_in_sandbox("python3 -c 'while True: pass'", timeout_s=30)
    if result["status"] != "timeout":
        print(f"FAIL: expected status=timeout, got {result['status']}")
        return False
    print("PASS: timeout kill at 30s")
    return True
```

Audit-log every code-exec invocation

Every code-exec invocation writes an append-only row to durable storage: timestamp, command, hook decisions, sandbox metrics (duration, peak memory, exit code), validation outcome, agent that requested it. Retain for at least 90 days.

```python
import datetime
import json
from pathlib import Path

AUDIT_DIR = Path("audit")
AUDIT_DIR.mkdir(exist_ok=True)


def audit_code_exec(command, pre_decision, sandbox_result, validation, agent_id):
    # One timezone-aware UTC clock reading drives both the row timestamp and
    # the file name, so a row never lands in the wrong day's file.
    now = datetime.datetime.now(datetime.timezone.utc)
    row = {
        "ts": now.strftime("%Y-%m-%dT%H:%M:%S.%fZ"),
        "agent_id": agent_id,
        "command": command[:200],  # truncated to keep rows small
        "pre_decision": pre_decision,
        "sandbox": {
            "status": sandbox_result.get("status"),
            "exit_code": sandbox_result.get("exit_code"),
            "duration_ms": sandbox_result.get("duration_ms"),
            "peak_memory_mb": sandbox_result.get("peak_memory_mb"),
        },
        "validation": validation,
    }
    # Append mode: existing rows are never rewritten.
    with open(AUDIT_DIR / f"{now.date().isoformat()}.jsonl", "a") as f:
        f.write(json.dumps(row) + "\n")
```

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Reading the contents of `config.json` from agent code | Use the Read tool. file_path: 'config.json'. | Use Bash. command: 'cat config.json'. | Built-in tools are auditable, fast, and structurally distinct from execution. Bash conflates file I/O with execution and bloats the audit trail; the PreToolUse blocklist also has to reason about every cat/grep/find. |
| Preventing destructive Bash commands at runtime | PreToolUse hook on Bash with a compiled regex blocklist. Exit 2 on match. | System prompt instruction: 'never run destructive commands'. | Prompts are probabilistic and leak under prompt injection or unusual phrasing. Hooks are deterministic, run before the sandbox spawns, and emit a model-readable stderr message that becomes a tool_result is_error: true. |
| Stopping a runaway script after 30 seconds | Timeout enforced from outside the process: systemd-run --property=RuntimeMaxSec=30s, or a supervisor that kills the container at the deadline. | Python signal handler: signal.signal(signal.SIGALRM, handler). | Signal handlers can be caught, ignored, or never delivered (e.g. blocked inside a C extension). A timeout enforced from outside cannot be caught by the running code; the kernel delivers SIGKILL and the process exits regardless. |
| Validating that a test-runner result is sane | Semantic validation: passed + failed + skipped equals total; counts are non-negative; total > 0 for a non-empty run. | Schema validation only: the JSON has the expected shape. | Schema guarantees shape; semantic guarantees meaning. A schema-valid output of {passed: -5, failed: 2, total: 0} is structurally fine but semantically nonsense. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

Agent calls Bash with cat data.json, grep -r foo, find . -name *.py. Audit trail is opaque and the PreToolUse blocklist has to reason about every cat / grep / find.

Route file I/O to built-in tools: Read, Grep, Glob. Reserve Bash for actual command execution. The first layer of safety is tool selection.

A clever prompt-injection in alert text gets rm -rf /prod past the agent. The Bash command runs because no hook scanned it first.

PreToolUse hook with a compiled regex blocklist (rm -rf, sudo, drop database, kill -9, chmod 777, curl | sh). Match exits 2 with stderr.

An agent's Python script allocates 10 GB and crashes the runner. Another runs an infinite loop and starves the queue.

AP-CODEEXEC-03: Sandbox config with kernel-level limits: CPU 2, memory 1024 MB, timeout 30 s, network deny. Enforced via Docker cgroups, Firecracker jailer, or systemd-run scopes.

Bash output goes straight back: mixed Unix timestamps and ISO 8601, ANSI color codes, multiline stack traces. The agent parses inconsistently.

AP-CODEEXEC-04: PostToolUse hook normalizes everything: strip ANSI, convert timestamps to ISO 8601 UTC, truncate stdout above 4 KB, emit a stable contract.

The result JSON has the expected shape but passed: -5, total: 0. Schema-valid; semantically nonsense. The agent acts on it.

Semantic validators registered per task: passed + failed + skipped equals total; counts are non-negative; total > 0 for a non-empty run.

Cost & latency

Skill body ~500 tokens system + parameters ~50 tokens + working tokens ~1500-3000 input + ~500 output.

Docker image cached in the runner. Warm spin-up is dominated by container start and cgroup setup.

Sandbox spin-up (500 ms) + actual code (1-5 s) + PostToolUse normalization (50 ms) + semantic validation (20 ms).

Most data-analysis or test-runner Skills are short and small. Resource caps prevent the long tail from dominating.

JSONL row ~2-5 KB per invocation. 10 K invocations per month ~20-50 MB. Negligible at object-storage prices.
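The latency and storage figures above are simple sums and products; a sketch of the arithmetic, using only the numbers quoted in this section (the function names are illustrative). At 2 KB per row, 10,000 invocations come to 20 MB; at 5 KB, 50 MB.

```python
SPIN_UP_MS = 500     # warm sandbox spin-up
NORMALIZE_MS = 50    # PostToolUse normalization
VALIDATE_MS = 20     # semantic validation


def end_to_end_ms(code_ms: int) -> int:
    """Total latency for one invocation, given the code's own run time."""
    return SPIN_UP_MS + code_ms + NORMALIZE_MS + VALIDATE_MS


def monthly_audit_mb(invocations: int, row_kb: float) -> float:
    """Audit-log volume per month in decimal megabytes."""
    return invocations * row_kb / 1000


print(end_to_end_ms(1_000), end_to_end_ms(5_000))                # 1570 5570
print(monthly_audit_mb(10_000, 2), monthly_audit_mb(10_000, 5))  # 20.0 50.0
```

The fixed overhead is 570 ms per invocation, so for the 1-5 s scripts quoted above the code's own run time is the dominant term.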

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- File I/O routes to Read / Write / Edit. Bash reserved for actual execution

- PreToolUse hook on Bash with compiled regex blocklist. Allowlist of safe binaries

- Sandbox runtime (Docker or Firecracker) per invocation. Image cached for warm spin-up

- Kernel-level resource limits: CPU 2, memory 1024 MB, timeout 30 s, network deny

- PostToolUse hook normalizes raw output to JSON contract

- Per-task semantic validators (test runner, data analysis, type checker)

- Adversarial test suite: OOM kill, timeout kill, network deny each verified end-to-end

- Audit log: append-only JSONL with command, hook decisions, sandbox metrics, validation outcome

- Retention 90+ days. Indexed by agent_id and timestamp

- Telemetry: per-invocation duration, peak_memory, hook deny rate, validation pass rate

Run-time

- Sandbox image built and cached on every runner; cold-start time documented

- PreToolUse blocklist regex tested against an adversarial 'destructive-attempt' eval set

- Kernel-level resource limits verified end-to-end (OOM kill, timeout kill, network deny)

- PostToolUse normalizer tested against 5 representative output shapes

- Per-task semantic validators registered for every Skill that emits code-exec results

- Audit log retention 90+ days; indexed; replay tool reconstructs any invocation in seconds

- Allowlist of safe binaries kept narrow; PR review on every additional binary

- Telemetry: per-invocation duration, peak memory, hook deny rate, validation pass rate
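The telemetry items in the checklist can be derived from the audit log itself. A minimal sketch, assuming each JSONL row has the `pre_decision`, `sandbox`, and `validation` fields described in the audit-log step, that `pre_decision` is the string "allow" or "deny", and that `validation` carries a boolean `valid`. Those value conventions and the name `summarize_audit` are assumptions.

```python
import json
from statistics import median


def summarize_audit(jsonl_lines):
    """Aggregate audit rows into deny rate, pass rate, and resource stats."""
    rows = [json.loads(line) for line in jsonl_lines if line.strip()]
    if not rows:
        return {"invocations": 0}

    # Denied commands never reach the sandbox, so they are excluded from
    # the duration, memory, and validation statistics.
    ran = [r for r in rows if r.get("pre_decision") != "deny"]
    denied = len(rows) - len(ran)
    sandbox = [r.get("sandbox") or {} for r in ran]
    durations = [s["duration_ms"] for s in sandbox if s.get("duration_ms") is not None]
    peaks = [s["peak_memory_mb"] for s in sandbox if s.get("peak_memory_mb") is not None]
    valid = sum(1 for r in ran if (r.get("validation") or {}).get("valid"))

    return {
        "invocations": len(rows),
        "hook_deny_rate": denied / len(rows),
        "validation_pass_rate": valid / len(ran) if ran else None,
        "median_duration_ms": median(durations) if durations else None,
        "max_peak_memory_mb": max(peaks) if peaks else None,
    }
```

Feeding it the lines of one day's JSONL file gives a daily snapshot; a sudden rise in the hook deny rate is worth a look, since it may point at a prompt-injection attempt or an over-broad blocklist pattern.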

Five exam-pattern questions

A Skill executes Python code. The agent calls Bash with command: 'cat data.json'. What is the correct tool to use here and why?

Use the Read tool with file_path: 'data.json'. Reserve Bash for actual command execution (compiling, running tests, executing a Python data-analysis script). Built-in tools are auditable and structurally distinct from execution; the PreToolUse blocklist has a smaller reasoning surface when Bash usage is narrow. Tagged to AP-CODEEXEC-01.

Your code-execution Skill runs untrusted Python. What prevents the code from running `rm -rf /` or other destructive commands?

A PreToolUse hook on Bash with a compiled regex blocklist (rm -rf, sudo, drop (database|table), kill -9, chmod 777, curl | sh). On match, the hook exits 2 with a model-readable stderr message; the SDK delivers that as a tool_result with is_error: true. The agent observes the deny and re-plans. The blocklist lives in code, not in the prompt; prompt-injection cannot bypass it. Tagged to AP-CODEEXEC-02.

A Skill executes a data-analysis script. The script can run for 5 minutes (max) or 10 seconds (expected). How should you enforce the time limit?

Use a timeout enforced from outside the process and set to the 5-minute maximum: systemd-run --property=RuntimeMaxSec=300s, or a supervisor that kills the container at the deadline. A kill from outside cannot be caught: the kernel delivers SIGKILL and the process exits regardless. Python signal.signal(signal.SIGALRM, ...) is the canonical wrong answer because the user script can try: ... except: pass it. Exit code 124 is the conventional timeout signature. Tagged to AP-CODEEXEC-03.

A PostToolUse hook normalizes code-execution output. The raw output is heterogeneous: Unix timestamp, ISO 8601 date, status code, ANSI color codes, multi-line stack trace. How should the hook normalize this?

Emit a stable contract: {status, exit_code, stdout, stderr, duration_ms, peak_memory_mb}. Strip ANSI color codes via regex. Convert Unix timestamps to ISO 8601 UTC. Truncate stdout above 4 KB with a ... truncated (N more lines) ... marker. Map exit_code to status (0 -> ok, 137 -> oom, 124 -> timeout). Always exit the hook with code 0; this hook is for shape, not for denial. Tagged to AP-CODEEXEC-04.

A Skill validates the result semantically. It runs a test suite and gets back: `{passed: 5, failed: 1, skipped: 0, total: 6}`. What semantic check should validate this result?

(1) passed + failed + skipped == total (here 5 + 1 + 0 = 6: passes). (2) All counts are non-negative. (3) total > 0 for a non-empty run. If any check fails, return is_error: true with the specific failure. Schema validation alone catches malformed JSON; semantic validation catches schema-valid nonsense like {passed: -5, total: 0}. Tagged to AP-CODEEXEC-05.

Frequently asked

What languages can a code-execution Skill run?

python:3.12-slim plus a few preinstalled libraries covers 90% of use cases.

Can code access the network?

--network none denies all egress at the kernel level. Opt in for specific tasks by spawning the sandbox with --network bridge and specific iptables rules.

What happens if code runs out of memory?

The kernel kills the process (exit code 137), and the failure surfaces as status: oom in the normalized result.

Can code persist state across Skill invocations?

How do I validate output semantically?

Can a Skill call another Skill that does code execution?

What is the timeout for code execution?

It is set by the sandbox_timeout_sec parameter in the Skill frontmatter. The kernel enforces it; the running code cannot extend or ignore it.