The problem

What the customer needs

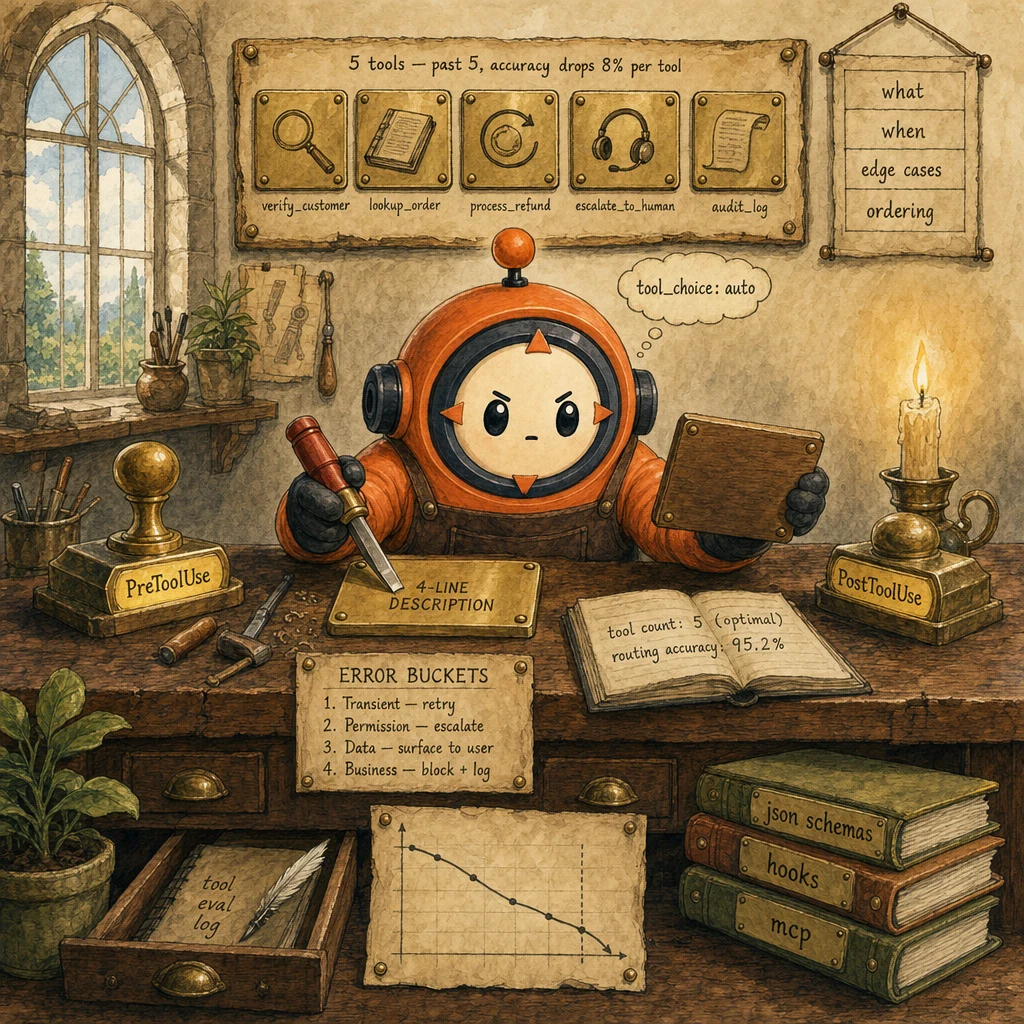

- A tool registry the agent routes accurately. The right tool fires on the first try ≥ 95% of the time.

- Risky operations gated structurally. Refund caps, destructive Bash commands, and write access are policed by hooks, not prompts.

- Errors that the agent can reason about. A permission-denied looks different from a transient timeout, and the agent retries accordingly.

Why naive approaches fail

- 15-tool registry → routing accuracy drops 8% per tool past 5; the agent alternates and misses obvious matches.

- Vague one-line tool descriptions → agent picks the wrong tool ~12% of the time.

- Prompt-only policy enforcement ('never refund > $500') → leaks 3-5% in production despite emphatic phrasing.

- Tool count per agent ≤ 5; rare tools moved to specialist sub-agents

- Every tool description follows the 4-line pattern (what / when / edge cases / ordering)

- PreToolUse hook gates every policy-bearing tool; exit 2 on violation

- PostToolUse hook normalizes outputs and logs every call to the audit trail

- Tool errors emit one of the 4 structured buckets (Transient · Permission · Data · Business)

- MCP servers used for cross-agent tool sharing; no inline duplication

The system

What each part does

Five components, each owning one concept.

Tool Registry (4-5 Tools)

the optimum, not the maximum

The agent's toolbox. Empirically, 4-5 tools is the routing-accuracy sweet spot; past that, accuracy drops ~8% per added tool because descriptions overlap and the model alternates between similar tools. If the use case needs more tools, split into specialist sub-agents, each with its own 4-5-tool registry, and route between them with a triage classifier.

Configuration

Cap: 4-5 tools. Beyond: split agents. Don't cram a customer-support tool, a refund tool, a sentiment tool, and 11 admin tools into one agent. That's three agents pretending to be one.
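The cap can be checked mechanically. The following is a minimal sketch, assuming each agent's registry is available as a plain list of tool names; `MAX_TOOLS_PER_AGENT` and `oversized_agents` are illustrative names, not part of any SDK.

```python
MAX_TOOLS_PER_AGENT = 5  # cap taken from the guidance above


def oversized_agents(registries: dict[str, list[str]],
                     cap: int = MAX_TOOLS_PER_AGENT) -> dict[str, int]:
    """Return {agent_name: tool_count} for every agent whose registry exceeds the cap."""
    return {name: len(tools) for name, tools in registries.items() if len(tools) > cap}


registries = {
    "support": ["verify_customer", "lookup_order", "process_refund",
                "escalate_to_human", "audit_log"],
    "admin": ["create_user", "delete_user", "reset_password",
              "lock_account", "unlock_account", "audit_admin"],
}

print(oversized_agents(registries))  # {'admin': 6}
```

An empty result means every agent is within the cap; a non-empty one names the agents that are candidates for a split.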

4-Line Description Pattern

what · when · edge cases · ordering

The canonical tool description shape. Line 1: what the tool does. Line 2: when to call it. Line 3: edge cases (returns, failure modes). Line 4: ordering (which tools must come before / after). This pattern makes routing structural. The model reads the pattern in every description and learns the shape. Vague one-liners produce ~12% wrong-tool selection; the 4-line pattern drops it below 3%.

Configuration

description: "Look up a customer by customer_id and confirm they are active.\nUse this BEFORE any other tool that mentions the customer.\nEdge cases: returns 'not_found' if customer_id is missing.\nAlways run before lookup_order or process_refund."
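A description in this shape is easy to lint. The sketch below only checks structure (exactly four non-blank lines); it cannot judge whether each line says the right thing. `lint_description` is a hypothetical helper name.

```python
def lint_description(description: str) -> list[str]:
    """Return a list of problems; an empty list means the description passes."""
    problems = []
    lines = [line.strip() for line in description.split("\n")]
    if len(lines) != 4:
        problems.append(f"expected 4 lines, found {len(lines)}")
    if any(not line for line in lines):
        problems.append("blank line in description")
    return problems


good = (
    "Look up a customer by customer_id and confirm they are active.\n"
    "Use this BEFORE any other tool that mentions the customer.\n"
    "Edge cases: returns 'not_found' if customer_id is missing.\n"
    "Always run before lookup_order or process_refund."
)
vague = "verifies a customer"

print(lint_description(good))   # []
print(lint_description(vague))  # ['expected 4 lines, found 1']
```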

PreToolUse Hook (Policy Gate)

deterministic, before exec

Sits between the model's tool_use request and the actual tool execution. Reads tool_input (e.g., refund_amount), compares it to policy (amount <= cap), and exits 0 (allow) or 2 (deny with a stderr message). A deny routes the model back with the policy reason, and the agent re-plans. This is the single most effective lever for converting probabilistic prompt-only policies into deterministic gates.

Configuration

matcher: "process_refund". Hook reads stdin JSON: {tool_name, tool_input}. Exits 0 to allow, exits 2 with stderr to deny. SDK forwards stderr back to the model as a tool_result with is_error=true.
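Keeping the decision in a pure function makes the gate easy to unit-test for allow and deny without spawning a subprocess. This is a sketch under assumptions: `refund_decision` is an illustrative name, the 500 cap mirrors the example policy, and denying a missing or non-numeric amount (failing closed) is a design choice of this sketch rather than something the hook contract requires.

```python
def refund_decision(payload: dict, cap: float = 500.0) -> tuple[int, str]:
    """Return (exit_code, stderr_message): exit code 0 allows, 2 denies."""
    if payload.get("tool_name") != "process_refund":
        return 0, ""  # not a policy-bearing tool for this hook
    amount = payload.get("tool_input", {}).get("amount")
    # bool is a subclass of int, so reject it explicitly
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        return 2, "amount missing or not numeric; supply a numeric refund amount"
    if amount > cap:
        return 2, (f"refund {amount} exceeds policy cap {cap}; "
                   "escalate via escalate_to_human or reduce the amount")
    return 0, ""


allowed = refund_decision({"tool_name": "process_refund", "tool_input": {"amount": 120}})
denied = refund_decision({"tool_name": "process_refund", "tool_input": {"amount": 900}})
print(allowed)    # (0, '')
print(denied[0])  # 2
```

A thin `main()` would then read stdin, call this function, print the message to stderr, and exit with the returned code.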

PostToolUse Hook (Normalization + Audit)

after exec, before next turn

Fires AFTER the tool runs but BEFORE the result is fed to the model. Normalizes raw outputs (timestamps to ISO-8601, status codes to enum names, field renames), captures side-effect signals, and writes the canonical audit log entry. Without it, the model sees inconsistent output shapes across calls; with it, every call has a predictable contract and an audit trail.

Configuration

matcher: '*'. Hook reads stdin: {tool_name, tool_input, tool_result}. Transforms tool_result into the normalized shape. Writes an audit row to a durable log. Always exits 0 (it never denies; that's PreToolUse's job).
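Two of the normalizations named above, status codes to enum names and timestamps to ISO-8601, can be sketched as small helpers. The numeric codes in `STATUS_NAMES` are hypothetical; a real backend's mapping would replace them.

```python
from datetime import datetime, timezone

# Hypothetical numeric status codes; substitute the backend's real mapping.
STATUS_NAMES = {0: "PENDING", 1: "SHIPPED", 2: "DELIVERED"}


def status_name(raw_status) -> str:
    """Map a numeric code or free-form string to an upper-case enum name."""
    if isinstance(raw_status, bool):  # bool is an int subclass; treat it as invalid
        return "UNKNOWN"
    if isinstance(raw_status, int):
        return STATUS_NAMES.get(raw_status, "UNKNOWN")
    if isinstance(raw_status, str) and raw_status.strip():
        return raw_status.strip().upper()
    return "UNKNOWN"


def epoch_to_iso8601(seconds: float) -> str:
    """Render Unix epoch seconds as an ISO-8601 UTC timestamp with a trailing Z."""
    return datetime.fromtimestamp(seconds, tz=timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


print(status_name(1))             # SHIPPED
print(status_name(" shipped "))   # SHIPPED
print(epoch_to_iso8601(0))        # 1970-01-01T00:00:00Z
```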

4-Bucket Structured Error Contract

Transient · Permission · Data · Business

Every tool that can fail emits an error in one of four explicit buckets, not a free-form string. The harness reads is_error: true + error.bucket, then routes: Transient → retry; Permission → escalate (don't retry, won't fix itself); Data → surface to user; Business → block + log + escalate. Without this contract, the agent retries permission errors forever and surfaces transient ones as catastrophes.

Configuration

tool_result on failure: { is_error: true, error: { bucket: "Transient"|"Permission"|"Data"|"Business", code, detail, retryable: bool } }. Agent reads bucket and retryable; never branches on detail text.
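The routing rule can be written as one small function on the harness side. This is a sketch that assumes the error payload sits under an `error` key (matching the `error.bucket` reference above); the action names it returns are illustrative labels, not SDK values.

```python
def next_action(tool_result: dict) -> str:
    """Map a tool_result to the harness action implied by its error bucket."""
    if not tool_result.get("is_error"):
        return "continue"
    error = tool_result["error"]
    bucket = error["bucket"]
    if bucket == "Transient":
        # Honor the retryable flag; a non-retryable transient error is escalated
        return "retry" if error.get("retryable", False) else "escalate"
    if bucket == "Permission":
        return "escalate"
    if bucket == "Data":
        return "surface_to_user"
    if bucket == "Business":
        return "block_and_escalate"
    raise ValueError(f"unknown error bucket: {bucket!r}")


forbidden = {"is_error": True,
             "error": {"bucket": "Permission", "code": "FORBIDDEN",
                       "detail": "agent lacks permission", "retryable": False}}
print(next_action(forbidden))  # escalate
```

Note that the function never inspects `detail`; the branch depends only on `bucket` and `retryable`.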

Data flow

Eight steps to production

Cap the registry at 4-5 tools (split otherwise)

Audit your current tool list. Past 5 tools, you are almost certainly losing routing accuracy. The fix is structural: identify the use cases that actually share state vs those that don't, then split into specialist agents with their own 4-5-tool registries. Use a triage classifier (or a top-level coordinator agent) to route the user request to the right specialist.

```python
# AUDIT: count + classify tools
SUPPORT_TOOLS = ["verify_customer", "lookup_order", "process_refund",
                 "escalate_to_human", "audit_log"]                 # 5: at the optimum
ADMIN_TOOLS = ["create_user", "delete_user", "reset_password",
               "lock_account", "unlock_account", "audit_admin"]   # 6: over the cap, split

# WRONG: cram all 11 into one agent
# tools = SUPPORT_TOOLS + ADMIN_TOOLS   # 11 tools. Routing accuracy drops ~32%

# RIGHT: two specialist agents, triage routes between them
def triage(user_request: str) -> str:
    """Tiny keyword classifier. Pick the specialist agent."""
    text = user_request.lower()
    if any(w in text for w in ["refund", "order", "ticket"]):
        return "support"
    if any(w in text for w in ["password", "account", "user"]):
        return "admin"
    return "support"  # default

def route(user_request: str) -> dict:
    specialist = triage(user_request)
    tools = SUPPORT_TOOLS if specialist == "support" else ADMIN_TOOLS
    # run_agent is the project's own agent runner, defined elsewhere
    return run_agent(tools=tools, message=user_request)
```

Write every tool description in the 4-line pattern

Line 1: what (one sentence). Line 2: when (which user intent triggers this). Line 3: edge cases (what happens on failure, missing args). Line 4: ordering (which tools must come before / after). This pattern is the model's structural cue. It reads the pattern across all 5 tool descriptions and routes accordingly. Vague one-liners produce ~12% wrong-tool selection; this pattern drops it below 3%.

```python
# Anthropic 4-line pattern: what / when / edge cases / ordering
TOOLS = [
    {
        "name": "verify_customer",
        "description": (
            "Look up a customer by customer_id and confirm they are active.\n"
            "Use this BEFORE any other tool that mentions the customer.\n"
            "Edge cases: returns 'not_found' if customer_id is missing or stale.\n"
            "Always run before lookup_order or process_refund."
        ),
        "input_schema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string", "pattern": "^cust_[0-9]+$"}},
            "required": ["customer_id"],
        },
    },
    {
        "name": "process_refund",
        "description": (
            "Issue a refund to a verified customer up to the policy cap.\n"
            "Use ONLY after verify_customer has confirmed the customer is active.\n"
            "Edge cases: returns Permission error if amount > policy_cap (handled by hook).\n"
            "Never call before verify_customer; never call twice in one conversation."
        ),
        "input_schema": {
            "type": "object",
            "properties": {
                "customer_id": {"type": "string"},
                "amount": {"type": "number", "minimum": 0},
                "reason": {"type": "string", "enum": ["damage", "wrong_item", "late", "other"]},
            },
            "required": ["customer_id", "amount", "reason"],
        },
    },
]
```

Wire the PreToolUse hook on policy-bearing tools

For every tool that touches money, identity, or destructive state, the PreToolUse hook is the architectural gate. It reads tool_input from stdin JSON, applies the policy check in code (not in a prompt), and exits 0 or 2. Exit 2's stderr message is fed back to the model as a tool_result with is_error: true. The model re-plans with the policy in view.

```python
# .claude/hooks/refund_policy.py
import json
import os
import sys

POLICY_CAP = float(os.environ.get("REFUND_CAP", "500"))

def main():
    payload = json.loads(sys.stdin.read())
    tool_name = payload["tool_name"]
    tool_input = payload["tool_input"]

    if tool_name != "process_refund":
        sys.exit(0)  # not our concern, allow

    amount = tool_input.get("amount", 0)
    if amount > POLICY_CAP:
        # Stderr is fed back to the model as a tool_result with is_error=true
        print(
            f"refund ${amount} exceeds policy cap ${POLICY_CAP}; "
            "escalate via escalate_to_human or reduce the amount",
            file=sys.stderr,
        )
        sys.exit(2)  # DENY

    # Additional structural check: customer_id must be present and well-formed
    if not tool_input.get("customer_id", "").startswith("cust_"):
        print("customer_id missing or malformed; call verify_customer first",
              file=sys.stderr)
        sys.exit(2)

    sys.exit(0)  # allow

if __name__ == "__main__":
    main()
```

Wire the PostToolUse hook for normalization + audit

PostToolUse fires AFTER the tool runs, BEFORE the model sees the result. Two jobs: normalize the output shape (timestamps to ISO-8601, status codes to enum names, ms to seconds, etc.) so the model sees a consistent contract across calls; and write a canonical audit row capturing tool_name, tool_input, normalized_output, latency, and stop_reason context. The audit log is the replay tool when production breaks at turn 18.

```python
# .claude/hooks/postuse_normalize.py
import datetime
import json
import sys

UTC = datetime.timezone.utc

def normalize(tool_name: str, raw: dict) -> dict:
    """Project tool-specific raw output into a stable shape."""
    if tool_name == "lookup_order":
        return {
            "order_id": raw.get("id") or raw.get("order_id"),
            "status": (raw.get("status") or "unknown").upper(),
            "created_at": (
                # Interpret the epoch value as UTC; local time plus "Z" would be wrong
                datetime.datetime
                .fromtimestamp(raw["created_unix"], tz=UTC)
                .strftime("%Y-%m-%dT%H:%M:%SZ")
            ) if "created_unix" in raw else raw.get("created_at"),
            "total_cents": int(raw.get("total_cents", round(raw.get("total_dollars", 0) * 100))),
        }
    if tool_name == "verify_customer":
        return {
            "customer_id": raw.get("customer_id") or raw.get("id"),
            "active": bool(raw.get("active") or raw.get("is_active")),
            "tier": raw.get("tier") or raw.get("plan") or "standard",
        }
    return raw  # tools without a known shape pass through

def main():
    payload = json.loads(sys.stdin.read())
    tool_name = payload["tool_name"]
    raw_result = payload["tool_result"]

    normalized = normalize(tool_name, raw_result)
    payload["tool_result"] = normalized

    # Append canonical audit row
    with open("audit.jsonl", "a") as f:
        f.write(json.dumps({
            # Timezone-aware now(); utcnow() is deprecated since Python 3.12
            "ts": datetime.datetime.now(UTC).isoformat().replace("+00:00", "Z"),
            "tool": tool_name,
            "input": payload["tool_input"],
            "output": normalized,
            "latency_ms": payload.get("latency_ms"),
        }) + "\n")

    # Pipe normalized payload back to stdout. SDK uses this as the new tool_result
    print(json.dumps(payload))
    sys.exit(0)

if __name__ == "__main__":
    main()
```

Emit errors in 4 structured buckets

Every tool that can fail returns an error tagged with one of four buckets: Transient (network blip; retry), Permission (401/403; escalate, don't retry, it won't fix itself), Data (input malformed; surface to user), Business (policy violation; log + escalate). The agent reads bucket and retryable, and routes accordingly. Without this contract, the agent retries permission errors forever and surfaces transient blips as catastrophes.

```python
from enum import Enum
from typing import TypedDict

class ErrorBucket(str, Enum):
    TRANSIENT = "Transient"
    PERMISSION = "Permission"
    DATA = "Data"
    BUSINESS = "Business"

class ToolError(TypedDict):
    bucket: ErrorBucket
    code: str        # e.g. "RATE_LIMITED", "FORBIDDEN", "INVALID_INPUT", "POLICY_BREACH"
    detail: str      # human-readable; for the model
    retryable: bool

def classify(http_status: int, body: dict) -> ToolError:
    """Project arbitrary backend error → 4-bucket contract."""
    if http_status >= 500 or http_status == 429:
        return {"bucket": ErrorBucket.TRANSIENT, "code": "RETRY",
                "detail": f"upstream {http_status}; retry with backoff",
                "retryable": True}
    if http_status in (401, 403):
        return {"bucket": ErrorBucket.PERMISSION, "code": "FORBIDDEN",
                "detail": "agent lacks permission; escalate, do not retry",
                "retryable": False}
    if http_status == 400:
        return {"bucket": ErrorBucket.DATA, "code": "INVALID_INPUT",
                "detail": body.get("message", "input failed validation"),
                "retryable": False}  # retry won't fix; user must reformulate
    if http_status == 422:
        return {"bucket": ErrorBucket.BUSINESS, "code": "POLICY_BREACH",
                "detail": body.get("message", "request violates business policy"),
                "retryable": False}
    return {"bucket": ErrorBucket.TRANSIENT, "code": "UNKNOWN",
            "detail": str(body), "retryable": True}

# Tool wrapper emits the contract via tool_result
def call_lookup_order(order_id: str) -> dict:
    # http_get is the project's own HTTP helper, defined elsewhere
    resp = http_get(f"/orders/{order_id}")
    if resp.status_code != 200:
        return {"is_error": True, "error": classify(resp.status_code, resp.json())}
    return {"is_error": False, "data": resp.json()}
```

Use tool_choice 'auto' for specialists; 'forced' only for mandatory extraction

tool_choice: 'auto' is the right default: the model decides whether to call any tool, and which one, based on the request. tool_choice: 'any' forces the model to call SOME tool (rarely useful). tool_choice: { type: 'tool', name: ... } forces a specific tool, which is only correct for extraction pipelines where the tool is mandatory. Forcing tool_choice on a conversational specialist agent removes the agent's freedom to reason about whether, and which, tool to call.

```python
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

# auto: the right default for specialist agents
def support_agent(message: str):
    return client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=1024,
        tools=SUPPORT_TOOLS,  # full tool definitions (name, description, input_schema)
        tool_choice={"type": "auto"},  # agent decides
        messages=[{"role": "user", "content": message}],
    )

# forced: only for mandatory extraction
def extract_one(email: str):
    return client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=1024,
        tools=[EXTRACT_TOOL],
        tool_choice={"type": "tool", "name": "extract_record"},  # MUST fire
        messages=[{"role": "user", "content": email}],
    )

# any: rarely useful; "must call SOME tool but I won't pick which"
# def some_specialist():
#     return client.messages.create(
#         tool_choice={"type": "any"},
#         ...  # only for unusual flows where any of N tools is acceptable
#     )
```

Share tools across agents via MCP servers

When two agents both need lookup_order or verify_customer, don't duplicate the tool inline. Expose it through an MCP server. Each agent connects to the MCP server, lists the server's tools in its own registry, and gets a single source of truth. Updating the tool's behavior is a single deploy; the agents pick it up automatically. MCP also abstracts auth, observability, and rate-limiting away from each agent.

.claude/mcp.json: declare the MCP servers your project uses

```json
{
  "mcpServers": {
    "crm": {
      "command": "npx",
      "args": ["-y", "@yourorg/crm-mcp-server"],
      "env": {
        "CRM_API_KEY": "${CRM_API_KEY}",
        "CRM_BASE_URL": "https://crm.example.com"
      }
    }
  }
}
```

```python
# In your agent: MCP tools auto-show up in the tools list.
# client is assumed to be an async client (e.g. anthropic.AsyncAnthropic()).
async def support_agent(message: str):
    # The CRM MCP server contributes verify_customer + lookup_order
    return await client.messages.create(
        model="claude-sonnet-4-5",
        max_tokens=1024,
        # tools are auto-loaded from MCP. Your local registry only adds:
        tools=[PROCESS_REFUND_TOOL, ESCALATE_TO_HUMAN_TOOL],
        tool_choice={"type": "auto"},
        messages=[{"role": "user", "content": message}],
        mcp_servers=["crm"],  # fictional pseudo-API; real call sites vary by SDK
    )
```

Test routing accuracy with a 50-intent eval set

Tool design is empirical. Build a 50-intent eval set where each intent has a known correct tool. Run the agent over it, count first-call accuracy. Below 95% routing accuracy means the descriptions need work; below 90% likely means too many tools. Re-run the eval after every tool addition or description tweak.

```python
# eval/tool_routing.py: measure first-call routing accuracy
# client and SUPPORT_TOOLS (full tool definitions) come from the agent module
INTENTS = [
    {"text": "I want a refund for order 12345",
     "expected_first_tool": "verify_customer"},
    {"text": "My order hasn't arrived",
     "expected_first_tool": "verify_customer"},
    {"text": "Cancel my account please",
     "expected_first_tool": "escalate_to_human"},
    # ... 47 more
]

def routing_accuracy() -> dict:
    correct = total = 0
    misses = []
    for intent in INTENTS:
        resp = client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=512,
            tools=SUPPORT_TOOLS,
            tool_choice={"type": "auto"},
            messages=[{"role": "user", "content": intent["text"]}],
        )
        # First tool_use block in the response, or None if the model called no tool
        first_tool = next(
            (b.name for b in resp.content if b.type == "tool_use"),
            None,
        )
        total += 1
        if first_tool == intent["expected_first_tool"]:
            correct += 1
        else:
            misses.append({
                "text": intent["text"],
                "expected": intent["expected_first_tool"],
                "got": first_tool,
            })
    return {
        "accuracy": correct / total if total else 0.0,
        "n": total,
        "misses": misses[:10],  # first 10 for review
    }

# Re-run after every change to the tool registry; gate deploys on >= 95%
```

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Tool count for a specialist agent | 4-5 tools (the optimum); split into specialist sub-agents past 5 | 15 tools 'because the model is smart enough' | Routing accuracy drops ~8% per tool past 5. By 15 tools, accuracy is ~30% lower than at 5. The fix is structural (split the agent), not model-side (bigger model doesn't compensate for ambiguous tool descriptions). |
| Tool description format | 4-line pattern: what / when / edge cases / ordering | One vague sentence ('verifies a customer') | Vague descriptions cause ~12% wrong-tool selection. The 4-line pattern is the model's structural cue. It reads the pattern across all 5 descriptions and routes accordingly. Wrong-tool rate drops below 3%. |
| Refund-cap policy enforcement | PreToolUse hook reads tool_input.amount, exits 2 on violation | System prompt: 'never refund more than $500' | Prompt-only enforcement leaks 3-5% in production despite emphatic phrasing. PreToolUse hooks are deterministic. Exit 2 means deny, full stop. For policy-bearing tools, the hook is the only credible architecture. |
| Tool error contract | 4 buckets (Transient · Permission · Data · Business) + retryable boolean | Free-form error messages parsed by the agent | Free-form errors force the agent to interpret strings; structured buckets let it BRANCH (Transient → retry; Permission → escalate; Data → surface; Business → block + log). Without buckets, the agent retries permission errors forever and panics on transient blips. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

15-tool agent with overlapping descriptions. Routing accuracy at first-call drops to ~65%; the agent alternates between similar tools, sometimes calls 3 tools before settling on the right one. Latency up; cost up; quality down.

AP-ATD-01: Cap at 4-5 tools per agent. Move rare tools (used in <10% of conversations) to specialist sub-agents. Use a triage classifier to route requests to the right sub-agent. Each sub-agent stays at 4-5 tools.
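Which tools count as rare can be read off usage data. The sketch below assumes a hypothetical log shape, one list of tool names per conversation, and applies the <10% threshold from the fix above; `rare_tools` is an illustrative name.

```python
from collections import Counter


def rare_tools(conversations: list[list[str]], threshold: float = 0.10) -> list[str]:
    """Return tools that appear in fewer than `threshold` of conversations."""
    if not conversations:
        return []
    seen = Counter()
    for calls in conversations:
        seen.update(set(calls))  # count each tool at most once per conversation
    total = len(conversations)
    return sorted(tool for tool, n in seen.items() if n / total < threshold)


# 20 conversations: audit_log appears in only one of them (5%)
conversations = [["verify_customer", "lookup_order"]] * 19 + [["verify_customer", "audit_log"]]
print(rare_tools(conversations))  # ['audit_log']
```

Tools that were never called do not appear in the log at all, so they need to be compared against the registry separately.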

Tool descriptions are one-liners ('verifies the customer', 'looks up an order'). Agent misroutes ~12% of calls because it can't tell when each tool applies.

AP-ATD-02: Anthropic 4-line pattern: what / when / edge cases / ordering. Each line targets a specific routing decision the model has to make. Wrong-tool rate drops below 3%.

Refund cap enforced via system-prompt language. Production logs show 3-5% of refunds violate the cap. Audit fails; finance rolls back; trust in the agent drops.

AP-ATD-03: PreToolUse hook reads tool_input.amount, compares to policy, exits 2 with stderr message on violation. Deterministic, not probabilistic. Policy violations drop to 0.

Tool returns 403; agent retries indefinitely. Tool returns 500; agent crashes. Tool returns 400 with malformed input; agent gives up without surfacing the input issue to the user.

AP-ATD-04: 4-bucket structured error contract (Transient · Permission · Data · Business) with retryable boolean. Agent reads bucket, branches: Transient → retry with backoff; Permission → escalate; Data → surface to user; Business → block + log + escalate.
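The "retry with backoff" branch can be sketched as a bounded loop that retries only Transient, retryable errors and returns everything else untouched. The attempt limit, the base delay, and the `error` key layout are assumptions of this sketch; `sleep` is injectable so the loop can be tested without waiting.

```python
import time


def call_with_retry(tool_fn, *, max_attempts: int = 3, base_delay: float = 0.5,
                    sleep=time.sleep) -> dict:
    """Call tool_fn; retry with exponential backoff only on Transient, retryable errors."""
    attempt = 0
    while True:
        attempt += 1
        result = tool_fn()
        if not result.get("is_error"):
            return result
        error = result.get("error", {})
        transient = error.get("bucket") == "Transient" and error.get("retryable", False)
        if not transient or attempt >= max_attempts:
            return result  # caller escalates, surfaces, or blocks based on the bucket
        sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1.0s, 2.0s, ...


# Demo: a tool that always returns a Permission error is called exactly once
attempts = []

def forbidden_tool() -> dict:
    attempts.append(1)
    return {"is_error": True,
            "error": {"bucket": "Permission", "code": "FORBIDDEN",
                      "detail": "agent lacks permission", "retryable": False}}

outcome = call_with_retry(forbidden_tool, sleep=lambda seconds: None)
print(len(attempts), outcome["error"]["bucket"])  # 1 Permission
```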

Agent calls lookup_order before verify_customer. Wrong record returned 12% of the time because the customer_id wasn't validated first. Bad data pollutes downstream decisions.

AP-ATD-05: Tool descriptions explicitly state ordering ('Always run BEFORE process_refund'; 'Use ONLY after verify_customer has confirmed the customer is active'). The 4-line pattern's last line is for ordering precisely because ordering is so often the routing failure.

Cost & latency

Hooks run as subprocesses reading stdin JSON. No LLM call. Pure local Python/TS. The latency is below the noise floor of a typical tool API call.

50 messages × ~500 tokens input + ~50 tokens output at Sonnet 4.5 prices. Cheap insurance against routing regressions; run on every tool registry change and weekly in CI.
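The arithmetic behind one eval run is short enough to write down. The per-token prices below are assumptions for illustration (3 and 15 dollars per million input and output tokens); check the current price list before relying on the result.

```python
def eval_run_cost(n_messages: int, input_tokens: int, output_tokens: int,
                  input_price_per_mtok: float, output_price_per_mtok: float) -> float:
    """Dollar cost of one eval run, given per-message token counts and prices per million tokens."""
    per_message = input_tokens * input_price_per_mtok + output_tokens * output_price_per_mtok
    return n_messages * per_message / 1_000_000


# Assumed prices: $3 / MTok input, $15 / MTok output
cost = eval_run_cost(50, 500, 50, input_price_per_mtok=3.0, output_price_per_mtok=15.0)
print(f"${cost:.4f} per run")  # $0.1125 per run
```

Under those assumed prices a full 50-intent run costs roughly eleven cents, which is why running it on every registry change is affordable.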

Tools array is stable; mark with cache_control: ephemeral. Schema-cache hit rate ≥ 70% drops effective per-call cost ~90% on the tools array.
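In the Messages API, a cache breakpoint is expressed as `cache_control: {"type": "ephemeral"}` on a content block or tool definition; placing it on the last tool covers the whole tools array. A minimal sketch, with `with_cached_tools` as an illustrative helper name:

```python
import copy


def with_cached_tools(tools: list[dict]) -> list[dict]:
    """Return a copy of the tool definitions with a cache breakpoint on the last one."""
    if not tools:
        return []
    cached = copy.deepcopy(tools)  # leave the caller's definitions untouched
    cached[-1]["cache_control"] = {"type": "ephemeral"}
    return cached


tools = [
    {"name": "verify_customer", "description": "...", "input_schema": {"type": "object"}},
    {"name": "lookup_order", "description": "...", "input_schema": {"type": "object"}},
]
cached_tools = with_cached_tools(tools)
print(cached_tools[-1]["cache_control"])  # {'type': 'ephemeral'}
```

The cache only hits while the tools array is byte-for-byte stable, so reordering tools or editing a description invalidates it.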

MCP runs as a separate process or service; network round-trip adds latency. Worth the cost when 2+ agents share the tool. Single source of truth beats inline duplication.

Append-only JSONL write in the PostToolUse hook. At 1000 calls/day, 1MB/day, 30MB/month. Negligible storage. Indispensable for production debugging.
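The storage figures follow from an implied row size of about 1 KB, which is an inference from the numbers above rather than a measured value. A small sketch that makes the estimate explicit and shows how to measure a real row:

```python
import json


def audit_log_megabytes(calls_per_day: int, bytes_per_row: int, days: int = 1) -> float:
    """Estimated audit log size in megabytes (1 MB = 1,000,000 bytes)."""
    return calls_per_day * bytes_per_row * days / 1_000_000


print(audit_log_megabytes(1000, 1000))           # 1.0
print(audit_log_megabytes(1000, 1000, days=30))  # 30.0

# Measure an actual row instead of assuming 1 KB (the sample row is hypothetical)
sample_row = {"ts": "2025-01-01T00:00:00Z", "tool": "lookup_order",
              "input": {"order_id": "12345"}, "output": {"status": "SHIPPED"},
              "latency_ms": 120}
row_bytes = len((json.dumps(sample_row) + "\n").encode("utf-8"))
print(row_bytes)
```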

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Tool count per agent ≤ 5; rare tools moved to specialist sub-agents

- Every tool description follows the 4-line pattern (what / when / edge cases / ordering)

- PreToolUse hook on every policy-bearing tool; exit 2 on violation

- PostToolUse hook normalizes outputs and writes the audit log

- All tool errors emit one of 4 buckets (Transient · Permission · Data · Business) + retryable boolean

- tool_choice: 'auto' on specialist agents; 'forced' only for mandatory extraction

- Shared tools exposed via MCP servers; no inline duplication across agents

- 50-intent routing-accuracy eval set; gate deploys on ≥ 95%

- Agent branches on bucket + retryable; no parsing of error.detail text

- Hook stderr messages are model-readable (specific, actionable, reference policy)

- Audit log: tool_name, tool_input, normalized_output, latency_ms, stop_reason context

Run-time

- Tool registry audit: every agent has ≤ 5 tools; documented in repo

- Description format lint: every tool has 4 lines (what / when / edges / ordering)

- PreToolUse hook on every policy-bearing tool; unit-tested for allow/deny

- PostToolUse hook normalization tested on at least 5 representative output shapes

- 4-bucket error contract enforced in CI: every tool wrapper returns the contract

- 50-intent routing eval runs in CI; gates deploy on ≥ 95% accuracy

- MCP server health checks before agent invocation; degraded mode on outage

- Audit log retained ≥ 90 days; indexed by tool_name + customer_id
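The checklist item on enforcing the 4-bucket contract in CI can be backed by a small validator that every tool wrapper's output is run through in tests. This is a sketch assuming the `{ is_error, error: { bucket, code, detail, retryable } }` shape; `contract_violations` is an illustrative name.

```python
VALID_BUCKETS = ("Transient", "Permission", "Data", "Business")


def contract_violations(result: dict) -> list[str]:
    """Return contract problems for one tool result; an empty list means it conforms."""
    if not isinstance(result.get("is_error"), bool):
        return ["is_error must be a bool"]
    if not result["is_error"]:
        return []  # success results carry no error object to check
    error = result.get("error")
    if not isinstance(error, dict):
        return ["error must be an object when is_error is true"]
    problems = []
    if error.get("bucket") not in VALID_BUCKETS:
        problems.append("bucket must be one of " + ", ".join(VALID_BUCKETS))
    for field in ("code", "detail"):
        if not isinstance(error.get(field), str):
            problems.append(f"{field} must be a string")
    if not isinstance(error.get("retryable"), bool):
        problems.append("retryable must be a bool")
    return problems


free_form = {"is_error": True, "error": "something went wrong"}
print(contract_violations(free_form))  # ['error must be an object when is_error is true']
```

A CI test would call each wrapper against a stubbed failing backend and assert that the returned list is empty.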

Five exam-pattern questions

Your agent has 6 tools. Routing accuracy drops from 95% (with 5 tools) to 87% (with 6). What's the cause and the architectural fix?

lookup_order_status + lookup_order_details → lookup_order) or move the rare tool to a specialist sub-agent and route requests to the right specialist with a triage classifier. Don't 'just use a smarter model'. Bigger models don't compensate for ambiguous tool descriptions. Tagged to AP-ATD-01.

Your tool description is one sentence: 'verifies a customer'. The agent uses it correctly ~88% of the time. What format produces ≥97% accuracy?

AP-ATD-02.

PreToolUse hook fires before tool_use; PostToolUse fires after. Which one blocks risky operations like a refund-cap violation, and why?

A tool returns HTTP 403. Should the agent retry?

bucket: 'Permission', retryable: false. The agent reads bucket and retryable and routes: Permission means 'agent lacks the privilege; escalate, don't retry'. Retry won't fix it (the missing permission won't appear in the next 30 seconds). Without this contract, the agent retries permission errors forever and panics on transient ones, which is exactly the failure mode the buckets exist to prevent. Tagged to AP-ATD-04.

When should tool_choice be `'auto'`, `'any'`, or `{ type: 'tool', name: ... }`?

auto is correct.

Frequently asked

Why does the 4-line description pattern matter so much?

Can I use the 4-line pattern in MCP tool descriptions?

tools[] array as inline tools; the description format is identical. If you ship an MCP server, write the 4-line pattern into the server's tool definitions. Downstream agents inherit the routing accuracy without doing anything.

What if the policy is too complex for a hook?

How do I add a new tool to the registry without breaking routing?

Should every tool emit the 4-bucket error contract?

{ is_error: true, error: { bucket, code, detail, retryable } }. The agent's retry logic relies on it; without uniformity, you'd write per-tool retry code and miss bugs.

When do I split into specialist sub-agents vs add tools to the existing one?

verify_customer + lookup_order + process_refund) can stay in one agent. Tools that don't share state (admin functions vs support functions vs analytics) belong in different agents. Split, route with a triage classifier, each agent stays at 4-5 tools.