The problem

What the customer needs

- Pick up the conversation at turn 15 and still see the customer ID, decision, and contact preference from turn 2.

- Be honored immediately when they say 'I want to speak to a human'. No negotiation, no 'let me try first'.

- Not be re-asked the same clarifying question three turns after they already answered it.

Why naive approaches fail

- Single-block message history hits lost-in-the-middle by turn 9; the agent loses the order ID and re-asks.

- Prompt-only clarification language ('don't repeat questions') leaks in 8% of cases; the agent re-asks anyway.

- Sentiment-triggered escalation creates 50% false positives: angry-but-valid customers get escalated unnecessarily.

Success criteria

- Turn-15 retrieval of customer_id + prior decision = 100% (case-facts pinned, never summarized)

- Repeat-clarification rate < 1% (programmatic prerequisite block, not prompt language)

- False-escalation rate < 5% (policy-gap + explicit-request triggers only, sentiment ignored)

- p95 turn latency < 5s including hook overhead and history compression

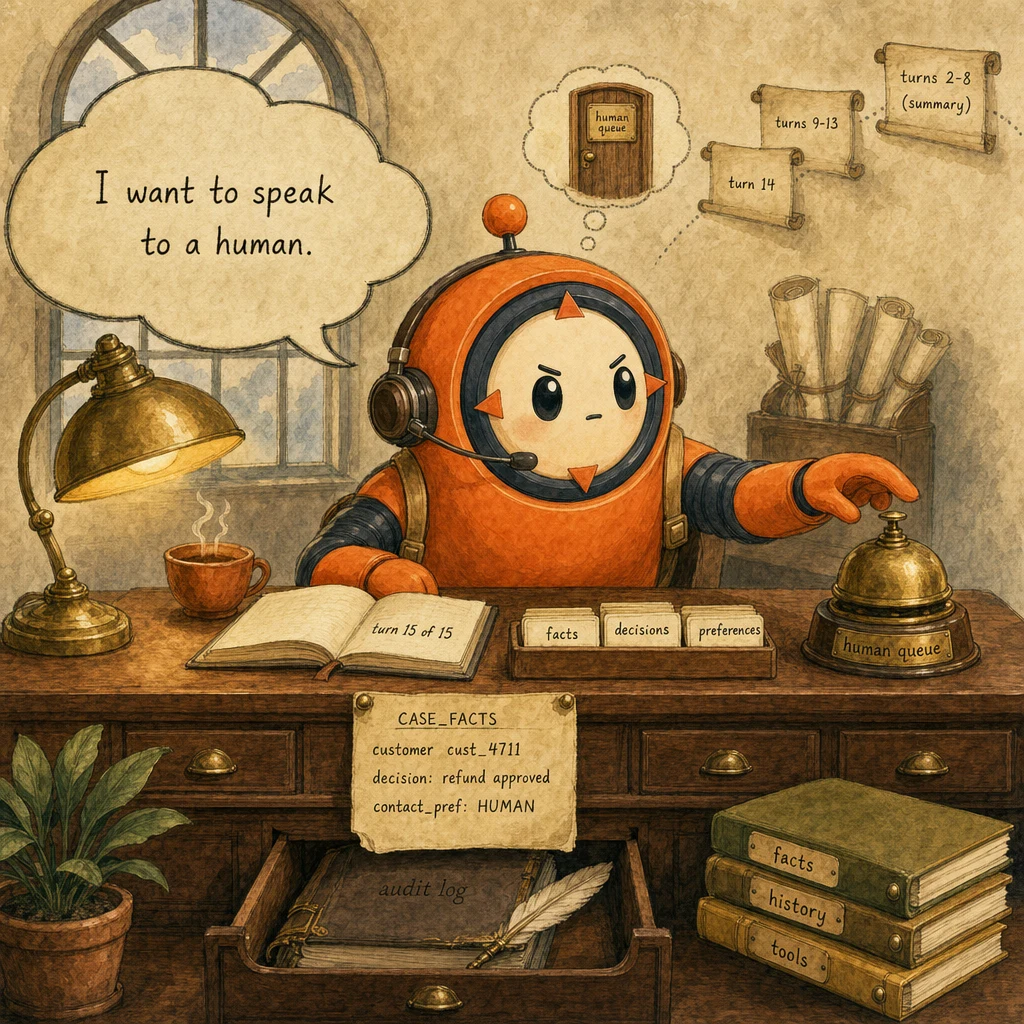

The system

What each part does

Five components, each owning one concept.

Case-Facts Block

immutable customer state, top of prompt

Pinned at the very top of every system-prompt iteration. Holds customer_id, decision_made, contact_preference, escalation_requested, policy_cap. Survives history compression and is re-read every turn. That is the entire point.

Configuration

system: f"CASE_FACTS:\n customer={cust_id} · decision={decision} · contact={pref} · escalated={escalated}". Updated by hooks after any state-changing tool call. Never paraphrased; always exact.
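One way to keep the block exact while still letting hooks update it is to treat every version as a frozen value and build a new one on each change. The sketch below is an illustration, not the guide's implementation: `CaseFacts`, `render_case_facts`, and `apply_tool_result` are hypothetical names, the field names follow the configuration line above, and the tool-result shape (`{"decision": ...}`) is an assumption.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class CaseFacts:
    """Hypothetical container; field names mirror the CASE_FACTS line above."""
    cust_id: str
    decision: str = "none"
    pref: str = "unset"
    escalated: bool = False


def render_case_facts(facts: CaseFacts) -> str:
    # Values are interpolated verbatim, never paraphrased or summarized.
    return (
        "CASE_FACTS:\n"
        f" customer={facts.cust_id} · decision={facts.decision}"
        f" · contact={facts.pref} · escalated={facts.escalated}"
    )


def apply_tool_result(facts: CaseFacts, tool_name: str, result: dict) -> CaseFacts:
    """Return a new CaseFacts after a state-changing tool call (assumed result shape)."""
    if tool_name == "process_refund" and "decision" in result:
        return replace(facts, decision=result["decision"])
    if tool_name == "escalate_to_human":
        return replace(facts, escalated=True)
    return facts  # read-only tools leave the block untouched


facts = CaseFacts(cust_id="cust_4711")
updated = apply_tool_result(facts, "process_refund", {"decision": "refund_approved"})
print(render_case_facts(updated))
```

Because the dataclass is frozen, the only way to change a fact is to produce a new instance, which makes every update an explicit, loggable event.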

Session State Manager

decisions + flags between turns

Tracks the structured state that case-facts cannot: which clarification questions have been answered, which tool results are still in play, whether the customer has explicitly asked for a human. Updated after each tool call. Read by the hook before any subsequent tool dispatch.

Configuration

state: {clarifications_answered: [order_id, refund_or_credit], last_tool_result, escalation_requested: false, contact_preference: 'email'}. Persisted in session store, loaded into prompt as a serialized block.
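A minimal sketch of the persistence side, assuming a hypothetical `InMemorySessionStore` in place of whatever session store is actually deployed. The state keys follow the configuration line above; `mark_clarification_answered` is an illustrative helper, not part of the guide.

```python
import json
from copy import deepcopy

DEFAULT_STATE = {
    "clarifications_answered": [],
    "last_tool_result": None,
    "escalation_requested": False,
    "contact_preference": "email",
}


class InMemorySessionStore:
    """Hypothetical stand-in for a real session store (Redis, a database, ...)."""

    def __init__(self):
        self._rows = {}

    def save(self, customer_id: str, state: dict) -> None:
        # Store JSON text so the persisted form matches what gets serialized.
        self._rows[customer_id] = json.dumps(state)

    def load(self, customer_id: str) -> dict:
        raw = self._rows.get(customer_id)
        return json.loads(raw) if raw is not None else deepcopy(DEFAULT_STATE)


def mark_clarification_answered(state: dict, question: str) -> dict:
    # Idempotent: answering the same question twice records it once.
    if question not in state["clarifications_answered"]:
        state["clarifications_answered"].append(question)
    return state


store = InMemorySessionStore()
state = store.load("cust_4711")
mark_clarification_answered(state, "order_id")
mark_clarification_answered(state, "order_id")
store.save("cust_4711", state)
print(store.load("cust_4711")["clarifications_answered"])
```

Loading returns a deep copy of the defaults, so one customer's answered clarifications cannot leak into another session.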

History Summarizer

turns 2-14 → 3 lines at turn 15

Watches conversation length. When the message list exceeds 15 entries, replaces turns 2 through N-1 with a single 3-line summary that preserves decisions, not transcripts. Case-facts stays untouched at the prompt top. The summary lives in the message list, not in case-facts.

Configuration

if len(messages) > 15: summary = compress_to_3_lines(messages[1:-1]); messages = [messages[0], {role: 'user', content: summary}, messages[-1]]. Keeps token count flat while preserving decision continuity.

Clarification Gate Hook

PreToolUse · prerequisite block

Sits between Claude's tool_use request and tool execution. If a downstream tool needs verified_id and case-facts.verified_id is null, exits 2 with a deterministic message routing Claude to call get_customer first. This is the difference between probabilistic prompt language and 100% prerequisite enforcement.

Configuration

Hook fires before process_refund / update_account / escalate_to_human. Reads case_facts.verified_id and conversation flags. Exit 2 with stderr message routes Claude back; no leakage, no exceptions.

Stop-Reason Loop Control

branch on the field, not the text

Reads stop_reason after every API response. end_turn → exit cleanly. tool_use → execute, append result, continue. max_tokens → save partial state and escalate (never silently truncate). Never branches on response text containing 'done' or 'goodbye'.

Configuration

while True: resp = client.messages.create(...). if resp.stop_reason == "end_turn": return. if resp.stop_reason == "tool_use": dispatch + append. if resp.stop_reason == "max_tokens": persist + escalate.

Data flow

Eight steps to production

Define the case-facts anchor block

Pin the immutable customer facts at the very top of the system prompt. These survive compression, are re-read every turn, and are never paraphrased. The block is the single load-bearing pattern of the whole scenario. Get this wrong and turn 15 forgets turn 2.

```python
from anthropic import Anthropic

client = Anthropic()

def build_system_prompt(case_facts: dict) -> str:
    return f"""You are a conversational support agent.

CASE_FACTS (immutable; re-read every turn; never paraphrased):
- customer_id: {case_facts['customer_id']}
- decision_made: {case_facts.get('decision_made', 'none')}
- contact_preference: {case_facts.get('contact_preference', 'unset')}
- escalation_requested: {case_facts.get('escalation_requested', False)}
- policy_cap: ${case_facts.get('cap', 500)}

Constraints:
- Branch on stop_reason. Never on response text.
- If escalation_requested is True: route to human queue, no negotiation.
- If a clarifying question was already answered, do not re-ask (state below)."""
```

Build the session-state structure

Case-facts holds immutable customer state; session-state holds the conversational state: answered clarifications, last tool result, escalation flag. Together they replace the lost-in-the-middle problem with structural retrieval. The session-state block is loaded into the prompt as a serialized block right after CASE_FACTS.

```python
def build_session_block(state: dict) -> str:
    return f"""SESSION_STATE (updated post-each-turn):
- clarifications_answered: {state.get('clarifications_answered', [])}
- last_tool: {state.get('last_tool')} → {state.get('last_tool_result_summary')}
- escalation_requested: {state.get('escalation_requested', False)}
- contact_preference: {state.get('contact_preference', 'email')}
"""

# After every tool call, update + persist
state['clarifications_answered'].append('order_id')
state['last_tool'] = 'lookup_order'
state['last_tool_result_summary'] = 'order_status=delivered'
session_store.save(case_facts['customer_id'], state)
```

Wire the PreToolUse clarification hook

Programmatic prerequisite enforcement. Before any account-modifying tool, the hook checks case-facts + session-state. Missing prerequisites → exit 2 with a structured stderr message; Claude reads it and routes to the prerequisite tool first. Prompt language alone leaks 8%; this hook is 100%.

```python
# .claude/hooks/clarification_gate.py
import json
import sys

def main():
    payload = json.loads(sys.stdin.read())
    tool_name = payload["tool_name"]
    case_facts = payload.get("case_facts", {})
    session = payload.get("session_state", {})

    # Account-modifying tools require verified identity
    if tool_name in ("process_refund", "update_account", "escalate_to_human"):
        if not case_facts.get("verified_id"):
            print("verified_id missing. Call get_customer first", file=sys.stderr)
            sys.exit(2)

    # Honor explicit escalation request. No further tool calls
    if session.get("escalation_requested") and tool_name != "escalate_to_human":
        print("user requested human; route to escalation queue, no other tools", file=sys.stderr)
        sys.exit(2)

    sys.exit(0)

if __name__ == "__main__":
    main()
```

Run the loop on stop_reason, not text

The single most-tested distractor in this scenario is parsing response text for 'done'. Claude can return text + tool_use in the same message; the structured stop_reason field is the only authoritative termination signal. Branch on it. Always.
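The loop in this step calls helpers such as `extract_text` and `execute_tool` without defining them. The sketch below shows one plausible shape for the text extraction and for the `tool_result` block that must answer each `tool_use` block. `to_tool_result` is a hypothetical name, the order ID is made up, and `SimpleNamespace` objects stand in for a real SDK response so the example runs without an API key.

```python
from types import SimpleNamespace


def extract_text(resp) -> str:
    """Join the text blocks of a response, ignoring tool_use blocks."""
    return "".join(b.text for b in resp.content if b.type == "text")


def to_tool_result(tool_use, output: str, is_error: bool = False) -> dict:
    """Build the tool_result block that answers one tool_use block."""
    return {
        "type": "tool_result",
        "tool_use_id": tool_use.id,  # must match the id of the tool_use block
        "content": output,
        "is_error": is_error,
    }


# Stand-in for an API response that carries text and a tool call together.
resp = SimpleNamespace(
    stop_reason="tool_use",
    content=[
        SimpleNamespace(type="text", text="Let me look that up."),
        SimpleNamespace(type="tool_use", id="toolu_01", name="lookup_order",
                        input={"order_id": "A-1"}),  # hypothetical order ID
    ],
)

tool_uses = [b for b in resp.content if b.type == "tool_use"]
results = [to_tool_result(t, "order_status=delivered") for t in tool_uses]
print(resp.stop_reason, extract_text(resp), results[0]["tool_use_id"])
```

The response contains friendly text and a tool call at once, which is why the text alone cannot tell the loop whether the turn is finished.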

```python
def run_conversation_turn(user_msg: str, case_facts: dict, state: dict, max_iter: int = 12):
    messages = load_history(case_facts['customer_id']) + [{"role": "user", "content": user_msg}]
    system = build_system_prompt(case_facts) + "\n\n" + build_session_block(state)

    for _ in range(max_iter):
        resp = client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=2048,
            system=system,
            tools=tools,
            messages=messages,
        )

        # Finished: persist and return the final text
        if resp.stop_reason == "end_turn":
            persist_history(case_facts['customer_id'], messages, resp)
            return extract_text(resp)

        # Tool call: execute, append the results, go around again
        if resp.stop_reason == "tool_use":
            tool_uses = [b for b in resp.content if b.type == "tool_use"]
            results = [execute_tool(t, case_facts, state) for t in tool_uses]
            messages.append({"role": "assistant", "content": resp.content})
            messages.append({"role": "user", "content": results})
            update_state(state, tool_uses, results)
            continue

        # Truncated: save partial state and escalate, never silently truncate
        if resp.stop_reason == "max_tokens":
            persist_partial(case_facts, state, resp)
            return {"status": "partial_escalate", "text": extract_text(resp)}

    # Safety net only; stop_reason is the primary control
    return {"status": "iteration_cap"}
```

Compress conversation history at turn 15

When the message list exceeds 15 entries, replace turns 2 through N-1 with a single summary that preserves decisions, not transcripts. Case-facts stays at the prompt top, untouched. The summary lives in the message list. This frees ~40% of tokens with zero decision loss.

```python
def compress_history(messages: list, threshold: int = 15) -> list:
    if len(messages) <= threshold:
        return messages

    # Preserve the original user message + the most recent message.
    first = messages[0]
    last = messages[-1]
    middle = messages[1:-1]

    # Summarize: extract decision points only (not full transcript)
    decisions = extract_decisions(middle)  # e.g. ["user_asked_for_refund", "agent_verified_customer", "policy_allowed_$50"]
    summary = "CONVERSATION SUMMARY (turns 2-" + str(len(messages) - 1) + "):\n" + "\n".join(f"- {d}" for d in decisions)

    return [first, {"role": "user", "content": summary}, last]

# Usage in the turn loop
messages = compress_history(messages, threshold=15)
```

Honor explicit human-handoff requests immediately

When the customer says 'speak to a human', the agent does not negotiate. The hook flips session_state.escalation_requested → true. The next tool dispatch is escalate_to_human; everything else is blocked. Sentiment is orthogonal. Angry customers with valid requests still get the answer first.

```python
# Detection is a phrase match on the user message; latching lives in state
EXPLICIT_HUMAN_PHRASES = [
    "speak to a human",
    "talk to a person",
    "i want a human",
    "transfer me",
    "give me a human",
]

def detect_explicit_handoff(user_msg: str) -> bool:
    msg = user_msg.lower()
    return any(phrase in msg for phrase in EXPLICIT_HUMAN_PHRASES)

def handle_user_turn(user_msg: str, case_facts: dict, state: dict):
    if detect_explicit_handoff(user_msg):
        state['escalation_requested'] = True
        # The next agent loop will see this in session_state and the hook
        # will block any tool except escalate_to_human.
    return run_conversation_turn(user_msg, case_facts, state)

# Sentiment is intentionally NOT consulted here.
```

Cache the system prompt + tools

System prompt + tool definitions are stable across turns; only case-facts and session-state change. Mark the stable parts with cache_control: ephemeral and pay ~90% less for those bytes on every turn after the first. With 5-min TTL on continuous traffic, hit rate stays above 70%.

```python
# Split system into stable (cached) + dynamic (fresh) blocks
resp = client.messages.create(
    model="claude-sonnet-4-5",
    max_tokens=2048,
    system=[
        {
            "type": "text",
            "text": STABLE_SYSTEM_PREAMBLE,  # role + constraints, never changes
            "cache_control": {"type": "ephemeral"},
        },
        {
            "type": "text",
            "text": build_case_facts_block(case_facts) + build_session_block(state),  # changes per turn
        },
    ],
    tools=tools,  # tools come before system in the cached prefix, so a stable array is cached too
    messages=messages,
)

# Inspect resp.usage.cache_creation_input_tokens / cache_read_input_tokens to verify hit rate.
```

Audit-log the conversation arc

Every closed conversation writes a structured row: customer_id, turn_count, tool_calls_in_order, escalation_reason (if any), elapsed_ms_total, csat. Skip the full transcript. The structured trace is enough to replay any failure and is 50× smaller. Store for 90 days minimum.

```python
from datetime import datetime, timezone

def audit_conversation(case_facts: dict, state: dict, agent_path: list, elapsed_ms: int, csat: int | None):
    db.audit.insert({
        "ts": datetime.now(timezone.utc),  # timezone-aware; utcnow() is deprecated since Python 3.12
        "customer_id": case_facts["customer_id"],
        "turn_count": len(agent_path),
        "tool_calls": [c["name"] for c in agent_path if c.get("type") == "tool_use"],
        "stop_reasons": [c.get("stop_reason") for c in agent_path],
        "escalation_reason": state.get("escalation_reason"),
        "compression_fired_at_turn": state.get("compression_at_turn"),
        "elapsed_ms": elapsed_ms,
        "csat": csat,
    })
```

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Multi-turn customer state | case-facts block at top of prompt + session-state block | progressive summarization of customer_id + amount | Transactional values must be pinned, never paraphrased. Summarization erodes precision; case-facts is structural. |
| Customer says 'speak to a human' | set escalation_requested → block all tools except escalate_to_human | negotiate ('let me try once more') or suggest alternatives | Explicit user requests are non-negotiable. Cost of overriding stated preference (churn, complaint escalation) exceeds any benefit of one-more-attempt. |
| Long conversation context fills | compress turns 2 through N-1 into 3 lines at turn 15; case-facts stays untouched | keep all messages OR summarize the case-facts block | Conversation history has diminishing returns; case-facts are structural. Compress history, never facts. |
| Angry customer with valid request | process the request normally; sentiment does not trigger escalation | escalate on negative sentiment | Sentiment is orthogonal to escalation need. Angry-but-valid customers should get the answer; only policy gaps + tool limits + explicit requests warrant escalation. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

By turn 9, agent has summarized cust_4711's order ID to 'a recent order'. Treats turn 10 as a new conversation.

AP-35: Pin CASE_FACTS at top of system prompt. Re-read every turn. Never paraphrased. Compression only touches the message list, never the case-facts block.

System prompt says 'do not re-ask answered questions'. Agent re-asks 'which order?' on turn 4 and turn 8. 8% leakage.

AP-02: Track answered clarifications in session_state. PreToolUse hook checks state and blocks downstream tools if a prerequisite clarification is unanswered. Deterministic, not probabilistic.

50 turns fill the context window. Lost-in-the-middle effect drops the order ID. Agent makes contradictory recommendations.

AP-03: At turn 15, summarize turns 2 through N-1 into 3 lines preserving decisions only. Case-facts stays at prompt top. Frees ~40% tokens with zero decision loss.

User said 'I do not want to be contacted by phone' on turn 3. Agent suggests phone callback on turn 9.

AP-04: Persist session_state with contact_preference + decision flags. Read into prompt every turn alongside case-facts.

Angry customer with a valid refund request is escalated because tone is negative. 50% false-positive rate, customers learn that anger = faster service.

AP-22: Escalation triggers only on (a) policy gap, (b) tool limit, (c) explicit user request. Sentiment is logged for reporting but never gates escalation.
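The three-trigger rule can be sketched as a pure function that never reads sentiment. `TurnSignals` and `escalation_reason` are hypothetical names for illustration, and the priority order among triggers is an assumption; how each signal is detected is out of scope here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TurnSignals:
    """Hypothetical per-turn signals; the names are illustrative."""
    policy_gap: bool = False
    tool_limit: bool = False
    explicit_request: bool = False
    sentiment: str = "neutral"  # logged for reporting only


def escalation_reason(signals: TurnSignals) -> Optional[str]:
    """Return the trigger that fired, or None. Sentiment is never read."""
    if signals.explicit_request:
        return "explicit_request"
    if signals.policy_gap:
        return "policy_gap"
    if signals.tool_limit:
        return "tool_limit"
    return None


angry_but_valid = TurnSignals(sentiment="angry")
asked_for_human = TurnSignals(explicit_request=True, sentiment="calm")
print(escalation_reason(angry_but_valid), escalation_reason(asked_for_human))
```

Returning the reason rather than a bare boolean gives the audit log an `escalation_reason` value without extra bookkeeping.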

Cost & latency

12 avg turns × (cached system + tools + dynamic case-facts/session-state + accumulating history). Cache hits ~70% on stable preamble + tools.

Pre-cache: ~$0.05. With ephemeral cache on stable system + tools: ~$0.022. ~55% reduction on long conversations.

Streaming first token in ~150ms. Tool round-trips 1.5-2s each. Average 1.5 tool calls per turn + hook (<100ms) + compose.
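As a rough check, the figures above can be combined into a per-turn budget. This is back-of-the-envelope arithmetic under stated assumptions: the hook runs once per tool call at its 100 ms upper bound, response composition time is excluded because no figure is given for it, and the inputs are averages rather than p95 values. `turn_latency_budget` is a hypothetical helper.

```python
def turn_latency_budget(tool_calls: float, tool_seconds: float,
                        first_token_s: float = 0.15, hook_s: float = 0.1) -> float:
    """Rough per-turn latency in seconds: first token + tool round-trips + one hook run per tool call."""
    return first_token_s + tool_calls * (tool_seconds + hook_s)


low = turn_latency_budget(tool_calls=1.5, tool_seconds=1.5)
high = turn_latency_budget(tool_calls=1.5, tool_seconds=2.0)
print(f"{low:.2f}s to {high:.2f}s")
```

Under these assumptions an average turn lands at roughly 2.55 s to 3.3 s before composition, which leaves some headroom below a 5 s target but says nothing about the tail.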

12 verbose message blocks (≈8K tokens) → 3-line summary (≈250 tokens). Frees the long-tail conversations from lost-in-the-middle and OOM-on-history.

5-min ephemeral TTL on stable preamble + tool definitions. Continuous chat traffic keeps cache warm. Per-turn case-facts/session-state stays fresh, as it should.
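One possible way to measure cache effectiveness per response is the share of prompt tokens served from cache, computed from the usage counters the API returns. The sketch uses a plain dict with hypothetical token counts so it runs offline; with the SDK the same fields are attributes on `resp.usage`. `cache_hit_rate` and `cost_reduction` are illustrative helpers, and a token-weighted hit rate is only one of several reasonable definitions.

```python
def cache_hit_rate(usage: dict) -> float:
    """Share of prompt tokens served from cache for one response."""
    read = usage.get("cache_read_input_tokens", 0)
    written = usage.get("cache_creation_input_tokens", 0)
    uncached = usage.get("input_tokens", 0)
    total = read + written + uncached
    return read / total if total else 0.0


def cost_reduction(before: float, after: float) -> float:
    """Fractional saving, e.g. 0.5 means the cost was halved."""
    return (before - after) / before


# Hypothetical usage counters for a warm-cache turn.
usage = {"input_tokens": 600, "cache_creation_input_tokens": 0,
         "cache_read_input_tokens": 1400}
print(round(cache_hit_rate(usage), 2), round(cost_reduction(0.05, 0.022), 2))
```

Applied to the per-conversation figures above, `cost_reduction(0.05, 0.022)` comes out at 0.56, consistent with the quoted ~55% reduction.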

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Case-facts block pinned at top of every system-prompt iteration

- Session-state block serialized into prompt after case-facts

- PreToolUse clarification gate hook (deterministic prerequisite block)

- Loop branches on stop_reason, never on response text

- History summarizer fires at turn 15; case-facts left untouched

- Explicit human-handoff phrase detection latches escalation_requested = true

- Sentiment is logged but never gates escalation

- Stable system preamble cached with cache_control: ephemeral

- Tool definitions cached (unchanged across turns)

- Audit log per closed conversation (structured, not transcript)

- Iteration cap (max_iter=12) as a safety net, not the primary control

Run-time

- Unit tests for case-facts block construction (immutable, ordered, exact-string)

- Integration test: 20-turn conversation with case-facts assertion at every turn

- Hook test: missing verified_id → exit 2; escalation_requested + non-escalate tool → exit 2

- Compression test: at turn 15, message list shrinks; case-facts unchanged; decisions preserved

- Explicit-handoff test: 'I want a human' → escalation_requested latches true → hook blocks other tools

- Latency monitor: alert if p95 turn-latency > 6s for ≥ 5 min

- Cost monitor: alert if per-conversation cost > $0.04 (signals cache hit rate dropped)

- False-escalation monitor: alert if sentiment-only escalations exceed 1% of total
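The hook test in the list above is easier to write if the gate rules live in a pure function that the hook script wraps. The sketch below assumes such a refactor: `gate` is a hypothetical function that returns the exit code and stderr message instead of calling `sys.exit`, and it mirrors the rules of the clarification gate hook.

```python
GATED_TOOLS = ("process_refund", "update_account", "escalate_to_human")


def gate(tool_name: str, case_facts: dict, session: dict) -> tuple:
    """Return (exit_code, stderr_message) using the same rules as the hook."""
    if tool_name in GATED_TOOLS and not case_facts.get("verified_id"):
        return 2, "verified_id missing. Call get_customer first"
    if session.get("escalation_requested") and tool_name != "escalate_to_human":
        return 2, "user requested human; route to escalation queue, no other tools"
    return 0, ""


def test_missing_verified_id_blocks_refund():
    code, msg = gate("process_refund", {}, {})
    assert code == 2 and "get_customer" in msg


def test_escalation_latch_blocks_other_tools():
    facts = {"verified_id": "cust_4711"}
    session = {"escalation_requested": True}
    assert gate("lookup_order", facts, session)[0] == 2
    assert gate("escalate_to_human", facts, session)[0] == 0


def test_verified_customer_passes():
    assert gate("process_refund", {"verified_id": "cust_4711"}, {}) == (0, "")


for test in (test_missing_verified_id_blocks_refund,
             test_escalation_latch_blocks_other_tools,
             test_verified_customer_passes):
    test()
print("gate tests passed")
```

The functions follow the `test_` naming convention, so a runner such as pytest would also collect them if the loop at the bottom were removed.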

Five exam-pattern questions

By turn 8 of a long conversation, your agent has lost the customer's order ID and refund amount. The agent treats turn 9 as if it were turn 1. What is the architectural fix?

customer_id, order_id, refund_amount, policy_cap, decision_made, contact_preference, escalation_requested. It is immutable and re-read every turn. Transactional values (IDs, amounts) must never be summarized; only conversation reasoning chains can be paraphrased. When the message list grows past 15, compress turns 2 through N-1 into a 3-line summary. The case-facts block stays untouched at the prompt top. Tagged to AP-35.

Your agent asks 'which order?' on turn 4 and again on turn 8, even though the customer specified the order ID on turn 2. The system prompt says 'do not re-ask answered questions'. What's leaking?

clarifications_answered: ['order_id', 'refund_or_credit']). A PreToolUse hook reads session-state before any downstream tool dispatch and exits 2 if a prerequisite clarification is unanswered. The hook is deterministic; the prompt is probabilistic. For business-critical guarantees, structural beats linguistic. Tagged to AP-02.

A 15-turn conversation has filled 60% of the context window. The agent begins making contradictory recommendations (suggests escalation on turn 12, then re-engages with solving on turn 14). What approach minimizes token waste while preserving conversation quality?

'user requested refund · agent verified customer · policy allows full refund · customer chose refund over credit'. Discard the verbose back-and-forth. Case-facts is untouched. It was never going to be summarized. This frees ~40% of tokens with zero decision loss.

On turn 5, the customer says 'I want to speak to a human'. Your agent says 'Let me see if I can solve this for you first' and continues the conversation. Why is this wrong, and how do you fix it architecturally?

state.escalation_requested = true, and configure the PreToolUse hook to block all tools except escalate_to_human when that flag is set. The agent's only legal next move is the structured handoff to the human queue. No negotiation, no alternatives.

An angry customer is requesting a refund that the policy clearly allows. Your agent escalates because tone analysis flagged the message as 'distressed'. What's the failure mode, and what should the escalation criteria actually be?

AP-22.

Frequently asked

Why pin case-facts in the system prompt instead of passing them as a tool result?

What's the right threshold for triggering history compression?

If case-facts is immutable, how do I update it when the customer changes their decision?

Why a PreToolUse hook for clarification, instead of putting the rule in the prompt?

Should I cache the case-facts block?

cache_control: ephemeral) and a dynamic block (case-facts + session-state, fresh every turn). You get ~70% hit rate on the cached portion and zero staleness on the dynamic portion.

What if the customer switches topics mid-conversation (refund → tech support)?

How do I test that conversation continuity is actually working?

What goes in the audit log for a long conversation?

customer_id, turn_count, ordered list of tool_calls (just names + timestamps), per-turn stop_reasons, compression_fired_at_turn (if any), escalation_reason (if any), elapsed_ms_total, csat (if surveyed). Skip the full transcript. The structured trace is 50× smaller and replays any failure path. Retain 90 days minimum for production debugging and exam-style retrospectives.