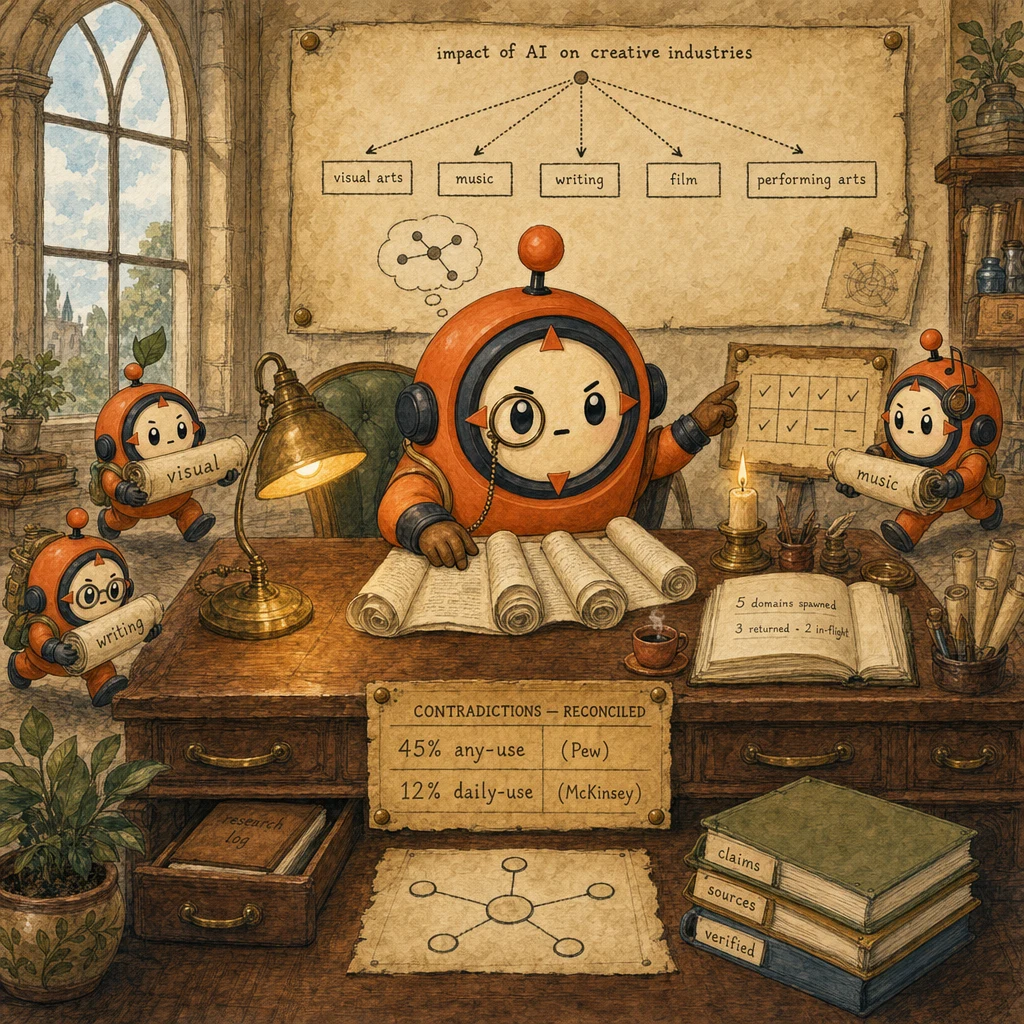

The problem

What the customer needs

- Complete coverage of the research topic. Every relevant sub-domain enumerated, none silently dropped.

- Reconciled contradictions preserved with attribution, not flattened into one 'most likely' number.

- Cited final report that traces every claim to a verifiable source and acknowledges data gaps.

Why naive approaches fail

- Coordinator decomposes 'creative industries' into only visual arts. Misses music, writing, film, performing arts.

- Web-search subagent times out and returns empty results as success. Coordinator treats as 'no info' instead of 'needs retry'.

- Synthesis picks the 'more likely' statistic between 45% (Pew) and 12% (McKinsey). Drops the conflict, ships misinformation.

- Topic-coverage gap rate = 0 (decomposition reviewed before spawn)

- Timeout-as-empty-results rate = 0 (structured error context required)

- Contradiction-preservation rate = 100% (both stats + sources retained)

- Subagent-to-subagent direct call rate = 0 (all routes through coordinator)

The system

What each part does

5 components, each owns a concept.

Coordinator Agent

the hub of hub-and-spoke

Receives the user query, performs SEMANTIC decomposition (not lexical) into all relevant sub-domains, spawns research subagents in parallel with explicit task prompts, awaits all results, routes findings to verification, hands verified claims to synthesis. Owns every cross-subagent communication path.

Configuration

Decomposition is the load-bearing step. For 'impact of AI on creative industries' the coordinator must enumerate visual + music + writing + film + performing arts, not stop at the first sub-domain. Spawn pattern: asyncio.gather (Python) / Promise.all (TS).
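The coverage requirement above can be checked before any subagent is spawned. The following is a minimal sketch, not the coordinator's actual review step: `REQUIRED_KEYWORDS` and `coverage_gaps` are hypothetical names, and a plain keyword match is only a crude stand-in for a real review of the decomposition.

```python
# Hypothetical pre-spawn gate. The keyword list is an illustrative assumption.
REQUIRED_KEYWORDS = {
    "creative industries": ["visual", "music", "writing", "film", "performing"],
}

def coverage_gaps(topic: str, tasks: list[str]) -> list[str]:
    """Return the expected sub-domain keywords that no task prompt mentions."""
    expected = REQUIRED_KEYWORDS.get(topic, [])
    joined = " ".join(tasks).lower()
    return [kw for kw in expected if kw not in joined]

narrow = ["Find AI impact on visual arts"]
full = narrow + [
    "Find AI impact on music production",
    "Find AI impact on writing",
    "Find AI impact on film and video",
    "Find AI impact on performing arts",
]

print(coverage_gaps("creative industries", narrow))  # ['music', 'writing', 'film', 'performing']
print(coverage_gaps("creative industries", full))    # []
```

A non-empty result means the decomposition should be fixed before spawning; tuning subagents will not recover sub-domains that were never enumerated.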

Research Subagent (parallel)

scoped tools, isolated context

One subagent per sub-domain. Receives an explicit task prompt. No inherited history, no parent context. Runs research with a narrow tool whitelist (Read, WebSearch, Bash). Returns structured findings JSON: {claim, sources: [{url, date, confidence}]}. Never editorialises; reports facts as stated.

Configuration

system: "You are a research specialist. Find authoritative sources. Return JSON {findings: [{claim, sources: [...]}]}." tools: [Read, WebSearch, Bash]. messages: [{role: "user", content: task_from_coordinator}].
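As an illustration of "no inherited history", the sketch below builds the request for one research subagent from an explicit task prompt only. `build_research_request` and `allowed_tools` are hypothetical names, and the tool entries are placeholder names rather than real tool definitions; the function only assembles a dictionary and makes no API call.

```python
RESEARCH_SYSTEM = (
    "You are a research specialist. Find authoritative sources. "
    "Return JSON {findings: [{claim, sources: [...]}]}."
)

def build_research_request(query: str, sub_domain: str, pinned_facts: list[str]) -> dict:
    """Assemble the inputs for one isolated subagent call."""
    context = "; ".join(pinned_facts) if pinned_facts else "none"
    task = (
        f"User asked: {query}. "
        f"Key context so far: {context}. "
        f"Your task: research {sub_domain} and return findings as JSON."
    )
    return {
        "system": RESEARCH_SYSTEM,
        "allowed_tools": ["Read", "WebSearch", "Bash"],  # placeholder names only
        # Exactly one user message: the subagent sees nothing from the
        # coordinator's conversation beyond what the task prompt carries.
        "messages": [{"role": "user", "content": task}],
    }

request = build_research_request(
    "impact of AI on creative industries",
    "music production and composition",
    ["focus on published statistics"],
)
print(request["messages"][0]["content"])
```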

Verification Subagent

fact-check + reconcile contradictions

Cross-checks all claims from research subagents. When two sources conflict (45% Pew vs 12% McKinsey), preserves both with their context and attribution rather than picking the 'more likely' one. Returns verified claims with confidence scores; the verification step is what protects the report from misinformation.

Configuration

Input: pooled claims from all research subagents. Output: {verifications: [{claim, verified, confidence, sources_reconciled, notes}]}. Notes field captures the context that explains apparent contradictions (different timeframes, definitions, populations).
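To make the output shape concrete, here is a hedged sketch of one verification record that keeps both conflicting statistics. The dataclass names and the `has_preserved_conflict` helper are assumptions for illustration, and the confidence value is arbitrary; only the field names and the Pew / McKinsey figures come from the text.

```python
from dataclasses import dataclass, field, asdict

@dataclass
class ReconciledSource:
    stat: str
    source: str
    context: str  # what the number actually measures

@dataclass
class Verification:
    claim: str
    verified: bool
    confidence: float
    sources_reconciled: list[ReconciledSource] = field(default_factory=list)
    notes: str = ""

def has_preserved_conflict(v: Verification) -> bool:
    """True when two or more distinct sources are kept and the notes explain the difference."""
    distinct = {s.source for s in v.sources_reconciled}
    return len(distinct) >= 2 and bool(v.notes.strip())

record = Verification(
    claim="Share of creative workers using AI",
    verified=True,
    confidence=0.8,  # illustrative value, not a measured score
    sources_reconciled=[
        ReconciledSource("45%", "Pew", "any use"),
        ReconciledSource("12%", "McKinsey", "daily use"),
    ],
    notes="The figures measure different things: any use vs. daily use.",
)

print(has_preserved_conflict(record))  # True
print(asdict(record)["sources_reconciled"][1]["stat"])  # 12%
```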

Synthesis Subagent

read-only narrative generator

Receives verified claims + the coordinator's narrative prompt. Writes a cohesive Markdown report with inline citations [1], [2]. CRITICAL: tools restricted to Read only. No WebSearch, no Bash. This prevents re-research and keeps synthesis focused on stitching the verified facts into a story.

Configuration

system: "You are a synthesis specialist. Read verified findings and write a cited narrative. Do NOT research." tools: [Read]. Input: {verified_claims, narrative_prompt}. Output: Markdown with [n] citations.
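The read-only restriction is easy to regress, so it can be guarded by a small configuration check. This is a sketch under assumptions: `TOOL_WHITELISTS` and `lint_synthesis_tools` are hypothetical names, and the whitelists are written as plain tool names rather than real tool definitions.

```python
TOOL_WHITELISTS = {
    "research": ["Read", "WebSearch", "Bash"],
    "verification": ["Read", "WebSearch", "Bash"],
    "synthesis": ["Read"],
}

def lint_synthesis_tools(whitelists: dict[str, list[str]]) -> list[str]:
    """Return tools that synthesis must not have; an empty list means the config passes."""
    return sorted(set(whitelists.get("synthesis", [])) - {"Read"})

print(lint_synthesis_tools(TOOL_WHITELISTS))  # []

regressed = {**TOOL_WHITELISTS, "synthesis": ["Read", "WebSearch", "Bash"]}
print(lint_synthesis_tools(regressed))  # ['Bash', 'WebSearch']
```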

Error Propagation Layer

structured timeout context

When a subagent times out or hits a dead end, it returns structured error context the coordinator can act on: {status: 'timeout', query, partial_results, alternatives}. The coordinator inspects the status field and either retries with a narrower scope, accepts partial data, or transparently marks the gap in the final report.

Configuration

On timeout: {status: 'timeout', query, partial_results, alternatives: ['narrower query', 'different keywords', ...]}. On no_results: {status: 'no_results', query, alternatives}. Never return [] as success. Silence loses the failure context.
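One way to enforce "never return [] as success" is to validate every subagent return value at the coordinator boundary. The sketch below uses only the standard library; `REQUIRED_FIELDS` and `validate_subagent_result` are hypothetical names, and treating a dict with a `findings` key as a successful result is an assumption about the success shape.

```python
REQUIRED_FIELDS = {
    "timeout": {"status", "query", "partial_results", "alternatives"},
    "no_results": {"status", "query", "alternatives"},
}

def validate_subagent_result(result: object) -> list[str]:
    """Return contract violations; an empty list means the result is acceptable."""
    if not isinstance(result, dict):
        # A bare [] lands here: it carries no failure context at all.
        return [f"expected a dict, got {type(result).__name__}"]
    status = result.get("status")
    if status is None:
        # Assumed success shape: findings present, no error status.
        return [] if "findings" in result else ["neither 'status' nor 'findings' present"]
    missing = REQUIRED_FIELDS.get(status, set()) - set(result)
    return [f"missing field: {name}" for name in sorted(missing)]

print(validate_subagent_result([]))
print(validate_subagent_result({"status": "timeout", "query": "q"}))
```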

Data flow

Eight steps to production

Build the coordinator's semantic decomposition

The decomposition step is where most coverage failures actually originate. Analyse the topic semantically and enumerate ALL relevant sub-domains before spawning anything. For 'creative industries', that means visual arts AND music AND writing AND film AND performing arts. Not the first one that comes to mind. The decomposition is the coordinator's load-bearing responsibility.

    from typing import List

    def decompose_query(query: str) -> List[str]:
        """Semantic decomposition. Enumerate all relevant sub-domains.

        The exam-question distractor is to blame subagents for narrow
        coverage when the coordinator's decomposition was the bug.
        """
        # The keyword lookup below stands in for a model-driven semantic
        # analysis step; what matters is that every sub-domain is enumerated.
        q = query.lower()
        if "creative industries" in q:
            domains = [
                "visual arts (digital art, graphic design, photography)",
                "music production and composition",
                "writing (novels, journalism, screenwriting)",
                "film and video production",
                "performing arts (theater, dance)",
            ]
        elif "healthcare" in q:
            domains = [
                "clinical decision support",
                "medical imaging and diagnostics",
                "drug discovery and trials",
                "patient-facing communication",
                "administrative + revenue cycle",
            ]
        else:
            # Generic fallback. STILL decompose, never single-shot
            domains = [
                f"{query}. Recent academic literature",
                f"{query}. Industry case studies",
                f"{query}. Empirical adoption data",
            ]
        return [f"Find AI impact on {d}" for d in domains]

Define subagent system prompts and tool whitelists

Every subagent gets its own system prompt + scoped tool list. Research subagents get [Read, WebSearch, Bash]; verification gets [Read, WebSearch, Bash] + a fact-check rubric; synthesis gets [Read] only. That read-only restriction is the architectural detail that prevents synthesis from re-researching mid-narrative.

    RESEARCH_SUBAGENT_SYSTEM = """You are a research specialist.
    1. Find authoritative sources on the assigned sub-domain.
    2. Extract key claims with evidence.
    3. Return structured JSON ONLY:
    {"findings": [
      {"claim": "...", "sources": [{"url": "...", "date": "YYYY-MM-DD", "confidence": 0.0-1.0}]}
    ]}
    Do NOT synthesize, editorialize, or pick winners between conflicting sources.
    Report facts as stated. Preserve contradictions for verification."""

    VERIFICATION_SUBAGENT_SYSTEM = """You are a fact-checker.
    1. Read pooled claims from research subagents.
    2. Cross-check each claim against its sources.
    3. When sources conflict, PRESERVE BOTH with attribution + context.
    4. Return JSON:
    {"verifications": [
      {"claim": "...", "verified": true|false, "confidence": 0.0-1.0,
       "sources_reconciled": [...], "notes": "context about conflicts"}
    ]}"""

    SYNTHESIS_SUBAGENT_SYSTEM = """You are a synthesis specialist.
    1. Read verified findings (JSON).
    2. Write a cohesive Markdown narrative with inline [n] citations.
    3. Acknowledge data gaps transparently when verifications flag them.
    4. Do NOT conduct new research. You have ONLY the Read tool."""

Spawn research subagents in parallel

All research subagents fire at once via async fan-out. Latency is max(subagents), not sum. The whole point of the architecture. Each subagent receives an explicit task prompt with the context it needs; nothing is inherited from the coordinator's history. Cost: N separate API calls. Worth it.

    import asyncio
    from anthropic import AsyncAnthropic

    client = AsyncAnthropic()

    async def spawn_research(task: str) -> dict:
        """One isolated research subagent. No inherited history."""
        resp = await client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=2048,
            system=RESEARCH_SUBAGENT_SYSTEM,
            tools=RESEARCH_TOOLS,  # [Read, WebSearch, Bash], defined elsewhere
            messages=[{"role": "user", "content": task}],
        )
        return {
            "task": task,
            "stop_reason": resp.stop_reason,
            "result": extract_json(resp),  # helper defined elsewhere
        }

    async def research_in_parallel(tasks: list[str]) -> list[dict]:
        """Fan out N subagents at once. Latency = max, not sum."""
        return await asyncio.gather(*(spawn_research(t) for t in tasks))

    # Coordinator usage
    tasks = decompose_query("impact of AI on creative industries")
    results = asyncio.run(research_in_parallel(tasks))

Return structured error context, never silence

When a subagent times out or finds no results, the WORST thing it can do is return []. The coordinator then can't tell whether 'no info exists' or 'we never got the data', a critical distinction for the final report. Always return a structured error: status + query + partial_results + alternatives. The coordinator inspects the status field and decides: retry, narrow, or transparently mark the gap.

    def handle_subagent_error(error_type: str, query: str, partial: list | None = None) -> dict:
        """Structured error context. Coordinator consumes status to decide."""
        if error_type == "timeout":
            return {
                "status": "timeout",
                "query": query,
                "partial_results": partial or [],
                "alternatives": [
                    "Narrow the query to a single sub-aspect",
                    "Try synonyms or domain-specific terms",
                    "Reduce the time horizon (e.g., last 12 months)",
                ],
            }
        if error_type == "no_results":
            return {
                "status": "no_results",
                "query": query,
                "alternatives": ["Broaden keywords", "Check spelling", "Try sister terms"],
            }
        if error_type == "rate_limited":
            return {"status": "rate_limited", "query": query, "retry_after_s": 60}
        return {"status": "unknown_error", "query": query}

    # Coordinator inspects status
    def coordinator_handle(result: dict, original_task: str):
        status = result.get("status")
        if status == "timeout":
            # Either retry with the first alternative or include a transparent gap
            return retry_with_narrower_query(result["alternatives"][0])
        if status == "no_results":
            return mark_data_unavailable(original_task)
        return result  # success

Run the verification subagent and preserve contradictions

Pool all claims from research subagents and pass them to a single verification subagent. When two sources conflict (45% Pew vs 12% McKinsey), the verification subagent's job is NOT to pick a winner. It is to preserve both with their context (different definitions, different timeframes, different populations) and attribute each to its source. Picking one is misinformation; preserving both is journalism.

    def build_verification_task(pooled_claims: list[dict]) -> str:
        """Pool all research-subagent claims for fact-checking."""
        body = "\n".join(
            f"- Claim: {c['claim']}\n  Sources: {c['sources']}"
            for c in pooled_claims
        )
        return f"""Verify the following claims pooled from research subagents.
    For each claim:
    1. Check that sources are credible and dated.
    2. If two or more sources CONFLICT, do NOT pick a winner.
       Preserve both with their context (timeframe, definition, population)
       and emit both in sources_reconciled with notes explaining the apparent
       contradiction. The reader gets BOTH numbers + WHY they differ.
    3. Return JSON:
    {{"verifications": [
      {{"claim": "...", "verified": true|false, "confidence": 0.0-1.0,
        "sources_reconciled": [...], "notes": "..." }}
    ]}}

    CLAIMS TO VERIFY:
    {body}"""

    # Coordinator pools claims and dispatches
    async def run_verification(research_results: list[dict]):
        # spawn_research nests each subagent's JSON under the "result" key
        all_claims = [
            c
            for r in research_results
            for c in (r.get("result") or {}).get("findings", [])
        ]
        verification_task = build_verification_task(all_claims)
        # client is an AsyncAnthropic instance, so the call must be awaited
        return await client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=4096,
            system=VERIFICATION_SUBAGENT_SYSTEM,
            tools=VERIFICATION_TOOLS,
            messages=[{"role": "user", "content": verification_task}],
        )

Run the synthesis subagent with READ-ONLY tools

Synthesis is the final step. It receives verified claims + the coordinator's narrative prompt, and emits Markdown with inline citations. The crucial detail: the synthesis subagent's tool list is [Read] only. No WebSearch, no Bash. That restriction prevents it from re-researching mid-narrative (a common failure mode where synthesis fact-checks itself again and inflates latency 3-5×).

    import json

    async def synthesize(verified_claims: list[dict], narrative_prompt: str) -> str:
        """Read-only narrative generation. No re-research."""
        task = f"""Narrative prompt from coordinator:
    {narrative_prompt}

    Verified findings (JSON):
    {json.dumps(verified_claims, indent=2)}

    Write a Markdown report that:
    1. Flows logically from finding to finding
    2. Uses inline citations [1], [2], etc. matching sources_reconciled order
    3. ACKNOWLEDGES data gaps transparently where verifications.notes flagged them
    4. Does NOT re-research or speculate beyond the verified findings

    You have ONLY the Read tool. No WebSearch, no Bash."""
        # client is an AsyncAnthropic instance, so the call must be awaited
        resp = await client.messages.create(
            model="claude-sonnet-4-5",
            max_tokens=3000,
            system=SYNTHESIS_SUBAGENT_SYSTEM,
            tools=[READ_TOOL_ONLY],  # Critical: read-only
            messages=[{"role": "user", "content": task}],
        )
        return extract_text(resp)  # helper defined elsewhere

Route ALL communication through the coordinator

If subagent B needs a finding from subagent A, the answer is NOT to call A from B. The answer is: A finishes, returns to coordinator, coordinator passes the finding into B's task prompt. This single rule preserves isolation (each subagent has clean context), parallelism (when dependencies allow), and visibility (the coordinator owns the whole orchestration graph).

    # WRONG. Direct subagent-to-subagent communication
    # class ResearcherA:
    #     def __init__(self, researcher_b):
    #         self.b = researcher_b  # ❌ creates hidden coupling
    #     async def find_papers(self, topic):
    #         finding = await self._search(topic)
    #         await self.b.search_web(finding)  # ❌ breaks isolation

    # RIGHT. Coordinator owns all routing
    async def coordinated_dependent_research(topic: str):
        # Phase 1: A runs alone
        a_finding = await spawn_research(f"Find academic papers on {topic}")

        # Phase 2: coordinator passes A's finding into B's TASK PROMPT
        b_task = (
            f"Topic: {topic}. "
            f"Key research direction surfaced by paper search: "
            f"{a_finding['result']['top_direction']}. "
            f"Now search the web for industry coverage of that direction."
        )
        b_result = await spawn_research(b_task)

        # Phase 3: coordinator continues with both
        return {"a": a_finding, "b": b_result}

Cap parallelism and add retry budgets

Parallel fan-out has diminishing returns past 5-7 subagents. API concurrency limits, context-window contention on the coordinator side, and rate-limit backpressure all kick in. Cap concurrency, set a retry budget per subagent (typically 2 retries with narrowed queries), and emit telemetry: spawn count, parallel max, retry rate, partial-data rate. These metrics are how you tune the system in production.

    import asyncio

    MAX_PARALLEL = 5
    RETRY_BUDGET = 2
    semaphore = asyncio.Semaphore(MAX_PARALLEL)

    async def spawn_with_retry(task: str, attempt: int = 0) -> dict:
        """Bounded-concurrency research with structured-error retry."""
        async with semaphore:
            result = await spawn_research(task)
        # Retry after releasing the slot, so a retrying task never holds one
        # semaphore slot while waiting to acquire another.
        payload = result.get("result") or {}
        if payload.get("status") == "timeout" and attempt < RETRY_BUDGET:
            alternatives = payload.get("alternatives") or [task]
            narrower = narrow_query(alternatives[0])  # helper defined elsewhere
            telemetry.increment("subagent.retry", labels={"reason": "timeout"})
            return await spawn_with_retry(narrower, attempt + 1)
        return result

    # Coordinator with bounded fan-out + retries
    async def run_research(tasks: list[str]) -> list[dict]:
        return await asyncio.gather(*(spawn_with_retry(t) for t in tasks))

The four decisions

| Decision | Right answer | Wrong answer | Why |
|---|---|---|---|
| Coverage gap appears in the final report | Audit the coordinator's decomposition first. It almost always lives there | Tune subagent prompts or upgrade their model | Decomposition is the coordinator's job. If 4 of 5 sub-domains were never enumerated, no amount of subagent quality recovers them. Fix decomposition; the rest follows. |
| Subagent timed out. What does it return? | Structured error: {status: 'timeout', query, partial_results, alternatives} | Empty list [], marked as success | Silence loses the failure context. The coordinator can't distinguish 'no info exists' from 'we never got the data', so the final report can't acknowledge the gap honestly. |
| Two sources disagree (45% Pew vs 12% McKinsey) | Verification preserves both with attribution + context (different definitions, different timeframes) | Synthesis picks the 'more likely' one and drops the other | Both numbers are correct under their respective definitions. Dropping one is misinformation. Preserving both with context is the journalistic move and the architectural one. |
| Subagent A's output is needed by Subagent B | A returns to coordinator; coordinator passes A's finding into B's task prompt | A calls B directly with the finding | Direct subagent-to-subagent calls break isolation, kill parallelism (B waits for A), and hide the dependency from the coordinator's view. Hub-and-spoke is the architecture for a reason. |

Where it breaks

Five failure pairs. Each one is one exam question. The fix is always architectural: deterministic gates, structured fields, pinned state.

Coordinator decomposes 'creative industries' into visual arts only; report misses music, writing, film, performing arts. Subagents finished successfully. The bug is upstream.

AP-10: Fix the coordinator's semantic decomposition. Enumerate ALL relevant sub-domains before spawning. The decomposition step is the coordinator's load-bearing responsibility.

Web-search subagent times out, returns []. Coordinator treats as 'no information exists' instead of 'timed out'. Final report has a silent gap.

AP-11: Return structured error: {status: 'timeout', query, partial_results, alternatives}. Coordinator inspects the status field, retries with a narrower scope, or marks the gap transparently in the report.

Synthesis subagent calls verify_fact for 100 claims sequentially. 80 are simple (Wikipedia), 20 complex. Total 60+ seconds for what should be 10.

AP-12: Scope verify_fact narrowly: simple-claim batch verification (parallel, ~3s) + dedicated complex-verification subagent (parallel, pre-synthesis). Synthesis assumes facts are pre-verified.

Two sources conflict (45% any-use Pew vs 12% daily-use McKinsey). Synthesis picks the 'more likely' one; the other is dropped. Report is misinformation.

AP-13: Preserve both at the verification step with sources_reconciled + notes explaining the apparent conflict. Synthesis presents both with attribution. Reader sees both numbers and why they differ.

Researcher A (papers) directly hands a finding to Researcher B (web). Isolation breaks; parallelism degrades to sequential; coordinator loses visibility.

AP-14: Route everything through the coordinator. A returns to coordinator; coordinator constructs B's task prompt with A's finding embedded. Hub-and-spoke is non-negotiable.

Cost & latency

Research subagents: 3 subagents × ~20K input + ~2K output ≈ $0.06; 5 subagents ≈ $0.10. Parallel: latency = max(subagents) ≈ 3-5s, cost = sum.

Verification: reads pooled findings (~15K) + cross-checks (~10K) + emits verifications (~1K) ≈ $0.04. Single pass, serial.

Synthesis: reads verified findings (~5K) + generates Markdown narrative (~3K). Read-only tools keep cost low; no re-research.

Retries: narrowed retries (~10K input + 1K output). Cap at 2 retries per subagent to bound cost; beyond that, accept partial data and mark the gap.

End-to-end latency: decompose ~0.5s + parallel research ~5s + verification ~3s + synthesis ~3s + coordinator overhead. Subagents in parallel save ~10s vs sequential.
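The latency arithmetic can be worked through directly. This sketch assumes three research subagents at about 5s each (an assumption chosen to match the ~10s saving quoted above) and ignores coordinator overhead; `pipeline_latency` is a hypothetical helper.

```python
def pipeline_latency(research_s: list[float], parallel: bool,
                     decompose_s: float = 0.5, verify_s: float = 3.0,
                     synth_s: float = 3.0) -> float:
    """Estimate end-to-end seconds; the research stage is max() if parallel, sum() if not."""
    research = max(research_s) if parallel else sum(research_s)
    return decompose_s + research + verify_s + synth_s

three_subagents = [5.0, 5.0, 5.0]
parallel_total = pipeline_latency(three_subagents, parallel=True)     # 0.5 + 5 + 3 + 3
sequential_total = pipeline_latency(three_subagents, parallel=False)  # 0.5 + 15 + 3 + 3

print(parallel_total)                     # 11.5
print(sequential_total)                   # 21.5
print(sequential_total - parallel_total)  # 10.0
```

Only the research stage changes between the two runs, so the saving grows with the number of subagents while the serial verification and synthesis stages stay fixed.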

Ship checklist

Two passes. Build-time gates verify the code; run-time gates verify the system in production.

Build-time

- Coordinator's decomposition function reviewed for completeness BEFORE first spawn

- Each subagent has its own system prompt + scoped tool whitelist

- Synthesis subagent has Read-only tool list (no WebSearch, no Bash)

- All subagent fan-out via async gather / Promise.all

- Structured error context on every timeout / no-results path

- Verification subagent preserves contradictions with attribution

- No direct subagent-to-subagent calls anywhere in the codebase

- Coordinator inspects the status field on every subagent return

- Bounded concurrency (semaphore) to cap parallel API calls

- Per-subagent retry budget with narrowed-query alternatives

- Telemetry: spawn count, parallel max, retry rate, partial-data rate

Run-time

- Decomposition function unit-tested with 5+ representative topic types

- Each subagent's tool whitelist verified. No Bash + Edit + Write together unless intended

- Structured error contract enforced via TypeScript / Pydantic schema

- Bounded concurrency (semaphore) tested under burst load (20+ queued tasks)

- Retry budget per subagent capped (default 2); telemetry on retry rate

- Verification subagent has fact-check rubric + contradiction-preservation rule documented

- Synthesis subagent's tool list is [Read] only; lint check prevents regression

- End-to-end test: contradictions survive from research → verification → synthesis with attribution
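The end-to-end check in the last item can be approximated with a simple assertion over the final report. This is a sketch, not a full test harness: `missing_attributions` is a hypothetical helper, the report strings are made-up fixtures, and substring matching is a deliberately crude check.

```python
def missing_attributions(verifications: list[dict], report_md: str) -> list[tuple[str, str]]:
    """Return (stat, source) pairs kept by verification but absent from the report text."""
    missing = []
    for v in verifications:
        for s in v.get("sources_reconciled", []):
            if s["stat"] not in report_md or s["source"] not in report_md:
                missing.append((s["stat"], s["source"]))
    return missing

verifications = [{
    "claim": "Share of creative workers using AI",
    "sources_reconciled": [
        {"stat": "45%", "source": "Pew", "context": "any use"},
        {"stat": "12%", "source": "McKinsey", "context": "daily use"},
    ],
}]

good_report = "45% of creative workers report any AI use (Pew) [1], while 12% report daily use (McKinsey) [2]."
flattened_report = "About 45% of creative workers use AI (Pew) [1]."

print(missing_attributions(verifications, good_report))       # []
print(missing_attributions(verifications, flattened_report))  # [('12%', 'McKinsey')]
```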

Five exam-pattern questions

A research system decomposes 'impact of AI on creative industries' into three subtopics: visual arts, music, writing. The web-search subagent finds results for all three. The synthesis subagent produces a report covering only visual arts. Why?

A web-search subagent times out and returns an empty result list. The coordinator treats this as 'no information available' and moves forward. The final report is incomplete. What's the architectural fix?

Return structured error context instead of an empty list: {status: 'timeout', query, partial_results, alternatives: ['narrower query', 'different keywords', ...]}. The coordinator inspects the status field and decides: retry with the first alternative, accept partial data, or transparently mark the gap in the synthesis. Returning [] as success conflates 'no info exists' with 'we never got the data', and the report can't acknowledge a gap it can't see. Tagged to AP-11.

A research report cites two conflicting statistics: '45% of creative workers use AI' (Pew) and '12% use AI daily' (McKinsey). Should synthesis pick the more likely one?

No. Verification preserves both, for example sources_reconciled: [{stat: '45%', source: 'Pew', context: 'any use'}, {stat: '12%', source: 'McKinsey', context: 'daily use'}] plus a notes field explaining the apparent contradiction. Synthesis then presents both with attribution. Picking one is misinformation; preserving both is journalism. Tagged to AP-13.

Subagent A (academic papers) finds a key research direction. Subagent B (web search) needs that finding to guide its queries. Should A pass it directly to B?

No. A returns to the coordinator, which passes the finding into B's task prompt, for example: Topic: ... Key direction from paper search: [A's finding]. Now search the web for that direction. Direct calls break isolation (B inherits A's context noise), kill parallelism (B waits for A even when it could run in parallel), and hide the dependency from the coordinator's orchestration graph. Hub-and-spoke is non-negotiable. Tagged to AP-14.

A synthesis subagent needs to verify ~100 facts in a final report. Calling `verify_fact` sequentially takes 60+ seconds. What's the architectural fix?

First, scope verify_fact narrowly: simple-claim verification (Wikipedia lookups, ~5ms each) batched in parallel completes ~80 claims in ~2s. Second, dedicate a separate verification subagent that runs before synthesis, processing complex multi-source reconciliation in parallel; synthesis then assumes facts are pre-verified and only reads. Total latency drops from 60s+ to ~3-5s. The general lesson: don't let the synthesis subagent re-research mid-narrative. Tagged to AP-12.

Frequently asked

Should subagents run in parallel or in sequence?

In parallel by default, via asyncio.gather / Promise.all. Cost: N separate API calls. Latency: max(N) ≈ 5-8s, not sum. Sequence only when there's a true data dependency (B needs A's output), and even then the coordinator handles the chaining; subagents never call each other.

Can subagents inherit the coordinator's conversation history?

No. The coordinator passes an explicit task prompt, for example: User asked: [query]. Key context so far: [pinned facts]. Your task: [focused research goal]. The subagent starts fresh with only what's in the task. Inheriting history defeats parallelism and bloats per-subagent context cost.

What happens if multiple subagents return partial / timeout results?

The coordinator states the gaps in the synthesis prompt, for example: Research is incomplete due to timeouts on [X, Y]. The report should acknowledge gaps in those areas explicitly. Transparency beats silence. The reader sees a report that says 'we got these 3 sub-domains; the other 2 timed out' rather than a confidently misleading report missing 2 whole sub-domains.

Should the synthesis subagent have web-search access?

How do we handle contradictions surfaced by research subagents?

Verification preserves both sources with attribution, plus a notes field explaining the conflict (different timeframes, definitions, populations, methodologies). Synthesis then presents both with context. The reader gets transparency; the system avoids fabricating false certainty.

Can a subagent spawn another subagent (nested)?

What's the maximum number of subagents to run in parallel?

Start with a conservative cap (MAX_PARALLEL = 5), measure latency at different fan-outs, and tune to your workload. For 10+ tasks, consider Batch API or sequential task chains.
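To see what a cap does in practice, the sketch below runs simulated subagents under a semaphore and records the peak number in flight, which corresponds to the "parallel max" telemetry item. `run_bounded` and `fake_subagent` are hypothetical names, and `asyncio.sleep` stands in for the real API call.

```python
import asyncio

async def run_bounded(n_tasks: int, max_parallel: int, work_s: float = 0.01) -> int:
    """Run n_tasks simulated subagents under a semaphore; return the peak in-flight count."""
    semaphore = asyncio.Semaphore(max_parallel)
    in_flight = 0
    peak = 0

    async def fake_subagent() -> None:
        nonlocal in_flight, peak
        async with semaphore:
            in_flight += 1
            peak = max(peak, in_flight)
            await asyncio.sleep(work_s)  # stand-in for the research call
            in_flight -= 1

    await asyncio.gather(*(fake_subagent() for _ in range(n_tasks)))
    return peak

print(asyncio.run(run_bounded(n_tasks=12, max_parallel=5)))  # 5
print(asyncio.run(run_bounded(n_tasks=3, max_parallel=5)))   # 3
```

Swapping the sleep for a real call and timing each run at several `max_parallel` values gives the latency-versus-fan-out measurements needed to tune the cap.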